You can create a data migration task to migrate data from an Oracle database to an Oracle-compatible tenant of OceanBase Database. By performing schema migration, full migration, and incremental synchronization, you can seamlessly migrate existing business data and incremental data from the source database to the Oracle-compatible OceanBase Database.

Notice

A data migration task remaining in an inactive state for a long time may fail to be resumed depending on the retention period of incremental logs. Inactive states are Failed, Stopped, and Completed. The data migration service automatically releases data migration tasks that remain in an inactive state for more than 7 days to recycle resources. We recommend that you configure alerting for tasks and handle task exceptions in a timely manner.

Prerequisites

You have created the corresponding schema in the target Oracle-compatible tenant of OceanBase Database.

Archive logging must be enabled on the source Oracle instance, and a log file switch must have been performed before incremental replication starts in the data migration service.

The LogMiner tool must be installed and usable on the source Oracle instance.

You have created the target OceanBase instance and tenant. For more information, see Create an instance and Create a tenant.

You have created dedicated database users for data migration in the source and the target, and granted required privileges to the users. For more information, see User privileges.

The Oracle instance must have enabled supplemental logging at the database or table level.

If you enable supplemental logging at the database level for primary keys (PKs) and unique keys (UKs), the LogMiner Reader will pull more logs when the tables that do not need to be synchronized generate a large number of unnecessary logs. This will increase the pressure on the LogMiner Reader and the Oracle instance. Therefore, the data migration service supports enabling supplemental logging for only PKs and UKs at the table level. However, if you set ETL filters for non-PK or non-UK columns when you create a migration task, you must enable supplemental logging for the corresponding columns, or enable supplemental logging for all columns.

Clock synchronization must be performed between the Oracle server and the data migration service server (for example, by configuring the Network Time Protocol (NTP) service). Otherwise, data risks will exist. If the Oracle instance is an Oracle Real Application Clusters (Oracle RAC) instance, clock synchronization must also be performed between the Oracle instances.

Limitations

Only users with the Project Owner, Project Admin or Data Services Admin roles are allowed to create data migration tasks.

Limitations on the source database

Do not perform DDL operations that modify database or table schemas during schema migration or full data migration. Otherwise, the data migration task may be interrupted.

At present, the data migration service supports Oracle Database 10g, 11g, 12c, 18c, and 19c. Oracle Database 12c and later versions support container database (CDB) and pluggable database (PDB). It also supports OceanBase Database (in the Oracle compatible mode) V2.x, V3.x, and V4.x.

The data migration service supports the migration of only ordinary tables and views.

The data migration service supports the migration of only objects whose database name, table name, and column name are ASCII-encoded and do not contain special characters. The special characters are line breaks, spaces, and . | " ' ` ( ) = ; / &
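
The naming rule above can be pre-checked before you select migration objects. The following Python sketch is only an illustration: the helper name `is_supported_identifier` is hypothetical, the carriage return is treated as a line break by assumption, and the data migration service performs its own validation.

```python
# Characters that the naming rule above lists as unsupported.
# Assumption: "line breaks" covers both "\n" and "\r".
SPECIAL_CHARS = set("\n\r .|\"'`()=;/&")


def is_supported_identifier(name: str) -> bool:
    """Return True if name is ASCII-only and contains no listed special character."""
    if not name:
        return False
    return name.isascii() and not any(ch in SPECIAL_CHARS for ch in name)


if __name__ == "__main__":
    for candidate in ["ORDERS_2024", "MY TABLE", "SALES.ORDERS"]:
        print(candidate, is_supported_identifier(candidate))
```

Running such a check over the database, table, and column names you plan to migrate can reveal objects that need to be renamed or excluded.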

If the target is a database, the data migration service does not support triggers in the target database. If triggers exist in the target database, the data migration may fail.

The data migration service does not support incremental data migration for a table whose data in all columns is of any of the following large object (LOB) types: BLOB, CLOB, and NCLOB.

Data source identifiers and user accounts must be globally unique in the data migration service system.

The maximum incremental log parsing for an Oracle database is 5 TB per day.

You cannot create a database object with a name exceeding 30 bytes in an Oracle database version 11g or earlier.

The data migration service does not support the execution of certain UPDATE commands on an Oracle database. The following example shows an unsupported UPDATE command.

UPDATE TABLE_NAME SET KEY=KEY+1;

In the preceding example, TABLE_NAME is the name of the table, and KEY is a NUMERIC column defined as the primary key.

Considerations

When you perform incremental synchronization for an Oracle database, we recommend that each archive file be smaller than 2 GB.

Retain the archive files of the Oracle database for more than 2 days. Otherwise, if the number of archive files increases sharply in a certain period, the archive files may no longer be available when you need to restore data.

If the source Oracle database contains DML statements that exchange primary keys, the data migration service may fail to parse the logs, resulting in data loss during migration to the target. The following is an example of a DML statement that exchanges primary keys:

UPDATE test SET c1=(CASE WHEN c1=1 THEN 2 WHEN c1=2 THEN 1 END) WHERE c1 IN (1,2);

The character set of an Oracle instance can be AL32UTF8, AL16UTF16, ZHS16GBK, or GB18030. If the UTF-8 character set is used in the source, we recommend that you use a compatible character set, such as UTF-8 or UTF-16, in the target to avoid garbled characters.

When you migrate a table without a primary key from an Oracle database to an Oracle-compatible tenant of OceanBase Database, do not perform operations that change the ROWID of the table, such as import, export, Alter Table, FlashBack Table, partition splitting, or partition merging.

If the clocks between nodes or between the client and the server are out of synchronization, the latency may be inaccurate during incremental synchronization.

For example, if the clock is earlier than the standard time, the latency can be negative. If the clock is later than the standard time, the latency can be positive.

Due to the historical practice of daylight saving time in China, the incremental synchronization from an Oracle database to an Oracle-compatible tenant of OceanBase Database may have a 1-hour time difference between the source and target for the start and end dates of daylight saving time in 1986 to 1991, and for the period from April 10 to April 17, 1988, for the TIMESTAMP(6) WITH TIME ZONE data type.

If you modify a unique index at the target when DDL synchronization is disabled, you must restart the data migration task to avoid data inconsistency.

If the character encoding configurations of the source and target are different, the schema migration provides a strategy for expanding the field length definition. For example, the field length may be expanded to 1.5 times the original length, and the length unit may be converted from BYTE to CHAR.
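
As a rough illustration of the length expansion described above, the following Python sketch computes an expanded length. The function name `expand_char_length` and the `max_length` parameter are hypothetical, the factor of 1.5 is taken from the example in the text, and capping at a target limit is an assumption rather than the documented behavior of the service.

```python
import math
from typing import Optional


def expand_char_length(length: int, factor: float = 1.5,
                       max_length: Optional[int] = None) -> int:
    """Return the field length after applying an expansion factor.

    The result is rounded up so that no character is lost, and is
    optionally capped at max_length (an assumed target-side limit).
    """
    expanded = math.ceil(length * factor)
    if max_length is not None:
        expanded = min(expanded, max_length)
    return expanded


if __name__ == "__main__":
    # A source column of 10 bytes becomes 15 characters with a factor of 1.5.
    print(expand_char_length(10))
```

Rounding up instead of down errs on the side of a slightly longer field, which avoids truncation when the product is not a whole number.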

If the source contains a data type that contains time zone information (such as TIMESTAMP WITH TIME ZONE), make sure that the target database supports and contains the corresponding time zone. Otherwise, data inconsistency may occur during data migration.

In the scenario of table aggregation:

We recommend that you map the source and target relationships by using matching rules.

We recommend that you create the table schema in the target. If you use the data migration service to create the table schema, skip failed objects in the schema migration step.

Check the objects in the recycle bin of the Oracle database. If the recycle bin contains more than 100 objects, internal table queries may time out. You must clear the objects in the recycle bin.

Query whether the recycle bin is enabled.

SELECT Value FROM V$parameter WHERE Name = 'recyclebin';

Query the number of objects in the recycle bin.

SELECT COUNT(*) FROM RECYCLEBIN;

If you select only Incremental Synchronization when you create the data migration task, the data migration service requires that the local incremental logs in the source database be retained for more than 48 hours.

If you select Full Migration and Incremental Synchronization when you create the data migration task, the data migration service requires that the local incremental logs in the source database be retained for at least 7 days. Otherwise, the data migration task may fail or the data in the source and target databases may be inconsistent because the data migration service cannot obtain incremental logs.
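
The two retention requirements above can be summarized in a small check. This Python sketch is illustrative only: the function names are hypothetical, and the thresholds (more than 48 hours, at least 7 days) are copied from the two preceding paragraphs.

```python
from datetime import timedelta
from typing import Optional


def required_log_retention(full_migration: bool,
                           incremental_sync: bool) -> Optional[timedelta]:
    """Return the retention threshold for source incremental logs, or None."""
    if not incremental_sync:
        return None  # no incremental logs are consumed
    if full_migration:
        return timedelta(days=7)
    return timedelta(hours=48)


def retention_is_sufficient(actual: timedelta, full_migration: bool,
                            incremental_sync: bool) -> bool:
    """Check an actual retention period against the documented threshold."""
    required = required_log_retention(full_migration, incremental_sync)
    if required is None:
        return True
    if full_migration:
        return actual >= required  # "at least 7 days"
    return actual > required       # "more than 48 hours"


if __name__ == "__main__":
    print(retention_is_sufficient(timedelta(days=3), True, True))
```

In the usage example, a 3-day retention period is checked against a task that combines full migration and incremental synchronization, which requires at least 7 days.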

For incremental synchronization tasks of source Oracle databases (excluding those that obtain incremental data from Kafka), if a single transaction spans multiple archive files, LogMiner may fail to return complete data information in the transaction. In this case, data loss may occur. We recommend that you enable full data validation and data correction to ensure data consistency.

Data type mappings

Notice

CLOB and BLOB data must be less than 48 MB in size.

Migration of ROWID, BFILE, XMLType, UROWID, UNDEFINED, and UDT data is not supported.

Incremental synchronization of tables of the LONG or LONG RAW type is not supported.

| Oracle Data Types | OceanBase Database Oracle compatible mode data types |
|---|---|
| CHAR(n CHAR) | CHAR(n CHAR) |
| CHAR(n BYTE) | CHAR(n BYTE) |
| NCHAR(n) | NCHAR(n) |
| VARCHAR2(n) | VARCHAR2(n) |
| NVARCHAR2(n) | NVARCHAR2(n) |
| NUMBER(n) | NUMBER(n) |
| NUMBER(p,s) | NUMBER(p,s) |
| RAW | RAW |
| CLOB | CLOB |
| NCLOB | NVARCHAR2 Note: In OceanBase Database Oracle compatible mode, fields of the NVARCHAR2 type do not support null values. If the source data contains null values, they are represented as the string "NULL". |
| BLOB | BLOB |
| REAL | FLOAT |
| FLOAT(n) | FLOAT |
| BINARY_FLOAT | BINARY_FLOAT |
| BINARY_DOUBLE | BINARY_DOUBLE |
| DATE | DATE |
| TIMESTAMP | TIMESTAMP |
| TIMESTAMP WITH TIME ZONE | TIMESTAMP WITH TIME ZONE |
| TIMESTAMP WITH LOCAL TIME ZONE | TIMESTAMP WITH LOCAL TIME ZONE |
| INTERVAL YEAR(p) TO MONTH | INTERVAL YEAR(p) TO MONTH |
| INTERVAL DAY(p) TO SECOND | INTERVAL DAY(p) TO SECOND |
| LONG | CLOB Note: This type is not supported for incremental synchronization. |
| LONG RAW | BLOB Note: This type is not supported for incremental synchronization. |

Convert Oracle table partitions

During data migration, the data migration service converts business SQL statements for Oracle databases.

Note

The partition conversion rules in this topic apply to all partition types.

| Original table definition | Converted output |
|---|---|
| CREATE TABLE T_RANGE_0 (A INT, B INT, PRIMARY KEY (B) ) PARTITION BY RANGE(A)(....); | CREATE TABLE "T_RANGE_0" ("A" NUMBER, "B" NUMBER NOT NULL, CONSTRAINT "T_RANGE_10_UK" UNIQUE ("B") ) PARTITION BY RANGE ("A")( .... ); |
| CREATE TABLE T_RANGE_10 ("A" INT, "B" INT, "C" DATE, "D" NUMBER GENERATED ALWAYS AS (TO_NUMBER(TO_CHAR("C",'dd'))) VIRTUAL, CONSTRAINT "T_RANGE_10_PK" PRIMARY KEY (A) ) PARTITION BY RANGE(D)( .... ); | CREATE TABLE T_RANGE_10 ("A" INT NOT NULL, "B" INT, "C" DATE, "D" NUMBER GENERATED ALWAYS AS (TO_NUMBER(TO_CHAR("C",'dd'))) VIRTUAL, CONSTRAINT "T_RANGE_10_PK" UNIQUE (A) ) PARTITION BY RANGE(D)( .... ); |
| CREATE TABLE T_RANGE_1 (A INT, B INT, UNIQUE (B) ) PARTITION BY RANGE(A)( partition P_MAX values less than (10) ); | The original table definition is supported. |
| CREATE TABLE T_RANGE_2 (A INT, B INT NOT NULL, UNIQUE (B) ) PARTITION BY RANGE(A)( partition P_MAX values less than (10) ); | The original table definition is supported. |
| CREATE TABLE T_RANGE_3 (A INT, B INT, UNIQUE (A) ) PARTITION BY RANGE(A)( .... ); | The original table definition is supported. |
| CREATE TABLE T_RANGE_4 (A INT NOT NULL, B INT, UNIQUE (A) ) PARTITION BY RANGE(A)( .... ); | CREATE TABLE "T_RANGE_4" ("A" NUMBER NOT NULL, "B" NUMBER, PRIMARY KEY ("A") ) PARTITION BY RANGE ("A")( .... ); |
| CREATE TABLE T_RANGE_5 (A INT, B INT, UNIQUE (A, B) ) PARTITION BY RANGE(A)( partition P_MAX values less than (10) ); | The original table definition is supported. |
| CREATE TABLE T_RANGE_6 (A INT NOT NULL, B INT, UNIQUE (A, B) ) PARTITION BY RANGE(A)( partition P_MAX values less than (10) ); | The original table definition is supported. |
| CREATE TABLE T_RANGE_7 (A INT NOT NULL, B INT NOT NULL, UNIQUE (A, B) ) PARTITION BY RANGE(A)( partition P_MAX values less than (10) ); | CREATE TABLE "T_RANGE_7" ("A" NUMBER NOT NULL, "B" NUMBER NOT NULL, PRIMARY KEY ("A", "B") ) PARTITION BY RANGE ("A")( .... ); |
| CREATE TABLE T_RANGE_8 ("A" INT, "B" INT, "C" INT NOT NULL, UNIQUE (A), UNIQUE (B), UNIQUE (C) ) PARTITION BY RANGE(B)( partition P_MAX values less than (10) ); | The original table definition is supported. |
| CREATE TABLE T_RANGE_9 ("A" INT, "B" INT, "C" INT NOT NULL, UNIQUE (A), UNIQUE (B), UNIQUE (C) ) PARTITION BY RANGE(C)( partition P_MAX values less than (10) ); | CREATE TABLE "T_RANGE_9" ("A" NUMBER, "B" NUMBER, "C" NUMBER NOT NULL, PRIMARY KEY ("C"), UNIQUE ("A"), UNIQUE ("B") ) PARTITION BY RANGE ("C")( .... ); |

Check and modify the system configuration of the Oracle instance

To do this, perform the following steps:

Notice

When you perform the following operations on an AWS RDS Oracle instance, there are some permission limitations. For more information, see Users and privileges for RDS for Oracle.

Enable the archive mode in the source Oracle database.

Enable the supplemental logging in the source Oracle database.

(Optional) Set the system parameters of the Oracle database.

Note

When you select Self-managed Oracle as the Instance Type, you can set the system parameter _log_parallelism_max.

Enable archive mode on the source Oracle database

Enable archive mode on an AWS RDS Oracle instance

When you enable archive mode on an AWS RDS Oracle instance, you can only set it based on the retention period, and cannot set it based on the size.

EXEC rdsadmin.rdsadmin_util.set_configuration('archivelog retention hours',24);

Enable archive mode on a self-managed Oracle database

SELECT log_mode FROM v$database;

The log_mode field must be archivelog. Otherwise, you need to modify it as described below.

Run the following command to enable archive mode.

SHUTDOWN IMMEDIATE;
STARTUP MOUNT;
ALTER DATABASE ARCHIVELOG;
ALTER DATABASE OPEN;

Run the following command to view the path and quota of the archive logs.

Check the path and quota of the recovery file. We recommend that you set the db_recovery_file_dest_size parameter to a large value. After enabling archive mode, you need to regularly clean up archive logs by using tools such as RMAN.

SHOW PARAMETER db_recovery_file_dest;

Change the quota of the archive logs based on your business needs.

ALTER SYSTEM SET db_recovery_file_dest_size =50G SCOPE = BOTH;

Enable supplemental logging in the source Oracle database

Enable supplemental logging in an AWS RDS Oracle instance

EXEC rdsadmin.rdsadmin_util.alter_supplemental_logging('ADD');

EXEC rdsadmin.rdsadmin_util.alter_supplemental_logging('ADD','PRIMARY KEY');

EXEC rdsadmin.rdsadmin_util.alter_supplemental_logging('ADD','UNIQUE');

After you enable supplemental logging, switch to archive logs.

EXEC rdsadmin.rdsadmin_util.switch_logfile;

When you enable supplemental logging in an AWS RDS Oracle instance, the lack of table-level control can lead to the following effects:

Only PK and UK settings are supported, which may affect the filtering conditions for data. If you need to filter data, you must set alter_supplemental_logging('ADD','ALL'), which will significantly increase the log volume.

Inconsistencies in the table structures (such as primary keys and unique keys) between the source and target databases can lead to data quality issues.

Enable supplemental logging in a self-managed Oracle database

LogMiner Reader supports configuring only table-level supplemental logging in the Oracle system. If new tables are created in the source Oracle database during migration, you need to enable PK and UK supplemental logging before executing DML operations. Otherwise, the data migration service will report an incomplete log exception.

Notice

Supplemental logging must be enabled in the Oracle primary database.

To address issues such as inconsistent indexes between the source and target databases, ETL operations not meeting expectations, and reduced performance for partitioned table migrations, you need to add the following supplemental logs:

Add supplemental_log_data_pk and supplemental_log_data_ui at the database or table level.

Add specific columns to the supplemental logs.

Add all PK and UK-related columns from both the source and target databases. This resolves inconsistencies in indexes between the source and target databases.

If ETL is involved, add the ETL columns. This ensures ETL operations meet expectations.

If the target database is a partitioned table, add the partitioning columns. This prevents performance degradation due to the inability to perform partition pruning.

You can execute the following statement to check the addition results.

SELECT log_group_type FROM all_log_groups WHERE OWNER = '<schema_name>' AND table_name = '<table_name>';

If the query result contains "ALL COLUMN LOGGING", the check is successful. If not, verify whether all the mentioned columns are present in the ALL_LOG_GROUP_COLUMNS table.

Here is an example of how to add specific columns to the supplemental logs:

ALTER TABLE <table_name> ADD SUPPLEMENTAL LOG GROUP <table_name_group> (c1, c2) ALWAYS;

The following table lists the risks and solutions when a DDL operation is performed during the running of a data migration task.

| Operation | Risk | Solution |
|---|---|---|
| CREATE TABLE (and the table needs to be synchronized) | If the target database has a partitioned table and the indexes are inconsistent between the source and target databases, or ETL is required, it may affect data migration performance and lead to ETL not meeting expectations. | Enable PK and UK supplemental logging at the database level. Manually add the relevant columns to the supplemental logs. |
| Add, delete, or modify PK/UK/partition columns or ETL columns | This may not meet the rule of adding supplemental logs at startup, leading to data inconsistencies or reduced data migration performance. | Follow the rules for adding supplemental logs as mentioned above. |

LogMiner Reader checks in the following two ways. If it detects that supplemental logging is not enabled, it exits.

Check if supplemental_log_data_pk and supplemental_log_data_ui are enabled at the database level.

Execute the following command to check if supplemental logging is enabled. If both columns in the query result show YES, supplemental logging is enabled.

SELECT supplemental_log_data_pk, supplemental_log_data_ui FROM v$database;

If not enabled, perform the following steps:

Execute the following statement to enable supplemental logging.

ALTER DATABASE ADD SUPPLEMENTAL LOG DATA (PRIMARY KEY, UNIQUE) COLUMNS;

After enabling, switch to archive logs twice, and wait more than 5 minutes before starting the task. For Oracle RAC, multiple instances alternate in switching.

ALTER SYSTEM SWITCH LOGFILE;

In the case of Oracle RAC, if one instance switches multiple times and then another instance is switched without alternating, the later switched instance may locate logs before supplemental logging was enabled when determining the starting log file.

Check if supplemental_log_data_pk and supplemental_log_data_ui are enabled at the table level.

Execute the following statement to check if supplemental_log_data_min is enabled at the database level.

SELECT supplemental_log_data_min FROM v$database;

If the query result is YES or IMPLICIT, it indicates that it is enabled.

Execute the following statement to check if table-level supplemental logging is enabled for the table to be synchronized.

SELECT log_group_type FROM all_log_groups WHERE OWNER = '<schema_name>' AND table_name = '<table_name>';

Each type of supplemental log returns one row. The result must include ALL COLUMN LOGGING, or both PRIMARY KEY LOGGING and UNIQUE KEY LOGGING.

If table-level supplemental logging is not enabled, execute the following statement.

ALTER TABLE table_name ADD SUPPLEMENTAL LOG DATA (PRIMARY KEY, UNIQUE) COLUMNS;

After enabling, switch to archive logs twice, and wait more than 5 minutes before starting the task. For Oracle RAC, multiple instances alternate in switching.

ALTER SYSTEM SWITCH LOGFILE;
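
The table-level rule above (ALL COLUMN LOGGING, or both PRIMARY KEY LOGGING and UNIQUE KEY LOGGING) can be expressed as a small check over the log_group_type values returned by the all_log_groups query. This Python sketch is only an illustration of that rule: the function name is hypothetical, and it assumes that you have already fetched the rows with a database client of your choice.

```python
from typing import Iterable


def table_supplemental_logging_ok(log_group_types: Iterable[str]) -> bool:
    """Apply the table-level rule to log_group_type values from all_log_groups."""
    # Normalize whitespace and case so that the comparison is robust.
    types = {value.strip().upper() for value in log_group_types}
    if "ALL COLUMN LOGGING" in types:
        return True
    return {"PRIMARY KEY LOGGING", "UNIQUE KEY LOGGING"} <= types


if __name__ == "__main__":
    # Only primary key logging is present, so the rule is not satisfied.
    print(table_supplemental_logging_ok(["PRIMARY KEY LOGGING"]))
```

A check like this can be run for every table to be synchronized before the task starts, so that missing table-level logging is found before LogMiner Reader exits.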

Set the system parameter of the Oracle database (optional)

When you use a self-managed Oracle database, we recommend that you set the _log_parallelism_max parameter of the Oracle database to 1. By default, the value of this parameter is 2.

Query the value of _log_parallelism_max. You can use either of the following two methods:

Method 1

SELECT NAM.KSPPINM,VAL.KSPPSTVL,NAM.KSPPDESC FROM SYS.X$KSPPI NAM,SYS.X$KSPPSV VAL WHERE NAM.INDX= VAL.INDX AND NAM.KSPPINM LIKE '_%' AND UPPER(NAM.KSPPINM) LIKE '%LOG_PARALLEL%';

Method 2

SELECT VALUE FROM v$parameter WHERE name = '_log_parallelism_max';

Modify the value of _log_parallelism_max. You can use either of the following two methods:

Method 1: Modify the parameter for an Oracle RAC database

ALTER SYSTEM SET "_log_parallelism_max" = 1 SID = '*' SCOPE = spfile;

Method 2: Modify the parameter for a non-Oracle RAC database

ALTER SYSTEM SET "_log_parallelism_max" = 1 SCOPE = spfile;

After you modify the _log_parallelism_max parameter, restart the instance, switch the archive logs twice, and wait for more than 5 minutes before you start a task.

Supported source and target instance types

| Cloud vendor | Source | Target |
|---|---|---|
| AWS | RDS Oracle | OceanBase Oracle Compatible (Transactional) |
| AWS | Self-managed Oracle | OceanBase Oracle Compatible (Transactional) |
| Huawei Cloud | Self-managed Oracle | OceanBase Oracle Compatible (Transactional) |
| Google Cloud | Self-managed Oracle | OceanBase Oracle Compatible (Transactional) |
| Alibaba Cloud | Self-managed Oracle | OceanBase Oracle Compatible (Transactional) |

Procedure

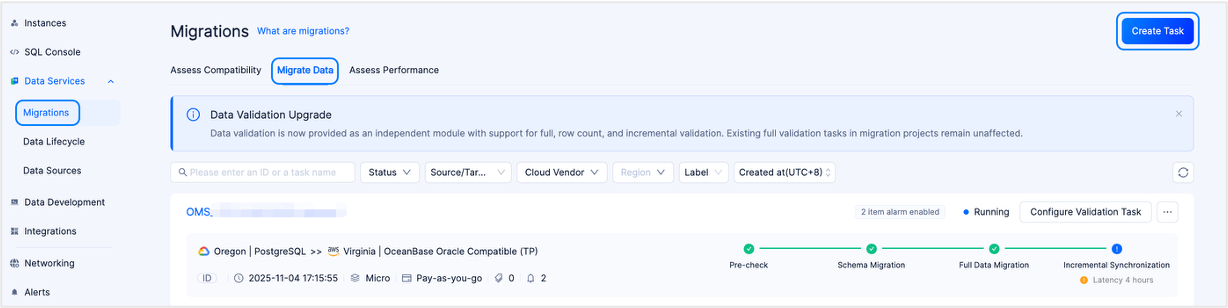

Create a data migration task.

Log in to the OceanBase Cloud console.

In the left-side navigation pane, select Data Services > Migrations.

On the Migrations page, click the Migrate Data tab.

On the Migrate Data tab, click Create Task in the upper-right corner.

In the task name field, enter a custom migration task name.

We recommend that you use a combination of Chinese characters, numbers, and English letters. The name cannot contain spaces and must be less than 64 characters in length.

On the Configure Source & Target page, configure the parameters.

In the Source Profile section, configure the parameters.

If you want to reference the configurations of an existing data source, click Quick Fill next to Source Profile and select the required data source from the drop-down list. Then, the parameters in the Source Profile section are automatically populated. If you need to save the current configuration as a new data source, click on the Save icon located on the right side of the Quick Fill.

You can also click Quick Fill > Manage Data Sources to open the Data Sources page, where you can view and manage different types of data sources. For more information, see Data Source.

| Parameter | Description |
|---|---|
| Cloud Vendor | At present, the supported cloud vendors are AWS, Huawei Cloud, Google Cloud, and Alibaba Cloud. |
| Database Type | The type of the source. Select Oracle. |
| Instance Type | When you select AWS as the cloud vendor, the supported instance types are RDS Oracle and Self-managed Oracle. When you select Huawei Cloud or Google Cloud as the cloud vendor, the supported instance type is Self-managed Oracle. |
| Region | The region of the source database. |
| Connection Type | Available connection types are Endpoint and Public IP. If you select the Endpoint connection type, you must first add the account ID displayed on the page to the whitelist of your endpoint service. This allows the endpoint from that account to connect to the endpoint service. For more information, see the corresponding topic under Connect via private network. When Cloud Vendor is AWS and you selected Acceptance required for the Require acceptance for endpoint parameter when you created the endpoint service, the data migration service prompts you to perform the Accept endpoint connection request operation in the AWS console the first time it connects to the PrivateLink. When Cloud Vendor is Google Cloud, add authorized projects to Published Services. After authorization, no manual authorization is needed when you test the data source connection. Note: You need to select the source and target regions before the page displays the data source IP addresses that need to be added to the whitelist. |
| Connection Details | When Connection Type is Endpoint, enter the endpoint service name. When Connection Type is Public IP, enter the IP address and port number of the database host machine. |
| Service Name | The service name of the Oracle database. |
| Database Account | The name of the Oracle database user for data migration. |
| Password | The password of the database user. |

In the Target Profile section, configure the parameters.

If you want to reference the configurations of an existing data source, click Quick Fill next to Target Profile and select the required data source from the drop-down list. Then, the parameters in the Target Profile section are automatically populated. If you need to save the current configuration as a new data source, click on the Save icon located on the right side of the Quick Fill.

You can also click Quick Fill > Manage Data Sources to open the Data Sources page, where you can view and manage different types of data sources. For more information, see Data Source.

| Parameter | Description |
|---|---|
| Cloud Vendor | The supported cloud vendors are AWS, Huawei Cloud, Google Cloud, and Alibaba Cloud. You can choose the same cloud vendor as the source, or perform cross-cloud data migration. Notice: Cross-cloud vendor data migration is disabled by default. To use this feature, contact technical support. |
| Database Type | Select OceanBase Oracle Compatible as the database type for the target. |
| Instance Type | Select Dedicated (Transactional). |
| Region | The region of the target database. |
| Instance | The ID or name of the instance to which the Oracle-compatible tenant of OceanBase Database belongs. You can view the ID or name of the instance on the Instances page. Note: When your cloud vendor is Alibaba Cloud, you can also select a cross-account authorized instance of an Alibaba Cloud primary account. For more information, see Alibaba Cloud account authorization. |
| Tenant | The ID or name of the Oracle-compatible tenant of OceanBase Database. You can expand the information about the target instance on the Instances page and view the ID or name of the tenant. |
| Database Account | The name of the database user in the Oracle-compatible tenant of OceanBase Database for data migration. |
| Password | The password of the database user. |

Click Test and Continue.

On the Select Type & Objects page, configure the parameters.

Select One-way Sync for Sync Topology.

Data migration supports One-way Sync and Two-way Sync. This topic introduces the operation of one-way synchronization. For more information on two-way synchronization, see Configure a two-way synchronization task.

Select the migration type for your data migration task.

Options of Migration Type are Schema Migration, Full Migration, and Incremental Synchronization.

| Migration type | Description |
|---|---|
| Schema Migration | If you select this migration type, you must define the mapping between the character sets. The data migration service only copies schemas from the source database to the target database without affecting the schemas in the source. |
| Full Migration | After the full migration task begins, the data migration service will transfer the existing data from the source database tables to the corresponding tables in the target database. |
| Incremental Synchronization | After the incremental synchronization task begins, the data migration service will synchronize the changes (inserts, updates, or deletes) from the source database to the corresponding tables in the target database. Incremental Synchronization includes DML Synchronization and DDL Synchronization. You can select based on your needs. For more information on synchronizing DDL, see Custom DML/DDL configurations. |

In the Select Migration Objects section, specify your way to select migration objects.

You can select migration objects in two ways: Specify Objects and Match by Rule.

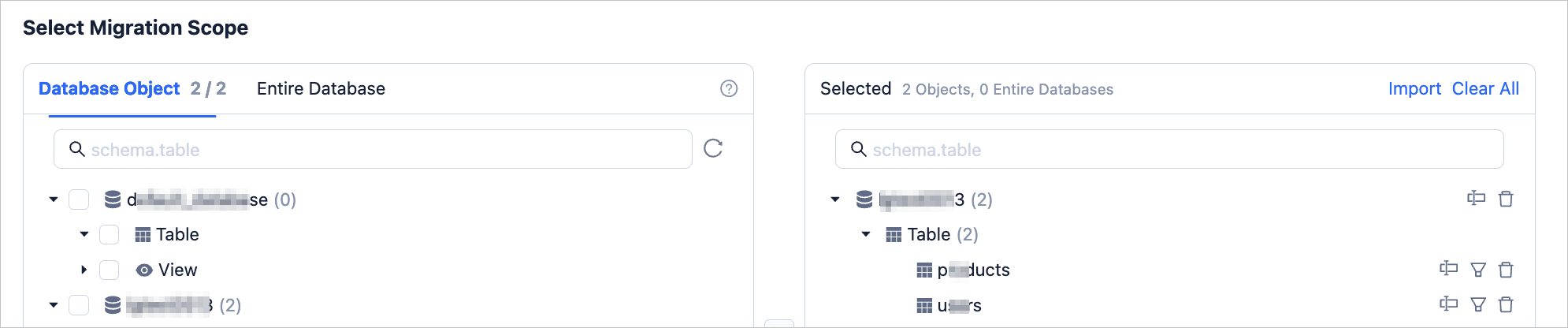

In the Select Migration Scope section, select migration objects.

If you select Specify Objects, data migration supports Table-level and Database-level. Table-level migration allows you to select one or more tables or views from one or more databases as migration objects. Database-level migration allows you to select an entire database as a migration object. If you select table-level migration for a database, database-level migration is no longer supported for that database. Conversely, if you select database-level migration for a database, table-level migration is no longer supported for that database.

After selecting Table-level or Database-level, select the objects to be migrated in the left pane and click > to add them to the right pane.

The data migration service allows you to rename objects, set row filters, and remove a single migration object or all migration objects.

Note

Take note of the following items when you select Database-level:

The right-side pane displays only the database name and does not list all objects in the database.

If you have selected DDL Synchronization-Synchronize DDL, newly added tables in the source database can also be synchronized to the target database.

| Operation | Description |
|---|---|
| Import Objects | In the list on the right side of the selection area, click Import in the upper right corner. For more information, see Import migration objects. |
| Rename an object | The data migration service allows you to rename a migration object. For more information, see Rename a migration object. |
| Set row filters | The data migration service allows you to filter rows by using WHERE conditions. For more information, see Use SQL conditions to filter data. You can also view column information about the migration objects in the View Column section. |
| Remove one or all objects | The data migration service allows you to remove one or all migration objects during data mapping. To remove a single migration object, hover the pointer over the object that you want to remove in the right-side pane, and then click Remove. To remove all migration objects, click Clear All in the right-side pane. In the dialog box that appears, click OK to remove all migration objects. |

If you select Match by Rule, see Configure database-to-database matching rules for more information.

Click Next. On the Migration Options page, configure the parameters.

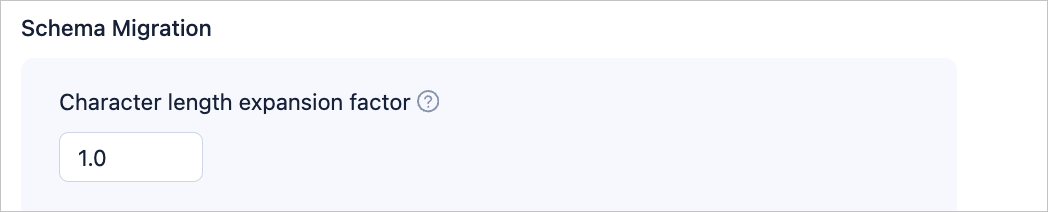

Schema migration

The following parameters are displayed only if you select Schema Migration on the Select Type & Objects page and the character sets of the source and target are different.

When the character sets of the source and target are different (e.g., the source is GBK and the target is UTF-8), there may be cases of field truncation and data inconsistency. You can configure the Character Length Expansion Factor to increase the length of character type fields.

Note

The extended length cannot exceed the maximum limit of the target.

Full migration

The following parameters will be displayed only if Full Migration is selected on the Select Type & Objects page.

ParameterDescriptionRead Concurrency This parameter specifies the number of concurrent threads for reading data from the source during full migration. The maximum number of concurrent threads is 512. A high number of concurrent threads may cause high pressure on the source and affect business operations. Write Concurrency This parameter specifies the number of concurrent threads for writing data to the target during full migration. The maximum number of concurrent threads is 512. A high number of concurrent threads may cause high pressure on the target and affect business operations. Rate Limiting for Full Migration You can decide whether to limit the full migration rate based on your needs. If you enable this option, you must also set the source read RPS (maximum number of rows that can be read from the source per second during full migration), source read BPS (maximum amount of traffic that can be read from the source per second during full migration), target write RPS (maximum number of rows that can be written to the target per second during full migration), and target write BPS (maximum amount of data that can be written to the target per second during full migration). Note

The RPS and BPS values specified here are only for throttling and limiting capabilities. The actual performance of full migration is limited by factors such as the source, target, and instance specifications.
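When you choose RPS and BPS values, it can help to estimate which limit is binding. The following sketch assumes that both limits apply at the same time, that BPS is expressed in bytes per second, and that rows have a roughly constant average size. The function names are hypothetical, and the result is only an upper bound on throughput, because actual performance also depends on the source, target, and instance specifications.

```python
import math


def effective_rows_per_second(rps_limit: int, bps_limit: int, avg_row_bytes: int) -> int:
    """Return the row rate allowed when both the RPS and the BPS limits apply.

    Assumption: BPS is in bytes per second and rows average avg_row_bytes bytes.
    """
    if rps_limit <= 0 or bps_limit <= 0 or avg_row_bytes <= 0:
        raise ValueError("all arguments must be positive")
    # The BPS limit translates into a row rate; the stricter of the two limits wins.
    return min(rps_limit, bps_limit // avg_row_bytes)


def estimated_seconds(total_rows: int, rows_per_second: int) -> int:
    """Return a best-case duration for moving total_rows at the given rate."""
    if rows_per_second <= 0:
        raise ValueError("rows_per_second must be positive")
    return math.ceil(total_rows / rows_per_second)


# Example: 10,000 rows/s and 5 MiB/s limits with 1 KiB rows.
rate = effective_rows_per_second(10_000, 5 * 1024 * 1024, 1024)
print(rate)                                 # 5120, so the BPS limit is binding
print(estimated_seconds(10_000_000, rate))  # 1954
```

In this example the BPS limit is stricter than the RPS limit, so raising only the RPS value would not shorten the migration.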

Handle Non-empty Tables in Target Database: This parameter specifies the strategy for handling existing records in target table objects. Valid values: Stop Migration and Ignore.

- If you select Stop Migration, the data migration task reports an error when target table objects contain data, indicating that migration is not allowed. Handle the data in the target database before you resume the migration.

Notice

If you click Restore after an error occurs, data migration will ignore this setting and continue to migrate table data. Proceed with caution.

- If you select Ignore, when target table objects contain data, the data migration task records the conflicting data in logs and retains the original data in the target.
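The difference between the two strategies can be sketched as follows. This is a simplified model, not the service's implementation: it assumes that a conflict means an incoming row whose primary key already exists in the target, and it represents tables as dictionaries keyed by primary key. The names `NonEmptyTableStrategy` and `migrate_rows` are hypothetical.

```python
from enum import Enum


class NonEmptyTableStrategy(Enum):
    STOP_MIGRATION = "Stop Migration"
    IGNORE = "Ignore"


def migrate_rows(target: dict, incoming: dict, strategy: NonEmptyTableStrategy) -> list:
    """Copy incoming rows into target and return the primary keys logged as conflicts.

    Assumption: a conflict is an incoming row whose primary key already exists in target.
    """
    if target and strategy is NonEmptyTableStrategy.STOP_MIGRATION:
        # Stop Migration: refuse to write into a table that already contains data.
        raise RuntimeError("target table is not empty; migration is not allowed")

    conflict_log = []
    for primary_key, row in incoming.items():
        if primary_key in target:
            # Ignore: log the conflicting row and keep the original target row.
            conflict_log.append(primary_key)
        else:
            target[primary_key] = row
    return conflict_log


target_table = {1: "existing row"}
source_rows = {1: "source row", 2: "new row"}

print(migrate_rows(target_table, source_rows, NonEmptyTableStrategy.IGNORE))  # [1]
print(target_table)  # {1: 'existing row', 2: 'new row'}
```

With Ignore, the row with key 1 keeps its original value in the target and only the key is logged. Calling `migrate_rows` with `STOP_MIGRATION` on the same non-empty table raises an error instead.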

Post-Indexing: This parameter specifies whether to postpone index creation until full migration is completed. If you select this option, note the following items.

Notice

Before you select this option, make sure that you have selected both Schema Migration and Full Migration on the Select Type & Objects page.

- Only non-unique key indexes support index creation after migration.

When post-indexing is enabled, we recommend that you use a command-line client tool to adjust the following business tenant parameters based on the hardware conditions of OceanBase Database and the current business traffic.

```sql
-- File memory buffer limit
ALTER SYSTEM SET _temporary_file_io_area_size = '10' tenant = 'xxx';

-- For OceanBase Database V4.x, disable throttling
ALTER SYSTEM SET sys_bkgd_net_percentage = 100;
```

Incremental synchronization

The following parameters are displayed only if you selected One-way Sync > Incremental Synchronization on the Select Type & Objects page.

Write Concurrency: This parameter specifies the number of concurrent threads for writing data to the target during incremental synchronization. The maximum number of concurrent threads is 512. Excessive concurrency may overload the target and affect business operations.

Rate Limiting for Full Migration: You can decide whether to limit the full migration rate based on your needs. If you enable this option, you must also set the following values:

- Source read RPS: the maximum number of rows that can be read from the source per second during full migration.

- Source read BPS: the maximum amount of traffic that can be read from the source per second during full migration.

- Target write RPS: the maximum number of rows that can be written to the target per second during full migration.

- Target write BPS: the maximum amount of data that can be written to the target per second during full migration.

Note

The RPS and BPS values specified here are only for throttling and limiting capabilities. The actual performance of full migration is limited by factors such as the source, target, and instance specifications.

Incremental Synchronization Start Timestamp:

- If you selected Full Migration when you chose the migration types, this parameter is not displayed.

- If you selected Incremental Synchronization but not Full Migration, specify the timestamp after which data is to be migrated. The default value is the current system time. For more information, see Set incremental synchronization timestamp.

Advanced Options

The parameters in this section are displayed only if the target Oracle-compatible tenant of OceanBase Database is V4.3.0 or later and you selected Schema Migration or Incremental Synchronization > DDL Synchronization on the Select Type & Objects page.

The storage types for target table objects include Default, Row Storage, Column Storage, and Hybrid Row-Column Storage. This configuration determines the storage type of target table objects during schema migration or incremental synchronization.

Note

The Default option resolves to one of the other options based on the parameter settings of the target. Table objects created by schema migration and new table objects created by incremental DDL use the configured storage type.

Click Next to proceed to the precheck stage for the data migration task.

During the precheck, the data migration service checks the read and write privileges of the database user and the network connection of the database. A data migration task can be started only after it passes all check items. If an error is returned during the precheck, you can perform the following operations:

You can identify and troubleshoot the problem and then perform the precheck again.

You can also click Skip in the Actions column of a failed precheck item. In the dialog box that appears, you can view the prompt for the consequences of the operation and click OK.

After the precheck succeeds, click Purchase to go to the Purchase Data Migration Instance page.

After the purchase succeeds, you can start the data migration task. For more information about how to purchase a data migration instance, see Purchase a data migration instance. If you do not need to purchase a data migration instance at this time, click Save to go to the details page of the data migration task. You can manually purchase a data migration instance later as needed.

You can click Configure Validation Task in the upper-right corner of the details page to compare the data differences between the source database and the target database. For more information, see Create a data validation task.

The data migration service allows you to modify the migration objects when the task is running. For more information, see View and modify migration objects. After the data migration task is started, it is executed based on the selected migration types. For more information, see the "View migration details" section in View details of a data migration task.