This topic describes how to clean up data by submitting a data cleanup task.

Background information

OceanBase Cloud allows you to clean up data in the source database after it is archived to the target database, to improve query performance and reduce costs of online storage.

The example in this topic describes how to create a data cleanup task in the OceanBase Cloud console to clean up data in the employee table of the test1 database.

Note

All data used in this example is for reference only. You can replace the data as needed.

Prerequisites

You have the database account and password of the current tenant to log in to the SQL console.

The table to be cleaned up has a primary key.

Limitations

Precondition:

- CPU and memory exhaustion prevention is not supported for MySQL data sources.

Supported data sources for data cleanup:

MySQL-compatible tenants of OceanBase Database

Oracle-compatible tenants of OceanBase Database

MySQL databases

Data cleanup is not supported in the following conditions:

The table of a MySQL-compatible tenant of OceanBase Database or a MySQL database does not contain a primary key or a unique non-null index.

The table of an Oracle-compatible tenant of OceanBase Database does not contain a primary key.

The table of an Oracle-compatible tenant of OceanBase Database contains the JSON or XMLTYPE data type.

The filter condition contains a LIMIT clause.

The table contains a foreign key.
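The limitations above can be read as a checklist. The following Python sketch encodes them as a pre-check you might run on table metadata before creating a task. It is an illustration only: the TableInfo class, the source_type labels, and the cleanup_blockers function are hypothetical names invented here, not part of any OceanBase Cloud API, and the LIMIT detection is a simple keyword search.

```python
import re
from dataclasses import dataclass, field


@dataclass
class TableInfo:
    """Hypothetical table description; not an OceanBase Cloud object."""
    source_type: str  # "ob_mysql", "ob_oracle", or "mysql" (labels invented for this sketch)
    has_primary_key: bool = False
    has_unique_not_null_index: bool = False
    has_foreign_key: bool = False
    column_types: list = field(default_factory=list)


def cleanup_blockers(table, filter_condition=""):
    """Return the reasons, per the limitations listed above, why cleanup is not supported."""
    reasons = []
    if table.source_type in ("ob_mysql", "mysql"):
        if not (table.has_primary_key or table.has_unique_not_null_index):
            reasons.append("no primary key or unique non-null index")
    elif table.source_type == "ob_oracle":
        if not table.has_primary_key:
            reasons.append("no primary key")
        unsupported = {"JSON", "XMLTYPE"} & {t.upper() for t in table.column_types}
        if unsupported:
            reasons.append("unsupported column type: " + ", ".join(sorted(unsupported)))
    else:
        reasons.append("unsupported data source")
    if table.has_foreign_key:
        reasons.append("table contains a foreign key")
    # Naive keyword search: it would also flag a column that is literally named "limit".
    if re.search(r"\blimit\b", filter_condition, flags=re.IGNORECASE):
        reasons.append("condition contains a LIMIT clause")
    return reasons


employee = TableInfo("ob_mysql", has_primary_key=True)
print(cleanup_blockers(employee, "time < '2024-01-01'"))  # an empty list means no blockers found
```

An empty result only means that none of the listed limitations were detected in the metadata you supplied; the console remains the authority on whether a task can be created.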

Create a data cleanup task

In the left-side navigation pane, click Data Services > Data Lifecycle > Data Cleanup. On the Data Lifecycle page, click Create Job > Data Cleanup.

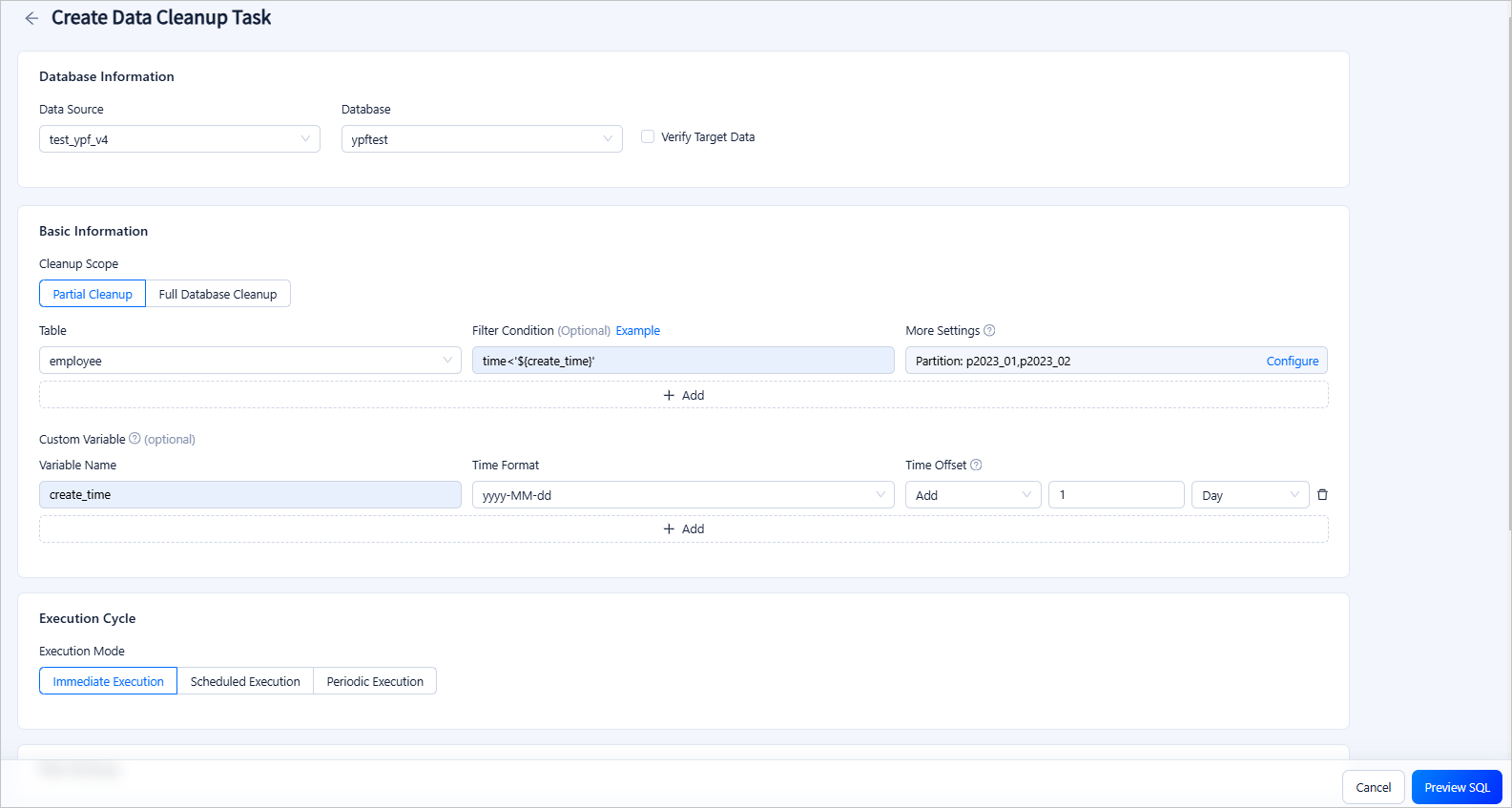

On the Create Data Cleanup Task page, configure the following parameters.

ParameterDescription

ParameterDescriptionDatabase Information Select the data source and database. You can also choose to verify the status of the target data source and database. Cleanup Scope - Partial Cleanup: specifies to clean up only tables that meet filter conditions in the source database.

- You can configure filter conditions by using constants or referencing variables defined in Custom Variable. For example, in

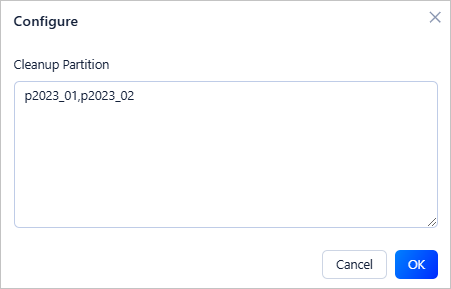

time<'${create_time}',create_timeis the name of a variable configured in Custom Variable, andtimeis a field in the table to be cleaned up. - You can click More Settings > Configure and specify the partitions to be cleaned up.

- You can configure filter conditions by using constants or referencing variables defined in Custom Variable. For example, in

- Full Database Cleanup: specifies to clean up all tables in the source database.

Custom Variable You can define variables and set time offsets to filter rows to be cleaned up. Execution Mode The execution mode of the task. Valid values: Immediate Execution, Scheduled Execution, and Periodic Execution. Task Settings Configure the throttling strategy. - Execution Timeout: If the task is not completed within the specified time, the task will be terminated.

- Data Retrieval Strategy: specifies the way to retrieve target records. Full table scan is stable, suitable for scenarios where a large proportion of the data is to be retrieved. Condition matching is fast and can avoid unnecessary scans and is suitable for scenarios where only a small proportion of the data is to be retrieved.

- Row Limit: specifies the maximum number of rows processed per second.

- Data Volume Limit: specifies the maximum data volume processed per second.

- Use Primary Key for Cleanup: specifies whether to use the primary key for cleanup.

Remarks Optional. Additional information about the task, which cannot exceed 200 characters in length. - Partial Cleanup: specifies to clean up only tables that meet filter conditions in the source database.
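To make the relationship between a filter condition and a custom variable concrete, here is a minimal Python sketch of how a placeholder such as ${create_time} could be resolved from a time offset. How the console actually resolves variables is not described in this topic, so the variable definition format (a date format plus an offset in days) and the function names are assumptions made for illustration.

```python
from datetime import datetime, timedelta
from string import Template


def resolve_variables(variables, now):
    """Turn {name: (date_format, offset_days)} into concrete strings relative to `now`."""
    return {
        name: (now + timedelta(days=offset_days)).strftime(fmt)
        for name, (fmt, offset_days) in variables.items()
    }


def render_condition(condition, variables, now):
    """Substitute ${name} placeholders in a filter condition."""
    return Template(condition).substitute(resolve_variables(variables, now))


# Hypothetical variable: create_time means "30 days before the run time".
variables = {"create_time": ("%Y-%m-%d", -30)}
run_time = datetime(2024, 3, 31)
print(render_condition("time<'${create_time}'", variables, run_time))
# prints: time<'2024-03-01'
```

With a periodic task, an offset-based variable like this lets the same condition select a sliding window of old rows on every run, rather than a fixed cutoff date.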

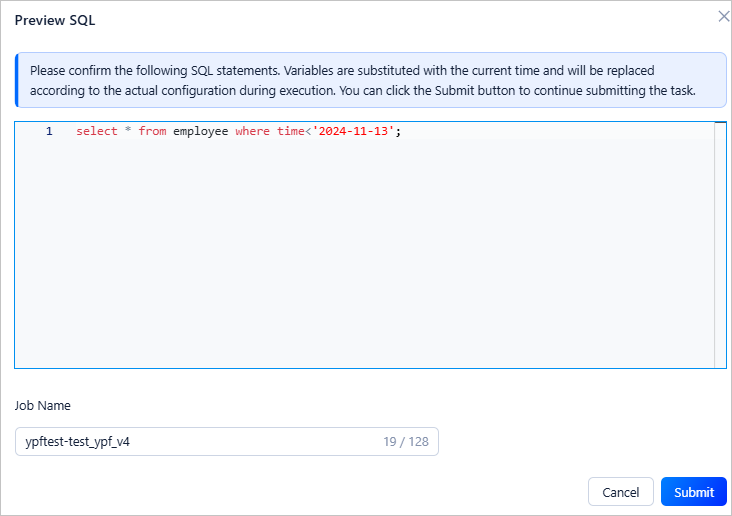
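The Row Limit and Use Primary Key for Cleanup settings can be pictured as a batched delete that pauses between batches. The sketch below shows the general idea against an in-memory SQLite database as a stand-in; it is not how OceanBase Cloud implements cleanup, and the throttled_cleanup function, its parameters, and the id column are assumptions made for this example. The internal LIMIT is only the sketch's way of batching and is unrelated to the rule that your own filter condition must not contain LIMIT.

```python
import sqlite3
import time


def throttled_cleanup(conn, table, condition, params, batch_size,
                      row_limit_per_sec, sleep=time.sleep):
    """Delete matching rows in primary-key batches, pausing to stay under a row rate.

    `table` and `condition` are interpolated into SQL, so they must be trusted input.
    """
    deleted = 0
    while True:
        start = time.monotonic()
        # Retrieve one batch of primary keys that match the filter condition.
        ids = [row[0] for row in conn.execute(
            f"SELECT id FROM {table} WHERE {condition} ORDER BY id LIMIT ?",
            (*params, batch_size))]
        if not ids:
            return deleted
        placeholders = ",".join("?" * len(ids))
        conn.execute(f"DELETE FROM {table} WHERE id IN ({placeholders})", ids)
        conn.commit()
        deleted += len(ids)
        # Pause so that this batch takes at least len(ids) / row_limit_per_sec seconds.
        min_duration = len(ids) / row_limit_per_sec
        elapsed = time.monotonic() - start
        if elapsed < min_duration:
            sleep(min_duration - elapsed)


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE employee (id INTEGER PRIMARY KEY, time TEXT)")
conn.executemany(
    "INSERT INTO employee (id, time) VALUES (?, ?)",
    [(i, "2023-01-01" if i <= 7 else "2025-01-01") for i in range(1, 11)],
)
removed = throttled_cleanup(conn, "employee", "time < ?", ("2024-01-01",),
                            batch_size=3, row_limit_per_sec=1000)
print(removed)  # prints: 7
```

Deleting by primary key in small batches keeps each transaction short, and the pause between batches is what bounds the load on the source database.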

Click Preview SQL to preview the SQL statements and specify a job name. Click Submit to complete the data cleanup task creation.

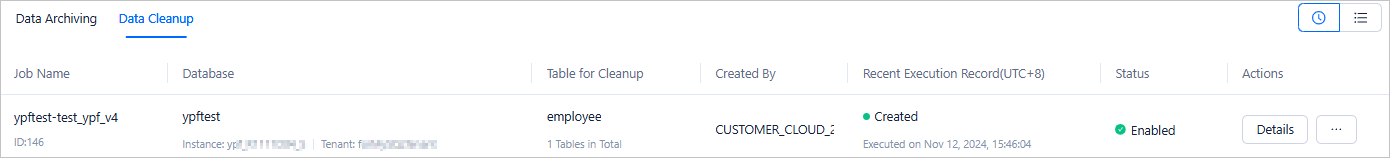

After the task is generated, you can view the task information in the Data Cleanup list.

View a data cleanup task

Go to Data Services > Data Lifecycle > Data Cleanup in the left-side navigation pane to view the list of data cleanup jobs and the basic information of each job.

In the data cleanup job list, click the name of the target job to view the details. You can also click the ··· icon in the Actions column to perform more actions on the job.

- Terminate: If the job is scheduled to run periodically or at regular intervals, you can terminate the job.

- Disable/Enable: If the job is scheduled to run periodically or at regular intervals, you can disable the job to temporarily stop its execution. After you re-enable the job, it resumes execution.

- Edit: If the job is disabled, you can edit it to update its content.

- Delete: If the job is completed or terminated, you can delete it.

- Details: View the details of the job.

- Initiate Again: Copy the job execution content to quickly generate a new job with the same configuration.

On the Job Information tab of the job details panel, view the job type, database name, variable configuration, and cleanup scope.

In the job details panel, click the Execution Records tab to view the job status and execution details.

In the job details panel, click the Operation Records tab to view the job status and records.

Import a data cleanup task

After migrating an instance from ApsaraDB for OceanBase to OceanBase Cloud, you can import the data cleanup tasks of the migrated instance to OceanBase Cloud.

Step 1: Export data cleanup tasks from ApsaraDB for OceanBase

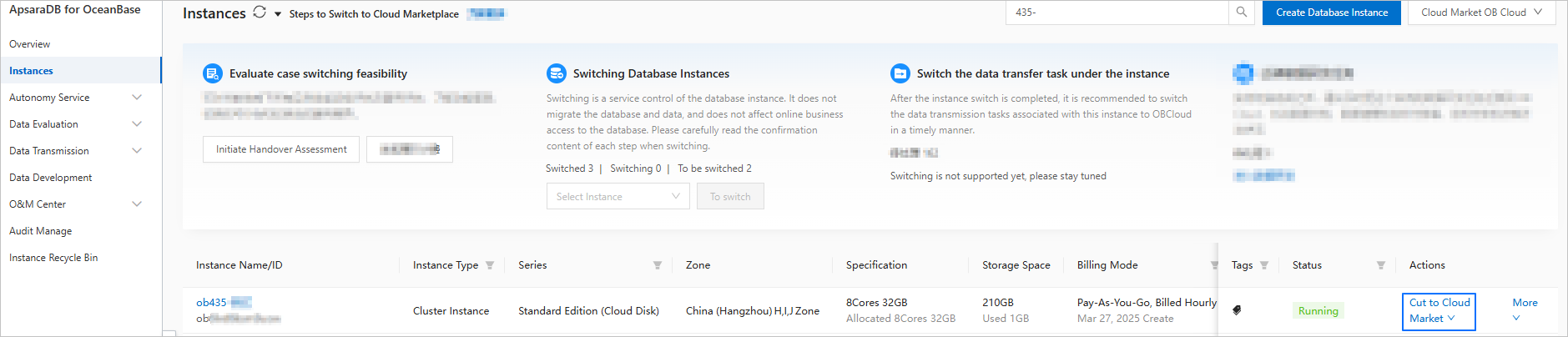

In the left-side navigation pane of the ApsaraDB for OceanBase console, click Instances.

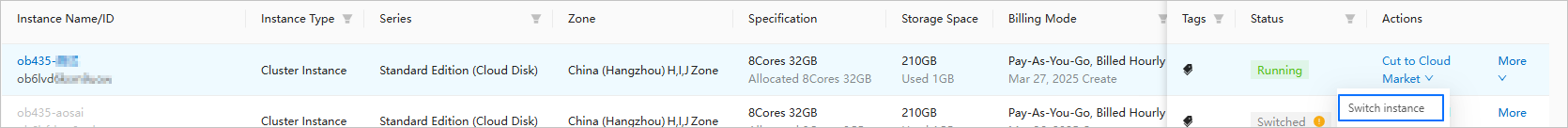

Click Cut to Cloud Market under Actions for the instance.

Click Processing data research and development tasks under Actions after the instance is switched.

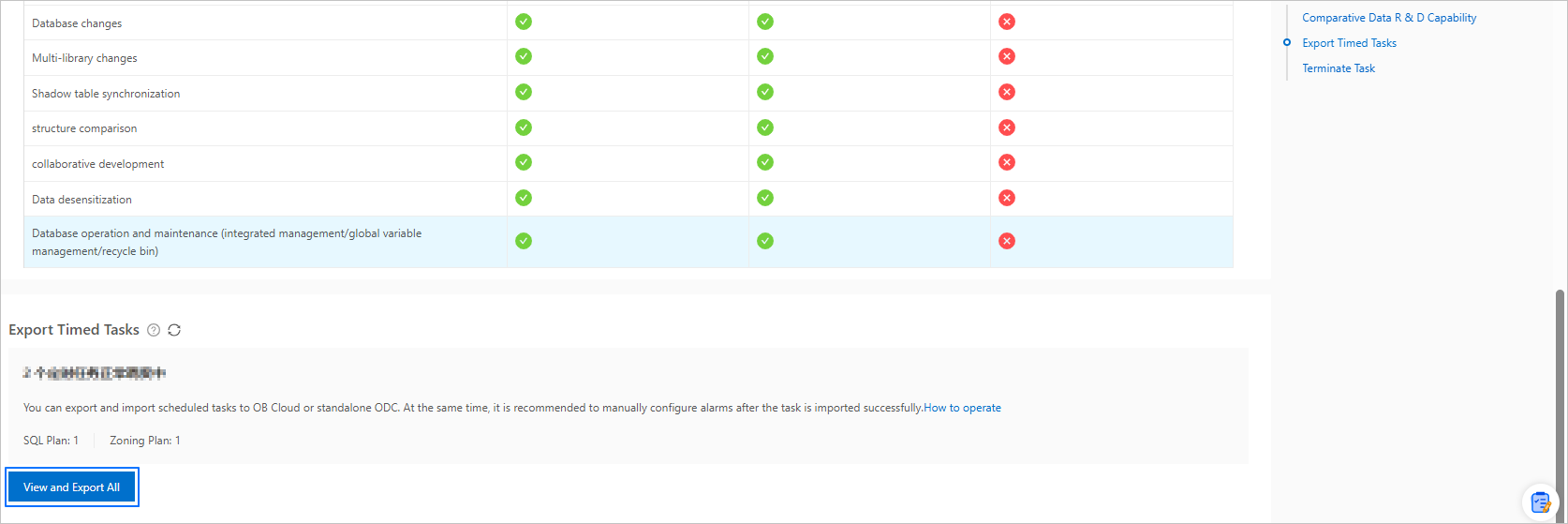

On the Processing data research and development tasks page, click View and Export All to export the scheduled tasks to your local machine.

Step 2: Import data cleanup tasks to OceanBase Cloud

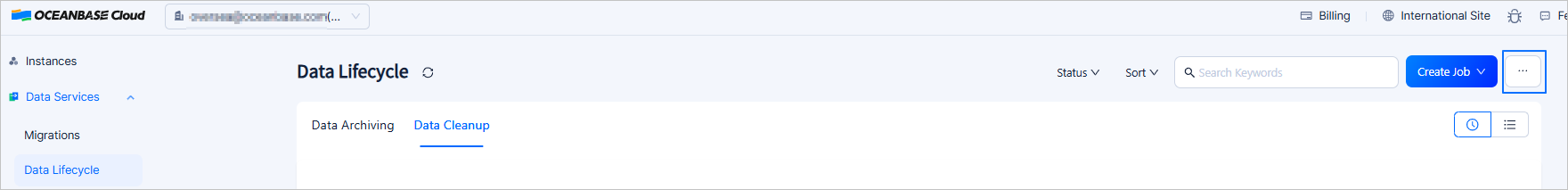

Log in to the OceanBase Cloud console, click Data Services > Data Lifecycle, and then click ... > Import Job on the Data Lifecycle page.

Upload the downloaded data cleanup configuration file to the import job page.