This topic describes how to build an application by using the Spring Batch framework and OceanBase Cloud to perform basic operations such as creating tables, inserting data, and querying data.

Download the java-oceanbase-springbatch sample project


Spring Batch application example for connecting to OceanBase Cloud (Oracle compatible mode)

Prerequisites

- You have registered an account on OceanBase Cloud and created an instance and an Oracle-compatible tenant. For more information, see Create an instance and Create a tenant.

- You have installed JDK 1.8 and Maven.

- You have installed IntelliJ IDEA.

Note

The code examples in this topic are run in IntelliJ IDEA 2021.3.2 (Community Edition). You can also choose your preferred tool to run the code examples.

Procedure

Note

The following steps are based on the Windows environment. If you are using a different operating system or compiler, the steps may vary slightly.

- Obtain the connection string of the OceanBase Cloud database.
- Import the java-oceanbase-springbatch project into IDEA.
- Modify the database connection information in the java-oceanbase-springbatch project.
- Run the java-oceanbase-springbatch project.

Step 1: Obtain the connection string of the OceanBase Cloud database

Log in to the OceanBase Cloud console. In the instance list, expand the information of the target instance, and in the target tenant, choose Connect > Get Connection String.

For more information, see Obtain a connection string.

Fill in the URL with the information of the created OceanBase Cloud database.

The URL for connecting to the Oracle compatible mode of the OceanBase Cloud database is as follows:

jdbc:oceanbase://$host:$port/$schema_name?user=$user_name&password=$password

Parameter description:

- $host: the connection address of the OceanBase Cloud database, for example, t********.********.oceanbase.cloud.
- $port: the connection port of the OceanBase Cloud database. The default value is 1521.
- $schema_name: the name of the schema to be accessed.
- $user_name: the account for accessing the database.
- $password: the password of the account.

For more information about the URL parameters, see Database URL.
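To make the URL format concrete, the following minimal Java sketch assembles the URL from its parts. JdbcUrlExample and buildUrl are hypothetical names that are not part of the sample project, and the values are the placeholders used in this topic. Because the user name and password are appended as raw query parameters, a password that contains characters such as & or = would break the URL; the sample project's application.properties instead supplies the user name and password through separate properties.

```java
/**
 * Hypothetical helper (not part of the sample project) that assembles the
 * connection URL for the Oracle compatible mode of OceanBase Cloud.
 */
public class JdbcUrlExample {

    // Follows the format jdbc:oceanbase://$host:$port/$schema_name?user=$user_name&password=$password.
    // The user name and password are appended as-is, without URL encoding.
    static String buildUrl(String host, int port, String schemaName,
                           String userName, String password) {
        return "jdbc:oceanbase://" + host + ":" + port + "/" + schemaName
                + "?user=" + userName + "&password=" + password;
    }

    public static void main(String[] args) {
        // Placeholder values from this topic; replace them with your own.
        String url = buildUrl("t5******.********.oceanbase.cloud", 1521, "sys",
                "oracle001", "******");
        System.out.println(url);
    }
}
```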

Step 2: Import the java-oceanbase-springbatch project into IDEA

Start IntelliJ IDEA and choose File > Open....

In the Open File or Project window that appears, select the project file and click OK.

IntelliJ IDEA automatically identifies various files in the project and displays the project structure, file list, module list, and dependency relationships in the Project tool window. The Project tool window is usually located on the left side of the IntelliJ IDEA interface and is open by default. If the Project tool window is closed, you can click View > Tool Windows > Project in the menu bar or press Alt + 1 to reopen it.

Note

When you import a project into IntelliJ IDEA, it automatically detects the pom.xml file in the project, downloads the required dependency libraries based on the described dependencies, and adds them to the project.

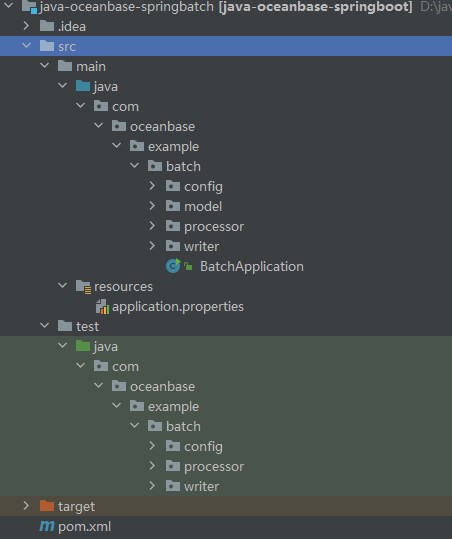

View the project.

Step 3: Modify the database connection information in the java-oceanbase-springbatch project

Modify the database connection information in the application.properties file based on the information obtained in Step 1: Obtain the connection string of the OceanBase Cloud database.

Here is an example:

- The database driver class is com.oceanbase.jdbc.Driver.
- The connection address of the OceanBase Cloud database is t5******.********.oceanbase.cloud.
- The access port is 1521.
- The name of the schema to be accessed is sys.
- The tenant account is oracle001.
- The password is ******.

Here is the sample code:

spring.datasource.driver-class-name=com.oceanbase.jdbc.Driver

spring.datasource.url=jdbc:oceanbase://t5******.********.oceanbase.cloud:1521/sys?characterEncoding=utf-8

spring.datasource.username=oracle001

spring.datasource.password=******

spring.jpa.show-sql=true

spring.jpa.hibernate.ddl-auto=update

spring.batch.job.enabled=false

logging.level.org.springframework=INFO

logging.level.com.example=DEBUG
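Before running the tests, you may want to confirm that the edited file contains all four connection settings. The following is a minimal sketch, assuming you pass the file contents in as a string; DataSourcePropertiesCheck, parse, and missingKeys are hypothetical names that are not part of the sample project. Spring Boot reads application.properties on its own, so this check is optional.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Properties;

/**
 * Hypothetical pre-flight check (not part of the sample project) that reports
 * which connection settings are missing or blank in application.properties.
 */
public class DataSourcePropertiesCheck {

    // The connection keys edited in Step 3.
    static final List<String> REQUIRED_KEYS = Arrays.asList(
            "spring.datasource.driver-class-name",
            "spring.datasource.url",
            "spring.datasource.username",
            "spring.datasource.password");

    // Parses text in .properties format, such as the contents of application.properties.
    static Properties parse(String text) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(text));
        } catch (IOException e) {
            // StringReader does no real I/O, but load() declares IOException.
            throw new UncheckedIOException(e);
        }
        return props;
    }

    // Returns the required keys whose values are missing or blank.
    static List<String> missingKeys(Properties props) {
        List<String> missing = new ArrayList<>();
        for (String key : REQUIRED_KEYS) {
            String value = props.getProperty(key);
            if (value == null || value.trim().isEmpty()) {
                missing.add(key);
            }
        }
        return missing;
    }

    public static void main(String[] args) {
        // The password line is deliberately left out to show a failed check.
        String text = "spring.datasource.driver-class-name=com.oceanbase.jdbc.Driver\n"
                + "spring.datasource.url=jdbc:oceanbase://t5******.********.oceanbase.cloud:1521/sys?characterEncoding=utf-8\n"
                + "spring.datasource.username=oracle001\n";
        Properties props = parse(text);
        System.out.println("Missing keys: " + missingKeys(props));
        boolean urlOk = props.getProperty("spring.datasource.url", "").startsWith("jdbc:oceanbase://");
        System.out.println("URL uses the jdbc:oceanbase:// prefix: " + urlOk);
    }
}
```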

Step 4: Run the java-oceanbase-springbatch project

Run the AddDescPeopleWriterTest.java file.

- Find the AddDescPeopleWriterTest.java file in the src > test > java > com > oceanbase > example > batch > writer directory.
- In the menu bar, choose Run > Run... > AddDescPeopleWriterTest.testWrite, or click the green triangle in the upper-right corner to run it.
- View the log information and output results in the IDEA console.

Data in the people_desc table:
PeopleDESC [name=John, age=25, desc=This is John with age 25]
PeopleDESC [name=Alice, age=30, desc=This is Alice with age 30]
Batch job execution completed.

Run the AddPeopleWriterTest.java file.

- Find the AddPeopleWriterTest.java file in the src > test > java > com > oceanbase > example > batch > writer directory.
- In the menu bar, choose Run > Run... > AddPeopleWriterTest.testWrite, or click the green triangle in the upper-right corner to run it.
- View the log information and output results in the IDEA console.

Data in the people table:
People [name=zhangsan, age=27]
People [name=lisi, age=35]
Batch job execution completed.

Project code

Click java-oceanbase-springbatch to download the project code, which is a compressed package named java-oceanbase-springbatch.

After decompressing it, you will find a folder named java-oceanbase-springbatch. The directory structure is as follows:

│  pom.xml
│
├─.idea
│
├─src
│  ├─main
│  │  ├─java
│  │  │  └─com
│  │  │     └─oceanbase
│  │  │        └─example
│  │  │           └─batch
│  │  │              ├─BatchApplication.java
│  │  │              │
│  │  │              ├─config
│  │  │              │  └─BatchConfig.java
│  │  │              │
│  │  │              ├─model
│  │  │              │  ├─People.java
│  │  │              │  └─PeopleDESC.java
│  │  │              │
│  │  │              ├─processor
│  │  │              │  └─AddPeopleDescProcessor.java
│  │  │              │
│  │  │              └─writer
│  │  │                 ├─AddDescPeopleWriter.java
│  │  │                 └─AddPeopleWriter.java
│  │  │
│  │  └─resources
│  │     └─application.properties
│  │
│  └─test
│     └─java
│        └─com
│           └─oceanbase
│              └─example
│                 └─batch
│                    ├─config
│                    │  └─BatchConfigTest.java
│                    │
│                    ├─processor
│                    │  └─AddPeopleDescProcessorTest.java
│                    │
│                    └─writer
│                       ├─AddDescPeopleWriterTest.java
│                       └─AddPeopleWriterTest.java
│
└─target

File description:

- pom.xml: the configuration file of the Maven project, which contains the dependencies, plugins, and build information of the project.
- .idea: the directory in which the IDE (integrated development environment) stores project-related configuration.
- src: the directory that stores the source code of the project.
- main: the directory that stores the main source code and resource files.
- java: the directory that stores the Java source code.
- com, oceanbase, example: the directories that make up the com.oceanbase.example package path.
- batch: the main package of the project.
- BatchApplication.java: the entry class of the application, which contains the main method.
- config: the folder that stores the configuration classes of the application.
- BatchConfig.java: the configuration class that defines the reader, processor, writer, step, and job of the batch job.
- model: the folder that stores the data model classes of the application.
- People.java: the data model class for a person (name and age).
- PeopleDESC.java: the data model class for a person with a description.
- processor: the folder that stores the processor classes of the application.
- AddPeopleDescProcessor.java: the processor class that converts People objects to PeopleDESC objects by adding a description.
- writer: the folder that stores the writer classes of the application.
- AddDescPeopleWriter.java: the writer class that writes PeopleDESC objects to the database.
- AddPeopleWriter.java: the writer class that writes People objects to the database.
- resources: the folder that stores the configuration files and other static resource files of the application.
- application.properties: the configuration file of the application, which holds the database connection and other settings.
- test: the directory that stores the test code and resource files.
- BatchConfigTest.java: the test class for BatchConfig.
- AddPeopleDescProcessorTest.java: the test class for AddPeopleDescProcessor.
- AddDescPeopleWriterTest.java: the test class for AddDescPeopleWriter.
- AddPeopleWriterTest.java: the test class for AddPeopleWriter.
- target: the directory that stores the compiled class files, JAR packages, and other build output.

Introduction to the pom.xml file

Note

If you only want to verify the sample, you can use the default code without any modifications. You can also modify the pom.xml file based on your specific requirements as explained below.

The content of the pom.xml configuration file is as follows:

File declaration statement.

This statement declares the file as an XML file that uses XML version 1.0 and the UTF-8 character encoding.

Sample code:

<?xml version="1.0" encoding="UTF-8"?>

Configure the namespaces and model version of the POM.

- Use xmlns to set the POM namespace to http://maven.apache.org/POM/4.0.0.
- Use xmlns:xsi to set the XML Schema instance namespace to http://www.w3.org/2001/XMLSchema-instance.
- Use xsi:schemaLocation to map the POM namespace http://maven.apache.org/POM/4.0.0 to the location of the POM XSD file, https://maven.apache.org/xsd/maven-4.0.0.xsd.
- Use the <modelVersion> element to set the POM model version to 4.0.0.

Sample code:

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 https://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>
</project>

Configure the parent information.

- Use <groupId> to set the group ID of the parent to org.springframework.boot.
- Use <artifactId> to set the artifact ID of the parent to spring-boot-starter-parent.
- Use <version> to set the version of the parent to 2.7.11.
- Use an empty <relativePath/> element so that Maven resolves the parent from the repository instead of looking for it in the local file system.

Sample code:

<parent>
    <groupId>org.springframework.boot</groupId>
    <artifactId>spring-boot-starter-parent</artifactId>
    <version>2.7.11</version>
    <relativePath/>
</parent>

Configure the basic information.

- Use <groupId> to set the group ID of the project to com.oceanbase.
- Use <artifactId> to set the artifact ID of the project to java-oceanbase-springboot.
- Use <version> to set the project version to 0.0.1-SNAPSHOT.
- Use <name> to set the project name to java-oceanbase-springbatch.
- Use <description> to describe the project as Demo project for Spring Batch.

Sample code:

<groupId>com.oceanbase</groupId>
<artifactId>java-oceanbase-springboot</artifactId>
<version>0.0.1-SNAPSHOT</version>
<name>java-oceanbase-springbatch</name>
<description>Demo project for Spring Batch</description>

Configure the Java version.

Set the Java version used by the project to 1.8.

Sample code:

<properties>
    <java.version>1.8</java.version>
</properties>

Configure the core dependencies.

Set the group ID of the dependency to org.springframework.boot and the artifact ID to spring-boot-starter. This is the core Spring Boot starter, which provides auto-configuration support, logging, and YAML support. Sample code:

<dependency>
    <groupId>org.springframework.boot</groupId>
    <artifactId>spring-boot-starter</artifactId>
</dependency>

Set the group ID of the dependency to org.springframework.boot and the artifact ID to spring-boot-starter-jdbc. Use this dependency to access the JDBC-related features provided by Spring Boot, including connection pools and data source configuration. Sample code:

<dependency>
    <groupId>org.springframework.boot</groupId>
    <artifactId>spring-boot-starter-jdbc</artifactId>
</dependency>

Set the group ID of the dependency to org.springframework.boot, the artifact ID to spring-boot-starter-test, and the scope to test. Use this dependency to access the testing frameworks and tools provided by Spring Boot, including JUnit, Mockito, and Hamcrest. Sample code:

<dependency>
    <groupId>org.springframework.boot</groupId>
    <artifactId>spring-boot-starter-test</artifactId>
    <scope>test</scope>
</dependency>

Set the group ID of the dependency to com.oceanbase, the artifact ID to oceanbase-client, and the version to 2.4.3. This is the OceanBase JDBC driver, which provides the com.oceanbase.jdbc.Driver class used for connections, queries, and transactions. Sample code:

<dependency>
    <groupId>com.oceanbase</groupId>
    <artifactId>oceanbase-client</artifactId>
    <version>2.4.3</version>
</dependency>

Set the group ID of the dependency to org.springframework.boot and the artifact ID to spring-boot-starter-batch. Use this dependency to access the Spring Batch processing features. Sample code:

<dependency>
    <groupId>org.springframework.boot</groupId>
    <artifactId>spring-boot-starter-batch</artifactId>
</dependency>

Set the group ID of the dependency to org.springframework.boot and the artifact ID to spring-boot-starter-data-jpa. Use this starter to access data through Spring Data JPA with Hibernate. Sample code:

<dependency>
    <groupId>org.springframework.boot</groupId>
    <artifactId>spring-boot-starter-data-jpa</artifactId>
</dependency>

Set the group ID of the dependency to org.apache.tomcat and the artifact ID to tomcat-jdbc. Use this dependency to access the Tomcat JDBC connection pool, including connection pool configuration, connection acquisition and release, and connection management. Sample code:

<dependency>
    <groupId>org.apache.tomcat</groupId>
    <artifactId>tomcat-jdbc</artifactId>
</dependency>

Set the group ID of the dependency to junit, the artifact ID to junit, the version to 4.10, and the scope to test. Use this dependency to write JUnit 4 unit tests. Sample code:

<dependency>
    <groupId>junit</groupId>
    <artifactId>junit</artifactId>
    <version>4.10</version>
    <scope>test</scope>
</dependency>

Set the group ID of the dependency to javax.activation, the artifact ID to javax.activation-api, and the version to 1.2.0. Use this dependency to introduce the JavaBeans Activation Framework (JAF) API. Sample code:

<dependency>
    <groupId>javax.activation</groupId>
    <artifactId>javax.activation-api</artifactId>
    <version>1.2.0</version>
</dependency>

Set the group ID of the dependency to jakarta.persistence, the artifact ID to jakarta.persistence-api, and the version to 2.2.3. Use this dependency to add the Jakarta Persistence API. Sample code:

<dependency>
    <groupId>jakarta.persistence</groupId>
    <artifactId>jakarta.persistence-api</artifactId>
    <version>2.2.3</version>
</dependency>

Configure the Maven plugins.

Set the group ID of the plugin to org.springframework.boot and the artifact ID to spring-boot-maven-plugin. Use this plugin to package the Spring Boot application into an executable JAR or WAR file that can be run directly. Sample code:

<build>
    <plugins>
        <plugin>
            <groupId>org.springframework.boot</groupId>
            <artifactId>spring-boot-maven-plugin</artifactId>
        </plugin>
    </plugins>
</build>

Introduction to the application.properties file

The application.properties file is used to configure database connections and other related settings. This includes database drivers, connection URLs, usernames, and passwords. It also contains configurations for JPA (Java Persistence API) and Spring Batch, as well as log level settings.

Database connection configuration.

- Use spring.datasource.driver-class-name to specify the database driver com.oceanbase.jdbc.Driver, which is used to establish a connection with OceanBase Cloud.
- Use spring.datasource.url to specify the URL for connecting to the database.
- Use spring.datasource.username to specify the username for connecting to the database.
- Use spring.datasource.password to specify the password for connecting to the database.

Sample code:

spring.datasource.driver-class-name=com.oceanbase.jdbc.Driver
spring.datasource.url=jdbc:oceanbase://host:port/schema_name?characterEncoding=utf-8
spring.datasource.username=user_name
spring.datasource.password=******

JPA configuration.

- Use spring.jpa.show-sql to specify whether to display SQL statements in the logs. Setting it to true displays the SQL statements.
- Use spring.jpa.hibernate.ddl-auto to specify how Hibernate handles DDL. Setting it to update makes Hibernate update the database schema to match the entity mappings when the application starts.

Sample code:

spring.jpa.show-sql=true
spring.jpa.hibernate.ddl-auto=update

Spring Batch configuration:

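Use spring.batch.job.enabled to specify whether Spring Boot automatically runs the Spring Batch jobs in the application context at startup. Setting it to false disables this automatic execution, so jobs run only when they are launched explicitly. Sample code:

spring.batch.job.enabled=false

Log configuration: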

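- Use logging.level.org.springframework to set the log level of the Spring framework to INFO.
- Use logging.level.com.example to set the log level of loggers in the com.example package to DEBUG. Note that the classes of this sample are in the com.oceanbase.example package, which this setting does not cover; to enable DEBUG logging for them, set logging.level.com.oceanbase.example=DEBUG instead.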

Sample code:

logging.level.org.springframework=INFO
logging.level.com.example=DEBUG
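Logger levels are hierarchical: a level set for a name such as com.example applies to loggers whose names extend that name at a dot boundary, unless a more specific name is configured, and all other loggers fall back to the root level (INFO by default in Spring Boot). The following sketch is a simplified model of that lookup, not the implementation used by Logback or Spring Boot; LoggerLevelLookup and effectiveLevel are hypothetical names. It shows that logging.level.com.example does not reach the sample's classes, which are in the com.oceanbase.example package.

```java
import java.util.HashMap;
import java.util.Map;

/**
 * Simplified model of hierarchical logger-level resolution: use the level of
 * the nearest configured ancestor name, or the root level if there is none.
 */
public class LoggerLevelLookup {

    // Walks up the dot-separated name, so "com.example" is an ancestor of
    // "com.example.Foo" but not of "com.oceanbase.example.Foo" or "com.examples.Foo".
    static String effectiveLevel(Map<String, String> configured, String loggerName, String rootLevel) {
        String name = loggerName;
        while (true) {
            String level = configured.get(name);
            if (level != null) {
                return level;
            }
            int dot = name.lastIndexOf('.');
            if (dot < 0) {
                return rootLevel;
            }
            name = name.substring(0, dot);
        }
    }

    public static void main(String[] args) {
        // The two entries from application.properties.
        Map<String, String> configured = new HashMap<>();
        configured.put("org.springframework", "INFO");
        configured.put("com.example", "DEBUG");

        System.out.println(effectiveLevel(configured,
                "org.springframework.jdbc.core.JdbcTemplate", "INFO"));
        // The sample's writer class is not under com.example, so it gets the root level.
        System.out.println(effectiveLevel(configured,
                "com.oceanbase.example.batch.writer.AddPeopleWriter", "INFO"));
    }
}
```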

Introduction to the BatchApplication.java file

The BatchApplication.java file is the entry point of the Spring Boot application.

The code in the BatchApplication.java file mainly includes the following parts:

Importing other classes and interfaces.

Declare that this file contains the following interfaces and classes:

- SpringApplication class: used to start the Spring Boot application.
- SpringBootApplication annotation: used to mark this class as the entry point of the Spring Boot application.

Sample code:

import org.springframework.boot.SpringApplication;
import org.springframework.boot.autoconfigure.SpringBootApplication;

Define the BatchApplication class.

Use the @SpringBootApplication annotation to mark the BatchApplication class as the entry point of the Spring Boot application. The static main method of the class is the entry point of the application; it calls SpringApplication.run to start the Spring Boot application. The class also declares a runBatchJob method intended for running the batch job; its body is empty in the sample.

Sample code:

@SpringBootApplication
public class BatchApplication {
    public static void main(String[] args) {
        // Start the Spring Boot application
        SpringApplication.run(BatchApplication.class, args);
    }

    // Placeholder for running the batch job; empty in the sample
    public void runBatchJob() {
    }
}

Introduction to the BatchConfig.java file

The BatchConfig.java file is used to configure components such as steps, readers, processors, and writers for batch processing jobs.

The code in the BatchConfig.java file mainly includes the following parts:

Import other classes and interfaces.

Declare the interfaces and classes included in the current file:

- People class: stores the information of a person read from the database.
- PeopleDESC class: stores the description information of a person after conversion or processing.
- AddPeopleDescProcessor class: converts a People object that has been read to a PeopleDESC object. This class implements the ItemProcessor interface.
- AddDescPeopleWriter class: writes PeopleDESC objects to the target location. This class implements the ItemWriter interface.
- Job interface: represents a batch job.
- Step interface: represents a step in a job.
- EnableBatchProcessing annotation: a Spring Batch configuration annotation that enables and configures Spring Batch processing.
- JobBuilderFactory class: used to create and configure jobs.
- StepBuilderFactory class: used to create and configure steps.
- RunIdIncrementer class: an incrementer that increases the run ID job parameter each time the job is launched.
- ItemProcessor interface: used to process or convert the items that have been read.
- ItemReader interface: used to read items from the data source.
- ItemWriter interface: used to write the processed or converted items to the specified target location.
- JdbcCursorItemReader class: used to read data from the database through a cursor over the query result set.
- Autowired annotation: used for dependency injection.
- Bean annotation: used to create and configure beans.
- ComponentScan annotation: used to specify the packages or classes to be scanned for components.
- Configuration annotation: used to mark a class as a configuration class.
- EnableAutoConfiguration annotation: used to enable Spring Boot auto-configuration.
- SpringBootApplication annotation: used to mark the class as the entry point of a Spring Boot application.
- BeanPropertyRowMapper class: maps each row of a result set to an object of the specified class by matching column names to property names.
- DataSource interface: represents the source of database connections.

Sample code:

import com.oceanbase.example.batch.model.People;
import com.oceanbase.example.batch.model.PeopleDESC;
import com.oceanbase.example.batch.processor.AddPeopleDescProcessor;
import com.oceanbase.example.batch.writer.AddDescPeopleWriter;
import org.springframework.batch.core.Job;
import org.springframework.batch.core.Step;
import org.springframework.batch.core.configuration.annotation.EnableBatchProcessing;
import org.springframework.batch.core.configuration.annotation.JobBuilderFactory;
import org.springframework.batch.core.configuration.annotation.StepBuilderFactory;
import org.springframework.batch.core.launch.support.RunIdIncrementer;
import org.springframework.batch.item.ItemProcessor;
import org.springframework.batch.item.ItemReader;
import org.springframework.batch.item.ItemWriter;
import org.springframework.batch.item.database.JdbcCursorItemReader;
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.boot.autoconfigure.EnableAutoConfiguration;
import org.springframework.boot.autoconfigure.SpringBootApplication;
import org.springframework.context.annotation.Bean;
import org.springframework.context.annotation.ComponentScan;
import org.springframework.context.annotation.Configuration;
import org.springframework.jdbc.core.BeanPropertyRowMapper;
import javax.sql.DataSource;

Define the BatchConfig class.

This is a simple Spring Batch job. It defines the methods for reading, processing, and writing data and combines these steps into a job. Through Spring Batch annotations and auto-configuration, the @Bean methods in the configuration class create the component instances, which step1 then uses to read, process, and write the data.

- Use @Configuration to indicate that this class is a configuration class.
- Use @EnableBatchProcessing to enable Spring Batch processing. This annotation automatically creates necessary beans such as JobRepository and JobLauncher.
- Use @SpringBootApplication to mark the class as the starting point of a Spring Boot application.
- Use @ComponentScan to specify the package to be scanned for components. Spring scans and registers all components in this package (here, com.oceanbase.example.batch.writer) and its subpackages.
- Use @EnableAutoConfiguration to enable Spring Boot auto-configuration.

Sample code:

@Configuration
@EnableBatchProcessing
@SpringBootApplication
@ComponentScan("com.oceanbase.example.batch.writer")
@EnableAutoConfiguration
public class BatchConfig {
}

Inject the dependencies.

Use the @Autowired annotation to inject JobBuilderFactory, StepBuilderFactory, and DataSource into member variables of the BatchConfig class. JobBuilderFactory is a factory class for creating and configuring jobs (Job), StepBuilderFactory is a factory class for creating and configuring steps (Step), and DataSource is an interface for obtaining database connections.

Sample code:

@Autowired
private JobBuilderFactory jobBuilderFactory;

@Autowired
private StepBuilderFactory stepBuilderFactory;

@Autowired
private DataSource dataSource;

Define the @Bean methods.

Use the @Bean annotation to define the methods that create the reader, processor, writer, step, and job of the batch job.

- The peopleReader method creates an ItemReader component instance. This component uses JdbcCursorItemReader to read People objects from the database: it sets the data source to dataSource, uses a BeanPropertyRowMapper as the RowMapper that maps database rows to People objects, and sets the SQL query to SELECT * FROM people.
- The addPeopleDescProcessor method creates an ItemProcessor component instance that uses AddPeopleDescProcessor to convert People objects to PeopleDESC objects.
- The addDescPeopleWriter method creates an ItemWriter component instance that uses AddDescPeopleWriter to write PeopleDESC objects to the target location.
- The step1 method creates a Step component instance named step1. It obtains a step builder from stepBuilderFactory.get, sets the chunk size to 10, sets the reader, processor, and writer to the ItemReader, ItemProcessor, and ItemWriter components, and finally calls build to build and return the configured Step.
- The importJob method creates a Job component instance named importJob. It obtains a job builder from jobBuilderFactory.get, sets the incrementer to RunIdIncrementer, starts the job flow with step1, ends the flow, and finally calls build to build and return the configured Job.

Sample code:

@Bean
public ItemReader<People> peopleReader() {
    JdbcCursorItemReader<People> reader = new JdbcCursorItemReader<>();
    reader.setDataSource(dataSource);
    // Map each row to a People object by matching column names to properties
    reader.setRowMapper(new BeanPropertyRowMapper<>(People.class));
    reader.setSql("SELECT * FROM people");
    return reader;
}

@Bean
public ItemProcessor<People, PeopleDESC> addPeopleDescProcessor() {
    return new AddPeopleDescProcessor();
}

@Bean
public ItemWriter<PeopleDESC> addDescPeopleWriter() {
    return new AddDescPeopleWriter();
}

@Bean
public Step step1(ItemReader<People> reader, ItemProcessor<People, PeopleDESC> processor,
                  ItemWriter<PeopleDESC> writer) {
    return stepBuilderFactory.get("step1")
            .<People, PeopleDESC>chunk(10)  // write and commit every 10 items
            .reader(reader)
            .processor(processor)
            .writer(writer)
            .build();
}

@Bean
public Job importJob(Step step1) {
    return jobBuilderFactory.get("importJob")
            .incrementer(new RunIdIncrementer())
            .flow(step1)
            .end()
            .build();
}
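The step1 bean is chunk-oriented: items are read and processed one at a time, and the writer receives them in lists of up to 10 items (the chunk size), with one transaction per chunk. The following plain-Java sketch models only that control flow; ChunkSketch, runStep, Person, and PersonDesc are hypothetical stand-ins, and the sketch ignores transactions, restarts, skips, and the filtering that Spring Batch applies when a processor returns null.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Iterator;
import java.util.List;
import java.util.function.Consumer;
import java.util.function.Function;

/**
 * Plain-Java model of chunk-oriented processing: read and process items one
 * at a time, and pass them to the writer in lists of at most chunkSize items.
 */
public class ChunkSketch {

    // Simplified stand-ins for the People and PeopleDESC model classes.
    static class Person {
        final String name;
        final int age;

        Person(String name, int age) {
            this.name = name;
            this.age = age;
        }
    }

    static class PersonDesc {
        final String name;
        final int age;
        final String desc;

        PersonDesc(String name, int age, String desc) {
            this.name = name;
            this.age = age;
            this.desc = desc;
        }
    }

    // Returns the number of chunks passed to the writer.
    static <I, O> int runStep(Iterator<I> reader, Function<I, O> processor,
                              Consumer<List<O>> writer, int chunkSize) {
        int chunks = 0;
        List<O> chunk = new ArrayList<>();
        while (reader.hasNext()) {
            chunk.add(processor.apply(reader.next()));
            if (chunk.size() == chunkSize) {
                writer.accept(chunk);      // one write call per full chunk
                chunk = new ArrayList<>();
                chunks++;
            }
        }
        if (!chunk.isEmpty()) {            // flush the last, partial chunk
            writer.accept(chunk);
            chunks++;
        }
        return chunks;
    }

    public static void main(String[] args) {
        List<Person> people = Arrays.asList(new Person("John", 25), new Person("Alice", 30));
        // Same description format as AddPeopleDescProcessor.
        Function<Person, PersonDesc> processor =
                p -> new PersonDesc(p.name, p.age, "This is " + p.name + " with age " + p.age);
        int chunks = runStep(people.iterator(), processor,
                items -> items.forEach(d -> System.out.println(d.desc)), 10);
        System.out.println("Chunks written: " + chunks);
    }
}
```

Because the writer is called once per chunk, the DROP TABLE and CREATE TABLE statements in AddDescPeopleWriter would run again for every chunk; if the people table held more than 10 rows, each later chunk would drop the rows written by the earlier ones.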


Introduction to the People.java file

The People.java file creates a People class data model to represent a person's information. This class includes two private member variables, name and age, along with corresponding getter and setter methods. Finally, the toString method is overridden to print the object's information. Here, name represents the person's name, and age represents the person's age. You can use the getter and setter methods to obtain and set the values of these attributes.

This class provides a way to store and pass data for batch processing programs. In batch processing, the People object is used to store data, and the setter method is used to set data, while the getter method is used to obtain data.

Code:

public class People {
    private String name;
    private int age;

    // Getters and setters
    public String getName() {
        return name;
    }

    public void setName(String name) {
        this.name = name;
    }

    public int getAge() {
        return age;
    }

    public void setAge(int age) {
        this.age = age;
    }

    @Override
    public String toString() {
        return "People [name=" + name + ", age=" + age + "]";
    }
}
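The peopleReader bean in BatchConfig relies on BeanPropertyRowMapper, which fills these setters from the columns of each result-set row. The following sketch is a simplified model of that mapping, not Spring's implementation: RowMappingSketch and mapRow are hypothetical, the sketch matches each column to a set<Column> method while ignoring case, and it performs none of the type conversion or underscore-to-camelCase handling that BeanPropertyRowMapper provides.

```java
import java.lang.reflect.Method;
import java.util.LinkedHashMap;
import java.util.Locale;
import java.util.Map;

/**
 * Simplified model of column-to-property mapping: each column is matched to a
 * one-argument setter by name, ignoring case, and the setter is called.
 */
public class RowMappingSketch {

    // Copy of the People model class, reduced to what the sketch needs.
    public static class People {
        private String name;
        private int age;

        public String getName() { return name; }
        public void setName(String name) { this.name = name; }
        public int getAge() { return age; }
        public void setAge(int age) { this.age = age; }
    }

    // Creates a bean and calls set<Column>(value) for each column.
    // Columns without a matching setter are ignored; values are not converted.
    static <T> T mapRow(Map<String, Object> row, Class<T> type) {
        try {
            T bean = type.getDeclaredConstructor().newInstance();
            for (Map.Entry<String, Object> column : row.entrySet()) {
                String setterName = "set" + column.getKey().toLowerCase(Locale.ROOT);
                for (Method method : type.getMethods()) {
                    if (method.getParameterCount() == 1
                            && method.getName().toLowerCase(Locale.ROOT).equals(setterName)) {
                        method.invoke(bean, column.getValue());  // Integer is unboxed for setAge(int)
                    }
                }
            }
            return bean;
        } catch (ReflectiveOperationException e) {
            throw new IllegalStateException("Cannot map row to " + type.getName(), e);
        }
    }

    public static void main(String[] args) {
        // Oracle compatible mode typically reports unquoted column names in upper case.
        Map<String, Object> row = new LinkedHashMap<>();
        row.put("NAME", "zhangsan");
        row.put("AGE", 27);
        People people = mapRow(row, People.class);
        System.out.println(people.getName() + " " + people.getAge());
    }
}
```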

Introduction to the PeopleDESC.java file

The PeopleDESC.java file creates a PeopleDESC class data model to represent a person's information. The PeopleDESC class has four attributes: name, age, desc, and id, which represent the person's name, age, description, and identifier, respectively. This class includes corresponding getter and setter methods to access and set the attribute values. The toString method is overridden to return the string representation of the class, including the name, age, and description.

Similar to the People class, the PeopleDESC class is used to store and pass data in batch processing programs.

Code:

public class PeopleDESC {
    private String name;
    private int age;
    private String desc;
    private int id;

    public String getName() {
        return name;
    }

    public void setName(String name) {
        this.name = name;
    }

    public int getAge() {
        return age;
    }

    public void setAge(int age) {
        this.age = age;
    }

    public String getDesc() {
        return desc;
    }

    public void setDesc(String desc) {
        this.desc = desc;
    }

    public int getId() {
        return id;
    }

    public void setId(int id) {
        this.id = id;
    }

    @Override
    public String toString() {
        return "PeopleDESC [name=" + name + ", age=" + age + ", desc=" + desc + "]";
    }
}

Introduction to the AddPeopleDescProcessor.java file

The AddPeopleDescProcessor.java file defines a class named AddPeopleDescProcessor that implements the ItemProcessor interface to convert a People object to a PeopleDESC object.

The code in the AddPeopleDescProcessor.java file mainly includes the following parts:

Import other classes and interfaces.

Declare the interfaces and classes included in this file:

- People class: used to store the information of a person read from the database.
- PeopleDESC class: used to store the description information of a person after conversion or processing.
- ItemProcessor interface: used to process or convert the items that have been read.

Code:

import com.oceanbase.example.batch.model.People;
import com.oceanbase.example.batch.model.PeopleDESC;
import org.springframework.batch.item.ItemProcessor;

Define the AddPeopleDescProcessor class.

The AddPeopleDescProcessor class implements the ItemProcessor interface to convert a People object to a PeopleDESC object, which is the input-processing logic of the batch job.

In the process method of this class, a PeopleDESC object named desc is created first. The name and age attributes of the input People object (item) are then copied to desc, and the desc attribute of desc is set to a description generated from those attributes. Finally, the processed PeopleDESC object is returned. The id attribute is not set, so it keeps its default value of 0.

Code:

public class AddPeopleDescProcessor implements ItemProcessor<People, PeopleDESC> {
    @Override
    public PeopleDESC process(People item) throws Exception {
        PeopleDESC desc = new PeopleDESC();
        desc.setName(item.getName());
        desc.setAge(item.getAge());
        // Build a description such as "This is John with age 25"
        desc.setDesc("This is " + item.getName() + " with age " + item.getAge());
        return desc;
    }
}

Introduction to the AddDescPeopleWriter.java file

The AddDescPeopleWriter.java file defines the AddDescPeopleWriter class, which implements the ItemWriter interface and writes PeopleDESC objects to the database.

The AddDescPeopleWriter.java file contains the following main parts:

Import other classes and interfaces.

Declare the following interfaces and classes in the current file:

- PeopleDESC class: used to store the description information of a person after conversion or processing.
- ItemWriter interface: used to write the processed or converted items to the specified target location.
- Autowired annotation: used for dependency injection.
- JdbcTemplate class: provides methods for executing SQL statements.
- List interface: holds the items passed to the write method.

Code:

import com.oceanbase.example.batch.model.PeopleDESC;
import org.springframework.batch.item.ItemWriter;
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.jdbc.core.JdbcTemplate;
import java.util.List;

Define the AddDescPeopleWriter class.

Use the @Autowired annotation to automatically inject the JdbcTemplate instance, which is used to execute database operations when data is written. Code:

@Autowired
private JdbcTemplate jdbcTemplate;

In the write method, first execute DROP TABLE people_desc to delete any existing people_desc table. Oracle-compatible mode reports an error when the table does not exist, so that error is caught and ignored. Then, execute CREATE TABLE people_desc (id INT PRIMARY KEY, name VARCHAR2(255), age INT, description VARCHAR2(255)) to create the people_desc table with four columns: id, name, age, and description. Finally, traverse the input List<? extends PeopleDESC> and use INSERT INTO people_desc (id, name, age, description) VALUES (?, ?, ?, ?) to insert the attribute values of each PeopleDESC object into the table. Because the table is dropped and re-created on every call, people_desc contains only the items from the most recent write call. Code:

@Override
public void write(List<? extends PeopleDESC> items) throws Exception {
    // Drop the table if it exists. DROP TABLE fails when the table does not exist yet
    // (for example, on the first run), so that error is ignored here.
    try {
        jdbcTemplate.execute("DROP TABLE people_desc");
    } catch (org.springframework.dao.DataAccessException e) {
        // Assume the table does not exist yet; nothing to drop.
    }
    // Create the table
    String createTableSql = "CREATE TABLE people_desc (id INT PRIMARY KEY, name VARCHAR2(255), age INT, description VARCHAR2(255))";
    jdbcTemplate.execute(createTableSql);
    // Insert one row per item
    String sql = "INSERT INTO people_desc (id, name, age, description) VALUES (?, ?, ?, ?)";
    for (PeopleDESC item : items) {
        jdbcTemplate.update(sql, item.getId(), item.getName(), item.getAge(), item.getDesc());
    }
}
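In Oracle-compatible mode, DROP TABLE follows Oracle semantics and raises ORA-00942 when the table does not exist, so the first run of this writer needs some way to tolerate a missing table. Besides catching the exception in Java, a common Oracle idiom is to wrap the DROP in an anonymous PL/SQL block that ignores only SQLCODE -942. The following minimal sketch builds such a block as a string; `DropIfExists` and `dropIfExistsSql` are hypothetical names, and the sketch assumes that your OceanBase Oracle-compatible tenant accepts anonymous blocks with EXECUTE IMMEDIATE through `jdbcTemplate.execute` and reports a missing table as -942, which you should verify on your instance.

```java
import java.util.regex.Pattern;

// Hypothetical helper: builds an anonymous PL/SQL block that drops a table
// and ignores only "table or view does not exist" (SQLCODE -942).
final class DropIfExists {

    // Accept only plain unquoted identifiers so that the table name cannot inject SQL.
    private static final Pattern IDENTIFIER = Pattern.compile("[A-Za-z][A-Za-z0-9_$#]*");

    static String dropIfExistsSql(String tableName) {
        if (!IDENTIFIER.matcher(tableName).matches()) {
            throw new IllegalArgumentException("Invalid table name: " + tableName);
        }
        return "BEGIN\n"
                + "  EXECUTE IMMEDIATE 'DROP TABLE " + tableName + "';\n"
                + "EXCEPTION\n"
                + "  WHEN OTHERS THEN\n"
                + "    IF SQLCODE != -942 THEN\n"
                + "      RAISE;\n"   // re-raise every error other than "table does not exist"
                + "    END IF;\n"
                + "END;";
    }

    public static void main(String[] args) {
        // In the writer, this string would be passed to jdbcTemplate.execute(...).
        System.out.println(dropIfExistsSql("people_desc"));
    }
}
```

Compared with catching DataAccessException in Java, this variant lets every error other than a missing table propagate, at the cost of a round trip through the PL/SQL engine.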

Introduction to the AddPeopleWriter.java file

The AddPeopleWriter.java file defines the AddPeopleWriter class, which implements the ItemWriter<People> interface and writes People objects to the people table in the database.

The code in the AddPeopleWriter.java file mainly includes the following parts:

Import other classes and interfaces.

The file imports the following classes and interfaces:

- People class: stores personnel information.
- ItemWriter interface: writes processed or converted items to the specified target.
- @Autowired annotation: used for dependency injection.
- JdbcTemplate class: provides methods for executing SQL statements.
- @Component annotation: marks this class as a Spring component so that it can be injected into other beans.
- List interface: holds the items passed to the write method.

Code:

import com.oceanbase.example.batch.model.People;
import org.springframework.batch.item.ItemWriter;
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.jdbc.core.JdbcTemplate;
import org.springframework.stereotype.Component;
import java.util.List;

Define the AddPeopleWriter class and annotate it with @Component.

Use the @Autowired annotation to automatically inject a JdbcTemplate instance, which is used to execute database operations when data is written. Code:

@Autowired
private JdbcTemplate jdbcTemplate;

In the write method, first execute DROP TABLE people to delete any existing people table. Oracle-compatible mode reports an error when the table does not exist, so that error is caught and ignored. Then, execute CREATE TABLE people (name VARCHAR2(255), age INT) to create the people table with two columns, name and age. Finally, traverse the input List<? extends People> and use INSERT INTO people (name, age) VALUES (?, ?) to insert the attribute values of each People object into the table. Code:

@Override
public void write(List<? extends People> items) throws Exception {
    // Drop the table if it exists. DROP TABLE fails when the table does not exist yet
    // (for example, on the first run), so that error is ignored here.
    try {
        jdbcTemplate.execute("DROP TABLE people");
    } catch (org.springframework.dao.DataAccessException e) {
        // Assume the table does not exist yet; nothing to drop.
    }
    // Create the table
    String createTableSql = "CREATE TABLE people (name VARCHAR2(255), age INT)";
    jdbcTemplate.execute(createTableSql);
    // Insert one row per item
    String sql = "INSERT INTO people (name, age) VALUES (?, ?)";
    for (People item : items) {
        jdbcTemplate.update(sql, item.getName(), item.getAge());
    }
}
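The write method issues one jdbcTemplate.update call, and therefore one round trip, per item. JdbcTemplate also provides `batchUpdate(String sql, List<Object[]> batchArgs)`, which sends all rows of a chunk as a single JDBC batch. The following minimal sketch prepares that argument list for the people table; `PeopleBatchArgs` and `toBatchArgs` are hypothetical names, the nested `People` class is a cut-down stand-in for the model class, and the actual `batchUpdate` call appears only as a comment because it needs a live JdbcTemplate.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical helper: converts People items into the argument rows expected by
// JdbcTemplate.batchUpdate(String sql, List<Object[]> batchArgs).
final class PeopleBatchArgs {

    static final String INSERT_SQL = "INSERT INTO people (name, age) VALUES (?, ?)";

    // Cut-down stand-in for com.oceanbase.example.batch.model.People.
    static final class People {
        final String name;
        final int age;

        People(String name, int age) {
            this.name = name;
            this.age = age;
        }
    }

    // One Object[] per row; the element order must match the ? placeholders in INSERT_SQL.
    static List<Object[]> toBatchArgs(List<? extends People> items) {
        List<Object[]> batchArgs = new ArrayList<>(items.size());
        for (People item : items) {
            batchArgs.add(new Object[] {item.name, item.age});
        }
        return batchArgs;
    }

    public static void main(String[] args) {
        List<People> chunk = Arrays.asList(new People("zhangsan", 27), new People("lisi", 35));
        List<Object[]> batchArgs = toBatchArgs(chunk);
        // In AddPeopleWriter.write, this would become:
        // jdbcTemplate.batchUpdate(INSERT_SQL, batchArgs);
        for (Object[] row : batchArgs) {
            System.out.println(Arrays.toString(row)); // [zhangsan, 27], then [lisi, 35]
        }
    }
}
```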

Introduction to the BatchConfigTest.java file

The BatchConfigTest.java file is a JUnit test class that launches the batch job configured in BatchConfig and verifies that it completes successfully.

The code in the BatchConfigTest.java file mainly includes the following parts:

Import other classes and interfaces.

The file imports the following classes and interfaces:

- Assert class: asserts test results.
- @Test annotation: marks a method as a test method.
- @RunWith annotation: specifies the test runner.
- Job interface: represents a batch job.
- JobExecution class: represents one execution of a batch job.
- JobParameters class: represents the parameters of a batch job.
- JobParametersBuilder class: builds the parameters of a batch job.
- JobLauncher interface: starts a batch job.
- @Autowired annotation: used for dependency injection.
- @SpringBootTest annotation: marks the test class as a Spring Boot test.
- SpringRunner class: the test runner specified by @RunWith.
- BatchStatus enum: the status of a job execution, such as COMPLETED.

Code:

import org.junit.Assert;
import org.junit.jupiter.api.Test;
import org.junit.runner.RunWith;
import org.springframework.batch.core.BatchStatus;
import org.springframework.batch.core.Job;
import org.springframework.batch.core.JobExecution;
import org.springframework.batch.core.JobParameters;
import org.springframework.batch.core.JobParametersBuilder;
import org.springframework.batch.core.launch.JobLauncher;
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.boot.test.context.SpringBootTest;
import org.springframework.test.context.junit4.SpringRunner;

BatchStatus must be imported from org.springframework.batch.core, because JobExecution.getStatus() returns org.springframework.batch.core.BatchStatus. A constant of a different enum type, such as javax.batch.runtime.BatchStatus.COMPLETED, is never equal to it, so the assertion would always fail.

Define the BatchConfigTest class.

The @SpringBootTest annotation starts the Spring Boot application context for an integration test. Because @Test is the JUnit 5 (Jupiter) annotation, the test runs on JUnit 5, where @SpringBootTest registers the Spring extension and the JUnit 4 @RunWith(SpringRunner.class) annotation has no effect. In the testJob method, the JobLauncherTestUtils helper class, which is defined inside this test class, starts the batch job, and an assertion verifies the execution status of the job.

Create a JobLauncherTestUtils instance. JobLauncherTestUtils is a private inner class of the test (not the class of the same name in spring-batch-test) and is not a Spring bean, so it cannot be injected with @Autowired and is instantiated directly. Code:

private final JobLauncherTestUtils jobLauncherTestUtils = new JobLauncherTestUtils();

Use the @Test annotation to mark the testJob method as a test method. In this method, first create a JobParameters object, then call jobLauncherTestUtils.launchJob to start the batch job, and use Assert.assertEquals to assert that the execution status of the job is COMPLETED. The timestamp parameter makes the parameters unique for each run, because Spring Batch refuses to rerun a job instance that has already completed with the same identifying parameters. Code:

@Test
public void testJob() throws Exception {
    JobParameters jobParameters = new JobParametersBuilder()
            .addString("jobParam", "paramValue")
            // Unique value so that every run creates a new job instance
            .addLong("timestamp", System.currentTimeMillis())
            .toJobParameters();
    JobExecution jobExecution = jobLauncherTestUtils.launchJob(jobParameters);
    Assert.assertEquals(BatchStatus.COMPLETED, jobExecution.getStatus());
}

Use the @Autowired annotation to automatically inject a JobLauncher instance. Code:

@Autowired
private JobLauncher jobLauncher;

Use the @Autowired annotation to automatically inject a Job instance. Code:

@Autowired
private Job job;

Define an inner class named JobLauncherTestUtils to help start the batch job. Its launchJob method calls jobLauncher.run to start the job and returns the resulting JobExecution. Code:

private class JobLauncherTestUtils {
    public JobExecution launchJob(JobParameters jobParameters) throws Exception {
        return jobLauncher.run(job, jobParameters);
    }
}

Introduction to the AddPeopleDescProcessorTest.java file

The AddPeopleDescProcessorTest.java file is a JUnit test class that tests the AddPeopleDescProcessor processor.

The code in the AddPeopleDescProcessorTest.java file mainly includes the following parts:

Import other classes and interfaces.

The file imports the following classes and interfaces:

- People class: stores the personnel information read from the database.
- PeopleDESC class: stores the description information after the personnel information is converted or processed.
- @Test annotation: marks a method as a test method.
- @RunWith annotation: specifies the test runner.
- @Autowired annotation: performs dependency injection.
- @SpringBootTest annotation: marks the test class as a Spring Boot test.
- SpringRunner class: the test runner specified by @RunWith.

Sample code:

import com.oceanbase.example.batch.model.People;
import com.oceanbase.example.batch.model.PeopleDESC;
import org.junit.jupiter.api.Test;
import org.junit.runner.RunWith;
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.boot.test.context.SpringBootTest;
import org.springframework.test.context.junit4.SpringRunner;

Define the AddPeopleDescProcessorTest class.

Use the @SpringBootTest annotation and the SpringRunner runner for Spring Boot integration testing.

Use the @Autowired annotation to automatically inject the AddPeopleDescProcessor instance. Sample code:

@Autowired
private AddPeopleDescProcessor processor;

Use the @Test annotation to mark the testProcess method as a test method. In this method, first create a People object, then call processor.process to process it, and assign the result to a PeopleDESC object. In the full code, the setters and assertions are commented out, so this test only checks that process runs without throwing an exception. Sample code:

@Test
public void testProcess() throws Exception {
    People people = new People();
    PeopleDESC desc = processor.process(people);
}

Introduction to the AddDescPeopleWriterTest.java file

The AddDescPeopleWriterTest.java file is a JUnit test class that tests the write logic of AddDescPeopleWriter.

The code in the AddDescPeopleWriterTest.java file mainly includes the following parts:

Import other classes and interfaces.

The file imports the following classes and interfaces:

- PeopleDESC class: stores the description information of a person after the person information is converted or processed.
- Assert class: asserts test results.
- @Test annotation: marks a method as a test method.
- @RunWith annotation: specifies the test runner.
- @Autowired annotation: used for dependency injection.
- @SpringBootTest annotation: marks the test class as a Spring Boot test.
- JdbcTemplate class: provides methods for executing SQL statements.
- SpringRunner class: the test runner specified by @RunWith.
- ArrayList class: creates an empty list.
- List interface: holds the items to write and the query results.

Sample code:

import com.oceanbase.example.batch.model.PeopleDESC;
import org.junit.Assert;
import org.junit.jupiter.api.Test;
import org.junit.runner.RunWith;
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.boot.test.context.SpringBootTest;
import org.springframework.jdbc.core.JdbcTemplate;
import org.springframework.test.context.junit4.SpringRunner;
import java.util.ArrayList;
import java.util.List;

Define the AddDescPeopleWriterTest class. Use the @SpringBootTest annotation and the SpringRunner runner to write an integration test for Spring Boot.

Use @Autowired to inject instances. The @Autowired annotation automatically injects the AddDescPeopleWriter and JdbcTemplate instances. Sample code:

@Autowired
private AddDescPeopleWriter writer;
@Autowired
private JdbcTemplate jdbcTemplate;

Use @Test to test data insertion and output. The testWrite method is annotated with @Test to indicate that it is a test method. In this method, first create an empty peopleDescList and add two PeopleDESC objects to it. Then, call writer.write to write the data in the list to the database. Use jdbcTemplate to query the people_desc table, and use an assertion to verify the number of rows. Finally, print the query results to the console, followed by a message indicating that the job has completed.

Insert data into the people_desc table. First, an empty list peopleDescList of PeopleDESC objects is created. Then, two PeopleDESC objects, desc1 and desc2, are created, their properties are set, and they are added to peopleDescList. The write method of writer is called to write the objects in peopleDescList to the people_desc table. JdbcTemplate executes SELECT COUNT(*) FROM people_desc to obtain the number of records in the people_desc table and assigns the result to count. Finally, Assert.assertEquals checks that count equals 2. Code:

List<PeopleDESC> peopleDescList = new ArrayList<>();
PeopleDESC desc1 = new PeopleDESC();
desc1.setId(1);
desc1.setName("John");
desc1.setAge(25);
desc1.setDesc("This is John with age 25");
peopleDescList.add(desc1);
PeopleDESC desc2 = new PeopleDESC();
desc2.setId(2);
desc2.setName("Alice");
desc2.setAge(30);
desc2.setDesc("This is Alice with age 30");
peopleDescList.add(desc2);
writer.write(peopleDescList);
String selectSql = "SELECT COUNT(*) FROM people_desc";
int count = jdbcTemplate.queryForObject(selectSql, Integer.class);
Assert.assertEquals(2, count);

Query the data in the people_desc table. JdbcTemplate executes SELECT * FROM people_desc, and a lambda expression maps each row of the result. In the lambda expression, rs.getInt and rs.getString read the column values from the result set and set them on a new PeopleDESC object, which is added to the resultDesc list. Then, the line people_desc table data: is printed, a for loop traverses the resultDesc list, and System.out.println prints each PeopleDESC object. At the end, a completion message is printed. Code:

List<PeopleDESC> resultDesc = jdbcTemplate.query("SELECT * FROM people_desc", (rs, rowNum) -> {
    PeopleDESC desc = new PeopleDESC();
    desc.setId(rs.getInt("id"));
    desc.setName(rs.getString("name"));
    desc.setAge(rs.getInt("age"));
    desc.setDesc(rs.getString("description"));
    return desc;
});
System.out.println("people_desc table data:");
for (PeopleDESC desc : resultDesc) {
    System.out.println(desc);
}
// Output a message after the job completes
System.out.println("Batch Job execution completed.");
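The count assertion in testWrite expects exactly 2 rows. This holds because AddDescPeopleWriter.write drops and re-creates people_desc on every call, so the table keeps only the items from the most recent call. In the batch job, step1 is configured with chunk(10), so Spring Batch calls write once per chunk of up to 10 processed items; with more than 10 rows in people, only the last chunk would remain in people_desc. The following framework-free sketch simulates that behavior, with an in-memory list standing in for the table; `ChunkSimulation` and its methods are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical simulation of step1: items are written in chunks, and the writer
// "drops and re-creates" its table (here, clears a list) on every write call.
final class ChunkSimulation {

    private final List<Integer> table = new ArrayList<>();

    // Mirrors AddDescPeopleWriter.write: reset the table, then insert the chunk.
    void write(List<Integer> chunk) {
        table.clear();       // DROP TABLE + CREATE TABLE
        table.addAll(chunk); // one INSERT per item
    }

    // Mirrors a chunk-oriented step: hand the writer chunkSize items at a time.
    static List<Integer> runStep(int itemCount, int chunkSize) {
        ChunkSimulation writer = new ChunkSimulation();
        List<Integer> chunk = new ArrayList<>();
        for (int item = 1; item <= itemCount; item++) {
            chunk.add(item);
            if (chunk.size() == chunkSize) {
                writer.write(chunk);
                chunk = new ArrayList<>();
            }
        }
        if (!chunk.isEmpty()) {
            writer.write(chunk); // last, partial chunk
        }
        return writer.table;
    }

    public static void main(String[] args) {
        System.out.println(runStep(2, 10));  // [1, 2]
        System.out.println(runStep(25, 10)); // [21, 22, 23, 24, 25]
    }
}
```

If all rows should be kept, the DROP and CREATE statements would typically move out of write, for example into a step or listener that runs once before the job.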

Introduction to the AddPeopleWriterTest.java file

The AddPeopleWriterTest.java file is a JUnit test class that tests the write logic of AddPeopleWriter.

The AddPeopleWriterTest.java file contains the following parts:

Import other classes and interfaces.

The file imports the following classes and interfaces:

- People class: stores the personnel information read from the database.
- @Test annotation: marks a method as a test method.
- @RunWith annotation: specifies the test runner.
- @Autowired annotation: used to inject dependencies.
- @SpringBootApplication annotation: marks a class as the entry point of a Spring Boot application; this test class also carries it.
- @SpringBootTest annotation: marks the test class as a Spring Boot test.
- @ComponentScan annotation: specifies the package to scan for components.
- JdbcTemplate class: provides methods for executing SQL statements.
- SpringRunner class: the test runner specified by @RunWith.
- ArrayList class: creates an empty list.
- List interface: holds the items to write and the query results.

The following example shows the code:

import com.oceanbase.example.batch.model.People;
import org.junit.jupiter.api.Test;
import org.junit.runner.RunWith;
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.boot.autoconfigure.SpringBootApplication;
import org.springframework.boot.test.context.SpringBootTest;
import org.springframework.context.annotation.ComponentScan;
import org.springframework.jdbc.core.JdbcTemplate;
import org.springframework.test.context.junit4.SpringRunner;
import java.util.ArrayList;
import java.util.List;

Define the AddPeopleWriterTest class. The @SpringBootTest annotation and the SpringRunner runner are used for integration testing of the Spring Boot application, and the @ComponentScan annotation specifies the package to scan.

Inject instances by using @Autowired. The @Autowired annotation automatically injects the AddPeopleWriter and JdbcTemplate instances. Sample code:

@Autowired
private AddPeopleWriter addPeopleWriter;
@Autowired
private JdbcTemplate jdbcTemplate;

Use the @Test annotation to test data insertion and output.

Insert data into the people table. First, create an empty list peopleList of People objects. Then, create two People objects, person1 and person2, set their name and age properties, and add them to peopleList. After that, call the write method of addPeopleWriter with peopleList to write these People objects to the database. Code:

List<People> peopleList = new ArrayList<>();
People person1 = new People();
person1.setName("zhangsan");
person1.setAge(27);
peopleList.add(person1);
People person2 = new People();
person2.setName("lisi");
person2.setAge(35);
peopleList.add(person2);
addPeopleWriter.write(peopleList);

Output the data in the people table. JdbcTemplate executes SELECT * FROM people, and a lambda expression maps each row: rs.getString and rs.getInt read the column values from the result set and set them on a new People object, which is added to the result list. Then, the line people table data: is printed, a for loop traverses the result list, and System.out.println prints each People object. Finally, the message Batch Job execution completed. is printed. Code:

List<People> result = jdbcTemplate.query("SELECT * FROM people", (rs, rowNum) -> {
    People person = new People();
    person.setName(rs.getString("name"));
    person.setAge(rs.getInt("age"));
    return person;
});
System.out.println("people table data:");
for (People person : result) {
    System.out.println(person);
}
// Output a message after the job completes
System.out.println("Batch Job execution completed.");

Full code

pom.xml

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 https://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<parent>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-parent</artifactId>

<version>2.7.11</version>

<relativePath/> <!-- lookup parent from repository -->

</parent>

<groupId>com.oceanbase</groupId>

<artifactId>java-oceanbase-springboot</artifactId>

<version>0.0.1-SNAPSHOT</version>

<name>java-oceanbase-springbatch</name>

<description>Demo project for Spring Batch</description>

<properties>

<java.version>1.8</java.version>

</properties>

<dependencies>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter</artifactId>

</dependency>

<dependency>

<groupId>com.oceanbase</groupId>

<artifactId>oceanbase-client</artifactId>

<version>2.4.3</version>

</dependency>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-jdbc</artifactId>

</dependency>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-test</artifactId>

<scope>test</scope>

</dependency>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-batch</artifactId>

</dependency>

<dependency>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-starter-data-jpa</artifactId>

</dependency>

<dependency>

<groupId>org.apache.tomcat</groupId>

<artifactId>tomcat-jdbc</artifactId>

</dependency>

<dependency>

<groupId>junit</groupId>

<artifactId>junit</artifactId>

<version>4.10</version>

<scope>test</scope>

</dependency>

<dependency>

<groupId>javax.activation</groupId>

<artifactId>javax.activation-api</artifactId>

<version>1.2.0</version>

</dependency>

<dependency>

<groupId>jakarta.persistence</groupId>

<artifactId>jakarta.persistence-api</artifactId>

<version>2.2.3</version>

</dependency>

</dependencies>

<build>

<plugins>

<plugin>

<groupId>org.springframework.boot</groupId>

<artifactId>spring-boot-maven-plugin</artifactId>

</plugin>

</plugins>

</build>

</project>

application.properties

# Database configuration

spring.datasource.driver-class-name=com.oceanbase.jdbc.Driver

spring.datasource.url=jdbc:oceanbase://host:port/schema_name?characterEncoding=utf-8

spring.datasource.username=user_name

spring.datasource.password=

# JPA

spring.jpa.show-sql=true

spring.jpa.hibernate.ddl-auto=update

# Spring Batch

spring.batch.job.enabled=false

# Logging

logging.level.org.springframework=INFO

logging.level.com.example=DEBUG

BatchApplication.java

package com.oceanbase.example.batch;

import org.springframework.boot.SpringApplication;

import org.springframework.boot.autoconfigure.SpringBootApplication;

@SpringBootApplication

public class BatchApplication {

public static void main(String[] args) {

SpringApplication.run(BatchApplication.class, args);

}

public void runBatchJob() {

}

}

BatchConfig.java

package com.oceanbase.example.batch.config;

import com.oceanbase.example.batch.model.People;

import com.oceanbase.example.batch.model.PeopleDESC;

import com.oceanbase.example.batch.processor.AddPeopleDescProcessor;

import com.oceanbase.example.batch.writer.AddDescPeopleWriter;

import org.springframework.batch.core.Job;

import org.springframework.batch.core.Step;

import org.springframework.batch.core.configuration.annotation.EnableBatchProcessing;

import org.springframework.batch.core.configuration.annotation.JobBuilderFactory;

import org.springframework.batch.core.configuration.annotation.StepBuilderFactory;

import org.springframework.batch.core.launch.support.RunIdIncrementer;

import org.springframework.batch.item.ItemProcessor;

import org.springframework.batch.item.ItemReader;

import org.springframework.batch.item.ItemWriter;

import org.springframework.batch.item.database.JdbcCursorItemReader;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.boot.autoconfigure.EnableAutoConfiguration;

import org.springframework.boot.autoconfigure.SpringBootApplication;

import org.springframework.context.annotation.Bean;

import org.springframework.context.annotation.ComponentScan;

import org.springframework.context.annotation.Configuration;

import org.springframework.jdbc.core.BeanPropertyRowMapper;

import javax.sql.DataSource;

//import javax.activation.DataSource;

@Configuration

@EnableBatchProcessing

@SpringBootApplication

@ComponentScan("com.oceanbase.example.batch.writer")

@EnableAutoConfiguration

public class BatchConfig {

@Autowired

private JobBuilderFactory jobBuilderFactory;

@Autowired

private StepBuilderFactory stepBuilderFactory;

@Autowired

private DataSource dataSource;// Use the default dataSource provided by Spring Boot auto-configuration

@Bean

public ItemReader<People> peopleReader() {

JdbcCursorItemReader<People> reader = new JdbcCursorItemReader<>();

reader.setDataSource((javax.sql.DataSource) dataSource);

reader.setRowMapper(new BeanPropertyRowMapper<>(People.class));

reader.setSql("SELECT * FROM people");

return reader;

}

@Bean

public ItemProcessor<People, PeopleDESC> addPeopleDescProcessor() {

return new AddPeopleDescProcessor();

}

@Bean

public ItemWriter<PeopleDESC> addDescPeopleWriter() {

return new AddDescPeopleWriter();

}

@Bean

public Step step1(ItemReader<People> reader, ItemProcessor<People, PeopleDESC> processor,

ItemWriter<PeopleDESC> writer) {

return stepBuilderFactory.get("step1")

.<People, PeopleDESC>chunk(10)

.reader(reader)

.processor(processor)

.writer(writer)

.build();

}

@Bean

public Job importJob(Step step1) {

return jobBuilderFactory.get("importJob")

.incrementer(new RunIdIncrementer())

.flow(step1)

.end()

.build();

}

}

People.java

package com.oceanbase.example.batch.model;

public class People {

private String name;

private int age;

// getters and setters

public String getName() {

return name;

}

public void setName(String name) {

this.name = name;

}

public int getAge() {

return age;

}

public void setAge(int age) {

this.age = age;

}

@Override

public String toString() {

return "People [name=" + name + ", age=" + age + "]";

}

// Getters and setters

}

PeopleDESC.java

package com.oceanbase.example.batch.model;

public class PeopleDESC {

private String name;

private int age;

private String desc;

private int id;

public String getName() {

return name;

}

public void setName(String name) {

this.name = name;

}

public int getAge() {

return age;

}

public void setAge(int age) {

this.age = age;

}

public String getDesc() {

return desc;

}

public void setDesc(String desc) {

this.desc = desc;

}

public int getId() {

return id;

}

public void setId(int id) {

this.id = id;

}

@Override

public String toString() {

return "PeopleDESC [name=" + name + ", age=" + age + ", desc=" + desc + "]";

}

}

AddPeopleDescProcessor.java

package com.oceanbase.example.batch.processor;

import com.oceanbase.example.batch.model.People;

import com.oceanbase.example.batch.model.PeopleDESC;

import org.springframework.batch.item.ItemProcessor;

public class AddPeopleDescProcessor implements ItemProcessor<People, PeopleDESC> {

@Override

public PeopleDESC process(People item) throws Exception {

PeopleDESC desc = new PeopleDESC();

desc.setName(item.getName());

desc.setAge(item.getAge());

desc.setDesc("This is " + item.getName() + " with age " + item.getAge());

return desc;

}

}

AddDescPeopleWriter.java

package com.oceanbase.example.batch.writer;

import com.oceanbase.example.batch.model.PeopleDESC;

import org.springframework.batch.item.ItemWriter;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.jdbc.core.JdbcTemplate;

import java.util.List;

public class AddDescPeopleWriter implements ItemWriter<PeopleDESC> {

@Autowired

private JdbcTemplate jdbcTemplate;

@Override

public void write(List<? extends PeopleDESC> items) throws Exception {

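// Delete the table if it exists. DROP TABLE fails when the table does not exist yet, so that error is ignored.

try {

jdbcTemplate.execute("DROP TABLE people_desc");

} catch (org.springframework.dao.DataAccessException e) {

// Assume the table does not exist yet; nothing to drop.

}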

// create table statement

String createTableSql = "CREATE TABLE people_desc (id INT PRIMARY KEY, name VARCHAR2(255), age INT, description VARCHAR2(255))";

jdbcTemplate.execute(createTableSql);

for (PeopleDESC item : items) {

String sql = "INSERT INTO people_desc (id, name, age, description) VALUES (?, ?, ?, ?)";

jdbcTemplate.update(sql, item.getId(), item.getName(), item.getAge(), item.getDesc());

}

}

}

AddPeopleWriter.java

package com.oceanbase.example.batch.writer;

import com.oceanbase.example.batch.model.People;

import org.springframework.batch.item.ItemWriter;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.jdbc.core.JdbcTemplate;

import org.springframework.stereotype.Component;

import java.util.List;

@Component

public class AddPeopleWriter implements ItemWriter<People> {

@Autowired

private JdbcTemplate jdbcTemplate;

@Override

public void write(List<? extends People> items) throws Exception {

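// Delete the table if it exists. DROP TABLE fails when the table does not exist yet, so that error is ignored.

try {

jdbcTemplate.execute("DROP TABLE people");

} catch (org.springframework.dao.DataAccessException e) {

// Assume the table does not exist yet; nothing to drop.

}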

// CREATE TABLE statement

String createTableSql = "CREATE TABLE people (name VARCHAR2(255), age INT)";

jdbcTemplate.execute(createTableSql);

for (People item : items) {

String sql = "INSERT INTO people (name, age) VALUES (?, ?)";

jdbcTemplate.update(sql, item.getName(), item.getAge());

}

}

}

BatchConfigTest.java

package com.oceanbase.example.batch.config;

import org.junit.Assert;

import org.junit.jupiter.api.Test;

import org.junit.runner.RunWith;

import org.springframework.batch.core.Job;

import org.springframework.batch.core.JobExecution;

import org.springframework.batch.core.JobParameters;

import org.springframework.batch.core.JobParametersBuilder;

import org.springframework.batch.core.launch.JobLauncher;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.boot.test.context.SpringBootTest;

import org.springframework.test.context.junit4.SpringRunner;

import org.springframework.batch.core.BatchStatus;

@RunWith(SpringRunner.class)

@SpringBootTest

public class BatchConfigTest {

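// JobLauncherTestUtils is a private inner class, not a Spring bean, so it is instantiated instead of autowired.

private final JobLauncherTestUtils jobLauncherTestUtils = new JobLauncherTestUtils();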

@Test

public void testJob() throws Exception {

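JobParameters jobParameters = new JobParametersBuilder()

.addString("jobParam", "paramValue")

// Unique value so that every run creates a new job instance

.addLong("timestamp", System.currentTimeMillis())

.toJobParameters();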

JobExecution jobExecution = jobLauncherTestUtils.launchJob(jobParameters);

Assert.assertEquals(BatchStatus.COMPLETED, jobExecution.getStatus());

}

@Autowired

private JobLauncher jobLauncher;

@Autowired

private Job job;

private class JobLauncherTestUtils {

public JobExecution launchJob(JobParameters jobParameters) throws Exception {

return jobLauncher.run(job, jobParameters);

}

}

}

AddPeopleDescProcessorTest.java

package com.oceanbase.example.batch.processor;

import com.oceanbase.example.batch.model.People;

import com.oceanbase.example.batch.model.PeopleDESC;

import org.junit.jupiter.api.Test;

import org.junit.runner.RunWith;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.boot.test.context.SpringBootTest;

import org.springframework.test.context.junit4.SpringRunner;

@RunWith(SpringRunner.class)

@SpringBootTest

public class AddPeopleDescProcessorTest {

@Autowired

private AddPeopleDescProcessor processor;

@Test

public void testProcess() throws Exception {

People people = new People();

// people.setName("John");

// people.setAge(25);

PeopleDESC desc = processor.process(people);

// Assert.assertEquals("John", desc.getName());

// Assert.assertEquals(25, desc.getAge());

// Assert.assertEquals("This is John with age 25", desc.getDesc());

}

}

AddDescPeopleWriterTest.java

package com.oceanbase.example.batch.writer;

import com.oceanbase.example.batch.model.PeopleDESC;

import org.junit.Assert;

import org.junit.jupiter.api.Test;

import org.junit.runner.RunWith;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.boot.test.context.SpringBootTest;

import org.springframework.jdbc.core.JdbcTemplate;

import org.springframework.test.context.junit4.SpringRunner;

import java.util.ArrayList;

import java.util.List;

@RunWith(SpringRunner.class)

@SpringBootTest

public class AddDescPeopleWriterTest {

@Autowired

private AddDescPeopleWriter writer;

@Autowired

private JdbcTemplate jdbcTemplate;

@Test

public void testWrite() throws Exception {

// Insert data into the people_desc table

List<PeopleDESC> peopleDescList = new ArrayList<>();

PeopleDESC desc1 = new PeopleDESC();

desc1.setId(1);

desc1.setName("John");

desc1.setAge(25);

desc1.setDesc("This is John with age 25");

peopleDescList.add(desc1);

PeopleDESC desc2 = new PeopleDESC();

desc2.setId(2);

desc2.setName("Alice");

desc2.setAge(30);

desc2.setDesc("This is Alice with age 30");

peopleDescList.add(desc2);

writer.write(peopleDescList);

String selectSql = "SELECT COUNT(*) FROM people_desc";

int count = jdbcTemplate.queryForObject(selectSql, Integer.class);

Assert.assertEquals(2, count);

// Output data from the people_desc table

List<PeopleDESC> resultDesc = jdbcTemplate.query("SELECT * FROM people_desc", (rs, rowNum) -> {

PeopleDESC desc = new PeopleDESC();

desc.setId(rs.getInt("id"));

desc.setName(rs.getString("name"));

desc.setAge(rs.getInt("age"));

desc.setDesc(rs.getString("description"));

return desc;

});

System.out.println("people_desc table data:");

for (PeopleDESC desc : resultDesc) {

System.out.println(desc);

}

// Output information after the job is completed

System.out.println("Batch Job execution completed.");

}

}

AddPeopleWriterTest.java

package com.oceanbase.example.batch.writer;

import com.oceanbase.example.batch.model.People;

import org.junit.jupiter.api.Test;

import org.junit.runner.RunWith;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.boot.autoconfigure.SpringBootApplication;

import org.springframework.boot.test.context.SpringBootTest;

import org.springframework.context.annotation.ComponentScan;

import org.springframework.jdbc.core.JdbcTemplate;

import org.springframework.test.context.junit4.SpringRunner;

import java.util.ArrayList;

import java.util.List;

@RunWith(SpringRunner.class)

@SpringBootTest

@SpringBootApplication

@ComponentScan("com.oceanbase.example.batch.writer")

public class AddPeopleWriterTest {

@Autowired

private AddPeopleWriter addPeopleWriter;

@Autowired

private JdbcTemplate jdbcTemplate;

@Test

public void testWrite() throws Exception {

// Insert data into the people table

List<People> peopleList = new ArrayList<>();

People person1 = new People();

person1.setName("zhangsan");

person1.setAge(27);

peopleList.add(person1);

People person2 = new People();

person2.setName("lisi");

person2.setAge(35);

peopleList.add(person2);

addPeopleWriter.write(peopleList);

// Query and output the result

List<People> result = jdbcTemplate.query("SELECT * FROM people", (rs, rowNum) -> {

People person = new People();

person.setName(rs.getString("name"));

person.setAge(rs.getInt("age"));

return person;

});

System.out.println("people table data:");

for (People person : result) {

System.out.println(person);

}

// Output information after the job is completed

System.out.println("Batch Job execution completed.");

}

}

References

For more information about OceanBase Connector/J, see OceanBase JDBC driver.