This topic describes how to build an application by using Proxool connection pool, OceanBase Connector/J, and OceanBase Cloud. The application can perform basic database operations, such as creating tables, inserting, deleting, updating, and querying data.

Download the proxool-oceanbase-client sample project

Download the proxool-oceanbase-client sample project  Connect to OceanBase Cloud using Proxool connection pool (Oracle compatible mode)

Connect to OceanBase Cloud using Proxool connection pool (Oracle compatible mode)

Prerequisites

You have registered an OceanBase Cloud account, created an instance and an Oracle-compatible tenant. For more information, see Create an instance and Create a tenant.

You have obtained the connection string of the target Oracle-compatible tenant. For more information, see Obtain the connection string.

You have installed JDK 1.8 and Maven.

You have installed IntelliJ IDEA.

Note

The code examples in this topic are run in IntelliJ IDEA Community Edition 2021.3.2. You can also choose any other tool that you prefer to run the code examples.

Procedure

Note

The following steps describe how to compile and run the proxool-oceanbase-client project in the Windows environment by using IntelliJ IDEA Community Edition 2021.3.2. If you use a different operating system or compiler, the steps may vary.

Step 1: Import the proxool-oceanbase-client project into IntelliJ IDEA

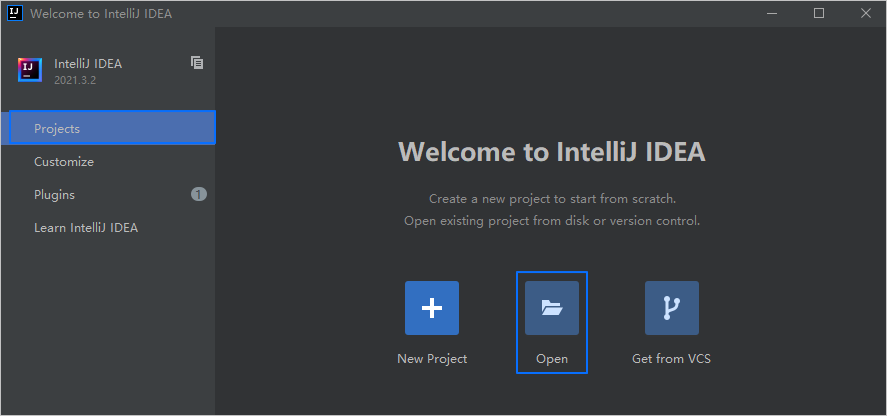

Start IntelliJ IDEA.

On the welcome page, click Open. Navigate to the project directory, select the root directory of the project, and click OK.

IntelliJ IDEA automatically detects the project type and loads the project.

Note

When you import a Maven project by using IntelliJ IDEA, it automatically detects the

pom.xmlfile in the project, downloads the required dependency libraries based on the described dependencies in the file, and adds them to the project.

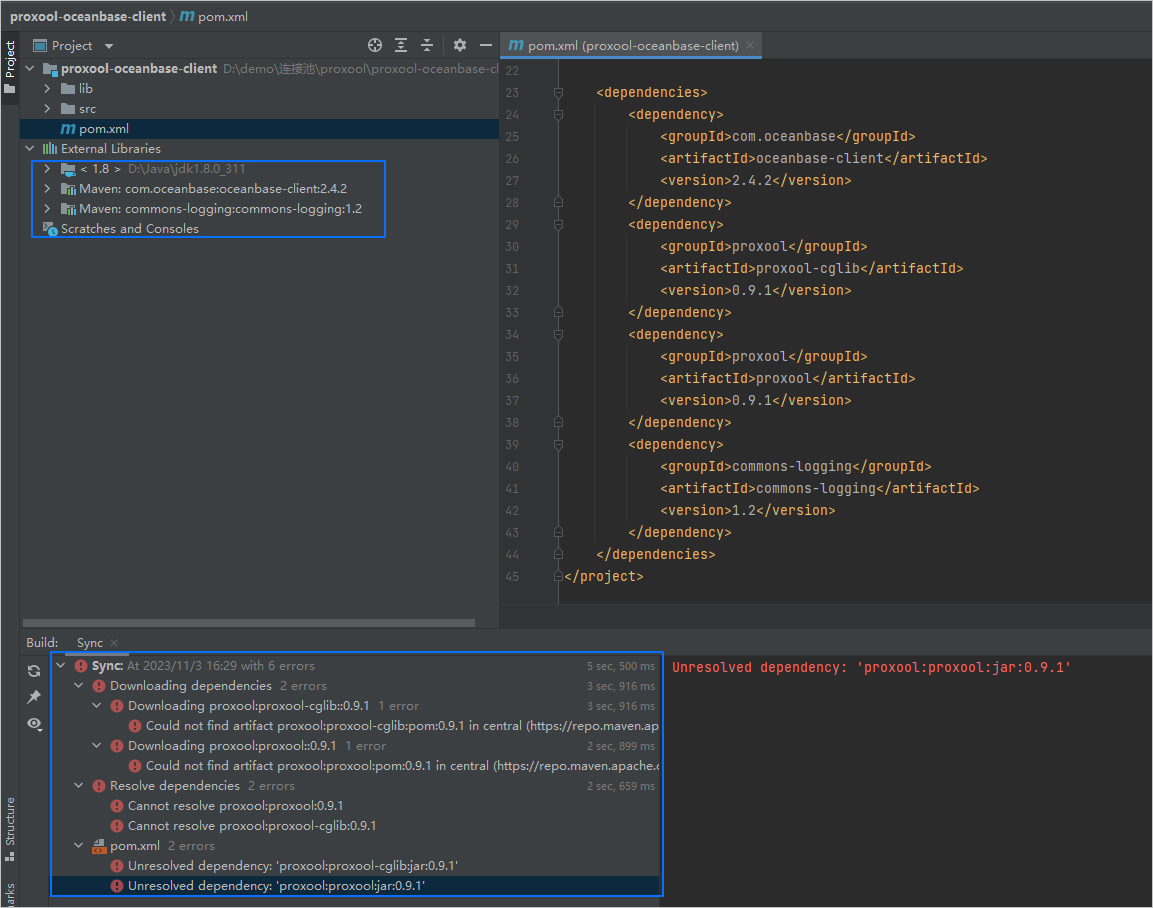

(Optional) Manually import unresolved dependencies.

If all the dependencies in the

pom.xmlfile are automatically imported to the project, you can skip this step.Based on the prompt in the Sync window of IntelliJ IDEA, you can find that the

proxool-cglibandproxooldependencies are unresolved. Theproxool-cglibandproxooljarfiles are stored in thelibfolder under the root directory of theproxool-oceanbase-clientproject. Perform the following steps to add thejarfiles to the project:- In IntelliJ IDEA, choose File > Project Structure.

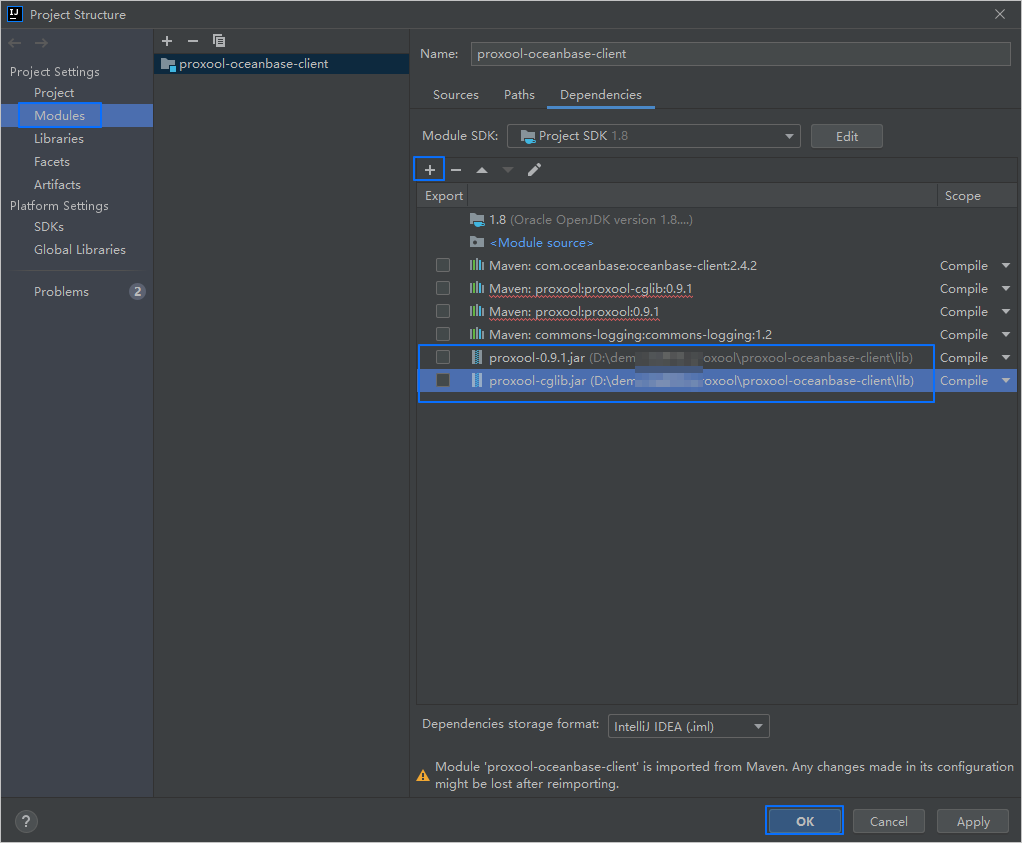

- In the left-side navigation pane, choose Modules.

- In the right-side pane, select the Dependencies tab. Click the + icon and choose JARs or directories.

- In the dialog box that appears, navigate to the

libdirectory that stores thejarfiles, select thejarfiles, and click OK. - On the Dependencies tab, you can see the newly added

jarfiles in the list. - Click Apply or OK to save the changes.

Step 2: Modify the database connection information in the proxool-oceanbase-client project

Modify the database connection information in the proxool-oceanbase-client/src/main/resources/db.properties file based on the connection string information obtained in the prerequisites.

Here is an example:

...

jdbc-1.proxool.driver-url=jdbc:oceanbase://t5******.********.oceanbase.cloud:1521/test_schema001

jdbc-1.user=test_user

jdbc-1.password=******

...

- The connection address is

t5******.********.oceanbase.cloud. - The access port is 1521.

- The name of the database to be accessed is

test_schema001. - The tenant account is

test_user. - The password is

******.

Step 3: Run the proxool-oceanbase-client project

In the project navigation pane, find and expand the src/main/java/com.example directory.

Right-click the Main file and choose Run 'Main.main()'.

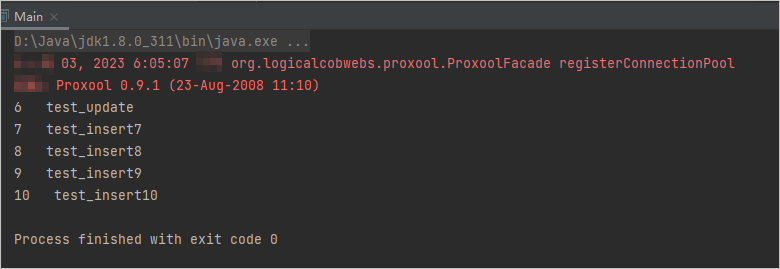

IntelliJ IDEA automatically compiles and runs the project, and displays the output result in the Run panel.

You can also execute the following SQL statement in OceanBase Client (OBClient) to view the result.

obclient [TEST_USER001]> SELECT * FROM test_schema001.test_proxool;The return result is as follows:

+------+---------------+ | C1 | C2 | +------+---------------+ | 6 | test_update | | 7 | test_insert7 | | 8 | test_insert8 | | 9 | test_insert9 | | 10 | test_insert10 | +------+---------------+ 5 rows in set

Project code introduction

Click proxool-oceanbase-client to download the project code, which is a compressed file named proxool-oceanbase-client.zip.

After decompressing it, you will find a folder named proxool-oceanbase-client. The directory structure is as follows:

proxool-oceanbase-client

├── lib

│ ├── proxool-0.9.1.jar

│ └── proxool-cglib.jar

├── src

│ └── main

│ ├── java

│ │ └── com

│ │ └── example

│ │ └── Main.java

│ └── resources

│ └── db.properties

└── pom.xml

File description:

lib: stores the dependency library files required by the project.proxool-0.9.1.jar: the Proxool connection pool library file.proxool-cglib.jar: the CGLib library file used to support the Proxool connection pool.src: the root directory for source code.main: the main code directory, containing the core logic of the application.java: the Java source code directory.com: the Java package directory.example: the package directory for the sample project.Main.java: the main class program file, containing logic for creating tables, inserting, deleting, updating, and querying data.resources: the resource file directory, containing configuration files.db.properties: the configuration file for the connection pool, containing relevant database connection parameters.pom.xml: the configuration file for the Maven project, used to manage project dependencies and build settings.

Code of pom.xml

The pom.xml file is a configuration file for Maven projects. It defines the dependencies, plugins, and build rules of the project. Maven is a Java project management tool that can automatically download dependencies, compile, and package projects.

The code in this topic's pom.xml file mainly includes the following parts:

The file declaration statement.

This statement declares that the file is an XML file, the XML version is

1.0, and the character encoding isUTF-8.Sample code:

<?xml version="1.0" encoding="UTF-8"?>The POM namespace and POM model version.

- The

xmlnsattribute specifies the POM namespace ashttp://maven.apache.org/POM/4.0.0. - The

xmlns:xsiattribute specifies the XML namespace ashttp://www.w3.org/2001/XMLSchema-instance. - The

xsi:schemaLocationattribute specifies the POM namespace ashttp://maven.apache.org/POM/4.0.0and the location of the POM XSD file ashttp://maven.apache.org/xsd/maven-4.0.0.xsd. - The

<modelVersion>element specifies the POM model version used by the POM file as4.0.0.

Sample code:

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd"> <modelVersion>4.0.0</modelVersion> <!-- Other configurations --> </project>- The

Basic information.

- The

<groupId>element specifies the project's group ID ascom.example. - The

<artifactId>element specifies the project's name asproxool-oceanbase-client. - The

<version>element specifies the project's version as1.0-SNAPSHOT.

Sample code:

<groupId>com.example</groupId> <artifactId>proxool-oceanbase-client</artifactId> <version>1.0-SNAPSHOT</version>- The

Project source file attributes.

The

maven-compiler-plugincompiler plugin is specified for Maven, and the source code and target Java versions are set to 8. This means that the project's source code is written using Java 8 features, and the compiled bytecode will also be compatible with the Java 8 runtime environment. This setting ensures that the project can correctly handle Java 8 syntax and features during compilation and runtime.Note

Java 1.8 and Java 8 are different names for the same version.

Sample code:

<build> <plugins> <plugin> <groupId>org.apache.maven.plugins</groupId> <artifactId>maven-compiler-plugin</artifactId> <configuration> <source>8</source> <target>8</target> </configuration> </plugin> </plugins> </build>Project dependencies.

The

oceanbase-clientdependency library is added to connect to and operate on the database:- The

<groupId>element specifies the dependency's group ID ascom.oceanbase. - The

<artifactId>element specifies the dependency's name asoceanbase-client. - The

<version>element specifies the dependency's version as2.4.2.

Note

This part of the code defines the project's dependency on OceanBase Connector/J V2.4.2. For information about other versions, see OceanBase JDBC Driver.

Sample code:

<dependency> <groupId>com.oceanbase</groupId> <artifactId>oceanbase-client</artifactId> <version>2.4.2</version> </dependency>- The

The

proxool-cglibdependency library is added to support the CGLib library for the Proxool connection pool:- The

<groupId>element specifies the dependency's group ID asproxool. - The

<artifactId>element specifies the dependency's name asproxool-cglib. - The

<version>element specifies the dependency's version as0.9.1.

Sample code:

<dependency> <groupId>proxool</groupId> <artifactId>proxool-cglib</artifactId> <version>0.9.1</version> </dependency>- The

The

proxooldependency library is added as the core library for the Proxool connection pool:- The

<groupId>element specifies the dependency's group ID asproxool. - The

<artifactId>element specifies the dependency's name asproxool. - The

<version>element specifies the dependency's version as0.9.1.

Sample code:

<dependency> <groupId>proxool</groupId> <artifactId>proxool</artifactId> <version>0.9.1</version> </dependency>- The

The

commons-loggingdependency library is added as a general-purpose logging library for logging in applications:- The

<groupId>element specifies the dependency's group ID ascommons-logging. - The

<artifactId>element specifies the dependency's name ascommons-logging. - The

<version>element specifies the dependency's version as1.2.

Sample code:

<dependency> <groupId>commons-logging</groupId> <artifactId>commons-logging</artifactId> <version>1.2</version> </dependency>- The

db.properties code introduction

db.properties is the connection pool configuration file used in the example in this topic. It contains the configuration attributes of the connection pool.

Note

When you configure the Proxool connection pool by using a .properties file, you must follow these rules:

- Use a custom name with

jdbcas the prefix to identify each connection pool. You can customize this name to uniquely identify each connection pool. - Proxool connection pool attributes should be prefixed with

proxool.. These attributes are used to configure the properties of the Proxool connection pool itself. - Attributes without the

jdbcprefix will be ignored and will not be used by Proxool. - Attributes without the

proxool.prefix will be passed to the actual database connection, that is, these attributes will be passed to the actual database driver.

The db.properties file in this topic is an example of a properties file used to configure the connection pool attributes of a data source named jdbc-1. It mainly contains the following parts:

Sets the alias of the data source to

TEST.Sample code:

jdbc-1.proxool.alias=TESTConfigures the database connection parameters.

- Sets the class name of the driver to

com.oceanbase.jdbc.Driver, which is the class name of the OceanBase Database JDBC driver. - Sets the database connection URL, including the host IP address, port number, and schema to be accessed.

- Sets the username of the database.

- Sets the password of the database.

Sample code:

jdbc-1.proxool.driver-class=com.oceanbase.jdbc.Driver jdbc-1.proxool.driver-url=jdbc:oceanbase://$host:$port/$schema_name jdbc-1.user=$user_name jdbc-1.password=$passwordParameter description:

$host: the connection address of the cloud database of OceanBase Database, which is specified by the-hparameter in the connection string.$port: the connection port of the cloud database of OceanBase Database, which is specified by the-Pparameter in the connection string.$schema_name: the name of the database to be accessed, which is specified by the-Dparameter in the connection string.$user_name: the username, which is specified by the-uparameter in the connection string.$password: the password, which is specified by the-pparameter in the connection string.

- Sets the class name of the driver to

Configures other Proxool connection pool parameters.

- Sets the maximum number of connections in the connection pool to 8.

- Sets the minimum number of connections in the connection pool to 5.

- Sets the number of available connections in the connection pool to 4.

- Enables the detailed mode of the connection pool to display more log information.

- Sets the statistics recording cycle of the connection pool to 10 seconds, 1 minute, and 1 day.

- Sets the log level of the connection pool statistics recording to the error level.

Sample code:

jdbc-1.proxool.maximum-connection-count=8 jdbc-1.proxool.minimum-connection-count=5 jdbc-1.proxool.prototype-count=4 jdbc-1.proxool.verbose=true jdbc-1.proxool.statistics=10s,1m,1d jdbc-1.proxool.statistics-log-level=error

Notice

The specific attribute (parameter) configurations depend on the project requirements and the characteristics of the database. We recommend that you adjust and configure the parameters based on your actual situation. For more information about Proxool connection pool parameters, see Properties.

Common configuration parameters:

Configuration |

Default value |

Description |

|---|---|---|

| alias | N/A | Specifies the alias of the connection pool. This parameter is useful for identifying a connection pool among multiple connection pools. |

| driver-class | N/A | Specifies the class name of the database driver. |

| driver-url | N/A | Specifies the database connection URL, including the host IP address, port number, schema to be accessed, and optional database driver parameters. |

| username | N/A | Specifies the database username. |

| password | N/A | Specifies the database password. |

| maximum-connection-count | 15 | Specifies the maximum number of connections in the connection pool. The default value is 15, which means the connection pool can create up to 15 connections. |

| minimum-connection-count | 5 | Specifies the minimum number of connections in the connection pool. The default value is 5, which means the connection pool will always maintain at least 5 connections. |

| prototype-count | 0 | Specifies the number of prototype connections in the connection pool. The default value is 0, which means the connection pool will not actively create additional connections. |

| verbose | false | Specifies whether to enable detailed output mode for the connection pool. The default value is false, which means quiet mode. When the verbose attribute is set to true, the connection pool will output more detailed information for debugging and monitoring. This information may include the status of the connection pool, connection creation and release, and connection usage. Enabling verbose mode helps developers better understand the operation of the connection pool and check whether connection allocation and recycling are normal. This is very useful for troubleshooting connection leaks, performance issues, and tuning. In a production environment, it is generally not recommended to set verbose to true because it generates a large amount of output information, which may affect system performance and the size of log files. Typically, it is recommended to set verbose to false and only temporarily enable it when debugging and monitoring are needed. |

| statistics | null | Specifies the sampling interval for statistics, which tracks the usage status of the connection pool. The sampling interval can be set to a comma-separated list of time units, such as 10s,15m, indicating sampling every 10 seconds and every 15 minutes. Valid units are s (seconds), m (minutes), h (hours), and d (days). The default value is null, indicating that no statistics will be collected. When the statistics attribute is set, the connection pool will periodically sample statistics such as the number of active connections, idle connections, and connection requests. The sampling interval determines the granularity and frequency of the statistics. |

| statistics-log-level | null | Specifies the log level for statistics, which determines the type of log tracking. Valid log levels are DEBUG, INFO, WARN, ERROR, and FATAL. The default value is null, indicating that no statistics logs will be recorded. When the statistics-log-level attribute is set, the connection pool will record the generated statistics at the specified log level. These statistics may include the status of the connection pool, connection creation and release, and connection usage. |

| test-after-use | N/A | Specifies whether to test a connection after it is closed. If this attribute is set to true and the house-keeping-test-sql attribute is defined, each connection will be tested after it is closed (i.e., returned to the connection pool). If the connection test fails, the connection will be discarded. After a connection is used, it is typically returned to the connection pool for reuse. The test-after-use attribute ensures that a connection is tested after it is returned to the connection pool to verify its availability and validity. Connection testing usually uses the SQL statement specified by the house-keeping-test-sql attribute. Enabling the test-after-use feature allows the connection pool to promptly detect and remove unavailable connections, preventing the application from obtaining invalid connections. This improves the stability and reliability of the application. Note that to use the test-after-use feature, the house-keeping-test-sql attribute must be set. This attribute defines the SQL statement used for connection testing. The connection pool then uses the rules defined by house-keeping-test-sql to test and evaluate connections. |

| house-keeping-test-sql | N/A | Specifies the SQL statement for testing idle connections in the connection pool. When the housekeeping thread of the connection pool detects idle connections, it uses this SQL statement to test them. The test SQL statement should be very quick to execute, such as checking the current date. If this attribute is not defined, no connection testing will be performed. For MySQL compatible mode, you can use SELECT CURRENT_DATE or SELECT 1. For Oracle compatible mode, you can use SELECT sysdate FROM DUAL or SELECT 1 FROM DUAL. |

| trace | false | Specifies whether to record log information for each SQL call. When set to true, each SQL call will be recorded in the log at the DEBUG level, along with the execution time. You can also register a ConnectionListener (see ProxoolFacade) to obtain this information. The default value is false. Enabling the trace feature may generate a large amount of log output, especially in high-concurrency and frequent SQL call scenarios. In a production environment, it is recommended to use this feature cautiously to avoid excessive logs and unnecessary impact on system performance. |

| maximum-connection-lifetime | 4 hours | Specifies the maximum lifetime of a connection. This is the maximum time (in milliseconds) a connection can exist before it is destroyed. The default value is 4 hours. The lifetime of a connection refers to the period from its creation to its destruction. By setting the maximum-connection-lifetime attribute, you can limit the maximum time a connection can remain in the connection pool, preventing resource leaks and connections from being unused for too long. |

| maximum-active-time | 5 minutes | Specifies the maximum active time for a thread. If the housekeeping thread of the connection pool detects that a thread's active time exceeds this setting, it will terminate the thread. Therefore, ensure that this attribute is set to a value greater than the expected slowest response time. The default value is 5 minutes. The housekeeping thread terminates idle threads in the connection pool that have been unused for longer than this time, ultimately retaining the number of connections specified by minimum-connection-count. The housekeeping thread checks periodically based on the house-keeping-sleep-time parameter. |

| maximum-new-connections | N/A | Specifies the maximum number of new connections that can be established simultaneously by the connection pool. This attribute is deprecated. It is recommended to use the simultaneous-build-throttle attribute instead. |

| simultaneous-build-throttle | 10 | Specifies the maximum number of connections that can be established simultaneously by the connection pool. This is the upper limit for new connections that are being established but not yet available. Since connection establishment may involve multiple threads (e.g., when connections are established on demand), and there is a delay between deciding to establish a connection and the connection becoming available, we need a way to ensure that a large number of threads do not simultaneously decide to establish connections. The simultaneous-build-throttle attribute limits the number of new connections that can be established simultaneously by the connection pool to control its concurrency. When the maximum number of concurrent connections is reached, further requests for new connections will be blocked until an available connection is available or the specified timeout is exceeded. By setting an appropriate simultaneous-build-throttle value, you can balance the concurrency and resource consumption of the connection pool. The default value is 10, meaning the connection pool can establish up to 10 connections simultaneously. |

| overload-without-refusal-lifetime | 60 | Helps determine the status of the connection pool. If connection requests are rejected within the specified time threshold (in milliseconds), it indicates that the connection pool is overloaded. The default value is 60 seconds. |

| test-before-use | N/A | Specifies whether to test each connection before it is provided to an application. If this property is set to true, each connection is tested by executing the predefined test SQL (defined by the house-keeping-test-sql property) before it is provided to an application. If the connection test fails, the connection is discarded, and the connection pool selects another available connection. If all connections fail the test, a new connection is created. If the new connection also fails the test, a SQLException is thrown.Note that for MySQL databases, you must also include the autoReconnect=true parameter in the connection parameters. Otherwise, even if test-before-use is set to true, reconnection will not be possible. |

| fatal-sql-exception | null | Specifies the messages to detect and handle SQL exceptions. It is a list of message fragments separated by commas. When a SQLException occurs, its message is compared with these message fragments. If it contains any of the message fragments (case-sensitive), it is considered a fatal SQL exception. This will result in the connection being discarded. Regardless of the situation, the exception is rethrown so that the user is informed of what occurred. You can also configure different exceptions to be thrown (see the fatal-sql-exception-wrapper-class property). The default value is null.Note that if the fatal-sql-exception-wrapper-class property is set, you can configure an alternative exception class to throw. This allows you to customize how SQL exceptions are handled. |

| fatal-sql-exception-wrapper-class | null | Specifies the exception wrapper for fatal SQL exceptions. When the fatal-sql-exception property is configured, the default behavior is to discard the exception that caused the fatal SQLException and directly throw the original exception to the user. Using this property, you can wrap the SQLException in another exception. This exception can be any class you choose, as long as it extends SQLException or RuntimeException. Proxool provides two classes for your use if you do not want to build your own exception class: FatalSQLException and FatalRuntimeException. To use these classes, set this property to org.logicalcobwebs.proxool.FatalSQLException or org.logicalcobwebs.proxool.FatalRuntimeException as needed. The default value is null, indicating that fatal SQLException is not wrapped. The default value is null.Note that the exception wrapper class must be a subclass of SQLException or RuntimeException. |

| house-keeping-sleep-time | 30 seconds | Specifies the sleep time for the maintenance thread (house keeping thread) of the connection pool. The maintenance thread is responsible for checking the status of all connections and determining whether to destroy or create connections. The default value is 30 seconds, meaning the maintenance thread will execute maintenance tasks every 30 seconds. |

| injectable-connection-interface | N/A | Allows Proxool to implement the methods defined in the delegated Connection object. |

| injectable-statement-interface | N/A | Allows Proxool to implement the methods defined in the delegated Statement object. |

| injectable-prepared-statement-interface | N/A | Allows Proxool to implement the methods defined in the delegated PreparedStatement object. |

| injectable-callable-statement-interface | N/A | Allows Proxool to implement the methods defined in the delegated CallableStatement object. |

| jndi-name | N/A | Specifies the name under which the connection pool is registered in JNDI (Java Naming and Directory Interface). |

Introduction to Main.java

The Main.java file is part of the sample program, which demonstrates how to obtain a database connection through a Proxool connection pool and perform a series of database operations, including creating tables, inserting data, deleting data, updating data, querying data, and printing the query results.

The code in the Main.java file in this topic mainly includes the following parts:

Import the required classes and interfaces.

Define the package where the code is located and import the classes and interfaces related to Proxool and JDBC. These classes are used to implement the configuration and management of the database connection pool and to execute SQL statements. By using the Proxool connection pool, you can improve the performance and reliability of database operations. The specific steps are as follows:

- Define the package where the code is located as

com.example, which is used to store the current Java class. - Import the

org.logicalcobwebs.proxool.configuration.PropertyConfiguratorclass, which is used to configure Proxool. - Import the

java.io.InputStreamclass, which is used to read the configuration file. - Import the

java.sql.Connectionclass, which is used to obtain a database connection. - Import the

java.sql.DriverManagerclass, which is used to obtain a database connection. - Import the

java.sql.ResultSetclass, which is used to obtain query results. - Import the

java.sql.Statementclass, which is used to execute SQL statements. - Import the

java.util.Propertiesclass, which is used to load and read the configuration file.

Sample code:

package com.example; import org.logicalcobwebs.proxool.configuration.PropertyConfigurator; import java.io.InputStream; import java.sql.Connection; import java.sql.DriverManager; import java.sql.ResultSet; import java.sql.Statement; import java.util.Properties;- Define the package where the code is located as

Define the class name and method.

Define the entry method of the Java program. In this method, the database connection information is obtained by reading the configuration file. After the database connection is established by using the Proxool driver, the defined methods are called in sequence to execute DDL statements, DML statements, and query statements. The exceptions that may occur are captured and printed. The purpose of this code is to execute database-related operations and record logs by using a logger. The specific steps are as follows:

Define a public class named Main.

Define a private static constant named

DB_PROPERTIES_FILE, which indicates the path of the database configuration (property) file. This constant can be referenced in the code to load and read the property file.Define a public static method

main, which is the starting point of the program.Define a code block for capturing exceptions that may occur.

- Create a

Propertiesobject to read the properties in the configuration file. - Use the class loader of the Main class to obtain the input stream of the configuration file.

- Load the configuration file by using the loaded input stream and load the properties into the

Propertiesobject. - Configure the connection pool by using the loaded properties.

- Dynamically load the Proxool database driver.

- Establish a database connection by using the Proxool driver.

- Create a

Statementobject. - Call the defined method

executeDDLStatements()to execute DDL statements, which are statements for creating tables. - Call the defined method

executeDMLStatements()to execute DML statements, which are statements for inserting, updating, and deleting data. - Call the defined method

executeQueryStatements()to execute query statements and obtain data.

- Create a

Capture and print the exception information that may occur.

Define methods for creating tables, executing DML statements, and querying data.

Sample code:

public class Main { private static final String DB_PROPERTIES_FILE = "/db.properties"; public static void main(String[] args) { try { Properties properties = new Properties(); InputStream is = Main.class.getResourceAsStream(DB_PROPERTIES_FILE); properties.load(is); PropertyConfigurator.configure(properties); Class.forName("org.logicalcobwebs.proxool.ProxoolDriver"); try (Connection conn = DriverManager.getConnection("proxool.TEST"); Statement stmt = conn.createStatement()) { executeDDLStatements(stmt); executeDMLStatements(stmt); executeQueryStatements(stmt); } } catch (Exception e) { e.printStackTrace(); } } // Define the method for creating tables. // Define the method for executing DML statements. // Define the method for querying data. }Define the method for creating tables.

Define a private static method

executeDDLStatements()to execute DDL (data definition language) statements, including statements for creating tables. The specific steps are as follows:- Define a private static method

executeDDLStatements()that receives aStatementobject as a parameter and may throw anExceptionexception. - Use the

execute()method to execute SQL statements and create a table namedtest_proxool, which has two columns,c1andc2, of theNUMBERandVARCHAR2(32)types, respectively.

Sample code:

private static void executeDDLStatements(Statement stmt) throws Exception { stmt.execute("CREATE TABLE test_proxool (c1 NUMBER, c2 VARCHAR2(32))"); }- Define a private static method

Define the method for executing DML statements.

Define a private static method

executeDMLStatements()to execute DML (data manipulation language) statements, including statements for inserting, deleting, and updating data. The specific steps are as follows:- Define a private static method

executeDMLStatements()that receives aStatementobject as a parameter and throws anExceptionexception if an exception occurs during execution. - Use a

forloop to iterate from 1 to 10. In the loop, use theexecute()method to execute SQL insert statements and insert the variableiand the corresponding string value into thetest_proxooltable. - Execute an SQL delete statement to delete rows from the

test_proxooltable where the value of thec1column is less than or equal to 5. - Execute an SQL update statement to update the value of the

c2column totest_updatefor rows in thetest_proxooltable where the value of thec1column is 6.

Sample code:

private static void executeDMLStatements(Statement stmt) throws Exception { for (int i = 1; i <= 10; i++) { stmt.execute("INSERT INTO test_proxool VALUES (" + i + ",'test_insert" + i + "')"); } stmt.execute("DELETE FROM test_proxool WHERE c1 <= 5"); stmt.execute("UPDATE test_proxool SET c2 = 'test_update' WHERE c1 = 6"); }- Define a private static method

Define a method for querying data.

Define a private static method

executeQueryStatements()to executeSELECTqueries and process the results. The steps are as follows:- Define a private static method

executeQueryStatements()that receives aStatementobject as a parameter. If an exception occurs during execution, the method will throw anException. - Use the

executeQuery()method to execute theSELECTquery statement and store the results in aResultSetobjectrs. In this case, the query returns all data from thetest_proxooltable. Use thetry-with-resourcesstatement to ensure that theResultSetis automatically closed after it is no longer needed. - Use a

whileloop and thenext()method to iterate through each row of data in theResultSetobjectrs. In each iteration, thers.next()method moves the pointer to the next row in the result set. If there is another row of data available, this method returnstrue; otherwise, it returnsfalse. In thewhileloop, as long asrs.next()returnstrue, it indicates that there are more rows of data available. The code within the loop will execute and process the data of the current row. Once all rows of data have been processed,rs.next()will returnfalse, and the loop will end. - Use the

getInt()andgetString()methods to retrieve the values of specified columns in the current row and print them to the console. In this case, the values of thec1andc2columns are printed. ThegetInt()method is used to retrieve integer values, and thegetString()method is used to retrieve string values.

Code:

private static void executeQueryStatements(Statement stmt) throws Exception { try (ResultSet rs = stmt.executeQuery("SELECT * FROM test_proxool")) { while (rs.next()) { System.out.println(rs.getInt("c1") + " " + rs.getString("c2")); } } }- Define a private static method

Complete code

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>com.oceanbase</groupId>

<artifactId>proxool-oceanbase-client</artifactId>

<version>1.0-SNAPSHOT</version>

<build>

<plugins>

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-compiler-plugin</artifactId>

<configuration>

<source>8</source>

<target>8</target>

</configuration>

</plugin>

</plugins>

</build>

<dependencies>

<dependency>

<groupId>com.oceanbase</groupId>

<artifactId>oceanbase-client</artifactId>

<version>2.4.2</version>

</dependency>

<dependency>

<groupId>proxool</groupId>

<artifactId>proxool-cglib</artifactId>

<version>0.9.1</version>

</dependency>

<dependency>

<groupId>proxool</groupId>

<artifactId>proxool</artifactId>

<version>0.9.1</version>

</dependency>

<dependency>

<groupId>commons-logging</groupId>

<artifactId>commons-logging</artifactId>

<version>1.2</version>

</dependency>

</dependencies>

</project>

#alias: the alias of the data source

jdbc-1.proxool.alias=TEST

#driver-class: driver name

jdbc-1.proxool.driver-class=com.oceanbase.jdbc.Driver

#driver-url: url connection string, username and password must be determined

jdbc-1.proxool.driver-url=jdbc:oceanbase://$host:$port/$schema_name

jdbc-1.user=$user_name

jdbc-1.password=$password

#The maximum number of database connections. The default is 15

jdbc-1.proxool.maximum-connection-count=8

#The minimum number of database connections, defaults to 5

jdbc-1.proxool.minimum-connection-count=5

#The number of available connections in the Connection pool. If the number of connections in the current Connection pool is less than this value, new connections will be established (assuming that the maximum number of available connections is not exceeded). For example, if we have three active connections and two available connections, and our prototype count is 4, the database Connection pool will try to establish another two connections. This is different from the minimum connection count Minimum connection count also counts active connections. Prototype count is the number of spare connections

jdbc-1.proxool.prototype-count=4

#verbose: detailed information settings. Parameter bool value

jdbc-1.proxool.verbose=true

#statistics: connection pool usage statistics. Parameter "10s, 1m, 1d"

jdbc-1.proxool.statistics=10s,1m,1d

#statistics-log-level: log statistics tracking type. Parameter 'ERROR' or 'INFO'

jdbc-1.proxool.statistics-log-level=error

package com.example;

import org.logicalcobwebs.proxool.configuration.PropertyConfigurator;

import java.io.InputStream;

import java.sql.Connection;

import java.sql.DriverManager;

import java.sql.ResultSet;

import java.sql.Statement;

import java.util.Properties;

public class Main {

private static final String DB_PROPERTIES_FILE = "/db.properties";

public static void main(String[] args) {

try {

Properties properties = new Properties();

InputStream is = Main.class.getResourceAsStream(DB_PROPERTIES_FILE);

properties.load(is);

PropertyConfigurator.configure(properties);

Class.forName("org.logicalcobwebs.proxool.ProxoolDriver");

try (Connection conn = DriverManager.getConnection("proxool.TEST");

Statement stmt = conn.createStatement()) {

executeDDLStatements(stmt);

executeDMLStatements(stmt);

executeQueryStatements(stmt);

}

} catch (Exception e) {

e.printStackTrace();

}

}

private static void executeDDLStatements(Statement stmt) throws Exception {

stmt.execute("CREATE TABLE test_proxool (c1 NUMBER, c2 VARCHAR2(32))");

}

private static void executeDMLStatements(Statement stmt) throws Exception {

for (int i = 1; i <= 10; i++) {

stmt.execute("INSERT INTO test_proxool VALUES ("+ i +",'test_insert" + i + "')");

}

stmt.execute("DELETE FROM test_proxool WHERE c1 <= 5");

stmt.execute("UPDATE test_proxool SET c2 = 'test_update' WHERE c1 = 6");

}

private static void executeQueryStatements(Statement stmt) throws Exception {

try (ResultSet rs = stmt.executeQuery("SELECT * FROM test_proxool")) {

while (rs.next()) {

System.out.println(rs.getInt("c1") + " " + rs.getString("c2"));

}

}

}

}

References

- For more information about OceanBase Connector/J, see OceanBase JDBC driver.

- For more information about using the Proxool connection pool, see Introduction for Users.