This topic describes how to deploy OceanBase Migration Service (OMS) on multiple nodes in multiple regions by using the deployment tool.

Background information

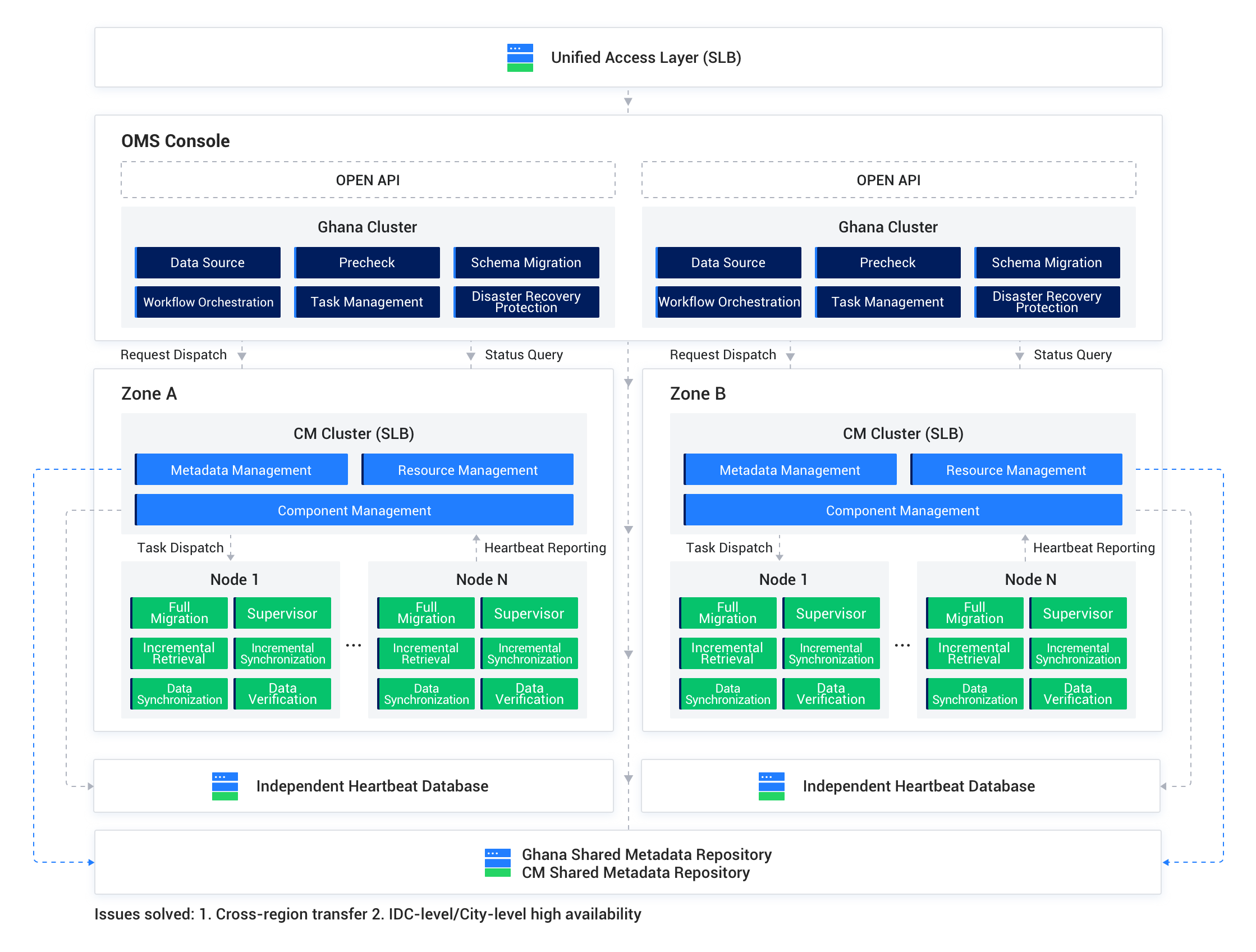

As more users apply OMS in data migration, OMS must adapt to increasingly diverse scenarios. In addition to single-region data migration and data synchronization, OMS supports data synchronization across regions, data migration between IDCs in different regions, and active-active data synchronization.

You can deploy OMS on one or more nodes in each region. OMS can be deployed on multiple nodes in a region to build an environment with high availability. In this way, OMS can start components on appropriate nodes based on the tasks.

For example, if you want to synchronize data from the Hangzhou region to the Heilongjiang region, OMS starts the Store component on a node in the Hangzhou region to collect incremental logs and starts the Incr-Sync component on a node in the Heilongjiang region to synchronize incremental data.

Observe the following notes on multi-node deployment:

You can deploy OMS on a single node first and then scale out to multiple nodes. For more information, see Scale out OMS.

To deploy OMS on multiple nodes across multiple regions, you must apply for a virtual IP address (VIP) for each region and use it as the mount point for the OMS console. In addition, you must configure the mapping rules for Ports 8088 and 8089 in the VIP-based network strategy.

You can use the VIP to access the OMS console even if an OMS node fails.

OMS V4.3.1 and later support setting a primary region when you deploy OMS across multiple regions. After you set the primary region, the management process is started only in the primary region. Other regions do not start the management process.

Notice

You must deploy the primary region first before deploying other regions.
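The VIP requirement in the notes above can be checked before deployment. The following is a minimal sketch, not part of OMS: `vip_endpoints` and `check_vip` are hypothetical helper names, and the sketch assumes that `curl` is installed on the host and that the VIP answers over plain HTTP.

```shell
#!/bin/sh
# Print the two endpoints that the VIP of a region is expected to expose:
# port 8088 (program calls) and port 8089 (web console).
vip_endpoints() (
    vip="$1"
    for port in 8088 8089; do
        printf 'http://%s:%s\n' "$vip" "$port"
    done
)

# Probe each endpoint. curl exits non-zero when no connection can be made.
# Nothing runs automatically: call check_vip <VIP> from a host that can reach the VIP.
check_vip() (
    vip_endpoints "$1" | while read -r url; do
        if curl -s -o /dev/null --connect-timeout 3 "$url"; then
            echo "reachable:   $url"
        else
            echo "unreachable: $url"
        fi
    done
)
```

Before OMS is deployed, both ports are expected to be unreachable. The probe is more useful after deployment to confirm that the port mapping rules of the VIP are in place.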

Prerequisites

The installation environment meets the system and network requirements. For more information, see System and network requirements.

You have created a resource manager (RM) database, as well as a cluster manager (CM) database and a heartbeat database for each region for the MetaDB of OMS.

The server to deploy OMS can connect to all other servers.

All servers involved in the multi-node deployment can connect to each other and you can obtain root permissions on a node by using its username and password.

You have obtained the installation package of OMS, which is generally a tar.gz file whose name starts with oms.

You have downloaded the OMS installation package and loaded it to the local image repository of the Docker container on each server node.

docker load -i <OMS installation package>

You have prepared a directory for mounting the OMS container. In the mount directory, OMS will create the /home/admin/logs, /home/ds/store, and /home/ds/run directories for storing the component information and logs generated during the running of OMS.

(Optional) You have prepared a time-series database for storing performance monitoring data and DDL/DML statistics of OMS.

Terms

You need to replace variable names in some commands and prompts. A variable name is enclosed with angle brackets (<>).

OMS container mount directory: See the description of the mount directory in the "Prerequisites" section of this topic.

IP address of the server: the IP address of the host that executes the script.

OMS_IMAGE: the unique identifier of the loaded image. After you load the OMS installation package by using Docker, run the docker images command to obtain the [IMAGE ID] or [REPOSITORY:TAG] of the loaded image. The obtained value is the unique identifier of the loaded image. Here is an example:

$ sudo docker images
REPOSITORY                                    TAG             IMAGE ID
work.oceanbase-dev.com/obartifact-store/oms   feature_3.4.0   2a6a77257d35

In this example, <OMS_IMAGE> can be work.oceanbase-dev.com/obartifact-store/oms:feature_3.4.0 or 2a6a77257d35. Replace the value of <OMS_IMAGE> in related commands with the preceding value.

Directory of the config.yaml file: If you want to deploy OMS based on an existing config.yaml configuration file, this directory is the one where the configuration file is located.
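As an illustration of how the value of <OMS_IMAGE> can be derived, the sketch below reads `docker images` style output and prints REPOSITORY:TAG for OMS rows. The function name `oms_image_refs` is hypothetical, and the filter assumes that the repository name is `oms` or ends in `/oms`, as in the example above. Adjust it if your image is named differently.

```shell
#!/bin/sh
# Read `docker images` style output on stdin, skip the header row, and print
# REPOSITORY:TAG for every row whose repository is "oms" or ends in "/oms".
oms_image_refs() {
    awk 'NR > 1 && ($1 == "oms" || $1 ~ /\/oms$/) { print $1 ":" $2 }'
}

# Typical use on a host where the image is loaded:
#   sudo docker images | oms_image_refs
```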

Deployment procedure without a configuration file

To modify the configuration after deployment, perform the following steps:

Notice

If you deploy OMS on multiple nodes in multiple regions, you must manually modify the configuration of each node.

Log in to the OMS container.

Modify the config.yaml file in the /home/admin/conf/ directory as needed.

Initialize the metadata.

sh /root/docker_init.sh

Integrated deployment mode

Log in to the server where OMS is to be deployed.

(Optional) Deploy a time-series database.

If you need to collect and display OMS monitoring data, deploy a time-series database. Otherwise, you can skip this step. For more information, see Deploy a time-series database.

Run the following command to obtain the deployment script docker_remote_deploy.sh from the loaded image:

sudo docker run -d --name oms-config-tool <OMS_IMAGE> bash && sudo docker cp oms-config-tool:/root/docker_remote_deploy.sh . && sudo docker rm -f oms-config-tool

Here is an example:

sudo docker run -d --net host --name oms-config-tool work.oceanbase-dev.com/obartifact-store/oms:feature_3.4.0 bash && sudo docker cp oms-config-tool:/root/docker_remote_deploy.sh . && sudo docker rm -f oms-config-tool

Use the deployment script to start the deployment tool.

sh docker_remote_deploy.sh -o <Mount directory of the OMS container> -i <IP address of the server> -d <OMS_IMAGE>

Complete the deployment for the first region as prompted. After you set each parameter, press Enter to move on to the next parameter.

Select a deployment mode.

Select Multiple Regions.

Select a task.

Select No configuration file. Deploy OMS for the first time. Start from generating the configuration file.

Determine whether to deploy the RM database and CM database separately. By default, they do not need to be deployed separately.

If yes, enter y.

Configure the MetaDB for storing the metadata generated during the running of OMS.

Enter the IP address, port, username, and password of the MetaDB.

If you need to deploy the RM database and CM database separately, enter the IP address, port, username, and password of the RM database and CM database.

Set a prefix for names of databases in the MetaDB.

For example, when the prefix is set to oms, the databases in the MetaDB are named oms_rm, oms_cm, and oms_cm_hb.

Confirm your settings.

If the settings are correct, enter y and press Enter to proceed. Otherwise, enter n and press Enter to modify the settings.

If the system displays "Database name already exists in the MetaDB. Continue?", it indicates that the database names you specified already exist in the MetaDB. This may be caused by repeated deployment or upgrade of OMS. You can enter y and press Enter to proceed, or enter n and press Enter to modify the settings.
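The prefix rule described in this step can be sketched as follows. `metadb_names` is a hypothetical helper, not something shipped with OMS. It only reproduces the naming pattern from the example above so that you can check in advance whether the names are already taken in the MetaDB.

```shell
#!/bin/sh
# Print the three database names that result from a given prefix,
# following the pattern <prefix>_rm, <prefix>_cm, <prefix>_cm_hb.
metadb_names() (
    prefix="$1"
    printf '%s_rm\n%s_cm\n%s_cm_hb\n' "$prefix" "$prefix" "$prefix"
)

# Example: metadb_names oms
```

You can compare the printed names with the output of `SHOW DATABASES` in the MetaDB to anticipate the "Database name already exists" prompt.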

Perform the following operations to configure the OMS cluster settings:

Enter the region ID, for example, cn-hangzhou.

Enter the region ID for the parameter cm_region_cn. It is the same as cm_region, for example, cn-hangzhou.

Specify the URL of the CM service, which is the VIP or domain name to which all CM servers in the region are mounted. The original parameter is cm-url.

Enter the VIP or domain name as the URL of the CM service. You can separately specify the IP address and port number in the URL, or use a colon (:) to join the IP address and port number in the <IP address>:<port number> format.

Note

The http:// prefix in the URL is optional.

Enter the IP addresses of all servers in the region. Separate them with commas (,).

Set a region ID for the current region (Region name in Chinese). Value range: [0,127].

An ID uniquely identifies a region.

Confirm whether the displayed OMS cluster settings are correct.

If yes, enter y and press Enter to proceed. Otherwise, enter n and press Enter to modify the settings.
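Because the CM service URL can be entered with or without the http:// prefix, it can help to normalize the value before you type it at the prompt. The sketch below is an assumption about a reasonable pre-check, not the validation that the deployment tool itself performs. `normalize_cm_url` is a hypothetical name.

```shell
#!/bin/sh
# Accept "<host>:<port>" with or without a leading "http://" and print
# "http://<host>:<port>". Return non-zero for anything else.
normalize_cm_url() (
    url="${1#http://}"                 # drop an optional http:// prefix
    case "$url" in
        */*) echo "unexpected scheme or path: $1" >&2; exit 1 ;;
        *:*) ;;
        *)   echo "expected <host>:<port>: $1" >&2; exit 1 ;;
    esac
    host="${url%%:*}"
    port="${url##*:}"
    [ -n "$host" ] || { echo "host is empty" >&2; exit 1; }
    case "$port" in
        ''|*[!0-9]*) echo "port must be numeric: $port" >&2; exit 1 ;;
    esac
    printf 'http://%s:%s\n' "$host" "$port"
)
```

For example, `normalize_cm_url 10.0.0.1:8088` and `normalize_cm_url http://10.0.0.1:8088` print the same value. The address 10.0.0.1 is a placeholder.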

Determine whether to monitor historical data of OMS.

If you have deployed a time-series database in Step 2, enter y and press Enter to go to the step of configuring the time-series database and enable monitoring for OMS historical data.

If you chose not to deploy a time-series database in Step 2, enter n and press Enter to go to the step of determining whether to enable the audit log feature and configure Simple Log Service (SLS) parameters. In this case, OMS does not monitor the historical data after deployment.

Configure the time-series database.

Perform the following operations:

Confirm whether you have deployed a time-series database.

Enter the value based on the actual situation. If yes, enter y and press Enter. If no, enter n and press Enter to go to the step of determining whether to enable the audit log feature and set SLS parameters.

Set the type of the time-series database to INFLUXDB.

Notice

At present, only INFLUXDB is supported.

Enter the URL, username, and password of the time-series database. For more information, see Deploy a time-series database.

Confirm whether the displayed settings are correct.

If yes, enter y and press Enter to proceed. Otherwise, enter n and press Enter to modify the settings.

Determine whether to enable the audit log feature and write the audit logs to SLS.

To enable the audit log feature, enter y and press Enter to go to the next step to specify the SLS parameters.

Otherwise, enter n and press Enter to start the deployment. In this case, OMS does not audit the logs after deployment.

Specify the SLS parameters.

Set the SLS parameters as prompted.

Confirm whether the displayed settings are correct.

If yes, enter y and press Enter to proceed. Otherwise, enter n and press Enter to modify the settings.

If the configuration file passes the check, all the settings are displayed. If the settings are correct, enter n and press Enter to proceed. Otherwise, enter y and press Enter to modify the settings.

If the configuration file fails the check, modify the settings as prompted.

Start the deployment on each node one after another.

Specify the directory to which the OMS container is mounted on the node.

Specify a directory with a large capacity.

For a remote node, the username and password for logging in to the remote node are required. The corresponding user account must have the sudo privilege on the remote node.

Confirm whether the OMS image file can be named <OMS_IMAGE>.

If yes, enter y and press Enter to proceed. Otherwise, enter n and press Enter to modify the settings.

Determine whether to mount an SSL certificate to the OMS container.

If yes, enter y, press Enter, and specify the https_key and https_crt directories as prompted. Otherwise, enter n and press Enter.

Start deployment for a new region as prompted after you complete the deployment for the first region.

Determine whether to deploy OMS in a new region.

After the deployment is completed, the system displays "OMS has been deployed in Regions [<Region ID 1>,<Region ID 2>…]. Do you want to deploy OMS in a new region?"

If yes, enter y and press Enter to proceed. If no, enter n and press Enter to end the deployment process.

Enter the OMS cluster configuration information as prompted and confirm the settings.

Determine whether to separately deploy the RM database and the CM database for the new region. By default, they do not need to be deployed separately.

If you need to deploy the RM database and CM database separately, enter the IP address, port, username, and password of the CM database for the new region as prompted.

Deploy the nodes in this region one by one as prompted.

If the deployment fails, you can log in to the OMS container and view logs in the .log files prefixed with docker_init in the /home/admin/logs directory. If the OMS container fails to be started, you cannot obtain logs.
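To locate the initialization logs mentioned above, a small helper such as the following can be run inside the OMS container. `init_logs` is a hypothetical name. The default directory is the one named in this topic, and the file name pattern `docker_init*.log` is inferred from the description "`.log` files prefixed with `docker_init`".

```shell
#!/bin/sh
# List docker_init*.log files in a log directory, newest first.
# Prints nothing and returns non-zero when no such file exists.
init_logs() (
    log_dir="${1:-/home/admin/logs}"
    ls -t "$log_dir"/docker_init*.log 2>/dev/null
)

# Example (inside the container): show the end of the newest log.
#   tail -n 50 "$(init_logs | head -n 1)"
```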

Determine whether to deploy OMS in a new region.

After the deployment is completed, the system displays "OMS has been deployed in Regions [<Region ID 1>,<Region ID 2>…]. Do you want to deploy OMS in a new region?"

If yes, enter y and press Enter to proceed. If no, enter n and press Enter to end the deployment process.
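The script retrieval and launch commands of this procedure can be previewed with concrete values before you run them. The sketch below only prints the commands and executes nothing. `print_integrated_deploy` is a hypothetical helper, and the mount directory, IP address, and image ID in the usage line are placeholders.

```shell
#!/bin/sh
# Print the commands of the integrated-mode procedure for review.
# Arguments: <mount directory> <IP address of the server> <OMS_IMAGE>
print_integrated_deploy() (
    mount_dir="$1"; host_ip="$2"; image="$3"
    cat <<EOF
sudo docker run -d --name oms-config-tool $image bash
sudo docker cp oms-config-tool:/root/docker_remote_deploy.sh .
sudo docker rm -f oms-config-tool
sh docker_remote_deploy.sh -o $mount_dir -i $host_ip -d $image
EOF
)

# Example: print_integrated_deploy /data/oms 10.0.0.1 2a6a77257d35
```

Printing the commands first makes it easier to confirm that the same image identifier is used for extracting the script and for the `-d` option.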

Separate deployment mode

Log in to the server where OMS is to be deployed.

(Optional) Deploy a time-series database.

If you need to collect and display OMS monitoring data, deploy a time-series database. Otherwise, you can skip this step. For more information, see Deploy a time-series database.

Run the following command to obtain the deployment script docker_remote_deploy_v2.sh from the loaded image:

sudo docker run -d --net host --name oms-config-tool <management image or component image> bash && sudo docker cp oms-config-tool:/root/docker_remote_deploy_v2.sh . && sudo docker rm -f oms-config-tool

Here is an example:

sudo docker run -d --net host --name oms-config-tool 0719**** bash && sudo docker cp oms-config-tool:/root/docker_remote_deploy_v2.sh . && sudo docker rm -f oms-config-tool

Use the deployment script to start the deployment tool.

sh docker_remote_deploy_v2.sh -o <OMS container mount directory> -i <IP address of the server> -v <management image> -s <component image>

Here is an example:

sh docker_remote_deploy_v2.sh -o /home/l****.***/l****_oms_run_022102 -i xxx.xxx.xxx.1 -v 0719**** -s 188a****

Complete the deployment for the first region as prompted. After you set each parameter, press Enter to move on to the next parameter.

Select a deployment mode.

Select Multiple Regions.

Select a task.

Select No configuration file. Deploy OMS for the first time. Start from generating the configuration file.

Determine whether to deploy the RM database and CM database separately. By default, they do not need to be deployed separately.

If yes, enter y.

Configure the MetaDB for storing the metadata generated during the running of OMS.

Enter the IP address, port, username, and password of the MetaDB.

If you need to deploy the RM database and CM database separately, enter the IP address, port, username, and password of the RM database and CM database.

Set a prefix for names of databases in the MetaDB.

For example, when the prefix is set to oms, the databases in the MetaDB are named oms_rm, oms_cm, and oms_cm_hb.

Confirm your settings.

If the settings are correct, enter y and press Enter to proceed. Otherwise, enter n and press Enter to modify the settings.

If the system displays "Database name already exists in the MetaDB. Continue?", it indicates that the database names you specified already exist in the MetaDB. This may be caused by repeated deployment or upgrade of OMS. You can enter y and press Enter to proceed, or enter n and press Enter to modify the settings.

Perform the following operations to configure the OMS cluster settings:

Enter the region ID, for example, cn-hangzhou.

Enter the region ID for the parameter cm_region_cn. It is the same as cm_region, for example, cn-hangzhou.

Specify the URL of the CM service, which is the VIP or domain name to which all CM servers in the region are mounted. The original parameter is cm-url.

Enter the VIP or domain name as the URL of the CM service. You can separately specify the IP address and port number in the URL, or use a colon (:) to join the IP address and port number in the <IP address>:<port number> format. For example, enter xxx.xxx.xxx.1:8088.

Note

The http:// prefix in the URL is optional.

Enter the IP addresses of all management nodes in the region. Separate them with commas (,).

For example, enter the IP address of one management node: xxx.xxx.xxx.1.

Enter the IP addresses of all component nodes in the region. Separate them with commas (,).

For example, enter the IP addresses of two component nodes: xxx.xxx.xxx.1,xxx.xxx.xxx.2.

Set a region ID for the current region (Region name in Chinese). Value range: [0,127].

An ID uniquely identifies a region.

Confirm whether the current region is the primary region.

If yes, enter y and press Enter to proceed. In the OMS cluster settings of the next step, primary_region_ip will be empty.

If no, enter n and press Enter. Then, you need to enter the IP address of the primary region. In the OMS cluster settings of the next step, primary_region_ip will display the IP address of the primary region.

Confirm whether the displayed OMS cluster settings are correct.

If yes, enter y and press Enter to proceed. Otherwise, enter n and press Enter to modify the settings.
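The cluster settings entered in this step follow simple rules: the numeric region ID must lie in [0,127], and node lists are comma-separated. The following sketch checks both rules before you answer the prompts. `valid_region_id` and `split_nodes` are hypothetical helpers, and the checks are an assumption about useful pre-validation rather than a copy of what the deployment tool does.

```shell
#!/bin/sh
# Succeed only when the argument is an integer in the range [0,127].
valid_region_id() {
    case "$1" in
        ''|*[!0-9]*) return 1 ;;
    esac
    [ "$1" -ge 0 ] && [ "$1" -le 127 ]
}

# Print one node per line from a comma-separated list.
# Reject empty lists and empty entries such as "a,,b" or "a,".
split_nodes() (
    list="$1"
    case ",$list," in
        *,,*) echo "empty entry in node list" >&2; exit 1 ;;
    esac
    printf '%s\n' "$list" | tr ',' '\n'
)
```

For example, `split_nodes 10.0.0.1,10.0.0.2` prints two lines, which you can compare with the number of component nodes that you plan to deploy.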

Determine whether to monitor historical data of OMS.

If you have deployed a time-series database in Step 2, enter y and press Enter to go to the step of configuring the time-series database and enable monitoring for OMS historical data.

If you chose not to deploy a time-series database in Step 2, enter n and press Enter to go to the step of determining whether to enable the audit log feature and configure Simple Log Service (SLS) parameters. In this case, OMS does not monitor the historical data after deployment.

Configure the time-series database.

Perform the following operations:

Confirm whether you have deployed a time-series database.

Enter the value based on the actual situation. If yes, enter y and press Enter. If no, enter n and press Enter to go to the step of determining whether to enable the audit log feature and set SLS parameters.

Set the type of the time-series database to INFLUXDB.

Notice

At present, only INFLUXDB is supported.

Enter the URL, username, and password of the time-series database. For more information, see Deploy a time-series database.

Confirm whether the displayed settings are correct.

If yes, enter y and press Enter to proceed. Otherwise, enter n and press Enter to modify the settings.

Determine whether to enable the audit log feature and write the audit logs to SLS.

To enable the audit log feature, enter y and press Enter to go to the next step to specify the SLS parameters.

Otherwise, enter n and press Enter to start the deployment. In this case, OMS does not audit the logs after deployment.

Specify the SLS parameters.

Set the SLS parameters as prompted.

Confirm whether the displayed settings are correct.

If yes, enter y and press Enter to proceed. Otherwise, enter n and press Enter to modify the settings.

If the configuration file passes the check, all the settings are displayed. If the settings are correct, enter n and press Enter to proceed. Otherwise, enter y and press Enter to modify the settings.

Deploy the management nodes one by one as prompted.

Enter the mount directory on the management node for deploying the OMS container. Specify a directory with a large capacity.

For a remote node, the username and password for logging in to the remote node are required. The corresponding user account must have the sudo privilege on the remote node.

Confirm whether the OMS image file can be named <OMS_IMAGE>.

If yes, enter y and press Enter to proceed. Otherwise, enter n and press Enter to modify the settings.

Determine whether to mount an SSL certificate to the OMS container.

If yes, enter y, press Enter, and specify the https_key and https_crt directories as prompted. Otherwise, enter n and press Enter.

Confirm whether the path to which the config.yaml configuration file will be written is correct.

If yes, enter y and press Enter to proceed. Otherwise, enter n and press Enter to modify the settings.

Start to deploy the management nodes.

To deploy multiple management nodes, complete the deployment on one server and then another until all management nodes are deployed.

Deploy the component nodes one by one as prompted.

Specify the directory to which the OMS container is mounted on the component node.

Specify a directory with a large capacity.

For a remote node, the username and password for logging in to the remote node are required. The corresponding user account must have the sudo privilege on the remote node.

Confirm whether the OMS image file can be named <OMS_IMAGE>.

If yes, enter y and press Enter to proceed. Otherwise, enter n and press Enter to modify the settings.

Determine whether to mount an SSL certificate to the OMS container.

If yes, enter y, press Enter, and specify the https_key and https_crt directories as prompted. Otherwise, enter n and press Enter.

Confirm whether the path to which the config.yaml configuration file will be written is correct.

If yes, enter y and press Enter to proceed. Otherwise, enter n and press Enter to modify the settings.

Start to deploy the component nodes.

To deploy multiple component nodes, complete the deployment on one server and then another until all component nodes are deployed.

Start deployment for a new region as prompted after you complete the deployment for the first region.

Determine whether to deploy OMS in a new region.

After the deployment is completed, the system displays "OMS has been deployed in Regions [<Region ID 1>,<Region ID 2>…]. Do you want to deploy OMS in a new region?"

If yes, enter y and press Enter to proceed. If no, enter n and press Enter to end the deployment process.

Enter the OMS cluster configuration information as prompted and confirm the settings.

Determine whether to separately deploy the RM database and the CM database for the new region. By default, they do not need to be deployed separately.

If you need to deploy the RM database and CM database separately, enter the IP address, port, username, and password of the CM database for the new region as prompted.

Deploy the management nodes one by one as prompted.

To deploy multiple management nodes, complete the deployment on one server and then another until all management nodes are deployed.

Deploy the component nodes one by one as prompted.

To deploy multiple component nodes, complete the deployment on one server and then another until all component nodes are deployed.

Determine whether to deploy OMS in a new region.

After the deployment is completed, the system displays "OMS has been deployed in Regions [<Region ID 1>,<Region ID 2>…]. Do you want to deploy OMS in a new region?"

If yes, enter y and press Enter to proceed. If no, enter n and press Enter to end the deployment process.
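In separate deployment mode, the deployment tool takes two image identifiers: `-v` for the management image and `-s` for the component image. The dry-run sketch below prints the commands of this procedure with concrete values so that the two identifiers are not mixed up. `print_separate_deploy` is a hypothetical helper, and the values in the usage line are placeholders. The sketch extracts the script from the management image, although the procedure allows either image.

```shell
#!/bin/sh
# Print the commands of the separate-mode procedure for review; nothing is executed.
# Arguments: <mount directory> <IP address of the server> <management image> <component image>
print_separate_deploy() (
    mount_dir="$1"; host_ip="$2"; mgmt_image="$3"; comp_image="$4"
    [ -n "$comp_image" ] || { echo "four arguments are required" >&2; exit 1; }
    cat <<EOF
sudo docker run -d --net host --name oms-config-tool $mgmt_image bash
sudo docker cp oms-config-tool:/root/docker_remote_deploy_v2.sh .
sudo docker rm -f oms-config-tool
sh docker_remote_deploy_v2.sh -o $mount_dir -i $host_ip -v $mgmt_image -s $comp_image
EOF
)

# Example: print_separate_deploy /data/oms 10.0.0.1 <management image> <component image>
```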

Deployment procedure with a configuration file

Integrated deployment mode

Log in to the server where OMS is to be deployed.

(Optional) Deploy a time-series database.

If you need to collect and display OMS monitoring data, deploy a time-series database. Otherwise, you can skip this step. For more information, see Deploy a time-series database.

Run the following command to obtain the deployment script docker_remote_deploy.sh from the loaded image:

sudo docker run -d --name oms-config-tool <OMS_IMAGE> bash && sudo docker cp oms-config-tool:/root/docker_remote_deploy.sh . && sudo docker rm -f oms-config-tool

Use the deployment script to start the deployment tool.

sh docker_remote_deploy.sh -o <Mount directory of the OMS container> -c <Directory of the existing config.yaml file> -i <IP address of the host> -d <OMS_IMAGE>

Note

For more information about settings of the config.yaml file, see the "Template and example of the configuration file" section.

Complete the deployment as prompted. After you set each parameter, press Enter to move on to the next parameter.

Select a deployment mode.

Select Multiple Regions.

Select a task.

Select Reference configuration file has been passed in through the [-c] option of the script. Start to configure based on the file.

If the system displays "Database name already exists in the MetaDB. Continue?", it indicates that the database names you specified already exist in the RM database and CM database of the MetaDB in the original configuration file. This may be caused by repeated deployment or upgrade of OMS. You can enter y and press Enter to proceed, or enter n and press Enter to modify the settings.

If the configuration file passes the check, all the settings are displayed. If the settings are correct, enter n and press Enter to proceed. Otherwise, enter y and press Enter to modify the settings.

If the configuration file fails the check, modify the settings as prompted.

Start the deployment on each node one after another.

Specify the directory to which the OMS container is mounted on the node.

Specify a directory with a large capacity.

For a remote node, the username and password for logging in to the remote node are required. The corresponding user account must have the sudo privilege on the remote node.

Confirm whether the OMS image file can be named <OMS_IMAGE>.

If yes, enter y and press Enter to proceed. Otherwise, enter n and press Enter to modify the settings.

Determine whether to mount an SSL certificate to the OMS container.

If yes, enter y, press Enter, and specify the https_key and https_crt directories as prompted. Otherwise, enter n and press Enter.

Determine whether to deploy OMS in a new region.

After the deployment is completed, the system displays "OMS has been deployed in Regions [<Region ID 1>,<Region ID 2>…]. Do you want to deploy OMS in a new region?"

If yes, enter y and press Enter to proceed. If no, enter n and press Enter to end the deployment process.

Perform the following operations to configure the OMS cluster settings:

Enter the region ID, for example, cn-hangzhou.

Enter the region ID for the parameter cm_region_cn. It is the same as cm_region, for example, cn-hangzhou.

Set a region ID for the current region (Region name in Chinese). Value range: [0,127].

An ID uniquely identifies a region.

A message is displayed, showing the names and IDs of existing regions, to help you avoid using an existing name or ID for a new region.

Repeat the deployment steps on each node in the region.

If the deployment fails, you can log in to the OMS container and view logs in the .log files prefixed with docker_init in the /home/admin/logs directory. If the OMS container fails to be started, you cannot obtain logs.

Separate deployment mode

Log in to the server where OMS is to be deployed.

(Optional) Deploy a time-series database.

If you need to collect and display OMS monitoring data, deploy a time-series database. Otherwise, you can skip this step. For more information, see Deploy a time-series database.

Run the following command to obtain the deployment script docker_remote_deploy_v2.sh from the loaded image:

sudo docker run -d --name oms-config-tool <OMS_IMAGE> bash && sudo docker cp oms-config-tool:/root/docker_remote_deploy_v2.sh . && sudo docker rm -f oms-config-tool

Use the deployment script to start the deployment tool.

sh docker_remote_deploy_v2.sh -o <Mount directory of the OMS container> -c <Directory of the existing config.yaml file> -i <IP address of the host> -d <OMS_IMAGE>

Note

For more information about settings of the config.yaml file, see the "Template and example of the configuration file" section.

Complete the deployment as prompted. After you set each parameter, press Enter to move on to the next parameter.

Select a deployment mode.

Select Multiple Regions.

Select a task.

Select Reference configuration file has been passed in through the [-c] option of the script. Start to configure based on the file.

If the system displays "Database name already exists in the MetaDB. Continue?", it indicates that the database names you specified already exist in the RM database and CM database of the MetaDB in the original configuration file. This may be caused by repeated deployment or upgrade of OMS. You can enter y and press Enter to proceed, or enter n and press Enter to modify the settings.

If the configuration file passes the check, all the settings are displayed. If the settings are correct, enter n and press Enter to proceed. Otherwise, enter y and press Enter to modify the settings.

If the configuration file fails the check, modify the settings as prompted.

Deploy the management nodes one by one as prompted.

Enter the mount directory on the management node for deploying the OMS container. Specify a directory with a large capacity.

For a remote node, the username and password for logging in to the remote node are required. The corresponding user account must have the sudo privilege on the remote node.

Confirm whether the OMS image file can be named <OMS_IMAGE>.

If yes, enter y and press Enter to proceed. Otherwise, enter n and press Enter to modify the settings.

Determine whether to mount an SSL certificate to the OMS container.

If yes, enter y, press Enter, and specify the https_key and https_crt directories as prompted. Otherwise, enter n and press Enter.

Confirm whether the path to which the config.yaml configuration file will be written is correct.

If yes, enter y and press Enter to proceed. Otherwise, enter n and press Enter to modify the settings.

Start to deploy the management nodes.

To deploy multiple management nodes, complete the deployment on one server and then another until all management nodes are deployed.

Deploy the component nodes one by one as prompted.

Specify the directory to which the OMS container is mounted on the component node.

Specify a directory with a large capacity.

For a remote node, the username and password for logging in to the remote node are required. The corresponding user account must have the sudo privilege on the remote node.

Confirm whether the OMS image file can be named <OMS_IMAGE>.

If yes, enter y and press Enter to proceed. Otherwise, enter n and press Enter to modify the settings.

Determine whether to mount an SSL certificate to the OMS container.

If yes, enter y, press Enter, and specify the https_key and https_crt directories as prompted. Otherwise, enter n and press Enter.

Confirm whether the path to which the config.yaml configuration file will be written is correct.

If yes, enter y and press Enter to proceed. Otherwise, enter n and press Enter to modify the settings.

Start to deploy the component nodes.

To deploy multiple component nodes, complete the deployment on one server and then another until all component nodes are deployed.

Determine whether to deploy OMS in a new region.

After the deployment is completed, the system displays "OMS has been deployed in Regions [<Region ID 1>,<Region ID 2>…]. Do you want to deploy OMS in a new region?"

If yes, enter y and press Enter to proceed. If no, enter n and press Enter to end the deployment process.

Perform the following operations to configure the OMS cluster settings:

Enter the region ID, for example, cn-hangzhou.

Enter the region ID for the parameter cm_region_cn. It is the same as cm_region, for example, cn-hangzhou.

Set a region ID for the current region (Region name in Chinese). Value range: [0,127].

An ID uniquely identifies a region.

A message is displayed, showing the names and IDs of existing regions, to help you avoid using an existing name or ID for a new region.

Repeat the deployment steps on each management node and component node in the region until all nodes are deployed.
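When you add a region, its name and numeric ID must differ from those of the regions that are already deployed. A minimal sketch of that check follows. `is_unused` is a hypothetical helper, and the comma-separated lists are assumed to be copied by hand from the message that the tool displays.

```shell
#!/bin/sh
# Succeed when the candidate value does not appear in a comma-separated
# list of values that are already in use (region names or region IDs).
is_unused() (
    candidate="$1"; existing="$2"
    case ",$existing," in
        *,"$candidate",*) exit 1 ;;
    esac
    exit 0
)

# Example:
#   is_unused cn-heilongjiang cn-hangzhou && echo "name is free"
#   is_unused 1 0,1 || echo "ID 1 is already taken"
```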

Template and example of the configuration file

Configuration file template

The configuration file template in this topic is used for the regular password-based login method. If you log in to the OMS console by using single sign-on (SSO), you must integrate the OpenID Connect (OIDC) protocol and add parameters in the config.yaml file template. For more information, see Integrate the OIDC protocol to OMS to implement SSO.

Notice

To deploy multiple nodes in the Hangzhou region, specify the IP addresses of all nodes for the cm_nodes parameter.

You must replace the sample values of required parameters based on your actual deployment environment. Both the required and optional parameters are described in the following table. You can specify the optional parameters as needed.

In the config.yaml file, you must specify the parameters in the key: value format, with a space after the colon (:).
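The "space after the colon" rule is easy to violate when you edit the file by hand. The following sketch flags suspicious lines before you pass the file to the deployment tool. `yaml_missing_space` is a hypothetical helper that relies only on `grep`. It is a rough textual check, not a YAML parser, and it only inspects lines that start with a simple key.

```shell
#!/bin/sh
# Print (with line numbers) every line of the form key:value, where the
# colon after a top-level style key is not followed by whitespace.
# Comment lines, list items, and keys with no inline value are not reported.
# Returns 0 when at least one problem line is found, like grep.
yaml_missing_space() {
    grep -nE '^[[:space:]]*"?[A-Za-z_][A-Za-z0-9_]*"?:[^[:space:]]' "$1"
}

# Example: yaml_missing_space /path/to/config.yaml || echo "no problems found"
```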

In the following example of the config.yaml file for the multi-node multi-region deployment mode, OMS is deployed on two nodes separately in the Hangzhou and Heilongjiang regions. OMS V4.3.1 and later support the primary region feature. In this example, Hangzhou is the primary region.

Here is a template of the config.yaml file for you to deploy OMS in the primary region Hangzhou:

    # Information about the RM database and CM database
    oms_cm_meta_host: ${oms_cm_meta_host}
    oms_cm_meta_password: ${oms_cm_meta_password}
    oms_cm_meta_port: ${oms_cm_meta_port}
    oms_cm_meta_user: ${oms_cm_meta_user}
    oms_rm_meta_host: ${oms_rm_meta_host}
    oms_rm_meta_password: ${oms_rm_meta_password}
    oms_rm_meta_port: ${oms_rm_meta_port}
    oms_rm_meta_user: ${oms_rm_meta_user}

    # You can customize the names of the following three databases, which are created in the MetaDB when you deploy OMS.
    drc_rm_db: ${drc_rm_db}
    drc_cm_db: ${drc_cm_db}
    drc_cm_heartbeat_db: ${drc_cm_heartbeat_db}

    # Configure the OMS cluster in the Hangzhou region.
    # To deploy OMS on multiple nodes in multiple regions, you must set the cm_url parameter to a VIP or domain name to which all CM servers in the region are mounted.
    cm_url: ${cm_url}
    cm_location: ${cm_location}
    cm_region: ${cm_region}
    cm_region_cn: ${cm_region_cn}
    cm_nodes:
     - ${host_ip1}
     - ${host_ip2}
    console_nodes:
     - ${host_ip3}
     - ${host_ip4}
    # primary_region_ip is empty to indicate that the current region is the primary region.
    "primary_region_ip": ""

    # Configurations of the time-series database
    # The default value of `tsdb_enabled`, which specifies whether to configure a time-series database, is `false`. To enable metric reporting, set the parameter to `true`.
    # tsdb_enabled: false
    # If the `tsdb_enabled` parameter is set to `true`, delete comments for the following parameters and specify the values based on your actual configurations.
    # tsdb_service: 'INFLUXDB'
    # tsdb_url: '${tsdb_url}'
    # tsdb_username: ${tsdb_user}
    # tsdb_password: ${tsdb_password}

| Parameter | Description | Required? |
| --- | --- | --- |
| oms_cm_meta_host | The IP address of the CM database. It can only be a MySQL-compatible tenant of OceanBase Database V2.0 or later. | Yes |
| oms_cm_meta_password | The password for connecting to the CM database. | Yes |
| oms_cm_meta_port | The port number for connecting to the CM database. | Yes |
| oms_cm_meta_user | The username for connecting to the CM database. | Yes |
| oms_rm_meta_host | The IP address of the RM database. It can only be a MySQL-compatible tenant of OceanBase Database V2.0 or later. | Yes |
| oms_rm_meta_password | The password for connecting to the RM database. | Yes |
| oms_rm_meta_port | The port number for connecting to the RM database. | Yes |
| oms_rm_meta_user | The username for connecting to the RM database. | Yes |
| drc_rm_db | The name of the database for the OMS console. | Yes |
| drc_cm_db | The name of the database for the CM service. | Yes |
| drc_cm_heartbeat_db | The name of the heartbeat database for the CM service. | Yes |
| cm_url | The URL of the OMS CM service, for example, http://VIP:8088. Note: To deploy OMS on multiple nodes in multiple regions, you must set the cm_url parameter to a VIP or domain name to which all CM servers in the current region are mounted. We recommend that you do not set it to http://127.0.0.1:8088. The access URL of the OMS console is in the following format: IP address of the host where OMS is deployed:8089, for example, http://xxx.xxx.xxx.xxx:8089 or https://xxx.xxx.xxx.xxx:8089. Port 8088 is used for program calls, and Port 8089 is used for web page access. You must specify port 8088. | Yes |
| cm_location | The code of the region. Value range: [0,127]. You can select one number for each region. Notice: If you upgrade to OMS V3.2.1 from an earlier version, you must set the cm_location parameter to 0. | Yes |
| cm_region | The name of the region, for example, cn-hangzhou. Notice: If you use OMS with the Alibaba Cloud Multi-Site High Availability (MSHA) service in an active-active disaster recovery scenario, use the region configured for the Alibaba Cloud service. The active-active disaster recovery feature is deprecated in OMS V4.3.1. | Yes |
| cm_region_cn | The value here is the same as the value of cm_region. | Yes |

cm_nodes In multi-node deployment mode, you must specify multiple IP addresses for the parameter.

- In integrated deployment mode, cm_nodes indicates the IP addresses of servers on which the OMS CM service is deployed.
- In separate deployment mode,

cm_nodesindicates on which servers the components are deployed.

Yes console_nodes - In integrated deployment mode, the value of

console_nodesis the same as that ofcm_nodes. - In separate deployment mode,

console_nodesindicates on which servers the management nodes are deployed.

This parameter is optional in integrated deployment mode.

This parameter is required in separate deployment mode.primary_region_ip If this parameter is not specified or is empty, it indicates that the current region is the primary region. If this parameter is not empty, it indicates that the current server is in another region and the console is not to be started. During initialization, requests are sent to the specified primary_region_ip. For example, "primary_region_ip": "xxx.xxx.xxx.1".No tsdb_service The type of the time-series database. Valid values: INFLUXDBandCERESDB.No. Default value: INFLUXDB.tsdb_enabled Specifies whether metric reporting is enabled for monitoring. Valid values: trueandfalse.No. Default value: false.tsdb_url The IP address of the server where InfluxDB is deployed, which needs to be changed based on the actual environment. You need to modify this parameter based on the actual environment if you set the tsdb_enabledparameter totrue. After the time-series database is deployed, it maps to OMS deployed for the whole cluster. This means that although OMS is deployed in multiple regions, all regions map to the same time-series database.No tsdb_username The username used to connect to the time-series database. You need to modify this parameter based on the actual environment if you set the tsdb_enabledparameter totrue. After you deploy a time-series database, manually create a user and specify the username and password.No tsdb_password The password used to connect to the time-series database. You need to modify this parameter based on the actual environment if you set the tsdb_enabledparameter totrue.No - In integrated deployment mode,
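Several constraints in the parameter table (required parameters, the `cm_location` range, and the `cm_url` port) can be checked before you run the deployment tool. The following is a minimal sketch in Python, assuming the contents of `config.yaml` have already been loaded into a dictionary with a YAML parser of your choice. The function name `check_region_config` and the list `REQUIRED_KEYS` are hypothetical helpers for illustration and are not part of OMS.

```python
from urllib.parse import urlparse

# Parameters marked "Yes" in the Required? column of the table.
REQUIRED_KEYS = [
    "oms_cm_meta_host", "oms_cm_meta_password", "oms_cm_meta_port", "oms_cm_meta_user",
    "oms_rm_meta_host", "oms_rm_meta_password", "oms_rm_meta_port", "oms_rm_meta_user",
    "drc_rm_db", "drc_cm_db", "drc_cm_heartbeat_db",
    "cm_url", "cm_location", "cm_region", "cm_region_cn", "cm_nodes",
]


def check_region_config(cfg):
    """Return a list of problems found in one region's config (empty if none)."""
    problems = []

    # Every required parameter must be present and non-empty (0 is a valid value).
    for key in REQUIRED_KEYS:
        if cfg.get(key) in (None, "", []):
            problems.append(f"missing required parameter: {key}")

    # cm_location is a region code in the range [0, 127].
    location = cfg.get("cm_location")
    if isinstance(location, int) and not 0 <= location <= 127:
        problems.append("cm_location must be in [0, 127]")

    # cm_url must carry port 8088 and should point to a VIP or domain name.
    url = urlparse(str(cfg.get("cm_url", "")))
    if url.port != 8088:
        problems.append("cm_url must specify port 8088")
    if url.hostname == "127.0.0.1":
        problems.append("cm_url should be a VIP or domain name, not 127.0.0.1")

    # Assumption: a time-series database URL is needed once reporting is enabled.
    if cfg.get("tsdb_enabled") and not cfg.get("tsdb_url"):
        problems.append("tsdb_url is needed when tsdb_enabled is true")

    return problems
```

An empty list means none of these checks failed. It does not guarantee that the deployment will succeed, because connectivity and credentials are not verified.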

Here is a template of the `config.yaml` file for you to deploy OMS in the Heilongjiang region. The operations are the same as those for deploying OMS in the Hangzhou region, except that you must modify the following parameters in the `config.yaml` file: `drc_cm_heartbeat_db`, `cm_url`, `cm_location`, `cm_region`, `cm_region_cn`, and `cm_nodes`.

Notice

To deploy multiple nodes in the Heilongjiang region, specify the IP addresses of all nodes for the `cm_nodes` parameter.

You must execute the `docker_init.sh` script on at least one node in each region.

    # Information about the RM database and CM database
    oms_cm_meta_host: ${oms_cm_meta_host}
    oms_cm_meta_password: ${oms_cm_meta_password}
    oms_cm_meta_port: ${oms_cm_meta_port}
    oms_cm_meta_user: ${oms_cm_meta_user}
    oms_rm_meta_host: ${oms_rm_meta_host}
    oms_rm_meta_password: ${oms_rm_meta_password}
    oms_rm_meta_port: ${oms_rm_meta_port}
    oms_rm_meta_user: ${oms_rm_meta_user}

    # You can customize the names of the following three databases, which are created in the MetaDB when you deploy OMS.
    drc_rm_db: ${drc_rm_db}
    drc_cm_db: ${drc_cm_db}
    drc_cm_heartbeat_db: ${drc_cm_heartbeat_db}

    # Configure the OMS cluster in the Heilongjiang region.
    # To deploy OMS on multiple nodes in multiple regions, you must set the cm_url parameter to a VIP or domain name to which all CM servers in the region are mounted.
    cm_url: ${cm_url}
    cm_location: ${cm_location}
    cm_region: ${cm_region}
    cm_region_cn: ${cm_region_cn}
    cm_nodes:
      - ${host_ip1}
      - ${host_ip2}
    console_nodes:
      - ${host_ip3}
      - ${host_ip4}

    # Configurations of the time-series database
    # tsdb_service: 'INFLUXDB'
    # Default value: false. Set the value based on your actual configuration.
    # tsdb_enabled: false
    # The IP address of the server where InfluxDB is deployed.
    # You need to modify the following parameters based on the actual environment if you set the tsdb_enabled parameter to true.
    # tsdb_url: ${tsdb_url}
    # tsdb_username: ${tsdb_user}
    # tsdb_password: ${tsdb_password}

Configuration file sample

Replace related parameters with the actual values in the target deployment environment.

Here is a sample `config.yaml` file for you to deploy OMS in the primary region Hangzhou:

    oms_cm_meta_host: xxx.xxx.xxx.xxx
    oms_cm_meta_password: **********
    oms_cm_meta_port: 2883
    oms_cm_meta_user: oms_cm_meta_user
    oms_rm_meta_host: xxx.xxx.xxx.xxx
    oms_rm_meta_password: **********
    oms_rm_meta_port: 2883
    oms_rm_meta_user: oms_rm_meta_user
    drc_rm_db: oms_rm
    drc_cm_db: oms_cm
    drc_cm_heartbeat_db: oms_cm_heartbeat
    cm_url: http://VIP:8088
    cm_location: 1
    cm_region: cn-hangzhou
    cm_region_cn: cn-hangzhou
    cm_nodes:
      - xxx.xxx.xxx.xx1
      - xxx.xxx.xxx.xx2
    console_nodes:
      - xxx.xxx.xxx.xx3
      - xxx.xxx.xxx.xx4
    "primary_region_ip": ""
    tsdb_service: 'INFLUXDB'
    tsdb_enabled: true
    tsdb_url: 'xxx.xxx.xxx.xxx:8086'
    tsdb_username: username
    tsdb_password: *************

Here is a sample `config.yaml` file for you to deploy OMS in the Heilongjiang region:

    oms_cm_meta_host: xxx.xxx.xxx.xxx
    oms_cm_meta_password: **********
    oms_cm_meta_port: 2883
    oms_cm_meta_user: oms_cm_meta_user
    oms_rm_meta_host: xxx.xxx.xxx.xxx
    oms_rm_meta_password: **********
    oms_rm_meta_port: 2883
    oms_rm_meta_user: oms_rm_meta_user
    drc_rm_db: oms_rm
    drc_cm_db: oms_cm
    drc_cm_heartbeat_db: oms_cm_heartbeat_1
    cm_url: http://VIP:8088
    cm_location: 2
    cm_region: cn-heilongjiang
    cm_region_cn: cn-heilongjiang
    cm_nodes:
      - xxx.xxx.xxx.xx1
      - xxx.xxx.xxx.xx2
    tsdb_service: 'INFLUXDB'
    tsdb_enabled: true
    tsdb_url: 'xxx.xxx.xxx.xxx:8086'
    tsdb_username: username
    tsdb_password: *************
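Because the per-region files differ only in a handful of parameters, it is easy to copy the primary region's file and forget to change one of them. The sketch below, in Python, lists the per-region parameters that still have the same value in both files. It assumes that "modify" means the values must differ between regions. The function name `unchanged_per_region_keys`, the host names, and the addresses are hypothetical and chosen only for illustration.

```python
# Parameters that must be modified for each additional region.
PER_REGION_KEYS = [
    "drc_cm_heartbeat_db", "cm_url", "cm_location",
    "cm_region", "cm_region_cn", "cm_nodes",
]


def unchanged_per_region_keys(primary_cfg, other_cfg):
    """Return the per-region parameters whose values are identical in both configs."""
    return [
        key for key in PER_REGION_KEYS
        if key in primary_cfg and primary_cfg.get(key) == other_cfg.get(key)
    ]


# Hypothetical values for illustration only.
hangzhou = {
    "drc_cm_heartbeat_db": "oms_cm_heartbeat",
    "cm_url": "http://vip-hangzhou.example.com:8088",
    "cm_location": 1,
    "cm_region": "cn-hangzhou",
    "cm_region_cn": "cn-hangzhou",
    "cm_nodes": ["192.0.2.1", "192.0.2.2"],
}
heilongjiang = {
    "drc_cm_heartbeat_db": "oms_cm_heartbeat_1",
    "cm_url": "http://vip-heilongjiang.example.com:8088",
    "cm_location": 2,
    "cm_region": "cn-heilongjiang",
    "cm_region_cn": "cn-heilongjiang",
    "cm_nodes": ["198.51.100.1", "198.51.100.2"],
}

print(unchanged_per_region_keys(hangzhou, heilongjiang))  # prints []

# A copy in which the heartbeat database name was left unchanged.
forgotten = dict(heilongjiang, drc_cm_heartbeat_db="oms_cm_heartbeat")
print(unchanged_per_region_keys(hangzhou, forgotten))  # prints ['drc_cm_heartbeat_db']
```

The check is deliberately limited to the six parameters named in the template description. Shared settings, such as the MetaDB connection and the time-series database, are expected to be identical across regions and are not compared.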