This topic introduces vector embedding technology in vector search.

What is vector embedding?

Vector embedding is a technique for converting unstructured data into numerical vectors. These vectors can capture the semantic information of unstructured data, enabling computers to "understand" and process the meaning of such data. Specifically:

- Vector embedding maps unstructured data such as text, images, audio, or video to points in a high-dimensional vector space.

- In this vector space, semantically similar data is mapped to nearby locations.

- A vector typically consists of hundreds to thousands of numbers (for example, 512 or 1024 dimensions).

- Mathematical measures such as cosine similarity can be used to calculate the similarity between vectors.
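As a rough illustration of the similarity calculation mentioned above, the following sketch computes cosine similarity in plain Python. The three-dimensional vectors are made-up toy values, not real model output; real embeddings have hundreds or thousands of dimensions.

```python
import math

def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same number of dimensions")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)

# Toy vectors (hypothetical values chosen for illustration only).
happy_person = [0.9, 0.1, 0.3]
very_happy_person = [0.8, 0.2, 0.3]
sunny_day = [0.1, 0.9, 0.2]

# The first pair points in nearly the same direction, so it scores higher.
print(cosine_similarity(happy_person, very_happy_person))
print(cosine_similarity(happy_person, sunny_day))
```

A score close to 1 means the two vectors point in nearly the same direction, and a score close to 0 means they are nearly orthogonal.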

Common vector embedding models include Word2Vec, BERT, and BGE. In a typical RAG application, for example, text data is embedded into vectors and stored in a vector database, while structured data is stored in a relational database.

Starting from OceanBase Database V4.3.3, vector data can be stored as a data type in a relational table, allowing vectors and traditional scalar data to be stored in an orderly and efficient manner within OceanBase Database.
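To make the idea of storing vectors next to scalar columns more concrete, here is a minimal sketch that prepares the SQL text and parameters for such a table. Everything in it is an assumption for illustration: the table and column names are hypothetical, and the VECTOR(3) column type and the bracketed text form of a vector should be checked against the OceanBase documentation for your version. The sketch only builds strings and does not connect to a database.

```python
def to_vector_literal(vector):
    """Serialize a list of numbers as a bracketed, comma-separated string."""
    return "[" + ",".join(repr(float(x)) for x in vector) + "]"

# Hypothetical schema: an id, a scalar text column, and a 3-dimensional vector column.
CREATE_TABLE_SQL = (
    "CREATE TABLE documents ("
    "id INT PRIMARY KEY, "
    "content VARCHAR(1024), "
    "embedding VECTOR(3))"
)

# Parameterized insert; the vector is passed in its text form (assumed format).
INSERT_SQL = "INSERT INTO documents (id, content, embedding) VALUES (%s, %s, %s)"

params = (1, "That is a happy person", to_vector_literal([0.1, 0.2, 0.3]))
print(CREATE_TABLE_SQL)
print(INSERT_SQL, params)
```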

Common text embedding methods

This section introduces common text embedding methods.

Preparations

You need to have the pip command installed in advance.

Use an offline, locally pre-trained embedding model

Using a pre-trained model for local text embedding is the most flexible approach, but it requires significant computing resources. Common options include:

Use Sentence Transformers

Sentence Transformers is a Python library for converting sentences or paragraphs into vector embeddings. It builds on deep learning models, in particular the Transformer architecture, to capture the semantic meaning of text. Direct access to the Hugging Face domain may be slow in China; if so, set the Hugging Face mirror address in advance by running export HF_ENDPOINT=https://hf-mirror.com. Then run the following code:

from sentence_transformers import SentenceTransformer

model = SentenceTransformer("BAAI/bge-m3")

sentences = [
    "That is a happy person",
    "That is a happy dog",
    "That is a very happy person",
    "Today is a sunny day"
]

embeddings = model.encode(sentences)
print(embeddings)
# [[-0.01178016 0.00884024 -0.05844684 ... 0.00750248 -0.04790139
#   0.00330675]
#  [-0.03470375 -0.00886354 -0.05242309 ... 0.00899352 -0.02396279
#   0.02985837]
#  [-0.01356584 0.01900942 -0.05800966 ... 0.00523864 -0.05689549
#   0.00077098]
#  [-0.02149693 0.02998871 -0.05638731 ... 0.01443702 -0.02131325
#   -0.00112451]]

similarities = model.similarity(embeddings, embeddings)
print(similarities.shape)
# torch.Size([4, 4])
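A pairwise similarity matrix like the one above is usually the basis for retrieval: for a given query, rank the other sentences by score. The following is a minimal sketch of that ranking step in plain Python, assuming the vectors have already been computed. The short vectors are invented stand-ins for real model output, and top_k is a hypothetical helper name.

```python
import math

def cosine(a, b):
    """Cosine similarity of two non-zero, equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def top_k(query_vec, doc_vecs, k=2):
    """Return (index, score) pairs for the k most similar document vectors."""
    scored = [(i, cosine(query_vec, v)) for i, v in enumerate(doc_vecs)]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]

sentences = [
    "That is a happy dog",
    "That is a very happy person",
    "Today is a sunny day",
]
# Invented 3-dimensional stand-ins for real embeddings.
doc_vecs = [[0.7, 0.3, 0.6], [0.8, 0.2, 0.3], [0.1, 0.9, 0.2]]
query_vec = [0.9, 0.1, 0.3]  # stand-in for "That is a happy person"

for index, score in top_k(query_vec, doc_vecs):
    print(f"{score:.3f}  {sentences[index]}")
```

For large collections, this linear scan is typically replaced by a vector index in the database.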

Use Hugging Face Transformers

Hugging Face Transformers is an open-source library that provides a wide range of pre-trained deep learning models, especially for NLP tasks. Direct access to the Hugging Face domain may be slow in China; if so, set the Hugging Face mirror address in advance by running export HF_ENDPOINT=https://hf-mirror.com. Then run the following code:

from transformers import AutoTokenizer, AutoModel
import torch

# Load the model and tokenizer
tokenizer = AutoTokenizer.from_pretrained("BAAI/bge-m3")
model = AutoModel.from_pretrained("BAAI/bge-m3")

# Prepare the input
texts = ["This is an example text."]
inputs = tokenizer(texts, padding=True, truncation=True, return_tensors="pt")

# Generate embeddings
with torch.no_grad():
    outputs = model(**inputs)
    embeddings = outputs.last_hidden_state[:, 0]  # Use the [CLS] token's output

print(embeddings)
# tensor([[-1.4136, 0.7477, -0.9914, ..., 0.0937, -0.0362, -0.1650]])

print(embeddings.shape)
# torch.Size([1, 1024])
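The raw [CLS] output above is not length-normalized (some printed values exceed 1 in magnitude). If you plan to compare embeddings with an inner product, a common step is to scale each vector to unit length first, so that the inner product equals cosine similarity. Below is a minimal plain-Python sketch of that step, using made-up values; with PyTorch tensors, torch.nn.functional.normalize performs the same operation.

```python
import math

def l2_normalize(vector):
    """Scale a vector to unit length; a zero vector is returned unchanged."""
    norm = math.sqrt(sum(x * x for x in vector))
    if norm == 0:
        return list(vector)
    return [x / norm for x in vector]

def dot(a, b):
    """Inner product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

# Made-up values standing in for two raw embeddings.
a = l2_normalize([3.0, 4.0])
b = l2_normalize([4.0, 3.0])

print(a)          # unit-length version of [3.0, 4.0]
print(dot(a, a))  # approximately 1.0 for any normalized vector
print(dot(a, b))  # equals the cosine similarity of the original vectors
```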

Ollama

Ollama is an open-source tool that allows users to easily run, manage, and use various large language models locally. In addition to open-source language models such as Llama 3 and Mistral, it also supports embedding models such as bge-m3.

Deploy Ollama.

On macOS and Windows, you can download and install the package directly from the official website. For more information, see the Ollama documentation. After installation, Ollama runs as a background service.

To install Ollama on Linux:

curl -fsSL https://ollama.ai/install.sh | sh

Pull an embedding model.

Ollama supports the bge-m3 model for text embeddings:

ollama pull bge-m3

Use Ollama for text embeddings.

You can use Ollama's embedding capabilities through the HTTP API or Python SDK:

HTTP API

import requests

def get_embedding(text: str) -> list:
    """Use the HTTP API of Ollama to obtain text embeddings."""
    response = requests.post(
        'http://localhost:11434/api/embeddings',
        json={
            'model': 'bge-m3',
            'prompt': text
        }
    )
    return response.json()['embedding']

# Example usage
text = "This is an example text."
embedding = get_embedding(text)
print(embedding)
# [-1.4269912242889404, 0.9092104434967041, ...]

Python SDK

First, install the Python SDK for Ollama:

pip install ollama

Then, you can use it like this:

import ollama

# Example usage
texts = ["First sentence", "Second sentence"]
embeddings = ollama.embed(model="bge-m3", input=texts)['embeddings']
print(embeddings)
# [[0.03486196, 0.0625187, ...], [...]]
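If you prefer not to depend on the requests package, the same HTTP call can be sketched with the standard library. The endpoint, model name, and JSON fields below are the ones used in the HTTP API example above; the timeout value and the helper names are assumptions for illustration. The request is only sent when embed() is called, so the parsing step can be checked without a running Ollama service.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/embeddings"

def build_request(text, model="bge-m3"):
    """Build the POST request for a single text."""
    body = json.dumps({"model": model, "prompt": text}).encode("utf-8")
    return urllib.request.Request(
        OLLAMA_URL,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )

def parse_embedding(raw_body):
    """Extract the embedding list from a JSON response body."""
    payload = json.loads(raw_body)
    if "embedding" not in payload:
        raise ValueError(f"unexpected response: {payload}")
    return payload["embedding"]

def embed(text, timeout=30):
    """Send the request to a local Ollama service and return the embedding."""
    with urllib.request.urlopen(build_request(text), timeout=timeout) as response:
        return parse_embedding(response.read())

# Offline check of the parsing step with a canned response body.
print(parse_embedding(b'{"embedding": [0.1, 0.2, 0.3]}'))
```

Keeping request building and response parsing separate from the network call makes it easier to surface an unexpected response, such as an error message for a model that has not been pulled.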

Advantages and limitations of Ollama:

Advantages:

- Fully local deployment without the need for internet connectivity

- Open-source and free, without the need for an API key

- Supports multiple models, making it easy to switch and compare

- Relatively low resource usage

Limitations:

- Limited selection of embedding models

- Performance may not match commercial services

- Requires self-maintenance and updates

- Lacks enterprise-level support

When deciding whether to use Ollama, consider these factors. If your application has high privacy requirements or needs to run completely offline, Ollama is a good choice. However, if you need more stable service quality and better performance, commercial services may be a better option.

Use online or remote embedding services

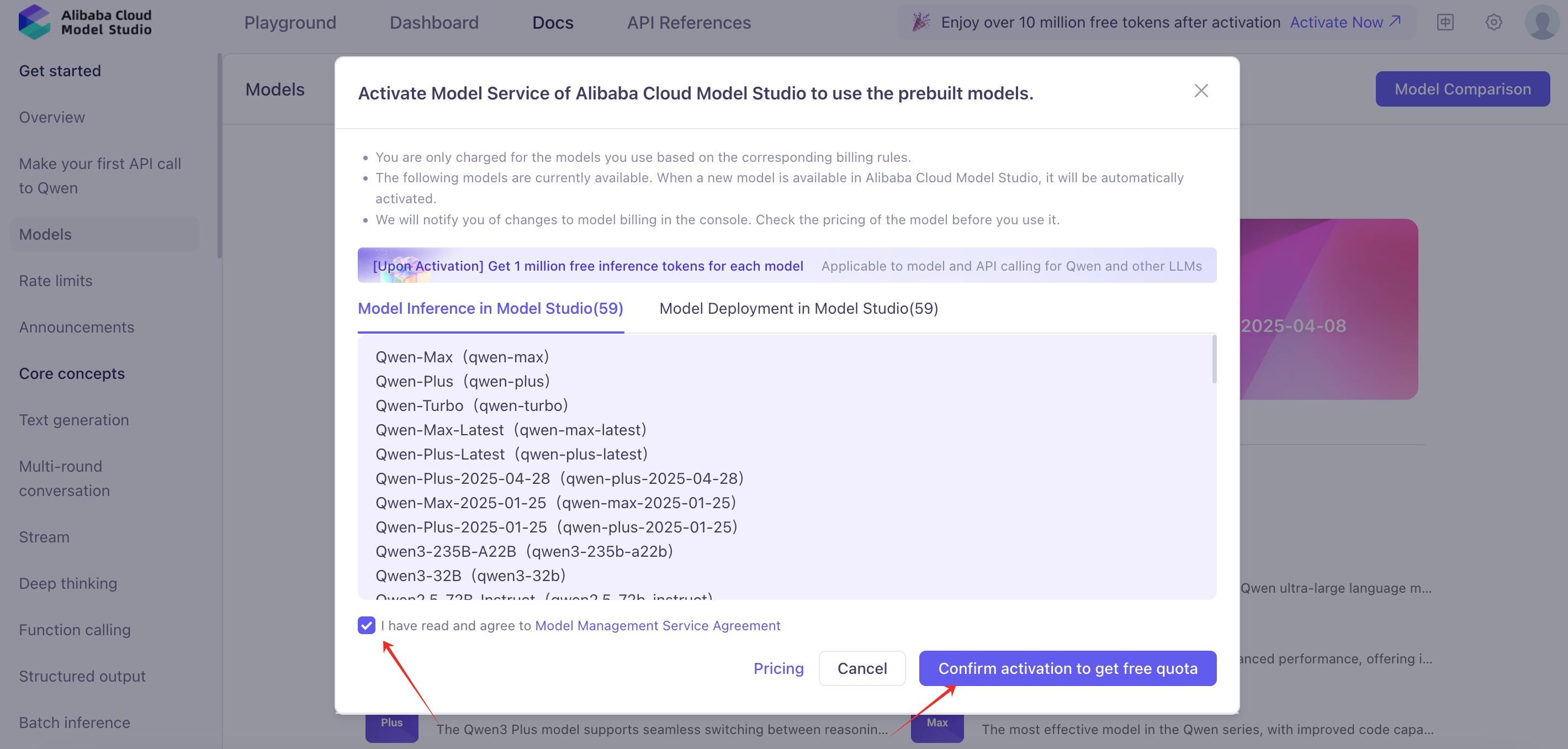

Running embedding models locally usually requires high-spec hardware on the deployment machine and careful management of operations such as model loading and unloading. For this reason, many users prefer online embedding services, which many AI inference service providers now offer. Take Tongyi Qwen's text embedding service as an example: register an account with Alibaba Cloud Model Studio, obtain an API key, and then call the public API to get text embeddings.

HTTP call

After obtaining the necessary credentials, you can try performing text embedding with the following code. If the requests package is not installed in your Python environment, you need to install it first with pip install requests to enable sending network requests.

import requests
from typing import List

class RemoteEmbedding():
    """
    OpenAI compatible embedding API. Tongyi, Baichuan, Doubao, etc.
    """

    def __init__(
        self,
        base_url: str,
        api_key: str,
        model: str,
        dimensions: int = 1024,
        **kwargs,
    ):
        self._base_url = base_url
        self._api_key = api_key
        self._model = model
        self._dimensions = dimensions

    def embed_documents(
        self,
        texts: List[str],
    ) -> List[List[float]]:
        """Embed search docs.

        Args:
            texts: List of text to embed.

        Returns:
            List of embeddings.
        """
        res = requests.post(
            f"{self._base_url}",
            headers={"Authorization": f"Bearer {self._api_key}"},
            json={
                "input": texts,
                "model": self._model,
                "encoding_format": "float",
                "dimensions": self._dimensions,
            },
        )
        data = res.json()
        embeddings = []
        try:
            for d in data["data"]:
                embeddings.append(d["embedding"][: self._dimensions])
            return embeddings
        except Exception as e:
            print(data)
            print("Error", e)
            raise e

    def embed_query(self, text: str, **kwargs) -> List[float]:
        """Embed query text.

        Args:
            text: Text to embed.

        Returns:
            Embedding.
        """
        return self.embed_documents([text])[0]

embedding = RemoteEmbedding(
    base_url="https://dashscope.aliyuncs.com/compatible-mode/v1/embeddings", # You can also visit https://bailian.console.alibabacloud.com/?tab=doc#/doc/?type=model&url=https://www.alibabacloud.com/help/en/doc-detail/2840914_2.html&renderType=component&modelId=text-embedding-v3 for more information.
    api_key="your-api-key", # Replace this with your API key.
    model="text-embedding-v3",
)

print("Embedding result:", embedding.embed_query("The weather is nice today"), "\n")
# Embedding result: [-0.03573227673768997, 0.0645645260810852, ...]

print("Embedding results:", embedding.embed_documents(["The weather is nice today", "What about tomorrow?"]), "\n")
# Embedding results: [[-0.03573227673768997, 0.0645645260810852, ...], [-0.05443647876381874, 0.07368793338537216, ...]]
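Remote services generally cap how many texts one request may contain, so long document lists are usually sent in batches. A minimal sketch of that batching step follows. The batch size of 10 is an assumed value (check your provider's limit), embed_in_batches is a hypothetical helper, and fake_embed stands in for a call such as embedding.embed_documents so that the example runs without network access.

```python
from typing import Callable, List

def embed_in_batches(
    texts: List[str],
    embed_fn: Callable[[List[str]], List[List[float]]],
    batch_size: int = 10,  # assumed limit; check your provider's documentation
) -> List[List[float]]:
    """Call embed_fn on consecutive slices of texts and concatenate the results."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    results: List[List[float]] = []
    for start in range(0, len(texts), batch_size):
        batch = texts[start:start + batch_size]
        vectors = embed_fn(batch)
        if len(vectors) != len(batch):
            raise RuntimeError("service returned a different number of embeddings")
        results.extend(vectors)
    return results

def fake_embed(batch: List[str]) -> List[List[float]]:
    """Stand-in embedder: one 1-dimensional vector per text (its length)."""
    return [[float(len(text))] for text in batch]

texts = [f"document {i}" for i in range(25)]
vectors = embed_in_batches(texts, fake_embed, batch_size=10)
print(len(vectors))
```

Because results are appended in input order, the i-th vector still corresponds to the i-th text, which matters when the vectors are later written to a table alongside their source rows.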

Use Qwen SDK

Qwen provides an SDK called dashscope for quickly accessing model capabilities. After installing it using pip install dashscope, you can obtain text embeddings.

import dashscope
from dashscope import TextEmbedding

# Set the API key.
dashscope.api_key = "your-api-key"

# Prepare the input text.
texts = ["This is the first sentence", "This is the second sentence"]

# Call the embedding service.
response = TextEmbedding.call(
    model="text-embedding-v3",
    input=texts
)

# Retrieve the embeddings.
if response.status_code == 200:
    print(response.output['embeddings'])
    # [{"embedding": [-0.03193652629852295, 0.08152323216199875, ...]}, {"embedding": [...]}]
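Hardcoding the API key, as in the examples above, is convenient for a quick test but easy to leak. Here is a small sketch that reads the key from an environment variable instead. The variable name DASHSCOPE_API_KEY is an assumption here, so use whichever name your deployment defines; load_api_key is a hypothetical helper.

```python
import os

def load_api_key(env_var: str = "DASHSCOPE_API_KEY") -> str:
    """Return the API key stored in env_var, or raise if it is missing."""
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(f"environment variable {env_var} is not set")
    return key

# Demo only: provide a placeholder if the variable is not already set.
os.environ.setdefault("DASHSCOPE_API_KEY", "your-api-key")

# The returned value would then be assigned to dashscope.api_key
# or passed as api_key to RemoteEmbedding.
api_key = load_api_key()
print(len(api_key) > 0)
```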

Common image embedding methods

This section describes image embedding methods.

Use an offline, locally pre-trained embedding model

Use CLIP

Contrastive Language-Image Pre-training (CLIP) is a model proposed by OpenAI for multimodal learning that combines images and text. CLIP maps images and text into a shared vector space, so it can capture the relationships between them and performs well in tasks such as image classification and image search.

from PIL import Image
import torch
from transformers import CLIPProcessor, CLIPModel

model = CLIPModel.from_pretrained("openai/clip-vit-base-patch32")
processor = CLIPProcessor.from_pretrained("openai/clip-vit-base-patch32")

# Prepare the input image and texts
image = Image.open("path_to_your_image.jpg")
texts = ["This is the first sentence", "This is the second sentence"]

# Run the model locally
inputs = processor(text=texts, images=image, return_tensors="pt", padding=True)
with torch.no_grad():
    outputs = model(**inputs)

# Obtain the embedding results
print(outputs.image_embeds.shape)
# torch.Size([1, 512])

print(outputs.text_embeds.shape)
# torch.Size([2, 512])
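Because CLIP places images and text in the same vector space, one image embedding can be scored against several text embeddings. The sketch below shows that scoring step in plain Python: cosine similarity followed by a softmax. The vectors and captions are made-up toy values, and the scale factor of 100 is an assumed stand-in for the model's learned temperature. With the transformers model, outputs.logits_per_image holds the model's own image-to-text scores.

```python
import math

def cosine(a, b):
    """Cosine similarity of two non-zero, equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def softmax(scores):
    """Convert scores to probabilities, subtracting the max for numerical stability."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def match_image_to_texts(image_vec, text_vecs, scale=100.0):
    """Return one probability per text for a single image embedding."""
    return softmax([scale * cosine(image_vec, t) for t in text_vecs])

# Made-up embeddings: the image vector is closest to the first caption.
image_vec = [0.9, 0.1, 0.3]
text_vecs = [[0.8, 0.2, 0.3], [0.1, 0.9, 0.2]]
captions = ["a photo of a dog", "a photo of a city"]

probs = match_image_to_texts(image_vec, text_vecs)
best = max(range(len(probs)), key=probs.__getitem__)
print(captions[best])
```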