This topic describes how to use OceanBase Migration Service (OMS) Community Edition to migrate data from a Hive database to OceanBase Database Community Edition.

Background information

OMS Community Edition allows you to migrate data from a Hive database in the following modes:

Hive external table mode, where you can migrate data from a Hive database to OceanBase Database Community Edition by using Hive external tables.

Spark local mode, where you can start a Spark container in the migration process of OMS Community Edition and migrate data from a Hive database to OceanBase Database Community Edition in the Spark container.

Spark cluster mode, where the migration process of OMS Community Edition submits a data migration task to a Spark cluster to migrate data from a Hive database to OceanBase Database Community Edition.

Limitations

Limitations on the source database:

Do not perform DDL operations that modify database or table schemas during full migration. Otherwise, the data migration task may be interrupted.

At present, OMS Community Edition supports only Hive 3.1.3.

At present, OMS Community Edition supports only full migration from a Hive database to OceanBase Database Community Edition.

The data source identifiers and user accounts must be globally unique in OMS Community Edition.

OMS Community Edition supports migrating only objects whose database names, table names, and column names are ASCII-encoded and contain no special characters. The special characters are line breaks and | " ' ` ( ) = ; / &.

To ensure the performance of a data migration task, we recommend that you migrate no more than 1,000 tables at a time.
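You can check the naming rules and the 1,000-table recommendation before you create a task. The following Python sketch is a hypothetical pre-flight helper and is not part of OMS Community Edition. `name_problems` and `batch_tables` are illustrative names. The sketch encodes only the rules stated in this topic, including the "$$" restriction from the notice on the Select Migration Objects page. OMS Community Edition may apply additional checks.

```python
# Hypothetical pre-flight helper; not part of OMS Community Edition.
# Rules encoded (from this topic only):
#   - database, table, and column names must be ASCII-encoded;
#   - names must not contain line breaks or | " ' ` ( ) = ; / &;
#   - database and table names must not contain "$$".
# batch_tables() splits a table list into groups of at most 1,000,
# following the recommended number of tables per migration task.

SPECIAL_CHARS = set("\r\n|\"'`()=;/&")
MAX_TABLES_PER_TASK = 1000


def name_problems(name: str, kind: str = "table") -> list[str]:
    """Return rule violations for a database, table, or column name."""
    problems = []
    if not name.isascii():
        problems.append("contains non-ASCII characters")
    bad = sorted(SPECIAL_CHARS.intersection(name))
    if bad:
        problems.append(f"contains special characters {bad!r}")
    if kind in ("database", "table") and "$$" in name:
        problems.append('contains "$$"')
    return problems


def batch_tables(tables: list[str], size: int = MAX_TABLES_PER_TASK) -> list[list[str]]:
    """Split tables into consecutive groups of at most `size` tables."""
    if size <= 0:
        raise ValueError("size must be positive")
    return [tables[i:i + size] for i in range(0, len(tables), size)]


if __name__ == "__main__":
    for candidate in ["orders", "sales$$2024", "user;drop", "\u8ba2\u5355"]:
        # !a prints non-ASCII names as escapes so any console can display them
        print(f"{candidate!a}: {name_problems(candidate)}")
    print([len(b) for b in batch_tables([f"t{i}" for i in range(2500)])])  # [1000, 1000, 500]
```

Each batch returned by `batch_tables` corresponds to one data migration task, so 2,500 tables would be split across three tasks.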

Data type mappings

| Hive database | OceanBase Database Community Edition |
|---|---|
| TINYINT | TINYINT |
| SMALLINT | SMALLINT |
| INT | INT |
| BIGINT | BIGINT |
| BOOLEAN (valid values: true and false) | BOOLEAN/TINYINT(1) (valid values: 0 and 1) |
| FLOAT | FLOAT |
| DOUBLE | DOUBLE |
| DECIMAL | DECIMAL |
| STRING | LONGTEXT/TINYTEXT/MEDIUMTEXT/TEXT/VARCHAR/CHAR. You can select any of these supported target data types in OceanBase Database Community Edition as needed. |
| VARCHAR | VARCHAR |
| CHAR | CHAR |
| TIMESTAMP | TIMESTAMP |
| TIMESTAMP WITH LOCAL TIME ZONE | TIMESTAMP |
| DATE | DATE |
| BINARY | |
| STRUCT | JSON. Hive supports this data type in external table mode. For example, data of the STRUCT<field1:STRING, field2:INT> type follows a format similar to ["example",42]. |
| MAP | JSON. Hive supports this data type in external table mode. For example, data of the MAP<STRING, INT> type follows a format similar to {"key1":1,"key2":2}. |
| ARRAY | JSON. Hive supports this data type in external table mode. For example, data of the ARRAY<STRING> type follows a format similar to ["item1","item2","item3"]. |
| UNIONTYPE | JSON. Hive supports this data type in external table mode. For example, {1:9.9} in a Hive database is converted to {"tag": 1, "object": 9.9} after migration to OceanBase Database Community Edition. |
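The following Python sketch makes the mappings concrete. It looks up candidate target types and serializes Hive complex values into the JSON shapes shown in the examples. It is an illustration under stated assumptions and is not the conversion code that OMS Community Edition uses. The function names are hypothetical, Hive values are assumed to arrive as Python objects, and the whitespace in the output JSON was chosen only to match the examples. BINARY is omitted because this topic does not list its target type.

```python
import json

# Candidate OceanBase CE types per Hive type, transcribed from the mapping table.
# BINARY is left out because this topic does not list its target type.
HIVE_TO_OCEANBASE = {
    "TINYINT": ["TINYINT"],
    "SMALLINT": ["SMALLINT"],
    "INT": ["INT"],
    "BIGINT": ["BIGINT"],
    "BOOLEAN": ["BOOLEAN", "TINYINT(1)"],
    "FLOAT": ["FLOAT"],
    "DOUBLE": ["DOUBLE"],
    "DECIMAL": ["DECIMAL"],
    "STRING": ["LONGTEXT", "TINYTEXT", "MEDIUMTEXT", "TEXT", "VARCHAR", "CHAR"],
    "VARCHAR": ["VARCHAR"],
    "CHAR": ["CHAR"],
    "TIMESTAMP": ["TIMESTAMP"],
    "TIMESTAMP WITH LOCAL TIME ZONE": ["TIMESTAMP"],
    "DATE": ["DATE"],
    "STRUCT": ["JSON"],
    "MAP": ["JSON"],
    "ARRAY": ["JSON"],
    "UNIONTYPE": ["JSON"],
}


def target_types(hive_type: str) -> list[str]:
    """Return candidate target types, ignoring parameters such as (10,2) or <...>."""
    base = hive_type.strip().upper()
    for sep in ("(", "<"):
        base = base.split(sep, 1)[0].strip()
    if base not in HIVE_TO_OCEANBASE:
        raise KeyError(f"no mapping listed for Hive type {base}")
    return HIVE_TO_OCEANBASE[base]


def boolean_to_tinyint(value: bool) -> int:
    # Hive true/false become 1/0 in OceanBase CE.
    return 1 if value else 0


def _compact(value) -> str:
    # Compact separators reproduce the spacing used in the table examples.
    return json.dumps(value, separators=(",", ":"))


def struct_to_json(field_values: list) -> str:
    # STRUCT field values as a JSON array in declaration order, e.g. ["example",42].
    return _compact(list(field_values))


def map_to_json(entries: dict) -> str:
    # MAP entries as a JSON object, e.g. {"key1":1,"key2":2}.
    return _compact(dict(entries))


def array_to_json(items: list) -> str:
    # ARRAY elements as a JSON array, e.g. ["item1","item2","item3"].
    return _compact(list(items))


def union_to_json(tag: int, obj) -> str:
    # UNIONTYPE value {tag:obj} as {"tag": tag, "object": obj}.
    return json.dumps({"tag": tag, "object": obj})


if __name__ == "__main__":
    print(target_types("decimal(10,2)"))  # ['DECIMAL']
    print(struct_to_json(["example", 42]))  # ["example",42]
    print(union_to_json(1, 9.9))  # {"tag": 1, "object": 9.9}
```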

Procedure

Create a data migration task.

Log in to the console of OMS Community Edition.

In the left-side navigation pane, click Data Migration.

On the Data Migration page, click New Task in the upper-right corner.

On the Select Source and Target page, configure the parameters.

| Parameter | Description |
|---|---|
| Data Migration Task Name | We recommend that you use a combination of digits and letters. The name must not contain spaces and cannot exceed 64 characters in length. |
| Tag (Optional) | Click the field and select a tag from the drop-down list. You can also click Manage Tags to create, modify, and delete tags. For more information, see Use tags to manage data migration tasks. |
| Source | If you have created a Hive data source, select it from the drop-down list. If not, click New Data Source in the drop-down list and create one in the dialog box that appears on the right. For more information about the parameters, see Create a Hive data source. |
| Target | If you have created an OceanBase-CE data source, select it from the drop-down list. If not, click New Data Source in the drop-down list and create one in the dialog box that appears on the right. For more information about the parameters, see Create an OceanBase-CE data source. |

Click Next.
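You can check a Data Migration Task Name before you fill in the form. This hypothetical Python helper treats the 64-character limit and the no-spaces rule as errors, and it treats the letters-and-digits recommendation as a warning. `check_task_name` is an illustrative name. The rejection of empty names is an assumption, and the console's own validation may differ.

```python
import re

MAX_TASK_NAME_LENGTH = 64
LETTERS_AND_DIGITS = re.compile(r"[A-Za-z0-9]+")


def check_task_name(name: str) -> tuple[list[str], list[str]]:
    """Return (errors, warnings) for a proposed Data Migration Task Name."""
    errors: list[str] = []
    warnings: list[str] = []
    if not name:
        # Assumption: the console requires a non-empty name.
        errors.append("name is empty")
    if len(name) > MAX_TASK_NAME_LENGTH:
        errors.append(f"name has {len(name)} characters; the limit is {MAX_TASK_NAME_LENGTH}")
    if any(ch.isspace() for ch in name):
        # Treats tabs and other whitespace like spaces (slightly stricter than "no spaces").
        errors.append("name contains spaces")
    if name and not LETTERS_AND_DIGITS.fullmatch(name):
        # A recommendation only, so this is reported as a warning, not an error.
        warnings.append("recommended: use only letters and digits")
    return errors, warnings


if __name__ == "__main__":
    print(check_task_name("hive2ob01"))  # ([], [])
    print(check_task_name("hive to ob"))
```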

On the Select Migration Type page, select Full Migration.

After a full migration task is started, OMS Community Edition migrates the existing data of tables in the source database to corresponding tables in the target database.

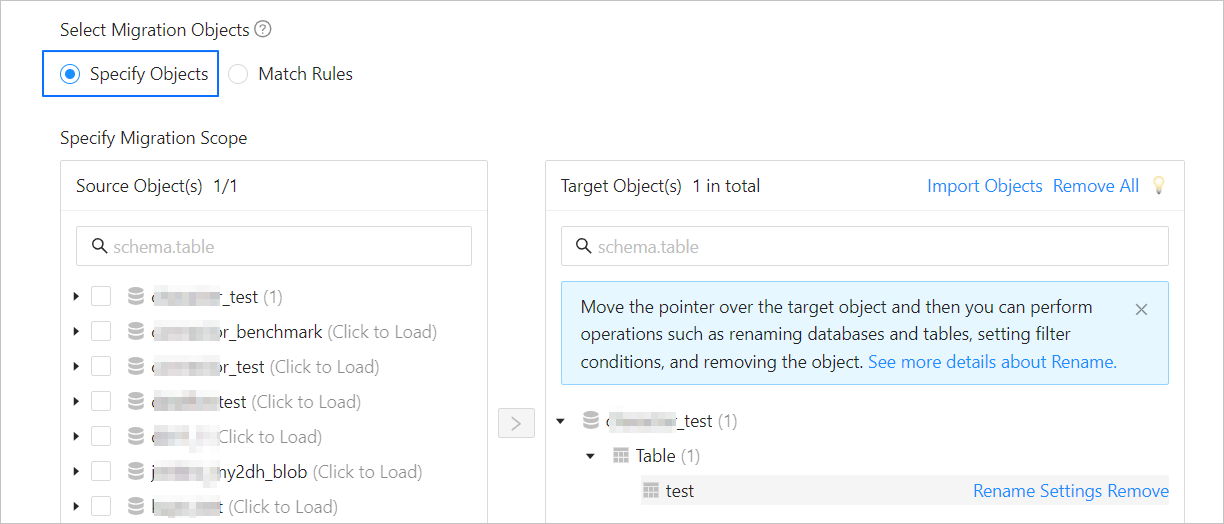

Click Next. On the Select Migration Objects page, select the migration objects and migration scope.

You can select Specify Objects or Match Rules to specify the migration objects. The following procedure describes how to specify migration objects by using the Specify Objects option. For information about specifying migration objects by using the Match Rules option, see Configure matching rules for migration objects.

Notice

The name of a table to be migrated, as well as the names of columns in the table, must not contain Chinese characters.

If a database or table name contains double dollar signs ("$$"), you cannot create the migration task.

In the Select Migration Objects section, select Specify Objects.

In the Specify Migration Scope section, select the objects to be migrated from the Source Object(s) list. You can select tables and views of one or more databases as the migration objects.

Click > to add the selected objects to the Target Object(s) list.

When you migrate data from a Hive database to OceanBase Database Community Edition, OMS Community Edition allows you to import objects from text, rename objects, and remove one or all migration objects.

Import objects:

- In the right-side pane of the Specify Migration Scope section, click Import Objects in the upper-right corner.

- In the dialog box that appears, click OK.

Notice

This operation will overwrite previous selections. Proceed with caution.

- In the Import Objects dialog box, import the objects to be migrated. You can import CSV files to rename databases/tables. For more information, see Download and import the settings of migration objects.

- Click Validate.

- After the validation succeeds, click OK.

Rename objects: OMS Community Edition allows you to rename migration objects. For more information, see Rename a database table.

Remove one or all objects: OMS Community Edition allows you to remove a single object or all objects to be migrated to the target database during data mapping.

- To remove a single migration object: In the right-side pane of the Specify Migration Scope section, move the pointer over the target object and click Remove.

- To remove all migration objects: In the right-side pane of the Specify Migration Scope section, click Remove All in the upper-right corner. In the dialog box that appears, click OK.

Click Next. On the Migration Options page, configure the parameters.

Notice

The following parameters are displayed only if you have selected Full Migration on the Select Migration Type page.

| Parameter | Description |
|---|---|
| Concurrency Speed | Valid values: Stable, Normal, Fast, and Custom. The resources consumed by a full migration task depend on the migration performance. If you select Custom, you can set Read Concurrency, Write Concurrency, and JVM Memory as needed. |
| Handle Non-empty Tables in Target Database | Valid values: Ignore and Stop Migration. The behavior of each option is described after this table. |
| Computing Platform | Default value: Local, which indicates the local running mode. You can also run the task on the Spark computing platform in Spark local mode or Spark cluster mode. To add a computing platform, click Manage Computing Platform in the drop-down list. For more information, see Manage computing platforms. |
| Writing Method | Valid values: SQL (writes data to tables by using INSERT or REPLACE statements) and Direct Load (writes data through direct load). At present, you cannot write vector data by using direct load. |

Handle Non-empty Tables in Target Database:

- If you select Ignore, when the data to be inserted conflicts with the existing data of a target table, OMS Community Edition retains the existing data and records the conflicting data.

Notice

If you select Ignore, full verification pulls data in IN mode. In this case, OMS Community Edition cannot verify whether the target table contains more data than the source table, and verification efficiency decreases.

- If you select Stop Migration and a target table contains data, an error is returned during full migration, indicating that the migration is not allowed. In this case, you must clear the data in the target table before you can continue with the migration.

Notice

After an error is returned, if you click Resume in the dialog box, OMS Community Edition ignores this error and continues to migrate data. Proceed with caution.

To view or modify parameters of the full migration component Full-Import, click Configuration Details in the upper-right corner of the Full Migration section. For more information about the parameters, see Component parameters.

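The following sketch models the difference between the two options for Handle Non-empty Tables in Target Database. An in-memory SQLite database stands in for the target. This is a conceptual sketch and is not code from OMS Community Edition. SQLite replaces OceanBase Database Community Edition, and the two-column `users` schema and the `full_migrate` function are hypothetical. The sketch also assumes that a primary-key conflict is what counts as conflicting data.

```python
import sqlite3

VALID_MODES = ("ignore", "stop")


def full_migrate(rows, target: sqlite3.Connection, table: str, mode: str) -> list:
    """Copy rows into `table`, handling a non-empty target as `mode` says.

    Returns the source rows that conflicted with existing rows.
    `table` is interpolated into SQL, so it must be a trusted name.
    Assumes a hypothetical two-column (id, name) schema.
    """
    if mode not in VALID_MODES:
        raise ValueError(f"mode must be one of {VALID_MODES}")
    (existing,) = target.execute(f"SELECT COUNT(*) FROM {table}").fetchone()
    if mode == "stop" and existing > 0:
        # Stop Migration: refuse to write into a table that already has data.
        raise RuntimeError(f"target table {table} is not empty; clear it before migrating")
    conflicts = []
    for row in rows:
        try:
            target.execute(f"INSERT INTO {table} (id, name) VALUES (?, ?)", row)
        except sqlite3.IntegrityError:
            # Ignore: keep the existing row and record the conflicting source row.
            conflicts.append(row)
    target.commit()
    return conflicts


if __name__ == "__main__":
    db = sqlite3.connect(":memory:")
    db.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")  # hypothetical schema
    db.execute("INSERT INTO users VALUES (2, 'bob-existing')")
    db.commit()
    source = [(1, "alice"), (2, "bob"), (3, "carol")]
    print(full_migrate(source, db, "users", "ignore"))  # [(2, 'bob')]
    print(db.execute("SELECT name FROM users WHERE id = 2").fetchone())  # ('bob-existing',)
    try:
        full_migrate(source, db, "users", "stop")
    except RuntimeError as exc:
        print(exc)
```

In Ignore mode, the non-conflicting rows still land in the target, so the table ends up non-empty. This is one reason to review the recorded conflicts before rerunning a task in Stop Migration mode.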

Click Precheck to start a precheck on the data migration task.

During the Precheck stage, OMS Community Edition checks database network connectivity and other items as needed. A data migration task can be started only after it passes all check items. If an error is returned during the precheck, you can perform the following operations:

Identify and troubleshoot the issue and then perform the precheck again.

Click Skip in the Actions column of the failed precheck item. In the dialog box that describes the consequences of skipping the item, click OK.
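The precheck gate can be modeled in a few lines of Python. This hypothetical sketch covers the decision rule only. `CheckItem`, `can_start`, and `skip` are illustrative names. The sketch assumes that a skipped item no longer blocks the task, as the Skip action implies, and that Skip applies only to failed items.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    PASSED = "passed"
    FAILED = "failed"
    SKIPPED = "skipped"


@dataclass
class CheckItem:
    name: str
    status: Status


def skip(item: CheckItem) -> None:
    """Model of clicking Skip for a failed item and confirming with OK."""
    # Assumption: Skip is offered only for failed items, as the procedure describes.
    if item.status is not Status.FAILED:
        raise ValueError(f"{item.name!r} has not failed, so there is nothing to skip")
    item.status = Status.SKIPPED


def can_start(items: list[CheckItem]) -> bool:
    """The task can start only when no check item is left in the FAILED state."""
    return all(item.status in (Status.PASSED, Status.SKIPPED) for item in items)


if __name__ == "__main__":
    checks = [
        CheckItem("network connectivity", Status.PASSED),
        CheckItem("hypothetical check item", Status.FAILED),
    ]
    print(can_start(checks))  # False
    skip(checks[1])
    print(can_start(checks))  # True
```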

Click Start Task. If you do not need to start the task now, click Save to go to the details page of the task. You can start the task later as needed.

OMS Community Edition allows you to modify the migration objects when the data migration task is running. For more information, see View and modify migration objects. After the data migration task is started, it is executed based on the selected migration types. For more information, see the View migration details section in View details of a data migration task.