After you start a data migration task, you can view the basic information, progress, and status of the task on its details page.

Access the details page

Log in to the console of OceanBase Migration Service (OMS) Community Edition.

In the left-side navigation pane, click Data Migration.

On the Data Migration tab, click the name of the target task. On the details page that appears, view the basic information and migration details of the task.

On the Data Migration page, you can search for data migration tasks by tag, status, type, or keyword. A data migration task can be in one of the following states:

Not Started: The data migration task has not been started. You can click Start in the Actions column to start the task.

Running: The data migration task is in progress. You can view the data migration plan and current progress on the right.

Modifying: The migration objects in the migration task are being modified.

Integrating: The data migration task of the modified migration object is being integrated with the migration object modification task.

Paused: The data migration task is manually paused. You can click Resume in the Actions column to resume the task.

Failed: The data migration task has failed. You can view where the failure occurred on the right. To view the error message, click the task name to go to the task details page.

Completed: The data migration task is completed, and OMS Community Edition has migrated the specified data to the target database in the configured migration mode.

Releasing: The data migration task is being released. You cannot edit a data migration task in this state.

Released: The data migration task is released. After the task is released, OMS Community Edition terminates the current migration and incremental synchronization task.
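The status list above can be summarized as a small lookup table. The following Python sketch is illustrative only: `TaskStatus` and `list_action` are hypothetical names, not part of any OMS API, and the mapping records only the Actions-column buttons that this page explicitly mentions.

```python
from enum import Enum
from typing import Optional


class TaskStatus(Enum):
    """Task states listed on the Data Migration page (hypothetical enum)."""
    NOT_STARTED = "Not Started"
    RUNNING = "Running"
    MODIFYING = "Modifying"
    INTEGRATING = "Integrating"
    PAUSED = "Paused"
    FAILED = "Failed"
    COMPLETED = "Completed"
    RELEASING = "Releasing"
    RELEASED = "Released"


# Only the Actions-column buttons named in the status list are recorded here.
_LIST_ACTIONS = {
    TaskStatus.NOT_STARTED: "Start",
    TaskStatus.PAUSED: "Resume",
}


def list_action(status: TaskStatus) -> Optional[str]:
    """Return the Actions-column button this page mentions for a state, if any."""
    return _LIST_ACTIONS.get(status)


print(list_action(TaskStatus.PAUSED))   # Resume
print(list_action(TaskStatus.RUNNING))  # None
```

States without an entry simply return `None`, because the page does not name a list-level action for them.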

View basic information

The Basic Information section displays the basic information about a data migration task.

| Parameter | Description |
|---|---|
| ID | The unique ID of the data migration task. |
| Migration Type | The migration type selected when the data migration task was created. |
| Alert Level | The alert level of the data migration task. OMS Community Edition supports the following alert levels: No Protection, High Protection, Medium Protection, and Low Protection. For more information, see Alert settings. |
| Created By | The user who created the data migration task. |
| Created At | The time when the data migration task was created. |
| Concurrency for Full Migration | The value can be Smooth, Normal, or Fast. The amount of resources to be consumed by a full migration task depends on the migration performance. |
| Full Verification Concurrency | The value can be Smooth, Normal, or Fast. Resources consumed at the source and target databases depend on the specified concurrency. |
| Connection Details | Click Connection Details to view information about the connection between the source and target databases of the data migration task. |

You can perform the following operations:

View migration objects

Click View Objects in the upper-right corner to view the migration objects of the data migration task. You can also modify the migration objects of an ongoing data migration task. For more information, see View and modify migration objects.

View component monitoring metrics

Click View Component Monitoring in the upper-right corner to view the information about the Store, Incr-Sync, Full-Import, and Full-Verification components. You can perform the following operations on the components:

Start a component: Click Start in the Actions column of the component that you want to start. In the dialog box that appears, click OK.

Pause a component: Click Pause in the Actions column of the component that you want to pause. In the dialog box that appears, click OK.

Update a component: Click Update in the Actions column of the component that you want to update. On the Update Configuration page, modify the configurations and then click Update.

Notice

The system restarts after you update the component. Proceed with caution.

View logs: Click View Logs in the Actions column of the component. The View Logs page displays the latest logs. You can search for, download, and copy the logs.

View or modify parameter configurations

For a data migration task in the Running state, click the More icon in the upper-right corner and then select Settings from the drop-down list to view the parameters configured when the data migration task was created.

For a data migration task in the Not Started, Stopped, or Failed state, click the More icon in the upper-right corner and then select Modify Parameter Configurations from the drop-down list. In the Modify Parameter Configurations dialog box, modify the parameters and click OK.

The parameters that can be modified depend on the type of the data migration task and the stage of the task.

Download object settings

OMS Community Edition allows you to download the settings of data migration tasks and import task settings in batches. For more information, see Download and import the settings of migration objects.

Modify the alert level

OMS Community Edition allows you to modify the alert level of a data migration task. For more information, see Alert settings.

Change data sources

OMS Community Edition allows you to change a data source of a data migration task. However, you can use this feature only in the following scenarios. Otherwise, the data migration task will fail and cannot be recovered.

The data source has experienced a primary/standby switchover and you need to replace the IP address of the original primary database with that of the new primary database.

The IP address or port number of the data source is changed, but the data source remains unchanged.

The username or password for logging in to the data source is changed.

Perform the following steps to change the data source:

Go to the details page of a data migration task.

Click More in the upper-right corner and select Modify Data Source.

In the Modify Data Source dialog box, select the new source or target database as needed.

Notice

The type of the new data source must be the same as that of the current data source.

Click OK.

View migration details

The Migration Details section displays the status, progress, start time, end time, and total duration of all subtasks.

Schema migration

The definitions of data objects, such as tables, indexes, constraints, comments, and views, are migrated from the source database to the target database. Temporary tables are automatically filtered out. If the source database is not OceanBase Database Community Edition, OMS Community Edition converts and constructs the SQL statements based on the syntax definition and standard of the target tenant type of OceanBase Database, and then replicates the definitions to the target database.

When you advance to the forward switchover step in a data migration task, OMS Community Edition will automatically drop the hidden columns and unique indexes based on the type of the data migration task.

You can view the overall status, start time, end time, total amount of time consumed, and database, table, and view migration progress for a schema migration task on the Schema Migration page. When you migrate data between tenants of OceanBase Database Community Edition, you can view the migration progress of stored procedures and functions. You can also perform the following operations on an object:

View Creation Syntax: On the Database or Table tab, you can click View next to the target object to view the creation syntax of a database, table, or index.

Compatible DDL syntax executed on the OBServer node is displayed. Incompatible syntax is converted before it is displayed.

Modify Creation Syntax and Try Again: You can view the error information, check and modify the definition of the conversion result of a failed DDL statement, and then migrate the data to the target database again.

Retry/Retry All Failed Objects: You can retry failed schema migration tasks one by one or retry all failed tasks at a time.

Export All Schemas: You can export the schema information of all objects and save it to your local storage.

Skip/Batch Skip: You can skip failed schema migration tasks one by one or skip multiple failed tasks at a time. To skip multiple objects at a time, click Batch Skip in the upper-right corner. If you skip an object, its index is also skipped.

Remove/Batch Remove: You can remove failed schema migration tasks one by one or remove multiple failed tasks at a time. To remove multiple failed tasks at a time, click Batch Remove in the upper-right corner. If you remove an object, its index is also removed.

View Details: You can view the DDL statements executed on the OBServer node and the execution error information of a failed schema migration task.

Full migration

Full migration aims to migrate existing data from tables in the source database to corresponding tables in the target database. On the Full Migration page, you can filter objects by source and target databases, or select View Objects with Errors to filter for objects that hinder the overall migration progress. You can also view related information on the Table Objects, Table Indexes, and Full Migration Performance tabs. The status of a full migration task changes to Completed only after the table objects and table indexes are migrated.

Notice

When you use OMS Community Edition to migrate data from a Hive database to OceanBase Database Community Edition, the full migration performance metrics such as the requests per second (RPS) and migration traffic are not provided.

On the Table Objects tab, you can view the names, source and target databases, estimated data volume, migrated data volume, and status of tables.

On the Table Indexes tab, you can view the table objects, source and target databases, creation time, end time, amount of time consumed, and status of indexes. You can also view the index creation syntax and remove unwanted indexes.

On the Full Migration Performance tab, you can view the graphs of performance data such as the RPS and migration traffic of the source and target databases, average read time and average sharding time of the source database, average write time of the target database, and performance benchmarks. Such information can help you identify performance issues in a timely manner.

You can combine full migration with incremental synchronization to ensure data consistency between the source and target databases. If any objects fail to be migrated during full migration, the causes of the failure are displayed.

Notice

If you do not select Schema Migration for Migration Type, OMS Community Edition migrates the columns in the source database that match those in the target database during full migration, without checking whether the schemas are consistent.

After full migration is completed and the subsequent step is started, you cannot choose OPS & Monitoring > Component > Full-Verification and click Rerun in the Actions column to rerun the target Full-Verification component.

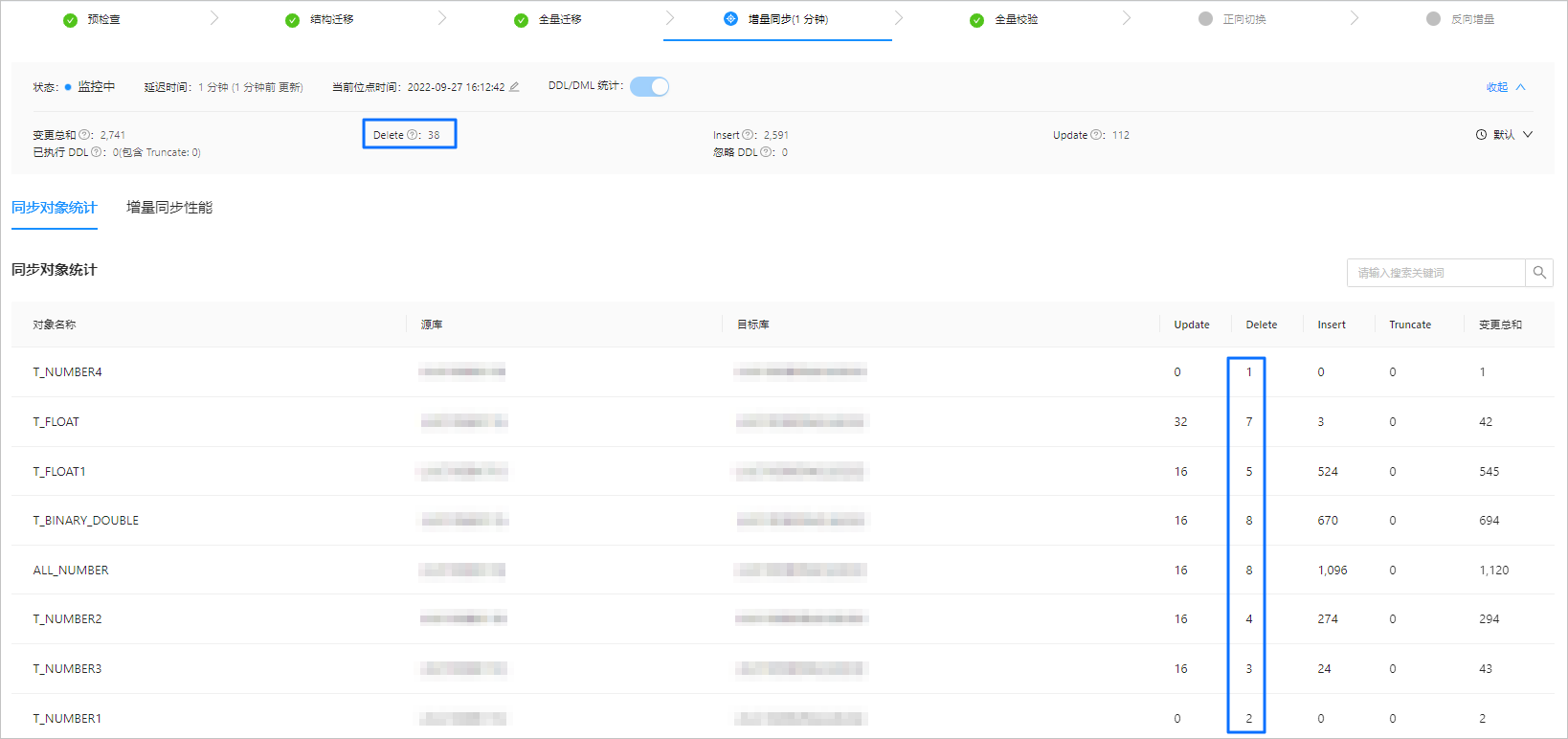

Incremental synchronization

After incremental synchronization starts, data that has been changed (added, modified, or deleted) in the source database is synchronized to the corresponding tables in the target database. When services continuously write data to the source database, OMS Community Edition starts the incremental data pull module to pull incremental data from the source instance, parses and encapsulates the incremental data, and then stores the data. After that, OMS Community Edition starts the full data migration.

After the full data migration task is completed, OMS Community Edition starts the incremental data replay module to pull incremental data from the incremental data pull module. The incremental data is synchronized to the target instance after being filtered, mapped, and converted. If an Incr-Sync exception occurs after you execute a DDL statement in the source database and the data migration task fails, a page appears, displaying the DDL statement that causes the task failure and the Skip button. You can click Skip and confirm your operation.

Notice

This operation may lead to data structure inconsistency between the source and target databases. Proceed with caution.

For a data migration task in the Running state, you can view its latency, current timestamp, and incremental synchronization performance in the incremental synchronization section. The latency is displayed in the following format: X seconds (updated Y seconds ago). Normally, Y is less than 20.

For a data migration task in the Stopped or Failed state, you can enable DDL/DML statistics collection to collect statistics on database operations performed after this feature is enabled. You can also view the specific information about incremental synchronization objects and the incremental synchronization performance.

The Synchronization Object Statistics tab displays the statistics about table-level DML statements executed for each incremental synchronization object in the current task. The numbers displayed in the Change Sum, Delete, Insert, and Update fields in the section above the Synchronization Object Statistics tab are the sums of the corresponding columns on this tab.

The Incremental Synchronization Performance tab displays the following content:

Latency: the latency in synchronizing incremental data from the source database to the target database, in seconds.

Migration traffic: the throughput of data synchronized from the source database to the target database, in KB/s.

Average execution time: the average execution time of an SQL statement, in ms.

Average commit time: the average commit time of a transaction, in ms.

RPS: the number of rows written to the target per second.
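The relationship between the summary fields and the Synchronization Object Statistics tab described above can be illustrated with a short Python sketch. The column keys, the `header_totals` helper, and the sample rows are all made up for illustration. The sketch only shows that each summary field is the column-wise sum of the per-table values.

```python
from typing import Any, Dict, Iterable, Mapping

# Hypothetical keys for the four summary fields named on this page.
COLUMNS = ("change_sum", "delete", "insert", "update")


def header_totals(rows: Iterable[Mapping[str, Any]]) -> Dict[str, int]:
    """Sum each statistics column over all synchronization objects."""
    totals = {column: 0 for column in COLUMNS}
    for row in rows:
        for column in COLUMNS:
            totals[column] += row[column]
    return totals


# Made-up sample rows, one per synchronized table.
sample_rows = [
    {"table": "orders", "change_sum": 155, "delete": 5, "insert": 120, "update": 30},
    {"table": "users", "change_sum": 42, "delete": 2, "insert": 10, "update": 30},
]

print(header_totals(sample_rows))
```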

When you create a data migration task, we recommend that you specify related information such as the alert level and alert frequency, to help you understand the task status. OMS Community Edition provides low-level protection by default. You can modify the alert level based on your business requirements. For more information, see Alert settings.

When the incremental synchronization latency exceeds the specified alert threshold, the incremental synchronization status stays at Running and the system does not trigger any alerts.

When the incremental synchronization latency is less than or equal to the specified alert threshold, the incremental synchronization status changes from Running to Monitoring. After the status changes to Monitoring, it does not change back to Running even if the latency later exceeds the alert threshold.
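The one-way status transition described above can be sketched as a small function. This is a minimal illustration, not OMS code: `next_sync_status` is a hypothetical name, and the threshold and latency values in the example are made up.

```python
def next_sync_status(current: str, latency_s: float, threshold_s: float) -> str:
    """Return the incremental synchronization status after a latency sample.

    The status moves from Running to Monitoring the first time the latency is
    at or below the alert threshold, and never moves back to Running.
    """
    if current == "Monitoring":
        return "Monitoring"
    if latency_s <= threshold_s:
        return "Monitoring"
    return "Running"


status = "Running"
history = []
for latency in [120, 45, 8, 60]:  # made-up latency samples, in seconds
    status = next_sync_status(status, latency, threshold_s=10)
    history.append(status)

print(history)  # ['Running', 'Running', 'Monitoring', 'Monitoring']
```

The last sample (60 seconds) exceeds the threshold again, but the status stays at Monitoring.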

Full verification

After the full data migration and incremental data migration are completed, OMS Community Edition automatically initiates a full verification task to verify the data tables in the source and target databases.

Notice

If you do not select Schema Migration for Migration Type, OMS Community Edition verifies the columns in the source database that match those in the target database during full verification, without checking whether the schemas are consistent.

During a full verification task, if a CREATE, DROP, ALTER, or RENAME operation is performed on a source table, the task may exit.

You can also initiate custom data verification tasks during incremental synchronization. On the Full Verification page, you can view the overall status, start time, end time, total amount of time consumed, estimated total number of rows, number of migrated rows, real-time traffic, and RPS of the full verification task.

The Full Verification page contains the Verified Objects and Verification Performance tabs.

On the Verified Objects tab, you can view the verification progress and verification object list.

You can view the names, source and target databases, full verification progress and results, and result summary of all migration objects.

You can filter migration objects by source or target database.

You can select View Completed Objects Only to view the basic information of objects that have completed schema migration, such as the object names.

You can choose Reverify > Restart Full Verification to run full verification again for all migration objects.

Take note of the following items for tables with inconsistent verification results:

If you need to reverify all data in the tables, choose Reverify > Reverify Abnormal Table.

If you need to reverify only inconsistent data, choose Reverify > Verify Only Inconsistent Records.

Notice

Correction operations are not supported if the source database has no corresponding data.

On the Full Verification Performance tab, you can view the graphs of performance data such as the RPS and verification traffic of the source and target databases and performance benchmarks. Such information can help you identify performance issues in a timely manner.

OMS Community Edition allows you to skip full verification for a task that is being verified or has failed verification. On the Full Verification page, click Skip Full Verification in the upper-right corner. In the dialog box that appears, click OK.

Notice

If you skip full verification, you cannot resume the verification task for data comparison and correction. You can only clone the current task to initiate full verification again. Therefore, proceed with caution.

After the full verification is completed, you can click Go To Next Stage to start a forward switchover. After you enter the switchover process, you cannot recheck the current verification task to compare or correct data.

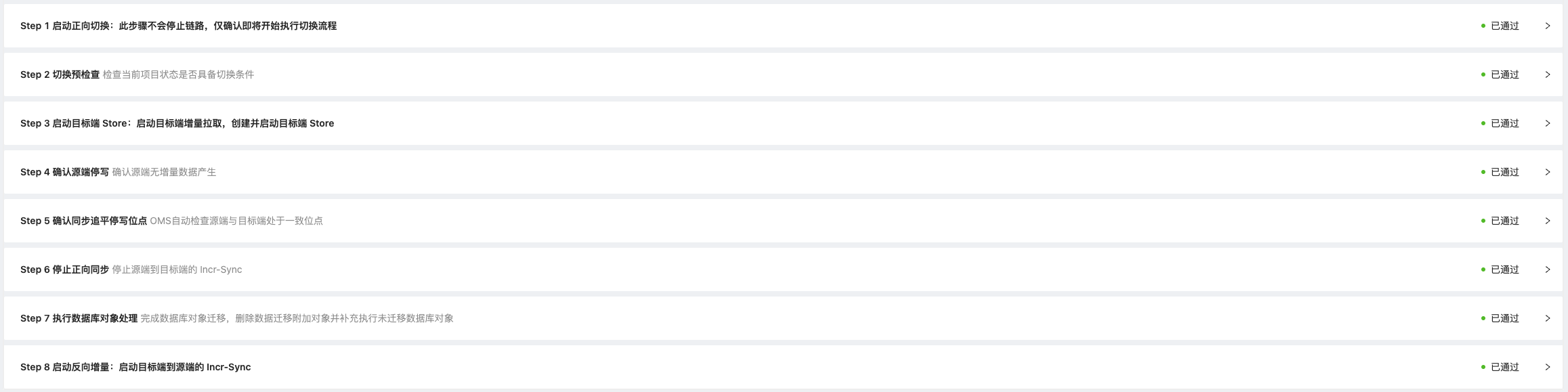

Forward switchover

Notice

When you execute a data migration task in an active-active disaster recovery scenario, forward switchover is not supported.

Forward switchover is an abstract and standard process of conventional system cutover and does not involve the switchover of application connections. This process includes a series of tasks that are performed by OMS Community Edition for application switchover in a data migration task. You must make sure that the entire forward switchover process is completed before the application connections are switched over to the target database.

Forward switchover is required for data migration. OMS Community Edition ensures the completion of forward data migration in this process, and you can start the reverse incremental synchronization component based on your business needs. The forward switchover process involves the following operations:

You must make sure that data migration is completed and wait until forward synchronization is completed.

OMS Community Edition automatically supplements CHECK constraints, FOREIGN KEY constraints, and other objects that are ignored in the schema migration stage.

OMS Community Edition automatically drops the additional hidden columns and unique indexes that the migration depends on.

This operation is required only when you migrate data between tenants of OceanBase Database Community Edition. For more information, see Hidden column mechanisms.

You must migrate triggers, functions, and stored procedures in the source database that are not supported by OMS Community Edition to the target database.

You must disable triggers and FOREIGN KEY constraints in the source database. This operation is required only when the data migration task involves reverse incremental synchronization.

The forward switchover process contains the following steps:

Start forward switchover.

In this step, you can start forward switchover, but no operation is performed in the background. After you confirm that data migration is completed, you can click Start Forward Switchover to start the forward switchover process for business cutover.

If you want to complete forward switchover with a few clicks, you can select Fast Switching to skip the manual confirmation step. After you select this option, OMS Community Edition no longer requires manual confirmation. Proceed with caution.

Notice

Before you start forward switchover, make sure that data writing has stopped in the source database.

Perform a switchover precheck.

In this step, OMS checks the following items:

Synchronization latency between the source and target databases. If the synchronization latency is within 15 seconds, this check item is passed.

Write privilege of the account in the source database. If the data migration task involves reverse incremental synchronization, OMS additionally checks whether the account configured in the source database has the privilege to write data, to ensure that data can be properly written during reverse incremental synchronization.

Privilege of the account in the target database for incremental data read. If the data migration task involves reverse incremental synchronization, OMS additionally checks whether the account configured in the target database has the privilege to read data. This ensures that incremental data can be properly read from the target database during reverse incremental synchronization.

Incremental logs in the target database. If the data migration task involves reverse incremental synchronization, OMS additionally checks whether the incremental logging configuration in the target database meets the log extraction requirements of reverse incremental synchronization.

If the switchover precheck is passed, OMS Community Edition automatically performs the next step. If the switchover precheck fails, OMS Community Edition provides two options: Retry and Skip.

Notice

If you click Skip, data loss may occur in the target database, or the reverse incremental synchronization process may fail. Proceed with caution.

Start the target store.

Note

This step is available only when the data migration task involves reverse incremental synchronization.

If the precheck for forward switchover is passed, OMS Community Edition automatically starts incremental log pulling for the target database. This way, OMS Community Edition obtains DML and DDL operations in the target database and parses and saves related log data to prepare for reverse incremental synchronization. This step takes 3 to 5 minutes.

Confirm that data writing has stopped in the source database.

In this step, OMS checks whether business data is still being written to the source database. After you confirm that no new data is being written to the source database, click OK to go to the next step.

Confirm the data writing stop timestamp upon synchronization completion.

In this step, OMS checks whether the target database is synchronized to the data writing stop timestamp in the source database. If not, OMS Community Edition continues to check the target database until it is synchronized to the timestamp. This way, OMS Community Edition makes sure that all data in the target database is updated.

Stop forward synchronization.

In this step, you can stop forward synchronization. After forward synchronization is stopped, any database changes in the source database will no longer be synchronized to the target database. If the service fails to be stopped, OMS Community Edition provides two options: Retry and Skip.

Notice

You can click Skip only after forward synchronization is completed in the background. Otherwise, data in the source database may be unexpectedly written to the target database. Proceed with caution.

Process database objects.

In this step, you can process the objects that are ignored in data migration or not supported by OMS Community Edition. This ensures normal operations of your business after the switchover to the target database.

Migrate database objects to the target database: You must migrate triggers, functions, and stored procedures in the source database that are not supported by OMS Community Edition to the target database. After you complete the migration, click Mark as Complete.

Disable triggers and FOREIGN KEY constraints in the source database: This operation is required only when the data migration task involves reverse incremental synchronization. It prevents data from being affected by triggers or FOREIGN KEY constraints, to avoid failures of reverse incremental synchronization. After you complete this operation, click Mark as Complete.

Supplement the objects ignored in schema migration to the target database: OMS Community Edition automatically supplements the objects that are ignored in schema migration to the target database, such as check constraints and FOREIGN KEY constraints. The preceding objects are migrated during schema migration by default.

Drop hidden columns and unique indexes added by OMS Community Edition: This operation is required only when you migrate data between tenants of OceanBase Database Community Edition. OMS Community Edition automatically drops the hidden columns and unique indexes that are added to the target database to ensure data consistency. This operation runs automatically, and the amount of time required depends on the amount of data in the target database. OMS Community Edition provides the Skip option for this operation. If you choose to skip this operation, you need to manually perform the drop operation. Proceed with caution. For more information, see Hidden column mechanisms.

Start reverse incremental synchronization.

Note

This step is available only when the data migration task involves reverse incremental synchronization.

In this step, you can start incremental synchronization for the target database to synchronize incremental DML or DDL operations from the target database to the source database in real time. The configuration of incremental synchronization is the same as that specified when the task was created. For more information, see Incremental synchronization of DDL operations.
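The step sequence above, including the two steps that appear only when the task involves reverse incremental synchronization, can be sketched as follows. The function names and the constant are hypothetical and exist only for illustration. The 15-second bound comes from the latency check item in the switchover precheck.

```python
from typing import List

# Latency bound from the switchover precheck: "within 15 seconds" passes.
PRECHECK_MAX_LATENCY_S = 15


def forward_switchover_steps(reverse_incr_sync: bool) -> List[str]:
    """List the forward switchover steps in order for a given task setup."""
    steps = [
        "Start forward switchover",
        "Perform a switchover precheck",
    ]
    if reverse_incr_sync:
        # Only available when the task involves reverse incremental synchronization.
        steps.append("Start the target store")
    steps += [
        "Confirm that data writing has stopped in the source database",
        "Confirm the data writing stop timestamp upon synchronization completion",
        "Stop forward synchronization",
        "Process database objects",
    ]
    if reverse_incr_sync:
        steps.append("Start reverse incremental synchronization")
    return steps


def latency_check_passes(latency_s: float) -> bool:
    """Precheck item: synchronization latency must be within 15 seconds."""
    return latency_s <= PRECHECK_MAX_LATENCY_S


for number, step in enumerate(forward_switchover_steps(reverse_incr_sync=True), 1):
    print(f"{number}. {step}")
```

With `reverse_incr_sync=False` the list has six steps. With `reverse_incr_sync=True` it has eight.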

Reverse incremental synchronization

Notice

When you execute a data migration task in an active-active disaster recovery scenario, OMS Community Edition automatically starts reverse incremental synchronization before full verification based on the settings of incremental synchronization.

For a data migration task in the Running state, you can view its latency, current timestamp, and performance of reverse incremental synchronization in the Reverse Incremental Migration section. The latency is displayed in the following format: X seconds (updated Y seconds ago). Normally, Y is less than 20.

For a data migration task in the Stopped or Failed state, you can enable DDL/DML statistics collection to collect statistics on database operations performed after this feature is enabled. You can also view the specific information about the objects and performance of reverse incremental synchronization.

The Synchronization Object Statistics tab displays the statistics about table-level DML statements executed for each reverse incremental synchronization object in the current task. The numbers displayed in the Change Sum, Delete, Insert, and Update fields in the section above the Synchronization Object Statistics tab are the sums of the corresponding columns on this tab.

The Reverse Incremental Migration Performance tab displays the following content:

Latency: the latency in synchronizing incremental data from the target database to the source database, in seconds.

Migration traffic: the traffic throughput of incremental data synchronization from the target database to the source database, in Kbit/s.

Average execution time: the average execution time of an SQL statement, in ms.

Average commit time: the average commit time of a transaction, in ms.

RPS: the number of rows written to the target per second.
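The latency text described in this section, "X seconds (updated Y seconds ago)", can be parsed into its two numbers for a quick freshness check. The following Python sketch assumes that the text matches that format exactly and that X and Y are whole numbers. `Latency`, `parse_latency`, and `is_stale` are hypothetical helpers, not part of OMS.

```python
import re
from typing import NamedTuple


class Latency(NamedTuple):
    latency_s: int  # X: reported synchronization latency, in seconds
    age_s: int      # Y: seconds since the latency value was last updated


# Assumed wording, based on the format described on this page.
_PATTERN = re.compile(
    r"^\s*(\d+)\s+seconds?\s+\(updated\s+(\d+)\s+seconds?\s+ago\)\s*$"
)


def parse_latency(text: str) -> Latency:
    """Extract X and Y from 'X seconds (updated Y seconds ago)'."""
    match = _PATTERN.match(text)
    if match is None:
        raise ValueError(f"unrecognized latency text: {text!r}")
    return Latency(int(match.group(1)), int(match.group(2)))


def is_stale(latency: Latency, max_age_s: int = 20) -> bool:
    """True when Y is not below the 'normally less than 20' bound."""
    return latency.age_s >= max_age_s


sample = parse_latency("3 seconds (updated 5 seconds ago)")
print(sample.latency_s, sample.age_s, is_stale(sample))  # 3 5 False
```

A value of Y at or above 20 suggests that the displayed latency has not been refreshed recently and may not reflect the current state.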