As a distributed database, OceanBase Database distributes data across multiple OBServer nodes. To hide this complexity from applications (APPs) and make accessing OceanBase Database as simple as accessing a standalone database, the architecture introduces OceanBase Database Proxy (ODP), also known as OBProxy. ODP is a dedicated reverse proxy service that handles SQL statement routing and disaster recovery for OceanBase Database.

As a core component on the data link, ODP has multiple deployment forms:

- OBServer-side deployment: ODP and OBServer are deployed on the same server, which may result in resource competition and difficulty in troubleshooting under heavy load. This deployment form is commonly used in private cloud scenarios.
- Independent deployment: ODP is deployed as an independent cluster, which reduces the mutual impact between ODP and OBServer. This deployment form is commonly used in public cloud scenarios.
- Client-side deployment: Another container running ODP is deployed next to the AppServer container. ODP and AppServer are located in the same pod but support independent upgrade, release, and maintenance. This deployment form has high requirements for the control plane and is less commonly used.

For the first two deployment forms, AppServer reaches ODP through a load balancer, which provides high availability and load balancing for ODP. For example, an F5 load balancer distributes user requests across multiple ODP instances.
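The load balancer's role can be illustrated with a minimal sketch. The Python below is not F5 or ODP code: the `OdpEndpoint` and `RoundRobinBalancer` names, the `odp-1` style addresses, and the `healthy` flag are all hypothetical, and round-robin is only one assumed distribution policy. It shows how requests might be spread across several ODP instances while skipping any instance that is marked unavailable.

```python
from dataclasses import dataclass
from itertools import count


@dataclass
class OdpEndpoint:
    """Hypothetical description of one ODP instance behind the load balancer."""
    address: str
    healthy: bool = True


class RoundRobinBalancer:
    """Toy round-robin balancer that skips unhealthy ODP instances."""

    def __init__(self, endpoints):
        self.endpoints = list(endpoints)
        self._counter = count()

    def pick(self):
        # Try each endpoint at most once per call, starting from the next slot.
        for _ in range(len(self.endpoints)):
            candidate = self.endpoints[next(self._counter) % len(self.endpoints)]
            if candidate.healthy:
                return candidate
        raise RuntimeError("no healthy ODP instance available")


# Placeholder addresses; a real deployment would use actual host:port pairs.
odps = [OdpEndpoint("odp-1"), OdpEndpoint("odp-2"), OdpEndpoint("odp-3")]
balancer = RoundRobinBalancer(odps)
odps[1].healthy = False  # simulate one ODP instance going down
picked = [balancer.pick().address for _ in range(4)]
```

In this sketch the failed instance is simply skipped, so AppServer keeps reaching a working ODP without any change on the application side.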

In terms of product form, ODP is available not only as a proxy but also as an SDK. The SDK is integrated into the business code as a library. Compared with the proxy form, the SDK form removes one hop from the access link, which yields better performance and a shorter troubleshooting path. However, the SDK form also has disadvantages: because it is embedded in the application, the SDK and the business code can affect each other, and upgrading the ODP SDK requires restarting the business application.

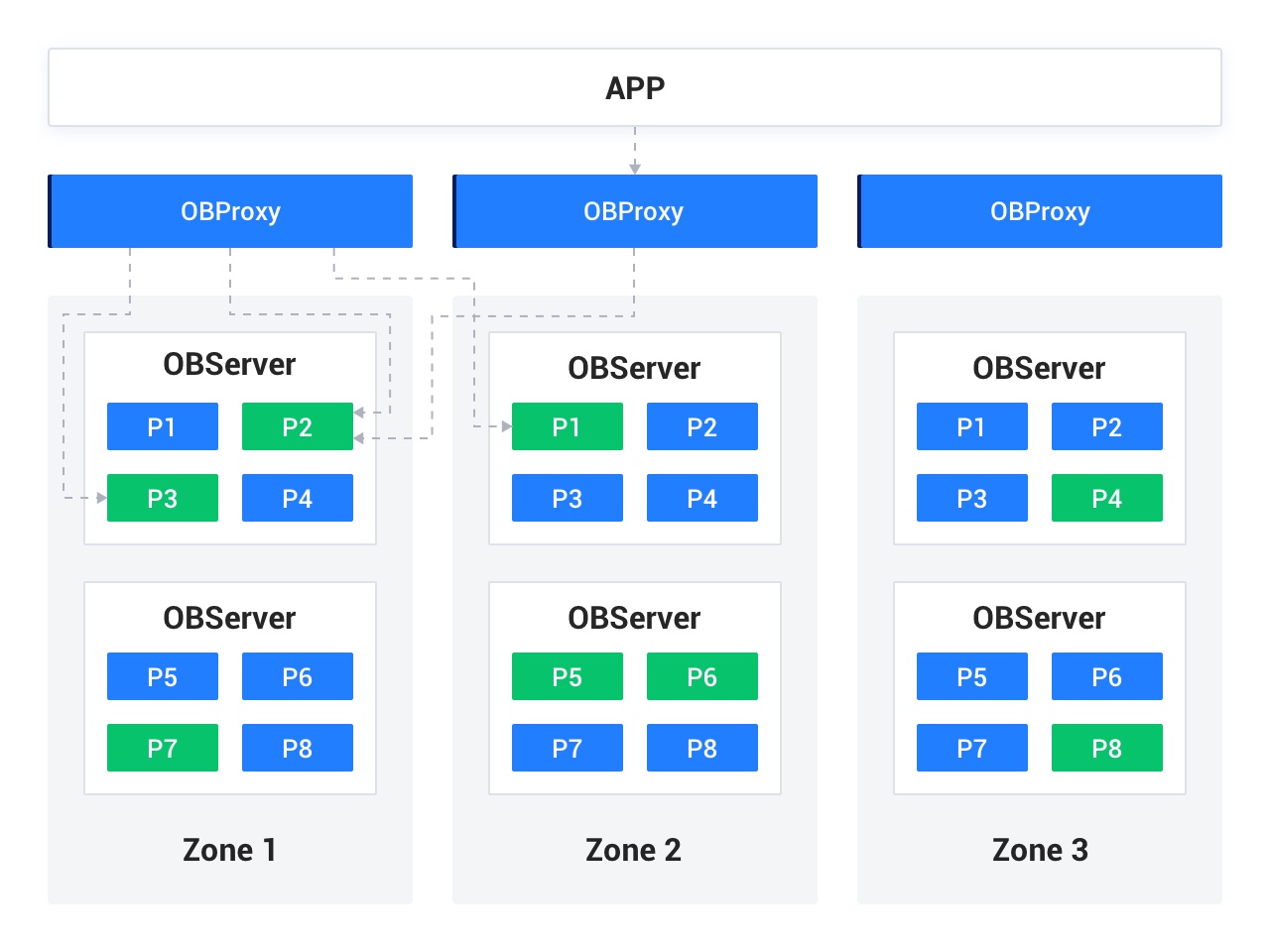

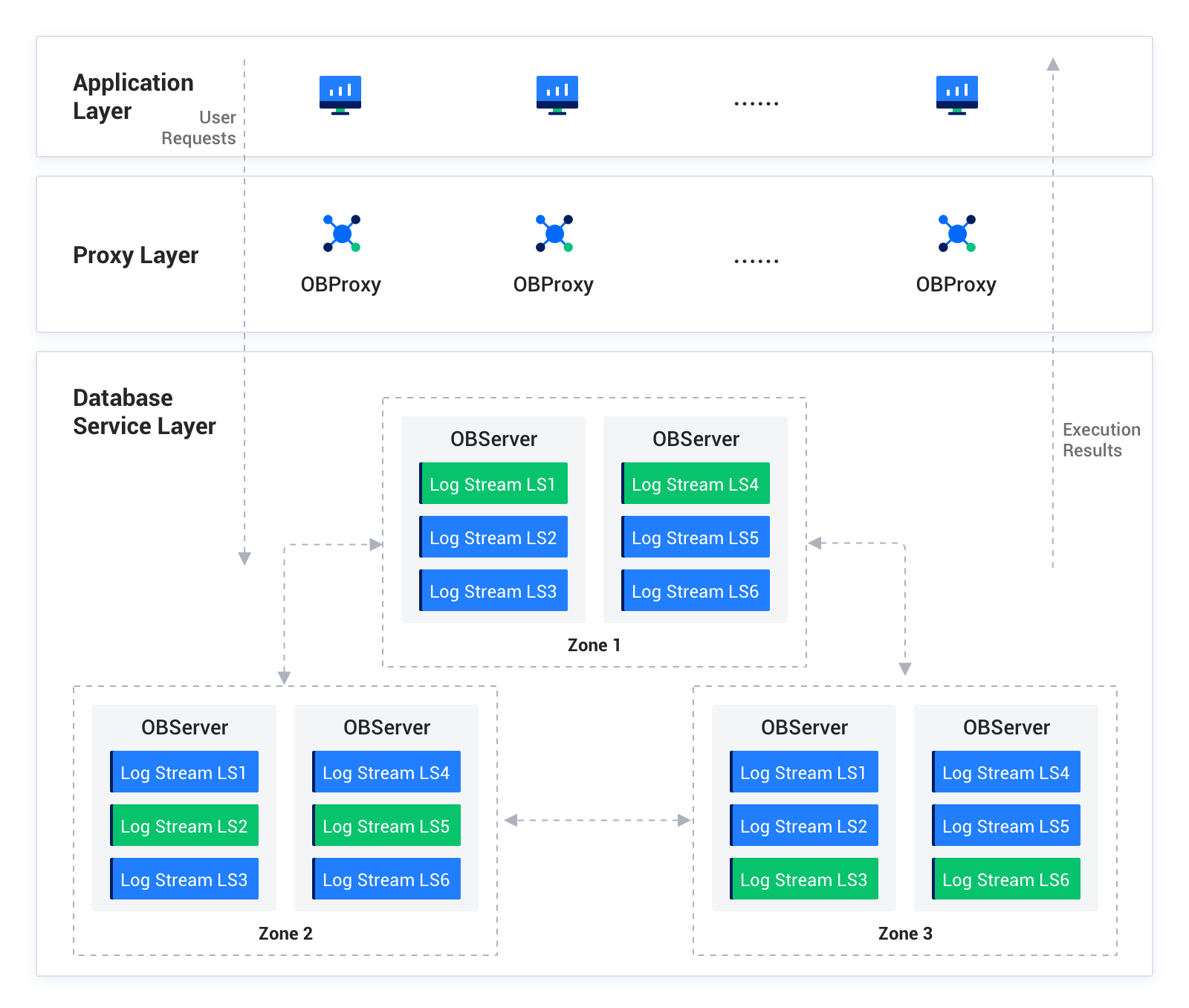

After ODP is introduced, the OceanBase Database data link is as follows: AppServer <-> ODP <-> OBServer. AppServer sends user requests to ODP through the database driver. Because OceanBase Database is distributed, user data is spread across multiple OBServer nodes as multiple log streams and replicas. ODP forwards each request to the most suitable OBServer node for execution and returns the execution result to the user. Every OBServer node is also capable of route forwarding: if a node detects that it cannot execute a request itself, it forwards the request to the correct OBServer node. This remote execution adds to the response time (RT) of the request.

As the reverse proxy service for OceanBase Database, ODP provides route selection and disaster recovery between the user side and the database side, so that applications do not need to be aware of the distributed nature of the database. Each OBServer node has a complete SQL engine, storage engine, and distributed transaction engine. It is responsible for parsing SQL statements, generating and executing physical execution plans, and committing transactions based on the Paxos protocol. OBServer provides high-performance, high-availability, and high-scalability database services. ODP and OBServer are loosely coupled, so ODP may occasionally select the wrong route when its routing information has not yet been updated. In that case, OBServer forwards the request internally and, when returning the result, notifies ODP to update its routing information.
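The interplay between stale routing information, internal forwarding, and the routing update can be sketched as a small simulation. This is a simplified model under stated assumptions, not ODP or OBServer code: the `Proxy` and `OBServerNode` classes, the `route_hint` field, and the use of a "partition" name as the routing key are hypothetical, since the text above only says that OBServer forwards the request and notifies ODP to update its routing information.

```python
class OBServerNode:
    """Toy OBServer node; `cluster` maps partition -> owning node name (assumed ground truth)."""

    def __init__(self, name, cluster):
        self.name = name
        self.cluster = cluster

    def execute(self, partition, nodes):
        owner = self.cluster[partition]
        if owner == self.name:
            # Local execution: no extra hop, no routing feedback needed.
            return {"executed_on": self.name, "extra_hops": 0, "route_hint": None}
        # Misrouted: forward internally, then report where the data really lives.
        result = nodes[owner].execute(partition, nodes)
        result["extra_hops"] += 1
        result["route_hint"] = owner
        return result


class Proxy:
    """Toy ODP holding a routing cache that may be stale."""

    def __init__(self, nodes, cache):
        self.nodes = nodes
        self.cache = dict(cache)

    def route(self, partition):
        target = self.cache[partition]
        result = self.nodes[target].execute(partition, self.nodes)
        if result["route_hint"] is not None:
            # Feedback from the node: refresh the cached route.
            self.cache[partition] = result["route_hint"]
        return result


cluster = {"p0": "observer-1", "p1": "observer-2"}
nodes = {name: OBServerNode(name, cluster) for name in ("observer-1", "observer-2")}
proxy = Proxy(nodes, cache={"p0": "observer-1", "p1": "observer-1"})  # p1 entry is stale

first = proxy.route("p1")   # misrouted: one extra hop, cache gets corrected
second = proxy.route("p1")  # routed directly to the owning node
```

In this model the first request still succeeds but pays for one extra hop, which corresponds to the RT impact of remote execution; the second request goes straight to the right node because the cache was refreshed.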

As described above, AppServer sends user requests to ODP, and ODP forwards the requests to active OBServer nodes. The execution results are returned to AppServer through the reverse path. Different components on the entire data link achieve high availability in different ways.

References

For more information about the data link, see Overview.