Basic concepts

Cluster

An OceanBase cluster consists of one or more regions, a region consists of one or more zones, and a zone consists of one or more OBServer nodes. Each OBServer node can have multiple units, each unit can hold the replicas of multiple log streams, and each log stream can contain multiple tablets.
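The containment hierarchy described above can be sketched as nested data classes. This is only an illustrative model: the class and field names, and the helper `count_tablet_replicas`, are assumptions made for this example and are not OceanBase APIs.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class LogStreamReplica:
    ls_id: int
    tablet_ids: List[int] = field(default_factory=list)  # tablets in this log stream


@dataclass
class Unit:
    tenant: str
    ls_replicas: List[LogStreamReplica] = field(default_factory=list)


@dataclass
class OBServerNode:
    ip: str
    port: int
    units: List[Unit] = field(default_factory=list)


@dataclass
class Zone:
    name: str
    nodes: List[OBServerNode] = field(default_factory=list)


@dataclass
class Region:
    name: str
    zones: List[Zone] = field(default_factory=list)


@dataclass
class Cluster:
    regions: List[Region] = field(default_factory=list)


def count_tablet_replicas(cluster: Cluster) -> int:
    """Walk the hierarchy top-down and count tablets held by all log stream replicas."""
    return sum(
        len(replica.tablet_ids)
        for region in cluster.regions
        for zone in region.zones
        for node in zone.nodes
        for unit in node.units
        for replica in unit.ls_replicas
    )
```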

Region

A region refers to a city or a geographic area. If an OceanBase cluster spans two or more regions, the geo-disaster recovery capability is implemented for the data and services of the OceanBase cluster. If an OceanBase cluster spans only one region, the data and services of the OceanBase cluster are affected when a city-wide failure occurs.

Zone

In OceanBase Database, a zone is a logical concept that usually corresponds to an IDC or a physical region. Generally, a zone contains multiple storage nodes that are physically distributed in different IDCs, racks, or servers. A zone can contain multiple IDCs, but an IDC belongs to only one zone.

In OceanBase Database, zones are used to implement data redundancy across IDCs. OceanBase Database distributes data across different zones based on specific rules to implement data redundancy. When one zone fails, the system automatically switches to the standby zone to ensure data availability.

In addition to data redundancy, OceanBase Database also uses zones as containers for data shards. Data sharding is a technology that divides data into multiple shards and stores the shards on different nodes. This improves the system throughput and performance. In OceanBase Database, different zones serve as the primary zones of different data shards to implement distributed data storage and processing.

OBServer

An OBServer node is a server that runs the observer process. You can deploy one or more OBServer nodes on a physical server, although generally only one OBServer node is deployed per physical server. In OceanBase Database, each OBServer node is uniquely identified by its IP address and service port.

Unit

A unit is the resource container of a tenant on an OBServer node. It represents the resources, such as CPU and memory, that are available to the tenant on that OBServer node. A tenant can have only one unit on an OBServer node.

Partition

A partition is a logical object created by a user and a mechanism used to divide and manage table data. You can perform multiple partition management operations, such as create, drop, truncate, split, merge, and exchange.

Tablet

OceanBase Database V4.0.0 introduced the concept of tablet to represent actual data storage objects. A tablet stores data, can be transferred between servers, and is the smallest unit in data balancing. Tablets and partitions are in a one-to-one correspondence: one tablet is created for a single-partition table, and one tablet is created for each partition of a multi-partition table. For an index table, whether the index is local or global, each partition also corresponds to one tablet. In particular, the tablets of a local index are bound to the corresponding tablets of the primary table so that they are stored on the same server.
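As a rough illustration of the one-to-one correspondence between partitions and tablets, the sketch below counts the tablets that a table and its indexes would need. The function name and parameters are hypothetical. It assumes that a local index has the same number of partitions as its primary table, and that each global index is partitioned independently.

```python
from typing import Sequence


def tablet_count(partitions: int = 1,
                 local_indexes: int = 0,
                 global_index_partitions: Sequence[int] = ()) -> int:
    """Estimate the number of tablets for one table and its indexes.

    partitions: partitions of the primary table (1 for a single-partition table).
    local_indexes: number of local indexes (assumed to share the table's partitioning).
    global_index_partitions: partition count of each global index.
    """
    if partitions < 1 or local_indexes < 0:
        raise ValueError("partitions must be >= 1 and local_indexes >= 0")
    primary_tablets = partitions                  # one tablet per partition
    local_tablets = local_indexes * partitions    # one tablet per local index partition
    global_tablets = sum(global_index_partitions) # one tablet per global index partition
    return primary_tablets + local_tablets + global_tablets
```

For example, under these assumptions a table with 4 partitions, 2 local indexes, and one global index with 3 partitions would need 4 + 8 + 3 tablets.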

Log stream

A log stream (LS) is an entity that is automatically created and managed by OceanBase Database. It is a collection of data and contains several tablets and ordered redo logs. It uses the Paxos protocol to synchronize logs between replicas to ensure data consistency between the replicas and thereby implement high availability of data. Log stream replicas can be migrated and replicated between servers for server management and system disaster recovery.

From the perspective of data storage, a log stream can be abstracted as a tablet container that supports the addition and management of tablet data and the transfer of tablets between log streams for data balancing and horizontal scale-out.

From the perspective of transactions, a log stream is a unit for committing transactions. If the modification in a transaction is completed within a single log stream, the transaction can be committed by using the one-phase atomic commit logic. If the modification in the transaction is completed across multiple log streams, the transaction can be committed by using the two-phase atomic commit protocol of OceanBase Database. Log streams are participants of distributed transactions.
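The choice between the two commit paths can be sketched as follows. This is a simplified model and not the actual OceanBase implementation: the function name and the `tablet_to_ls` mapping (tablet ID to log stream ID) are assumptions made for illustration.

```python
from typing import Dict, Iterable


def choose_commit_protocol(modified_tablets: Iterable[int],
                           tablet_to_ls: Dict[int, int]) -> str:
    """Return which commit path a transaction would take in this simplified model."""
    # Each log stream touched by the transaction is a participant.
    participants = {tablet_to_ls[tablet] for tablet in modified_tablets}
    if not participants:
        raise ValueError("the transaction does not modify any tablet")
    # A single participant can commit in one phase; several need two-phase commit.
    return "one-phase" if len(participants) == 1 else "two-phase"
```

For instance, if tablets 1 and 2 belong to log stream 1001 and tablet 3 belongs to log stream 1002, a transaction that modifies tablets 1 and 2 has one participant, while a transaction that modifies tablets 1 and 3 has two.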

Deployment modes

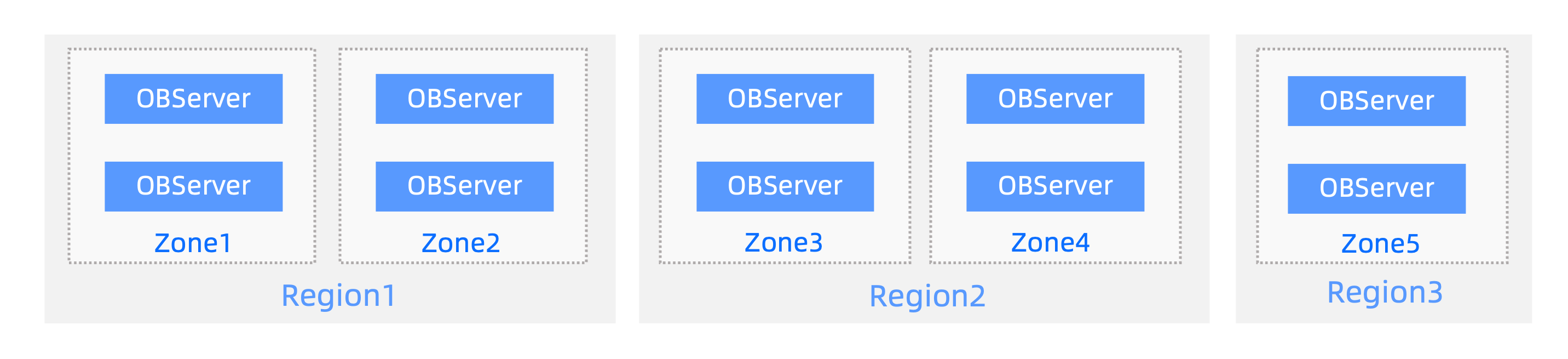

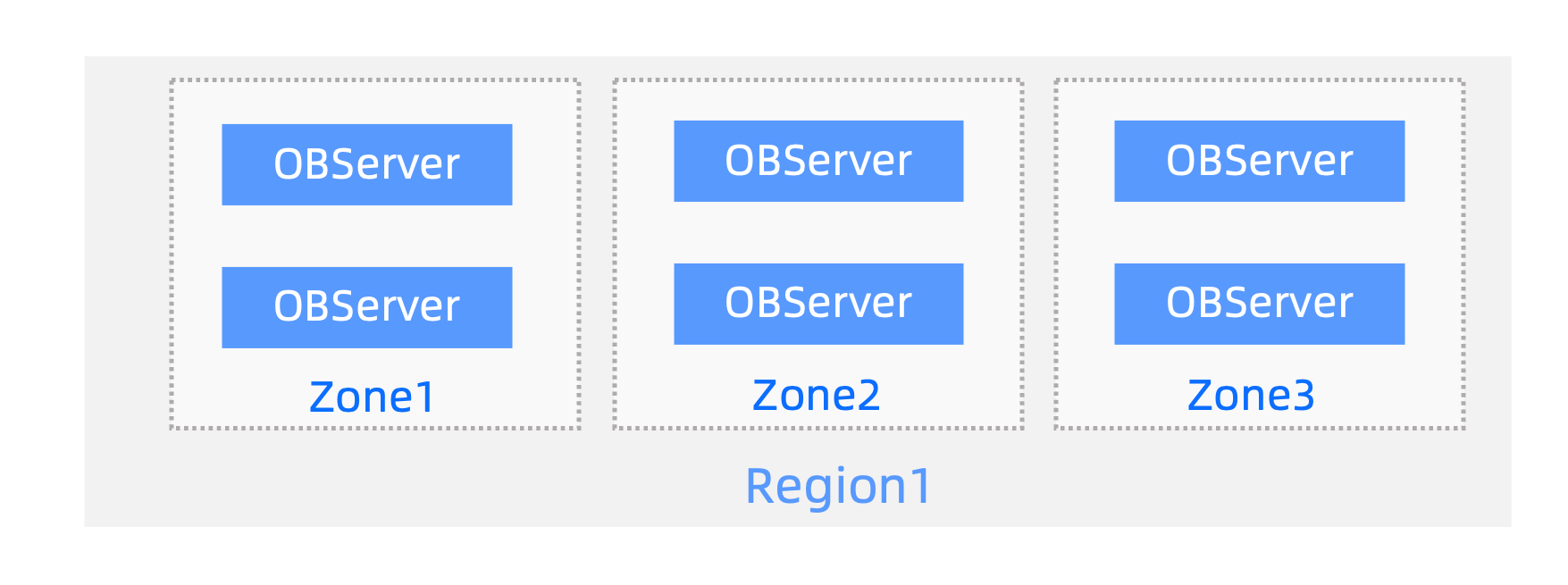

To make the majority of replicas of a partition available when a server fails, OceanBase Database ensures that multiple replicas of a partition are scheduled on different servers. Replicas of a partition are distributed across zones or regions. This ensures both data reliability and database service availability in case of city-wide disasters or IDC failures, achieving a balance between reliability and availability. OceanBase Database provides innovative disaster recovery capabilities in several deployment modes. For example, you can deploy your cluster in five IDCs across three regions to implement lossless disaster recovery for city-wide disasters or in three IDCs in the same region to implement lossless disaster recovery for IDC failures.

Five IDCs across three regions

Three IDCs in the same region
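The majority requirement behind these two deployment modes can be checked with a short sketch. The replica layout is an assumption: one Paxos replica per IDC (zone), with the five IDCs split 2 + 2 + 1 across the three regions. The zone and region names are hypothetical.

```python
from typing import Dict, Set


def has_majority(replicas_per_zone: Dict[str, int], failed_zones: Set[str]) -> bool:
    """Return True if the surviving replicas still form a strict majority."""
    total = sum(replicas_per_zone.values())
    alive = sum(n for zone, n in replicas_per_zone.items() if zone not in failed_zones)
    return alive > total // 2


# Assumed layout: five IDCs across three regions, one replica per IDC.
five_idcs = {"z1": 1, "z2": 1, "z3": 1, "z4": 1, "z5": 1}
regions = {"r1": {"z1", "z2"}, "r2": {"z3", "z4"}, "r3": {"z5"}}

# Assumed layout: three IDCs in the same region, one replica per IDC.
three_idcs = {"z1": 1, "z2": 1, "z3": 1}

# Losing any single region of the five-IDC layout keeps at least 3 of 5 replicas.
survives_any_region_loss = all(has_majority(five_idcs, zones) for zones in regions.values())

# Losing one IDC of the three-IDC layout keeps 2 of 3 replicas.
survives_one_idc_loss = has_majority(three_idcs, {"z2"})
```

Under these assumptions, the three-IDC layout tolerates the loss of one IDC but not of the whole region, which is why a cluster must span regions to tolerate a city-wide failure.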

The lossless disaster recovery capability of OceanBase Database also facilitates O&M operations on an OceanBase cluster. If you want to replace or repair an IDC or server, you can bring the corresponding IDC or server offline, replace or repair it, and then add a new one as needed. OceanBase Database automatically replicates and balances log stream replicas to avoid impacting the database service.

RootService

An OceanBase cluster provides RootService, which runs on an OBServer node. When the OBServer node on which RootService runs fails, another OBServer node is elected for running RootService. RootService provides resource management, disaster recovery, load balancing, and schema management features.

Resource management

RootService manages metadata of regions, zones, OBServer nodes, resource pools, and resource units. For example, RootService starts or stops OBServer nodes and changes the resource specifications of a tenant.

Load balancing

RootService determines the distribution of units on servers.

Disaster recovery

RootService uses methods such as automatic replication and migration to ensure that the distribution and types of log stream replicas are consistent with those in the specified locality.

Schema management

RootService responds to Data Definition Language (DDL) requests and generates new schemas.

Locality

The distribution and types of log stream replicas in a tenant in different zones are called locality. When you create a tenant, you can specify its locality to determine the initial types and distribution of log stream replicas in the tenant. After a tenant is created, you can change the locality of the tenant to change the types and distribution of replicas.

The following statement creates a tenant named mysql_tenant. Log stream replicas in the tenant in the zones z1, z2, and z3 are full-featured replicas.

obclient> CREATE TENANT mysql_tenant RESOURCE_POOL_LIST =('resource_pool_1'), primary_zone = "z1;z2;z3", locality ="F@z1, F@z2, F@z3" set ob_tcp_invited_nodes='%';

The following statement changes the locality of the mysql_tenant tenant so that its log stream replicas in z1 and z2 are full-featured replicas and those in z3 are log replicas. OceanBase Database compares the new locality with the original locality to determine whether to create, delete, or convert the replicas in the corresponding zones.

obclient> ALTER TENANT mysql_tenant set locality = "F@z1, F@z2, L@z3";
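The comparison between the original and the new locality can be sketched as a simple diff. This is an illustrative model only, not the logic that RootService actually runs. It handles only the simple `TYPE@zone` form used in the statements above, and the function names are hypothetical.

```python
from typing import Dict, List, Tuple


def parse_locality(locality: str) -> Dict[str, str]:
    """Map each zone to its replica type, e.g. 'F@z1, L@z3' -> {'z1': 'F', 'z3': 'L'}."""
    result = {}
    for item in locality.split(","):
        replica_type, zone = item.strip().split("@")
        result[zone] = replica_type
    return result


def plan_replica_changes(old: str, new: str) -> List[Tuple[str, str, str]]:
    """List the create, delete, and convert actions needed to move from old to new."""
    old_map, new_map = parse_locality(old), parse_locality(new)
    actions = []
    for zone in sorted(old_map.keys() | new_map.keys()):
        before, after = old_map.get(zone), new_map.get(zone)
        if before is None:
            actions.append(("create", zone, after))
        elif after is None:
            actions.append(("delete", zone, before))
        elif before != after:
            actions.append(("convert", zone, f"{before}->{after}"))
    return actions
```

With the two localities from the statements above, the only planned action in this model is a conversion of the replicas in z3 from F to L.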

Each log stream has only one replica on an OBServer node.

Each log stream can have at most one Paxos replica and multiple non-Paxos replicas in each zone. You can specify non-Paxos replicas in the locality, as shown in the following example:

locality = "F{1}@z1, R{2}@z1": indicates that one full-featured replica and two read-only replicas are distributed in z1.

locality = "F{1}@z1, R{ALL_SERVER}@z1": indicates that one full-featured replica is distributed in z1 and read-only replicas are created on other servers in z1. The read-only replicas are optional.
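The rule of at most one Paxos replica per zone can be checked with a small parser for the locality forms shown above. This is a sketch, not the OceanBase parser: the regular expression and function names are hypothetical. It assumes that full-featured (F) and log (L) replicas are Paxos replicas, that read-only (R) replicas are non-Paxos replicas, and that an omitted count means one replica.

```python
import re
from collections import defaultdict
from typing import Dict, List, Tuple

# TYPE, optional {count} or {ALL_SERVER}, then @zone, e.g. "R{2}@z1".
ITEM = re.compile(r"^([A-Z])(?:\{(\d+|ALL_SERVER)\})?@(\w+)$")
PAXOS_TYPES = {"F", "L"}  # assumption: F and L replicas take part in Paxos


def parse_locality_items(locality: str) -> List[Tuple[str, str, str]]:
    """Return (replica_type, count, zone) tuples; the count defaults to '1'."""
    items = []
    for raw in locality.split(","):
        match = ITEM.match(raw.strip())
        if match is None:
            raise ValueError(f"unrecognized locality item: {raw.strip()!r}")
        replica_type, count, zone = match.groups()
        items.append((replica_type, count or "1", zone))
    return items


def paxos_replicas_per_zone(locality: str) -> Dict[str, int]:
    """Count the Paxos replicas that the locality requests in each zone."""
    counts = defaultdict(int)
    for replica_type, count, zone in parse_locality_items(locality):
        if replica_type in PAXOS_TYPES:
            if count == "ALL_SERVER":
                raise ValueError("ALL_SERVER is only modeled for non-Paxos replicas")
            counts[zone] += int(count)
    return dict(counts)


def satisfies_paxos_rule(locality: str) -> bool:
    """Check that no zone is asked to hold more than one Paxos replica."""
    return all(n <= 1 for n in paxos_replicas_per_zone(locality).values())
```

Both locality examples above pass this check, whereas a value such as "F{2}@z1" would not.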

RootService creates, deletes, migrates, or converts replicas to make the distribution and types of replicas meet the specified locality.

Primary zone

You can set tenant-level parameters to distribute the leaders of log streams in a tenant across specified zones. In this case, the zones in which the leaders are located are called the primary zone.

The primary zone is a collection of zones. Zones separated by semicolons (;) have different priorities. Zones separated by commas (,) have the same priority. RootService preferentially schedules leaders to zones with higher priorities based on the specified primary zone and distributes leaders on different servers in zones with the same priority. If no primary zone is specified, all zones in a tenant are considered to have the same priority. RootService distributes the leaders of log streams in the tenant on servers in all zones.

You can set tenant-level parameters to specify or change the primary zone of a tenant.

Here are some examples:

Specify a primary zone when you create a tenant with the following zone priorities specified: z1=z2>z3.

obclient> CREATE TENANT mysql_tenant RESOURCE_POOL_LIST =('resource_pool_1'), primary_zone = "z1,z2;z3", locality ="F@z1, F@z2, F@z3" set ob_tcp_invited_nodes='%';

Change the primary zone of a tenant with the following zone priorities specified: z1>z2>z3.

obclient> ALTER TENANT mysql_tenant set primary_zone ="z1;z2;z3";

Change the primary zone of a tenant with the following zone priorities specified: z1=z2=z3.

obclient> ALTER TENANT mysql_tenant set primary_zone =RANDOM;
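The semicolon and comma rules for the primary zone can be sketched as a small parser that turns a primary zone value into priority tiers. The function names are hypothetical, and the handling of RANDOM (all zones of the tenant in a single tier) is a simplification for illustration.

```python
from typing import List


def parse_primary_zone(primary_zone: str, all_zones: List[str]) -> List[List[str]]:
    """Split a primary zone value into tiers, highest priority first."""
    value = primary_zone.strip()
    if value.upper() == "RANDOM":
        # Every zone of the tenant has the same priority.
        return [list(all_zones)]
    tiers = []
    for tier in value.split(";"):  # semicolons separate priority levels
        zones = [z.strip() for z in tier.split(",") if z.strip()]  # commas: same priority
        if zones:
            tiers.append(zones)
    return tiers


def describe_priorities(tiers: List[List[str]]) -> str:
    """Render tiers in the z1=z2>z3 notation used in the examples."""
    return ">".join("=".join(tier) for tier in tiers)
```

For a tenant with zones z1, z2, and z3, the three primary zone values used above map to the notations z1=z2>z3, z1>z2>z3, and z1=z2=z3 respectively.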

Note

The primary zone serves only as a reference for leader selection. Whether a log stream replica in the corresponding zone can become a leader also depends on the replica type and log synchronization progress.