This topic describes the common business scenarios in which OBDUMPER is used and provides the corresponding examples.

The following table describes the database information that is used in the examples.

| Database information item | Example value |
|---|---|
| Cluster name | cluster_a |
| IP address of the OceanBase Database Proxy (ODP) host | 10.0.0.0 |
| ODP port number | 2883 |
| Tenant name of the cluster | mysql |
| Name of the root/proxyro user under the sys tenant | **u*** |
| Password of the root/proxyro user under the sys tenant | ****** |
| User account (with read/write privileges) under the business tenant | test |
| Password of the user under the business tenant | ****** |
| Schema name | USERA |
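The example values in the table map to the OBDUMPER options used in the scenarios below: -h, -P, -u, -p, -c, -t, and -D, plus --sys-user and --sys-password for OceanBase Database versions earlier than V4.0.0.0. The following is a minimal bash sketch, assuming you want to reuse these options across scenarios. The arrays OBDUMPER_CONN and OBDUMPER_SYS and the function print_obdumper_cmd are hypothetical helpers, not part of OBDUMPER. The function prints the command line instead of running it.

```shell
#!/usr/bin/env bash
# Hypothetical helper built from the example table.
# Mapping: -h = ODP host IP, -P = ODP port, -u/-p = business tenant user and password,
# -c = cluster name, -t = tenant name, -D = schema name.
# The masked values are placeholders. They are quoted so that the shell does not
# expand the asterisks as glob patterns.
OBDUMPER_CONN=(
  -h 10.0.0.0 -P 2883
  -u test -p '******'
  -c cluster_a -t mysql
  -D USERA
)

# sys tenant credentials, needed only for versions earlier than V4.0.0.0.
OBDUMPER_SYS=(--sys-user '**u***' --sys-password '******')

# Print (rather than run) an obdumper command line: shared connection options
# followed by the scenario-specific options passed as arguments.
print_obdumper_cmd() {
  printf '%q ' ./obdumper "${OBDUMPER_CONN[@]}" "$@"
  printf '\n'
}

# Example: the DDL export scenario.
print_obdumper_cmd "${OBDUMPER_SYS[@]}" --ddl --all -f /output
```

Printing with %q shows exactly how each argument would reach obdumper. When the output looks right, replace print_obdumper_cmd with a direct call.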

Export DDL definition files

Scenario description: Export the definition statements of all supported database objects in the USERA schema to the /output directory. For OceanBase Database versions earlier than V4.0.0.0, the password of the sys tenant is required.

Sample code:

[admin@localhost]> ./obdumper -h 10.0.0.0 -P 2883 -u test -p ****** --sys-user **u*** --sys-password ****** -c cluster_a -t mysql -D USERA --ddl --all -f /output

Note

The --sys-user option specifies the username of a user with the required privileges under the sys tenant. If --sys-user is not specified during the export, root is used by default.

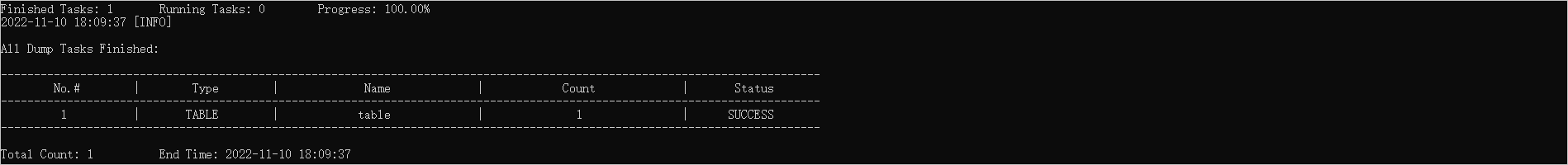

Task result example:

Exported content example:

Export CSV data files

Scenario description: Export data in all tables in the USERA schema to the /output directory in the CSV format. For OceanBase Database versions earlier than V4.0.0.0, the password of the sys tenant is required. For more information about the CSV data file specifications, see RFC 4180. CSV data files (.csv files) store data as plain text and can be opened with a text editor or Excel.

Sample code:

[admin@localhost]> ./obdumper -h 10.0.0.0 -P 2883 -u test -p ****** --sys-user **u*** --sys-password ****** -c cluster_a -t mysql -D USERA --csv --table '*' -f /output
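RFC 4180 says that a field containing a comma, a double quote, or a line break should be enclosed in double quotes, and that a double quote inside such a field is escaped by doubling it. The bash function below is a minimal sketch of those two rules for illustration. csv_quote is a hypothetical helper, not OBDUMPER code, and OBDUMPER's actual output may differ depending on its own options.

```shell
#!/usr/bin/env bash
# Hypothetical helper illustrating the RFC 4180 quoting rules; not part of OBDUMPER.
csv_quote() {
  local field=$1 q='"'
  # A field that contains a comma, a double quote, CR, or LF is enclosed in double quotes.
  if [[ $field == *[,\"]* || $field == *$'\n'* || $field == *$'\r'* ]]; then
    # Escape each embedded double quote by doubling it.
    field=${field//"$q"/$q$q}
    printf '%s%s%s' "$q" "$field" "$q"
  else
    printf '%s' "$field"
  fi
}

# Build one CSV record from three hypothetical sample values.
printf '%s,%s,%s\n' "$(csv_quote 10)" "$(csv_quote 'ACCOUNTING, HQ')" "$(csv_quote 'say "hi"')"
# prints: 10,"ACCOUNTING, HQ","say ""hi"""
```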

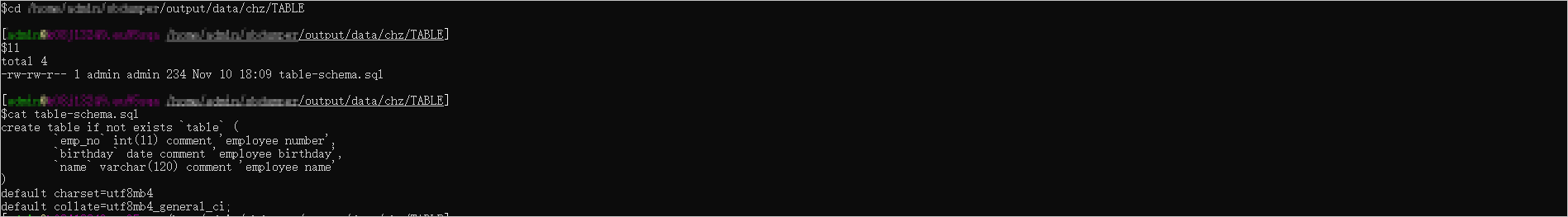

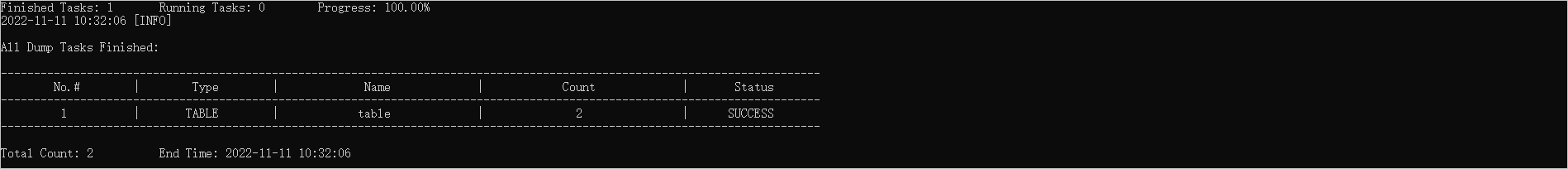

Task result example:

Exported content example:

Export SQL data files

Scenario description: Export data in all tables in the USERA schema to the /output directory in the SQL format. For OceanBase Database versions earlier than V4.0.0.0, the password of the sys tenant is required. SQL data files (.sql files) store data as INSERT statements and can be opened with a text editor or an SQL editor.

Sample code:

[admin@localhost]> ./obdumper -h 10.0.0.0 -P 2883 -u test -p ****** --sys-user **u*** --sys-password ****** -c cluster_a -t mysql -D USERA --sql --table '*' -f /output
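A SQL data file is plain text that contains INSERT statements, so standard text tools can give you a quick spot check after the export. The following is a minimal bash sketch, assuming that each INSERT statement starts on its own line. count_inserts is a hypothetical helper. It counts statements, not rows, because one INSERT statement can contain several rows.

```shell
#!/usr/bin/env bash
# Hypothetical spot check: for each .sql file given as an argument, count the
# lines that begin an INSERT statement (case-insensitive).
count_inserts() {
  local f n
  for f in "$@"; do
    # grep -c prints 0 and exits with status 1 when nothing matches; ignore that status.
    n=$(grep -ci '^[[:space:]]*insert[[:space:]]' "$f" || true)
    printf '%s\t%s\n' "$f" "$n"
  done
}

# Usage (bash 4.4 or later), collecting every .sql file under /output:
#   mapfile -d '' files < <(find /output -name '*.sql' -print0)
#   count_inserts "${files[@]}"
```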

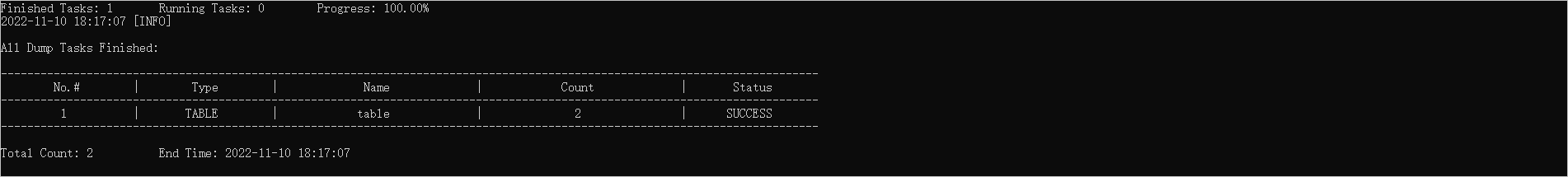

Task result example:

Exported content example:

Export CUT data files

Scenario description: Export data in all tables in the USERA schema to the /output directory in the CUT format, and specify |@| as the column separator string for the exported data. For OceanBase Database versions earlier than V4.0.0.0, the password of the sys tenant is required. CUT data files (.dat files) use a character or a character string to separate values and can be opened with a text editor.

Sample code:

./obdumper -h 10.0.0.0 -P 2883 -u test -p ****** --sys-user **u*** --sys-password ****** -c cluster_a -t mysql -D USERA --table '*' -f /output --cut --column-splitter '|@|' --trail-delimiter
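Any tool that accepts a multi-character separator can split a CUT file back into fields. The following is a minimal awk sketch, assuming the file was exported with --column-splitter '|@|' and that --trail-delimiter leaves one extra separator at the end of every row. Check the OBDUMPER option reference for the exact behavior in your version. The function print_cut_fields and the sample row are hypothetical.

```shell
#!/usr/bin/env bash
# Illustrative reader for a CUT file that uses '|@|' as the column separator.
# [|] matches a literal '|', so the field separator regex matches the string '|@|'.
print_cut_fields() {
  awk -F '[|]@[|]' '{
    # Drop one trailing separator (assumed to come from --trail-delimiter).
    # Modifying $0 makes awk split the record into fields again.
    sub(/[|]@[|]$/, "")
    printf "%d fields:", NF
    for (i = 1; i <= NF; i++) printf " [%s]", $i
    printf "\n"
  }' "$@"
}

# Hypothetical sample row, read from standard input.
printf '10|@|ACCOUNTING|@|NEW YORK|@|\n' | print_cut_fields
# prints: 3 fields: [10] [ACCOUNTING] [NEW YORK]
```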

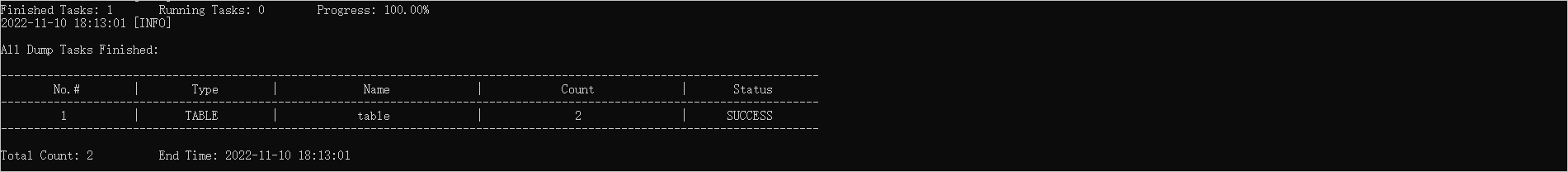

Task result example:

Exported content example:

Customize the name of an exported file

Scenario description: Export data in the table named table in the USERA schema to the /output directory in the CSV format, and set the name of the exported file to filetest.txt. For OceanBase Database versions earlier than V4.0.0.0, the password of the sys tenant is required.

Sample code:

./obdumper -h 10.0.0.0 -P 2883 -u test -p ****** --sys-user **u*** --sys-password ****** -c cluster_a -t mysql -D USERA --csv --table 'table' --file-name 'filetest.txt' -f /output

Task result example:

Exported content example:

Use a control file to export data

Scenario description: Export data in the table named table in the USERA schema to the /output directory in the CSV format, and specify /output as the path of the control file used to preprocess the exported data. For OceanBase Database versions earlier than V4.0.0.0, the password of the sys tenant is required.

Sample code:

./obdumper -h 10.0.0.0 -P 2883 -u test -p ****** --sys-user **u*** --sys-password ****** -c cluster_a -t mysql -D USERA --table 'table' -f /output --csv --ctl-path /output

Note

The control file name must match the table name defined in the database exactly, including letter case. Otherwise, OBDUMPER cannot recognize the control file. For more information about the rules for defining control files, see Preprocessing functions.
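The control file name must match the table name exactly, including letter case, so a quick check before the export can catch a mismatch. The following is a minimal bash sketch, assuming that control files are named <table name>.ctl and stored in the directory passed to --ctl-path. check_ctl_name is a hypothetical helper, not an OBDUMPER command.

```shell
#!/usr/bin/env bash
# Hypothetical pre-export check: does <dir>/<table>.ctl exist with the exact letter case?
check_ctl_name() {
  local dir=$1 table=$2 f base
  if [[ -f "$dir/$table.ctl" ]]; then
    echo "ok: $dir/$table.ctl"
    return 0
  fi
  # Look for a control file whose name differs from the table name only in letter case.
  for f in "$dir"/*.ctl; do
    [[ -e $f ]] || continue            # the glob matched no files
    base=$(basename "$f" .ctl)
    if [[ ${base,,} == "${table,,}" ]]; then
      echo "case mismatch: $f does not match table $table"
      return 1
    fi
  done
  echo "missing: no control file for table $table in $dir"
  return 1
}

# Usage: check_ctl_name /output table
```

This check assumes a case-sensitive file system, such as the usual Linux file systems.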

Export data from specified table columns

Scenario description: Export data in the table named table in the USERA schema to the /output directory in the CSV format, and use the --exclude-column-names option to exclude the columns that do not need to be exported (the deptno column in this example). For OceanBase Database versions earlier than V4.0.0.0, the password of the sys tenant is required.

Sample code:

./obdumper -h 10.0.0.0 -P 2883 -u test -p ****** --sys-user **u*** --sys-password ****** -c cluster_a -t mysql -D USERA -f /output --table 'table' --csv --exclude-column-names 'deptno'

Export the result set of a custom query

Scenario description: Export the result set of the query statement specified by the --query-sql option to the /output directory in the CSV format. For OceanBase Database versions earlier than V4.0.0.0, the password of the sys tenant is required.

Sample code:

./obdumper -h 10.0.0.0 -P 2883 -u test -p ****** --sys-user **u*** --sys-password ****** -c cluster_a -t mysql -D USERA -f /output --csv --query-sql 'select deptno,dname from dept where deptno<3000'

Note

Make sure that the SQL query statement is syntactically correct and meets your query performance requirements.
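The sample command wraps the query in single quotes, so a query that contains its own single-quoted string literals needs different shell quoting. The following is a minimal bash sketch that keeps each option in an array element, so the whole query reaches obdumper as one argument. The query's 'SALES' filter and the variable names are hypothetical, and the command is printed rather than run.

```shell
#!/usr/bin/env bash
# The query contains single quotes, so store it in a double-quoted variable.
query="select deptno,dname from dept where dname = 'SALES'"

# Each array element becomes exactly one argument; the query stays intact.
args=(
  -h 10.0.0.0 -P 2883 -u test -p '******' -c cluster_a -t mysql
  -D USERA -f /output --csv --query-sql "$query"
)

# Print the resulting command line with shell-safe quoting instead of running it.
printf '%q ' ./obdumper "${args[@]}"
printf '\n'
```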

Export database objects and data from a public cloud

Scenario description: When the sys tenant password cannot be provided, export all database object definitions and table data in the USERA schema from a public cloud environment to the /output directory.

Sample code:

[admin@localhost]> ./obdumper -h 10.0.0.0 -P 2883 -u test -p ****** -D USERA --ddl --csv --public-cloud --all -f /output

Export database objects and data from a private cloud

Scenario description: When the sys tenant password cannot be provided, export all database object definitions and table data in the USERA schema from a private cloud environment to the /output directory.

Sample code:

[admin@localhost]> ./obdumper -h 10.0.0.0 -P 2883 -u test -p ****** -c cluster_a -t mysql -D USERA --ddl --csv --no-sys --all -f /output

Notice

When the sys tenant password cannot be provided in a public cloud or private cloud environment, some database object definitions may not be exported. For example, sequence definitions cannot be exported from MySQL tenants; table group definitions cannot be exported from OceanBase Database versions earlier than V2.2.70; index definitions cannot be exported from Oracle tenants in OceanBase Database V2.2.30 and earlier; partition information of unique indexes cannot be exported from OceanBase Database versions earlier than V2.2.70; and unique index definitions of partitioned tables cannot be exported from Oracle tenants in OceanBase Database V2.2.70 to V4.0.0.0.
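The public cloud and private cloud commands handle the missing sys tenant password differently. The public cloud example passes --public-cloud and omits -c and -t. The private cloud example passes --no-sys together with -c and -t. The following is a minimal bash sketch of that choice. no_sys_options is a hypothetical helper that prints the extra options for each environment, and the assembled command is printed rather than run.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print, one per line, the options that the two examples
# above use when the sys tenant password cannot be provided.
no_sys_options() {
  case $1 in
    public)  printf '%s\n' --public-cloud ;;
    private) printf '%s\n' -c cluster_a -t mysql --no-sys ;;
    *)       echo "usage: no_sys_options public|private" >&2; return 2 ;;
  esac
}

# Collect the options for a private cloud (mapfile requires bash 4 or later).
mapfile -t extra < <(no_sys_options private)
printf '%q ' ./obdumper -h 10.0.0.0 -P 2883 -u test -p '******' "${extra[@]}" \
  -D USERA --ddl --csv --all -f /output
printf '\n'
```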

Set session-level variables

# This variable sets the query timeout for initializing the session.
# The default value is 5 minutes.
ob.query.timeout.for.init.session=5

# This variable sets the transaction timeout for initializing the session.
# The default value is 5 minutes.
ob.trx.timeout.for.init.session=5

# This variable sets the query timeout for metadata queries, such as queries for:
# the database;
# the row key, primary key, and macro range;
# the unique key;
# the load status.
# The default value is 5 minutes.
ob.query.timeout.for.query.metadata=5

# This variable sets the query timeout for dumping records in the CSV, CUT, and SQL formats.
# The default value is 24 hours.
ob.query.timeout.for.dump.record=24

# This variable sets the query timeout for dumping the result set of --query-sql.
# The default value is 5 hours.
ob.query.timeout.for.dump.custom=5

# This variable sets the query timeout for executing DDL statements, such as statements that load the schema or truncate a table.
# The default value is 1 minute.
ob.query.timeout.for.exec.ddl=1

# This variable sets the query timeout for executing DML statements, such as statements that delete data from a table.
# The default value is 1 hour.
ob.query.timeout.for.exec.dml=1

# This variable sets the transaction timeout for dumping records in the CSV, CUT, and SQL formats.
# The default value is 24 hours.
ob.trx.timeout.for.dump.record=24

# This variable sets the transaction timeout for dumping the result set of --query-sql.
# The default value is 5 hours.
ob.trx.timeout.for.dump.custom=5

# This variable sets the network read timeout for dumping records in the CSV, CUT, and SQL formats.
# The default value is 24 hours.
ob.net.read.timeout.for.dump.record=24

# This variable sets the network read timeout for dumping the result set of --query-sql.
# The default value is 5 hours.
ob.net.read.timeout.for.dump.custom=5

# This variable sets the network write timeout for dumping records in the CSV, CUT, and SQL formats.
# The default value is 24 hours.
ob.net.write.timeout.for.dump.record=24

# This variable sets the network write timeout for dumping the result set of --query-sql.
# The default value is 5 hours.
ob.net.write.timeout.for.dump.custom=5

# This variable sets the session variable ob_proxy_route_policy.
# The default value is follower_first.
ob.proxy.route.policy=follower_first

# This variable sets the JDBC URL option useSSL.
# The default value is false.
jdbc.url.use.ssl=false

# This variable sets the JDBC URL option useUnicode.
# The default value is true.
jdbc.url.use.unicode=true

# This variable sets the JDBC URL option socketTimeout.
# The default value is 30 minutes.
jdbc.url.socket.timeout=30

# This variable sets the JDBC URL option connectTimeout.
# The default value is 3 minutes.
jdbc.url.connect.timeout=3

# This variable sets the JDBC URL option characterEncoding.
# The default value is utf8.
jdbc.url.character.encoding=utf8

# This variable sets the JDBC URL option useCompression.
# The default value is true.
jdbc.url.use.compression=true

# This variable sets the JDBC URL option cachePrepStmts.
# The default value is true.
jdbc.url.cache.prep.stmts=true

# This variable sets the JDBC URL option noDatetimeStringSync.
# The default value is true.
jdbc.url.no.datetime.string.sync=true

# This variable sets the JDBC URL option useServerPrepStmts.
# The default value is true.
jdbc.url.use.server.prep.stmts=true

# This variable sets the JDBC URL option allowMultiQueries.
# The default value is true.
jdbc.url.allow.multi.queries=true

# This variable sets the JDBC URL option rewriteBatchedStatements.
# The default value is true.
jdbc.url.rewrite.batched.statements=true

# This variable sets the JDBC URL option useLocalSessionState.
# The default value is true.
jdbc.url.use.local.session.state=true

# This variable sets the JDBC URL option zeroDateTimeBehavior.
# The default value is convertToNull.
jdbc.url.zero.datetime.behavior=convertToNull

# This variable sets the JDBC URL option verifyServerCertificate.
# The default value is false.
jdbc.url.verify.server.certificate=false

# This variable sets the JDBC URL option usePipelineAuth.
# The default value is false.
jdbc.url.use.pipeline.auth=false

# This variable sets the JDBC URL option socksProxyHost.
# The default value is null.
jdbc.url.socks.proxy.host=null

# This variable sets the JDBC URL option socksProxyPort.
# The default value is null.
jdbc.url.socks.proxy.port=null
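The session settings use a key=value format with # comment lines. If an export needs a longer timeout, such as a larger ob.query.timeout.for.dump.custom value for a slow --query-sql export, you can change the value with standard tools. The following is a minimal bash sketch, assuming the settings are stored in a plain key=value file. The file path you pass and the helpers prop_get and prop_set are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helpers for a key=value settings file with '#' comment lines.

# Print the value of key $2 in file $1 (first match only).
prop_get() {
  awk -F= -v k="$2" '$1 == k { sub(/^[^=]*=/, ""); print; exit }' "$1"
}

# Set key $2 to value $3 in file $1, appending the key if it is absent.
# The file is rewritten in place with cat so that its permissions are kept.
prop_set() {
  local file=$1 key=$2 value=$3 tmp status
  tmp=$(mktemp) || return 1
  awk -F= -v k="$key" -v v="$value" '
    $1 == k { print k "=" v; found = 1; next }
    { print }
    END { if (!found) print k "=" v }
  ' "$file" > "$tmp" && cat "$tmp" > "$file"
  status=$?
  rm -f "$tmp"
  return "$status"
}

# Usage: raise the query-sql dump timeout from 5 to 10 hours.
#   prop_set /path/to/settings-file ob.query.timeout.for.dump.custom 10
```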