OceanBase Database is suitable for hybrid transaction/analytical processing (HTAP) scenarios. OceanBase Database adopts a distributed architecture based on peer nodes. This architecture allows OceanBase Database to handle high-concurrency and scalable online transaction processing (OLTP) tasks and perform parallel computing for online analytical processing (OLAP) tasks based on the massively parallel processing (MPP) architecture in the same data engine, without maintaining two sets of data.

OceanBase Database not only allows you to analyze a large amount of online business data in parallel but also allows you to perform parallel DML (PDML) operations to quickly and securely execute large transactions that concurrently write data in batches. All these are achieved without compromising transaction consistency.

The following describes how to manually run the Transaction Processing Performance Council Benchmark H (TPC-H) benchmark test to show the characteristics of OceanBase Database in operational OLAP scenarios. TPC-H is a commonly used benchmark that measures the analysis and decision support capabilities of database systems by using a series of complex queries on massive amounts of data. For more information, visit the official website of TPC. On May 20, 2021, OceanBase Database set a new world record in running the TPC-H benchmark with a result of 15.26 million QphH. It is by far the only database that achieved top results in running both the TPC-C and TPC-H benchmarks, which testifies its HTAP capabilities in both online transactions and real-time analysis. For more information, see TPC-H Results.

Manually run the TPC-H benchmark test

Use the official TPC-H tools from TPC to manually run the TPC-H benchmark test step by step. Manual testing can help you better understand OceanBase Database, especially the settings of some parameters.

Optimize the test environment

Perform the following steps in the sys tenant:

Optimize OceanBase Database parameters.

Run the following command in the

systenant:obclient -h$host_ip -P$host_port -uroot@sys -A.# Adjust the memory usage of the sys tenant to provide more resources for the test tenant. You need to adjust the memory usage based on the actual environment. ALTER SYSTEM SET system_memory='15g'; ALTER RESOURCE UNIT sys_unit_config memory_size ='15G'; # Optimize the parameters. ALTER SYSTEM SET trace_log_slow_query_watermark='100s'; ALTER SYSTEM SET enable_sql_operator_dump=True; ALTER SYSTEM SET _hash_area_size='3g'; ALTER SYSTEM SET memstore_limit_percentage=50; ALTER SYSTEM SET enable_rebalance=False; ALTER SYSTEM SET memory_chunk_cache_size='0'; ALTER SYSTEM SET major_compact_trigger=5; ALTER SYSTEM SET cache_wash_threshold='30g'; # Adjust the log level and the maximum number of log files that can be saved. ALTER SYSTEM SET syslog_level='PERF'; ALTER SYSTEM SET max_syslog_file_count=100; ALTER SYSTEM SET enable_syslog_recycle='True';Set tenant parameters.

Run the following command in the test user:

obclient -h$host_ip -P$host_port -u$user@$tenant -p****** -A.# Set global parameters. SET GLOBAL ob_sql_work_area_percentage=80; SET GLOBAL optimizer_use_sql_plan_baselines = true; SET GLOBAL optimizer_capture_sql_plan_baselines = true; ALTER SYSTEM SET ob_enable_batched_multi_statement='true'; # Set relevant tenant parameters to prevent transaction timeout. SET GLOBAL ob_query_timeout=36000000000; SET GLOBAL ob_trx_timeout=36000000000; SET GLOBAL max_allowed_packet=67108864; SET GLOBAL secure_file_priv=""; /* We recommend that you set 'parallel_server_target' to the result of the following formula: Number of CPU cores of the resource units allocated to the test tenant × 10 × Number of OBServer nodes × 0.8. */ SET GLOBAL parallel_servers_target=624;Restart the cluster.

Install the TPC-H tools

Download the TPC-H tools from the TPC-H Tools Download page.

Unzip the package and go to the TPC-H directory.

[wieck@localhost ~] $ unzip 7e965ead-8844-4efa-a275-34e35f8ab89b-tpc-h-tool.zip [wieck@localhost ~] $ cd TPC-H_Tools_v3.0.0Copy the

Makefile.suitefile.[wieck@localhost TPC-H_Tools_v3.0.0] $ cd dbgen/ [wieck@localhost dbgen] $ cp Makefile.suite MakefileRedefine the

CC,DATABASE,MACHINE, andWORKLOADparameters in theMakefile.suitefile.CC = gcc # Current values for DATABASE are: INFORMIX, DB2, TDAT (Teradata) # SQLSERVER, SYBASE, ORACLE, VECTORWISE # Current values for MACHINE are: ATT, DOS, HP, IBM, ICL, MVS, # SGI, SUN, U2200, VMS, LINUX, WIN32 # Current values for WORKLOAD are: TPCH DATABASE= MYSQL MACHINE = LINUX WORKLOAD = TPCHModify the

tpcd.hfile and add new macro definitions.#ifdef MYSQL #define GEN_QUERY_PLAN "" #define START_TRAN "START TRANSACTION" #define END_TRAN "COMMIT" #define SET_OUTPUT "" #define SET_ROWCOUNT "limit %d;\n" #define SET_DBASE "use %s;\n" #endifCompile the files.

make

Generate data

Generate 10 GB, 100 GB, or 1 TB of data as needed. The following example shows the command to generate 100 GB of data.

./dbgen -s 100

mkdir tpch100

mv *.tbl tpch100

Generate SQL query statements

Note

You can perform the following steps to generate an SQL query statement and then adjust it, or you can use the SQL query statement provided in GitHub.

However, before you use the SQL query statement provided in GitHub, change the value of the cpu_num parameter in the statement to the actual number of concurrent threads.

Copy the

qgenanddists.dssfiles to thequeriesdirectory.cp qgen queries cp dists.dss queriesIn the

queriesdirectory, create agen.shscript to generate SQL query statements.#!/usr/bin/bash for i in {1..22} do ./qgen -d $i -s 100 > db"$i".sql doneRun the

gen.shscript.chmod +x gen.sh ./gen.shModify the SQL query statements.

dos2unix *

For the modified SQL query statements, see GitHub. You need to change the value of the cpu_num parameter in the SQL statements to the actual number of concurrent threads. To ensure the best performance, we recommend that you set the value to the total number of available CPU cores.

Run the following command under the sys tenant to view the total number of available CPU cores for a tenant:

select sum(max_cpu) from DBA_OB_UNITS ;

Take q1 as an example. The modified SQL statement is as follows:

select /*+ parallel(96) */ --- Specify the execution concurrency.

l_returnflag,

l_linestatus,

sum(l_quantity) as sum_qty,

sum(l_extendedprice) as sum_base_price,

sum(l_extendedprice * (1 - l_discount)) as sum_disc_price,

sum(l_extendedprice * (1 - l_discount) * (1 + l_tax)) as sum_charge,

avg(l_quantity) as avg_qty,

avg(l_extendedprice) as avg_price,

avg(l_discount) as avg_disc,

count(*) as count_order

from

lineitem

where

l_shipdate <= date '1998-12-01' - interval '90' day

group by

l_returnflag,

l_linestatus

order by

l_returnflag,

l_linestatus;

Create a table

Create a schema file named create_tpch_mysql_table_part.ddl.

create tablegroup if not exists tpch_tg_100g_lineitem_order_group binding true partition by key 1 partitions 192;

create tablegroup if not exists tpch_tg_100g_partsupp_part binding true partition by key 1 partitions 192;

drop database if exists $db_name;

create database $db_name;

use $db_name;

create table lineitem (

l_orderkey bigint not null,

l_partkey bigint not null,

l_suppkey bigint not null,

l_linenumber bigint not null,

l_quantity bigint not null,

l_extendedprice bigint not null,

l_discount bigint not null,

l_tax bigint not null,

l_returnflag char(1) default null,

l_linestatus char(1) default null,

l_shipdate date not null,

l_commitdate date default null,

l_receiptdate date default null,

l_shipinstruct char(25) default null,

l_shipmode char(10) default null,

l_comment varchar(44) default null,

primary key(l_orderkey, l_linenumber))

tablegroup = tpch_tg_100g_lineitem_order_group

partition by key (l_orderkey) partitions 192;

create index I_L_ORDERKEY on lineitem(l_orderkey) local;

create index I_L_SHIPDATE on lineitem(l_shipdate) local;

create table orders (

o_orderkey bigint not null,

o_custkey bigint not null,

o_orderstatus char(1) default null,

o_totalprice bigint default null,

o_orderdate date not null,

o_orderpriority char(15) default null,

o_clerk char(15) default null,

o_shippriority bigint default null,

o_comment varchar(79) default null,

primary key (o_orderkey))

tablegroup = tpch_tg_100g_lineitem_order_group

partition by key(o_orderkey) partitions 192;

create index I_O_ORDERDATE on orders(o_orderdate) local;

create table partsupp (

ps_partkey bigint not null,

ps_suppkey bigint not null,

ps_availqty bigint default null,

ps_supplycost bigint default null,

ps_comment varchar(199) default null,

primary key (ps_partkey, ps_suppkey))

tablegroup tpch_tg_100g_partsupp_part

partition by key(ps_partkey) partitions 192;

create table part (

p_partkey bigint not null,

p_name varchar(55) default null,

p_mfgr char(25) default null,

p_brand char(10) default null,

p_type varchar(25) default null,

p_size bigint default null,

p_container char(10) default null,

p_retailprice bigint default null,

p_comment varchar(23) default null,

primary key (p_partkey))

tablegroup tpch_tg_100g_partsupp_part

partition by key(p_partkey) partitions 192;

create table customer (

c_custkey bigint not null,

c_name varchar(25) default null,

c_address varchar(40) default null,

c_nationkey bigint default null,

c_phone char(15) default null,

c_acctbal bigint default null,

c_mktsegment char(10) default null,

c_comment varchar(117) default null,

primary key (c_custkey))

partition by key(c_custkey) partitions 192;

create table supplier (

s_suppkey bigint not null,

s_name char(25) default null,

s_address varchar(40) default null,

s_nationkey bigint default null,

s_phone char(15) default null,

s_acctbal bigint default null,

s_comment varchar(101) default null,

primary key (s_suppkey)

) partition by key(s_suppkey) partitions 192;

create table nation (

n_nationkey bigint not null,

n_name char(25) default null,

n_regionkey bigint default null,

n_comment varchar(152) default null,

primary key (n_nationkey));

create table region (

r_regionkey bigint not null,

r_name char(25) default null,

r_comment varchar(152) default null,

primary key (r_regionkey));

Load data

Write your own script based on the data and SQL query statements generated from the preceding steps. The following is an example of how to load data.

Create a directory for loading scripts.

mkdir load cd load cp ../dss.ri ../dss.ddl ./Create the

load.pyscript.$cat load.py #/usr/bin/evn python #-*- encoding:utf-8 -*- import os import sys import time import commands hostname='$host_ip' # Attention! Please fill in the IP address of an OBServer node, such as OBServer A. port='$host_port' # Port number of OBServer A tenant='$tenant_name' # Tenant name user='$user' # Username password='$password' # Password data_path='$path' # Attention! Please fill in the directory where tbl is located on OBServer A. db_name='$db_name' # Database name # Create a table cmd_str='obclient -h%s -P%s -u%s@%s -p%s -D%s < create_tpch_mysql_table_part.ddl'%(hostname,port,user,tenant,password,db_name) result = commands.getstatusoutput(cmd_str) print result cmd_str='obclient -h%s -P%s -u%s@%s -p%s -D%s -e "show tables;" '%(hostname,port,user,tenant,password,db_name) result = commands.getstatusoutput(cmd_str) print result cmd_str=""" obclient -h%s -P%s -u%s@%s -p%s -c -D%s -e "load data /*+ parallel(80) */ infile '%s/customer.tbl' into table customer fields terminated by '|';" """ %(hostname,port,user,tenant,password,db_name,data_path) result = commands.getstatusoutput(cmd_str) print result cmd_str=""" obclient -h%s -P%s -u%s@%s -p%s -c -D%s -e "load data /*+ parallel(80) */ infile '%s/lineitem.tbl' into table lineitem fields terminated by '|';" """ %(hostname,port,user,tenant,password,db_name,data_path) result = commands.getstatusoutput(cmd_str) print result cmd_str=""" obclient -h%s -P%s -u%s@%s -p%s -c -D%s -e "load data /*+ parallel(80) */ infile '%s/nation.tbl' into table nation fields terminated by '|';" """ %(hostname,port,user,tenant,password,db_name,data_path) result = commands.getstatusoutput(cmd_str) print result cmd_str=""" obclient -h%s -P%s -u%s@%s -p%s -c -D%s -e "load data /*+ parallel(80) */ infile '%s/orders.tbl' into table orders fields terminated by '|';" """ %(hostname,port,user,tenant,password,db_name,data_path) result = commands.getstatusoutput(cmd_str) print result cmd_str=""" obclient -h%s -P%s -u%s@%s -p%s -D%s -e "load data /*+ parallel(80) */ infile '%s/partsupp.tbl' into table partsupp fields terminated by '|';" """ %(hostname,port,user,tenant,password,db_name,data_path) result = commands.getstatusoutput(cmd_str) print result cmd_str=""" obclient -h%s -P%s -u%s@%s -p%s -c -D%s -e "load data /*+ parallel(80) */ infile '%s/part.tbl' into table part fields terminated by '|';" """ %(hostname,port,user,tenant,password,db_name,data_path) result = commands.getstatusoutput(cmd_str) print result cmd_str=""" obclient -h%s -P%s -u%s@%s -p%s -c -D%s -e "load data /*+ parallel(80) */ infile '%s/region.tbl' into table region fields terminated by '|';" """ %(hostname,port,user,tenant,password,db_name,data_path) result = commands.getstatusoutput(cmd_str) print result cmd_str=""" obclient -h%s -P%s -u%s@%s -p%s -c -D%s -e "load data /*+ parallel(80) */ infile '%s/supplier.tbl' into table supplier fields terminated by '|';" """ %(hostname,port,user,tenant,password,db_name,data_path) result = commands.getstatusoutput(cmd_str) print resultLoad the data.

Notice

To load data, you need to install the OBClient.

$ python load.py (0,'') (0, 'obclient: [Warning] Using a password on the command line interface can be insecure.\nTABLE_NAME\nT1\nLINEITEM\nORDERS\nPARTSUPP\nPART\nCUSTOMER\nSUPPLIER\nNATION\nREGION') (0, 'obclient: [Warning] Using a password on the command line interface can be insecure.') (0, 'obclient: [Warning] Using a password on the command line interface can be insecure.') (0, 'obclient: [Warning] Using a password on the command line interface can be insecure.') (0, 'obclient: [Warning] Using a password on the command line interface can be insecure.') (0, 'obclient: [Warning] Using a password on the command line interface can be insecure.') (0, 'obclient: [Warning] Using a password on the command line interface can be insecure.') (0, 'obclient: [Warning] Using a password on the command line interface can be insecure.')Perform a major compaction.

A major compaction combines the current major version's SSTables and MemTables with the full static data of the previous major version and generates a new set of full data. This makes the statistics of the storage layer more accurate and improves the stability of execution plans.

Note

To perform a major compaction, you must log on to the

systenant of the OceanBase cluster as therootuser.MySQL [(none)]> USE oceanbase Database changed MySQL [oceanbase]> ALTER SYSTEM MAJOR FREEZE; Query OK, 0 rows affectedCheck whether the major compaction is complete.

MySQL [oceanbase]> SELECT name,value FROM oceanbase.__all_zone WHERE name='frozen_version' OR name='last_merged_version'; +---------------------+-------+ | name | value | +---------------------+-------+ | frozen_version | 2 | | last_merged_version | 2 | | last_merged_version | 2 | | last_merged_version | 2 | | last_merged_version | 2 | +---------------------+-------+Note

The major compaction is considered complete when the value of

frozen_versionis equal to that oflast_merged_versionfor all zones.

Run the test

Write your own script based on the data and SQL query statements generated from the preceding steps. The following is an example of how to run the test.

Write the

tpch.shscript in thequeriesdirectory.#!/bin/bash TPCH_TEST="obclient -h $host_ip -P $host_port -utpch_100g_part@tpch_mysql -D tpch_100g_part -ptest -c" # Run a warmup. for i in {1..22} do sql1="source db${i}.sql" echo $sql1| $TPCH_TEST >db${i}.log || ret=1 done # Run the formal test. for i in {1..22} do starttime=`date +%s%N` echo `date '+[%Y-%m-%d %H:%M:%S]'` "BEGIN Q${i}" sql1="source db${i}.sql" echo $sql1| $TPCH_TEST >db${i}.log || ret=1 stoptime=`date +%s%N` costtime=`echo $stoptime $starttime | awk '{printf "%0.2f\n", ($1 - $2) / 1000000000}'` echo `date '+[%Y-%m-%d %H:%M:%S]'` "END,COST ${costtime}s" doneRun the test script.

sh tpch.sh

FAQ

Q: What do I do when an error occurred while importing data? Error message:

ERROR 1017 (HY000) at line 1: File not existA: The

tblfile must be placed in a directory on the server that hosts OceanBase Database, because only local data can be imported and loaded.Q: What do I do when an error occurred while viewing data? Error message:

ERROR 4624 (HY000): No memory or reach tenant memory limitA: You are running out of memory. Allocate more memory to the tenant.

Q: What do I do when an error occurred while importing data. Error message:

ERROR 1227 (42501) at line 1: Access deniedA: Grant the access privilege to the logon user by running the following command:

grant file on *.* to tpch_100g_part;Q: What do I do when an error occurred while adjusting

dos2unix *for the SQL query? Error message:-bash: dos2unix: command not foundA: Install dos2unix by running the following command:

yum install -y dos2unix

Try out operational OLAP

Through the previous operation, you have obtained a TPC-H test environment. Now, try out the operational OLAP capability of OceanBase Database. First, log on to your database through the OBClient or a MySQL client.

obclient -h127.0.0.1 -P2881 -uroot@test -Dtest -A -p -c

Before you start, set the degree of parallelism (DOP) based on the configurations of the OceanBase cluster and the tenant. We recommend that you set the DOP to be no more than twice the number of CPU cores of your tenant. For example, if your tenant has a maximum of 8 CPU cores, set the DOP to 16:

MySQL [test]> SET GLOBAL parallel_servers_target=16;

Query OK, 0 rows affected

MySQL [test]> SET GLOBAL parallel_max_servers=16;

Query OK, 0 rows affected

OceanBase Database is compatible with most internal views of MySQL databases. Run the following command to query the sizes of tables in the current environment:

MySQL [test]> SELECT table_name, table_rows, CONCAT(ROUND(data_length/(1024*1024*1024),2),' GB') table_size FROM information_schema.TABLES WHERE table_schema = 'test' order by table_rows desc;

+------------+------------+------------+

| table_name | table_rows | table_size |

+------------+------------+------------+

| lineitem | 6001215 | 0.37 GB |

| orders | 1500000 | 0.08 GB |

| partsupp | 800000 | 0.04 GB |

| part | 200000 | 0.01 GB |

| customer | 150000 | 0.01 GB |

| supplier | 10000 | 0.00 GB |

| nation | 25 | 0.00 GB |

| region | 5 | 0.00 GB |

+------------+------------+------------+

8 rows in set

Run the Q1 query to verify the query capability of OceanBase Database. Q1 query will summarize and analyze the prices, discounts, shipments, and quantities of various products within a specified period of time on the largest table named lineitem. This query will read all the data in the entire table and perform partitioning, sorting, and aggregation.

Execute the query without enabling parallel queries (default)

Run the following command:

select

l_returnflag,

l_linestatus,

sum(l_quantity) as sum_qty,

sum(l_extendedprice) as sum_base_price,

sum(l_extendedprice * (1 - l_discount)) as sum_disc_price,

sum(l_extendedprice * (1 - l_discount) * (1 + l_tax)) as sum_charge,

avg(l_quantity) as avg_qty,

avg(l_extendedprice) as avg_price,

avg(l_discount) as avg_disc,

count(*) as count_order

from

lineitem

where

l_shipdate <= date '1998-12-01' - interval '90' day

group by

l_returnflag,

l_linestatus

order by

l_returnflag,

l_linestatus;

The execution result is as follows:

+--------------+--------------+----------+----------------+----------------+--------------+---------+------------+----------+-------------+

| l_returnflag | l_linestatus | sum_qty | sum_base_price | sum_disc_price | sum_charge | avg_qty | avg_price | avg_disc | count_order |

+--------------+--------------+----------+----------------+----------------+--------------+---------+------------+----------+-------------+

| A | F | 37734107 | 56586577106 | 56586577106 | 56586577106 | 25.5220 | 38273.1451 | 0.0000 | 1478493 |

| N | F | 991417 | 1487505208 | 1487505208 | 1487505208 | 25.5165 | 38284.4806 | 0.0000 | 38854 |

| N | O | 74476040 | 111701776272 | 111701776272 | 111701776272 | 25.5022 | 38249.1339 | 0.0000 | 2920374 |

| R | F | 37719753 | 56568064200 | 56568064200 | 56568064200 | 25.5058 | 38250.8701 | 0.0000 | 1478870 |

+--------------+--------------+----------+----------------+----------------+--------------+---------+------------+----------+-------------+

4 rows in set (6.791 sec)

Execute the query while enabling parallel queries

The operational OLAP capability of OceanBase Database is based on a set of data and the execution engine, without the need to synchronize or maintain heterogeneous data. Add a parallel hint to the query statement to set the DOP to 8 and execute the statement again:

select /*+parallel(8) */

l_returnflag,

l_linestatus,

sum(l_quantity) as sum_qty,

sum(l_extendedprice) as sum_base_price,

sum(l_extendedprice * (1 - l_discount)) as sum_disc_price,

sum(l_extendedprice * (1 - l_discount) * (1 + l_tax)) as sum_charge,

avg(l_quantity) as avg_qty,

avg(l_extendedprice) as avg_price,

avg(l_discount) as avg_disc,

count(*) as count_order

from

lineitem

where

l_shipdate <= date '1998-12-01' - interval '90' day

group by

l_returnflag,

l_linestatus

order by

l_returnflag,

l_linestatus;

In the same environment and datasets, the execution result is as follows:

+--------------+--------------+----------+----------------+----------------+--------------+---------+------------+----------+-------------+

| l_returnflag | l_linestatus | sum_qty | sum_base_price | sum_disc_price | sum_charge | avg_qty | avg_price | avg_disc | count_order |

+--------------+--------------+----------+----------------+----------------+--------------+---------+------------+----------+-------------+

| A | F | 37734107 | 56586577106 | 56586577106 | 56586577106 | 25.5220 | 38273.1451 | 0.0000 | 1478493 |

| N | F | 991417 | 1487505208 | 1487505208 | 1487505208 | 25.5165 | 38284.4806 | 0.0000 | 38854 |

| N | O | 74476040 | 111701776272 | 111701776272 | 111701776272 | 25.5022 | 38249.1339 | 0.0000 | 2920374 |

| R | F | 37719753 | 56568064200 | 56568064200 | 56568064200 | 25.5058 | 38250.8701 | 0.0000 | 1478870 |

+--------------+--------------+----------+----------------+----------------+--------------+---------+------------+----------+-------------+

4 rows in set (1.197 sec)

As you can see, the query speed is increased by nearly 6 times with parallel queries. Run the EXPLAIN command to view the execution plan, which contains the DOP (line 18, operator 1, dop=8):

===============================================================

|ID|OPERATOR |NAME |EST. ROWS|COST |

---------------------------------------------------------------

|0 |PX COORDINATOR MERGE SORT | |6 |13507125|

|1 | EXCHANGE OUT DISTR |:EX10001|6 |13507124|

|2 | SORT | |6 |13507124|

|3 | HASH GROUP BY | |6 |13507107|

|4 | EXCHANGE IN DISTR | |6 |8379337 |

|5 | EXCHANGE OUT DISTR (HASH)|:EX10000|6 |8379335 |

|6 | HASH GROUP BY | |6 |8379335 |

|7 | PX BLOCK ITERATOR | |5939712 |3251565 |

|8 | TABLE SCAN |lineitem|5939712 |3251565 |

===============================================================

Outputs & filters:

-------------------------------------

0 - output([lineitem.l_returnflag], [lineitem.l_linestatus], [T_FUN_SUM(T_FUN_SUM(lineitem.l_quantity))], [T_FUN_SUM(T_FUN_SUM(lineitem.l_extendedprice))], [T_FUN_SUM(T_FUN_SUM(lineitem.l_extendedprice * 1 - lineitem.l_discount))], [T_FUN_SUM(T_FUN_SUM(lineitem.l_extendedprice * 1 - lineitem.l_discount * 1 + lineitem.l_tax))], [T_FUN_SUM(T_FUN_SUM(lineitem.l_quantity)) / cast(T_FUN_COUNT_SUM(T_FUN_COUNT(lineitem.l_quantity)), DECIMAL(20, 0))], [T_FUN_SUM(T_FUN_SUM(lineitem.l_extendedprice)) / cast(T_FUN_COUNT_SUM(T_FUN_COUNT(lineitem.l_extendedprice)), DECIMAL(20, 0))], [T_FUN_SUM(T_FUN_SUM(lineitem.l_discount)) / cast(T_FUN_COUNT_SUM(T_FUN_COUNT(lineitem.l_discount)), DECIMAL(20, 0))], [T_FUN_COUNT_SUM(T_FUN_COUNT(*))]), filter(nil), sort_keys([lineitem.l_returnflag, ASC], [lineitem.l_linestatus, ASC])

1 - output([lineitem.l_returnflag], [lineitem.l_linestatus], [T_FUN_SUM(T_FUN_SUM(lineitem.l_quantity))], [T_FUN_SUM(T_FUN_SUM(lineitem.l_extendedprice))], [T_FUN_SUM(T_FUN_SUM(lineitem.l_extendedprice * 1 - lineitem.l_discount))], [T_FUN_SUM(T_FUN_SUM(lineitem.l_extendedprice * 1 - lineitem.l_discount * 1 + lineitem.l_tax))], [T_FUN_COUNT_SUM(T_FUN_COUNT(*))], [T_FUN_SUM(T_FUN_SUM(lineitem.l_quantity)) / cast(T_FUN_COUNT_SUM(T_FUN_COUNT(lineitem.l_quantity)), DECIMAL(20, 0))], [T_FUN_SUM(T_FUN_SUM(lineitem.l_extendedprice)) / cast(T_FUN_COUNT_SUM(T_FUN_COUNT(lineitem.l_extendedprice)), DECIMAL(20, 0))], [T_FUN_SUM(T_FUN_SUM(lineitem.l_discount)) / cast(T_FUN_COUNT_SUM(T_FUN_COUNT(lineitem.l_discount)), DECIMAL(20, 0))]), filter(nil), dop=8

2 - output([lineitem.l_returnflag], [lineitem.l_linestatus], [T_FUN_SUM(T_FUN_SUM(lineitem.l_quantity))], [T_FUN_SUM(T_FUN_SUM(lineitem.l_extendedprice))], [T_FUN_SUM(T_FUN_SUM(lineitem.l_extendedprice * 1 - lineitem.l_discount))], [T_FUN_SUM(T_FUN_SUM(lineitem.l_extendedprice * 1 - lineitem.l_discount * 1 + lineitem.l_tax))], [T_FUN_COUNT_SUM(T_FUN_COUNT(*))], [T_FUN_SUM(T_FUN_SUM(lineitem.l_quantity)) / cast(T_FUN_COUNT_SUM(T_FUN_COUNT(lineitem.l_quantity)), DECIMAL(20, 0))], [T_FUN_SUM(T_FUN_SUM(lineitem.l_extendedprice)) / cast(T_FUN_COUNT_SUM(T_FUN_COUNT(lineitem.l_extendedprice)), DECIMAL(20, 0))], [T_FUN_SUM(T_FUN_SUM(lineitem.l_discount)) / cast(T_FUN_COUNT_SUM(T_FUN_COUNT(lineitem.l_discount)), DECIMAL(20, 0))]), filter(nil), sort_keys([lineitem.l_returnflag, ASC], [lineitem.l_linestatus, ASC])

3 - output([lineitem.l_returnflag], [lineitem.l_linestatus], [T_FUN_SUM(T_FUN_SUM(lineitem.l_quantity))], [T_FUN_SUM(T_FUN_SUM(lineitem.l_extendedprice))], [T_FUN_SUM(T_FUN_SUM(lineitem.l_extendedprice * 1 - lineitem.l_discount))], [T_FUN_SUM(T_FUN_SUM(lineitem.l_extendedprice * 1 - lineitem.l_discount * 1 + lineitem.l_tax))], [T_FUN_COUNT_SUM(T_FUN_COUNT(lineitem.l_quantity))], [T_FUN_COUNT_SUM(T_FUN_COUNT(lineitem.l_extendedprice))], [T_FUN_SUM(T_FUN_SUM(lineitem.l_discount))], [T_FUN_COUNT_SUM(T_FUN_COUNT(lineitem.l_discount))], [T_FUN_COUNT_SUM(T_FUN_COUNT(*))]), filter(nil),

group([lineitem.l_returnflag], [lineitem.l_linestatus]), agg_func([T_FUN_SUM(T_FUN_SUM(lineitem.l_quantity))], [T_FUN_SUM(T_FUN_SUM(lineitem.l_extendedprice))], [T_FUN_SUM(T_FUN_SUM(lineitem.l_extendedprice * 1 - lineitem.l_discount))], [T_FUN_SUM(T_FUN_SUM(lineitem.l_extendedprice * 1 - lineitem.l_discount * 1 + lineitem.l_tax))], [T_FUN_COUNT_SUM(T_FUN_COUNT(*))], [T_FUN_COUNT_SUM(T_FUN_COUNT(lineitem.l_quantity))], [T_FUN_COUNT_SUM(T_FUN_COUNT(lineitem.l_extendedprice))], [T_FUN_SUM(T_FUN_SUM(lineitem.l_discount))], [T_FUN_COUNT_SUM(T_FUN_COUNT(lineitem.l_discount))])

4 - output([lineitem.l_returnflag], [lineitem.l_linestatus], [T_FUN_SUM(lineitem.l_quantity)], [T_FUN_SUM(lineitem.l_extendedprice)], [T_FUN_SUM(lineitem.l_extendedprice * 1 - lineitem.l_discount)], [T_FUN_SUM(lineitem.l_extendedprice * 1 - lineitem.l_discount * 1 + lineitem.l_tax)], [T_FUN_COUNT(lineitem.l_quantity)], [T_FUN_COUNT(lineitem.l_extendedprice)], [T_FUN_SUM(lineitem.l_discount)], [T_FUN_COUNT(lineitem.l_discount)], [T_FUN_COUNT(*)]), filter(nil)

5 - (#keys=2, [lineitem.l_returnflag], [lineitem.l_linestatus]), output([lineitem.l_returnflag], [lineitem.l_linestatus], [T_FUN_SUM(lineitem.l_quantity)], [T_FUN_SUM(lineitem.l_extendedprice)], [T_FUN_SUM(lineitem.l_extendedprice * 1 - lineitem.l_discount)], [T_FUN_SUM(lineitem.l_extendedprice * 1 - lineitem.l_discount * 1 + lineitem.l_tax)], [T_FUN_COUNT(lineitem.l_quantity)], [T_FUN_COUNT(lineitem.l_extendedprice)], [T_FUN_SUM(lineitem.l_discount)], [T_FUN_COUNT(lineitem.l_discount)], [T_FUN_COUNT(*)]), filter(nil), dop=8

6 - output([lineitem.l_returnflag], [lineitem.l_linestatus], [T_FUN_SUM(lineitem.l_quantity)], [T_FUN_SUM(lineitem.l_extendedprice)], [T_FUN_SUM(lineitem.l_extendedprice * 1 - lineitem.l_discount)], [T_FUN_SUM(lineitem.l_extendedprice * 1 - lineitem.l_discount * 1 + lineitem.l_tax)], [T_FUN_COUNT(lineitem.l_quantity)], [T_FUN_COUNT(lineitem.l_extendedprice)], [T_FUN_SUM(lineitem.l_discount)], [T_FUN_COUNT(lineitem.l_discount)], [T_FUN_COUNT(*)]), filter(nil),

group([lineitem.l_returnflag], [lineitem.l_linestatus]), agg_func([T_FUN_SUM(lineitem.l_quantity)], [T_FUN_SUM(lineitem.l_extendedprice)], [T_FUN_SUM(lineitem.l_extendedprice * 1 - lineitem.l_discount)], [T_FUN_SUM(lineitem.l_extendedprice * 1 - lineitem.l_discount * 1 + lineitem.l_tax)], [T_FUN_COUNT(*)], [T_FUN_COUNT(lineitem.l_quantity)], [T_FUN_COUNT(lineitem.l_extendedprice)], [T_FUN_SUM(lineitem.l_discount)], [T_FUN_COUNT(lineitem.l_discount)])

7 - output([lineitem.l_returnflag], [lineitem.l_linestatus], [lineitem.l_quantity], [lineitem.l_extendedprice], [lineitem.l_discount], [lineitem.l_extendedprice * 1 - lineitem.l_discount], [lineitem.l_extendedprice * 1 - lineitem.l_discount * 1 + lineitem.l_tax]), filter(nil)

8 - output([lineitem.l_returnflag], [lineitem.l_linestatus], [lineitem.l_quantity], [lineitem.l_extendedprice], [lineitem.l_discount], [lineitem.l_extendedprice * 1 - lineitem.l_discount], [lineitem.l_extendedprice * 1 - lineitem.l_discount * 1 + lineitem.l_tax]), filter([lineitem.l_shipdate <= ?]),

access([lineitem.l_shipdate], [lineitem.l_returnflag], [lineitem.l_linestatus], [lineitem.l_quantity], [lineitem.l_extendedprice], [lineitem.l_discount], [lineitem.l_tax]), partitions(p[0-15])

In this example, OceanBase Database is deployed on a single server. However, the most prominent feature of the parallel execution framework of OceanBase Database lies in that it can concurrently execute analytical queries on large amounts of data on multiple servers. For example, if a table has hundreds of millions of data rows across multiple OBServer nodes, the distributed execution framework generates a distributed parallel execution plan and uses the resources of these nodes for analytical queries. Therefore, OceanBase Database has high scalability. In addition, you can set the DOP in multiple dimensions, such as SQL statement, session, and table.

Use OBD to run the TPC-H benchmark test

Applicability

This section applies only to OceanBase Database Community Edition. OceanBase Database Enterprise Edition does not support OceanBase Deployer (OBD).

Apart from using the official TPC-H tools from TPC, you can also use OBD to run the TPC-H benchmark test. Before you start, install the obtpch component on the server where OceanBase Database and OBD are deployed:

sudo yum install obtpch

Then, run the following command to start the TPC-H benchmark test, with a dataset size of 1 GB. The entire process includes dataset generation, schema import, and automatic test execution. In this example, it is assumed that your test environment is deployed in the same way as that in Get started with OceanBase Database. You can modify the cluster name, password, installation directory, etc. to adapt to your situation.

Notice

Make sure that your disk space is sufficient to store the dataset files, so as to avoid filling up the space and causing system errors.

In this example, the /tmp directory is used to store dataset files.

cd /tmp

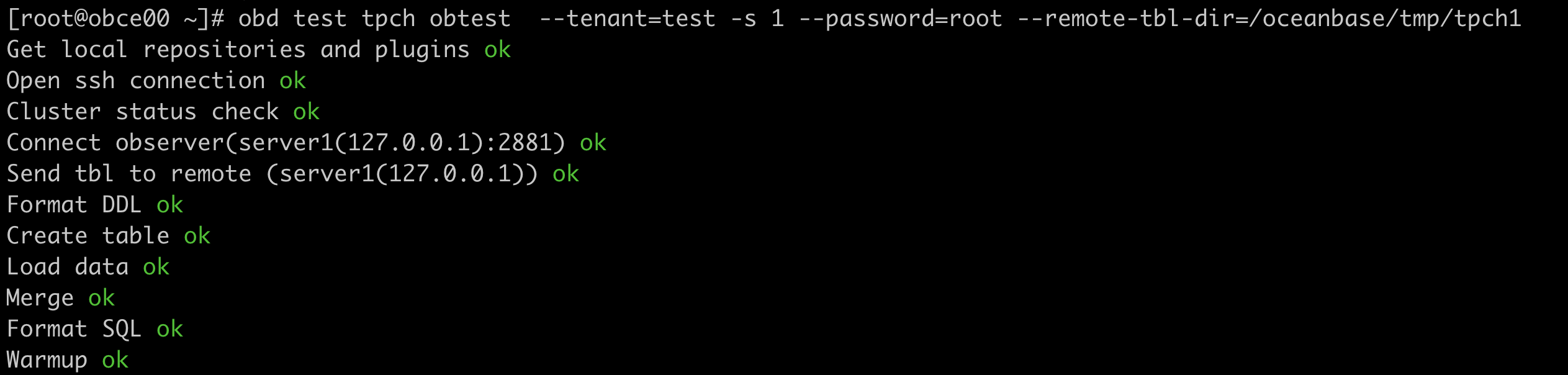

obd test tpch obtest --tenant=test -s 1 --password='******' --remote-tbl-dir=/tmp/tpch1

After the preceding command is executed, OBD starts to run the benchmark test, and you can see each step of the test process.

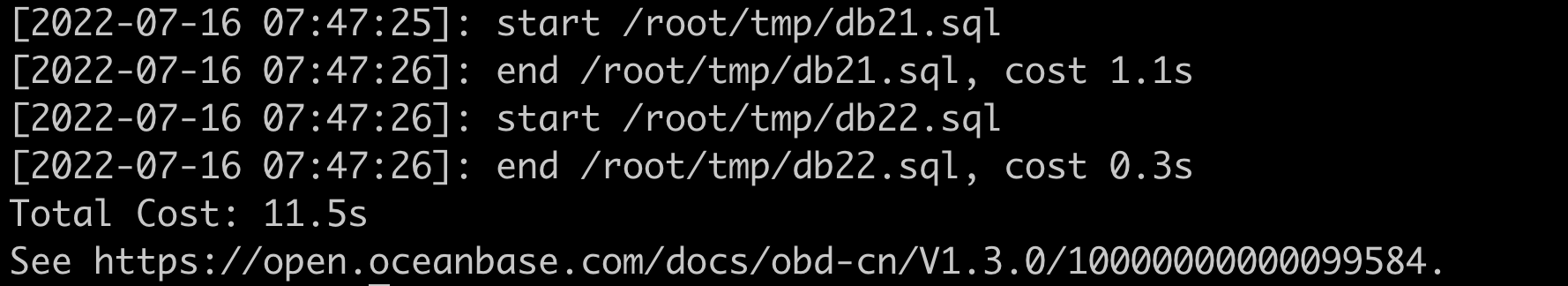

After the data import is completed, OBD automatically executes 22 SQL statements and prints the execution time for each SQL statement as well as the total execution time.