In OceanBase Database V3.x, replicas are distributed on multiple nodes at the partition granularity. The load balancing module can implement partition balancing within a tenant by performing a partition leader/follower switchover or migrating partition replicas. OceanBase Database V4.x adopts the standalone log stream (LS) architecture, where replicas are distributed at the LS granularity and each LS serves a large number of partitions. Therefore, the load balancing module in OceanBase Database V4.2.x cannot directly control the partition distribution. Instead, the module needs to balance partitions based on LS distribution after achieving LS quantity balancing and LS leader balancing.

Notice

Partition balancing applies only to user tables.

LS balancing and partition balancing are in the following priority order:

LS balancing > Partition balancing

LS balancing (homogeneous zone mode)

LS quantity balancing

When you modify the UNIT_NUM parameter, change the priority of zones in PRIMARY_ZONE, or modify the locality (which affects PRIMARY_ZONE) for a tenant, the background thread of the load balancing module will immediately change the LS quantity and location through LS splitting, merging, regrouping, or other actions to achieve the desired tenant status. That is, after the balancing is complete, each tenant unit has an LS group, the number of LSs in an LS group is equal to the number of top priority zones in PRIMARY_ZONE, and leaders in an LS group are evenly distributed to primary zones of the top priority.

Note

- An LS group is an attribute of an LS. LSs with the same LS group ID are gathered together.

- In the homogeneous zone mode of OceanBase Database V4.x, all zones of a tenant must have the same number of units. To facilitate unit management across zones, the system introduces the unit group mechanism. Units with the same group ID (UNIT_GROUP_ID) in different zones belong to the same unit group. All units in the same unit group have exactly the same data distribution and the same LS replicas, and serve the same partition data. Unit groups are used for data grouping in a resource container. Each group of data is served by one or more LSs. The read/write service capabilities can be extended to multiple units in a unit group.

- In the homogeneous zone mode, one LS group corresponds to one unit group. That is, all LSs with the same LS group ID are distributed in the same unit group.

In the homogeneous zone mode, the LS quantity is calculated by using the following formula:

LS quantity = UNIT_NUM * first_level_primary_zone_num

Note

The LS quantity here refers to the number of user log streams, excluding system log streams and broadcast log streams.
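The formula and the target state described above can be sketched in a few lines of Python. This is only an illustration: `target_ls_layout` and its arguments are hypothetical names, not an OceanBase interface, and the sketch assumes one LS group per unit group with one leader per top priority zone.

```python
def target_ls_layout(unit_num, top_priority_zones):
    """Return one (ls_group, leader_zone) pair per expected user LS.

    Assumption: each unit group hosts one LS group, and each LS group
    holds one LS per top priority zone in PRIMARY_ZONE.
    """
    return [
        (ls_group, zone)
        for ls_group in range(1, unit_num + 1)
        for zone in top_priority_zones
    ]


layout = target_ls_layout(unit_num=2, top_priority_zones=["z1", "z2"])
# LS quantity = UNIT_NUM * first_level_primary_zone_num = 2 * 2
print(len(layout))
for ls_group, leader_zone in layout:
    print(ls_group, leader_zone)
```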

The following table describes sample scenarios where LS quantity balancing is triggered.

| Sample scenario | Condition for triggering balancing | Balancing algorithm | LS balancing strategy |
|---|---|---|---|
| Top priority zones in PRIMARY_ZONE are changed from Z1,Z2 to Z1 and UNIT_NUM is changed from 1 to 2 | Some LS groups are short of LSs and some have redundant LSs, but the total number of LSs meets final status requirements. | Migrate redundant LSs to LS groups with insufficient LSs. | LS_BALANCE_BY_MIGRATE |
| Top priority zones in PRIMARY_ZONE are changed from Z1 to Z1,Z2 or UNIT_NUM is changed from 2 to 3 | Only some LS groups are short of LSs. | Assume that the current LS count is M and the LS count after scale-out is N (M < N). Each missing LS must receive M/N tablets. | LS_BALANCE_BY_EXPAND |
| Top priority zones in PRIMARY_ZONE are changed from Z1,Z2 to Z1 or UNIT_NUM is changed from 3 to 2 | Only some LS groups have redundant LSs. | Assume that the LS quantity is changed from M to N (M > N) after the scale-in. Each of the N remaining LSs is assigned with (M-N)/N of the tablets originally served by the reduced LSs. | LS_BALANCE_BY_SHRINK |
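The triggering conditions in the table can be read as a small decision rule. The following sketch is a simplified illustration, not the load balancing module's actual logic. The function name and the per-group counting model (current LS count per LS group compared with the target count per group) are assumptions made for the example.

```python
def choose_ls_balance_strategy(ls_per_group, target_per_group):
    """Map the conditions from the table above to a strategy name.

    ls_per_group: current number of LSs in each LS group (hypothetical input).
    target_per_group: number of LSs each group should have after balancing.
    """
    short = any(n < target_per_group for n in ls_per_group)
    redundant = any(n > target_per_group for n in ls_per_group)
    total_ok = sum(ls_per_group) == target_per_group * len(ls_per_group)

    if short and redundant and total_ok:
        return "LS_BALANCE_BY_MIGRATE"
    if short and not redundant:
        return "LS_BALANCE_BY_EXPAND"
    if redundant and not short:
        return "LS_BALANCE_BY_SHRINK"
    return None  # already balanced, or a mixed case the table does not cover


# PRIMARY_ZONE Z1,Z2 -> Z1 and UNIT_NUM 1 -> 2: one group has 2 LSs, the new group has 0.
print(choose_ls_balance_strategy([2, 0], target_per_group=1))
# UNIT_NUM 2 -> 3 with a single top priority zone: only the new group is short.
print(choose_ls_balance_strategy([1, 1, 0], target_per_group=1))
# PRIMARY_ZONE Z1,Z2 -> Z1 with UNIT_NUM = 1: the only group has one LS too many.
print(choose_ls_balance_strategy([2], target_per_group=1))
```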

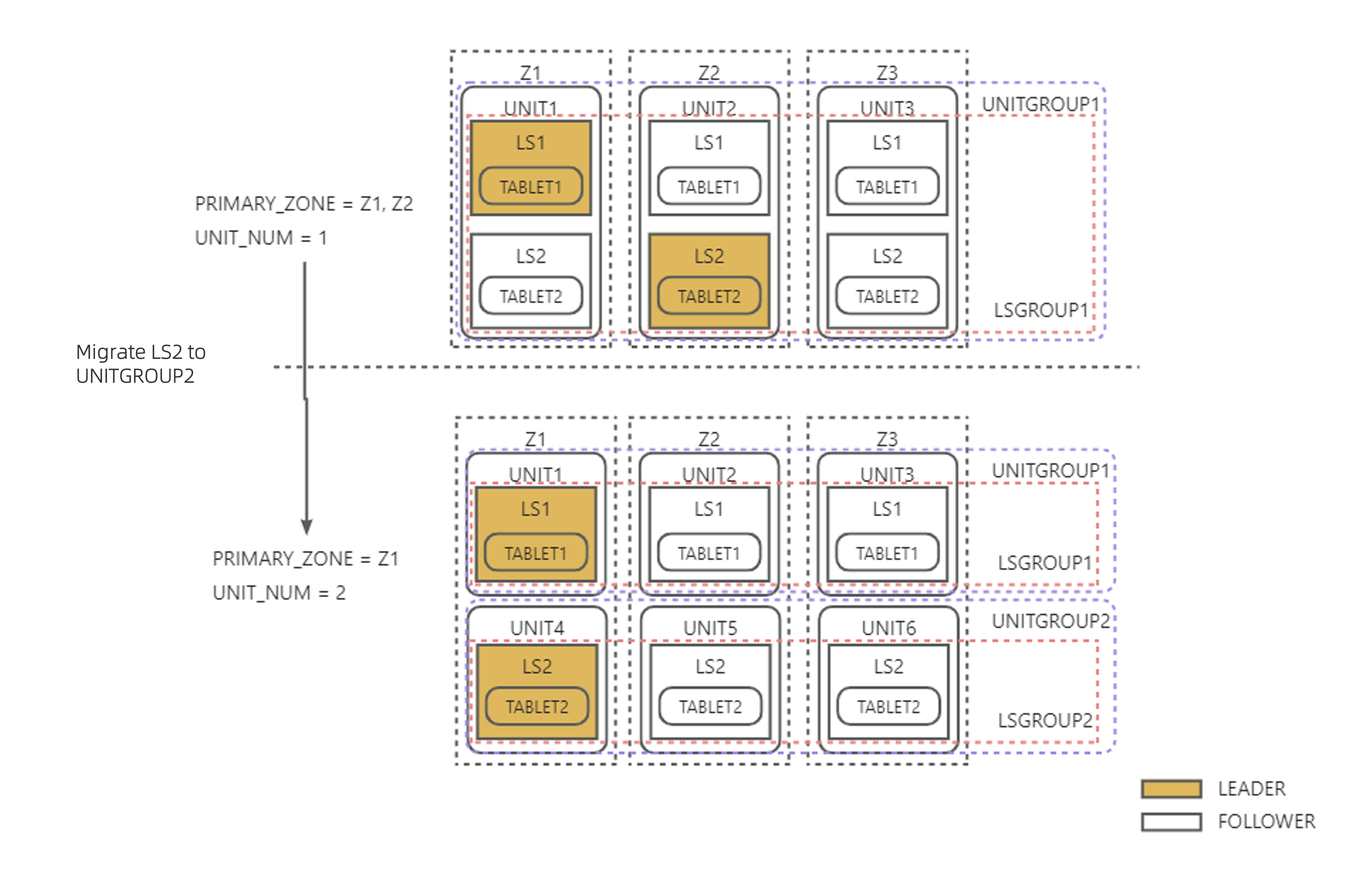

Example 1: As shown in the figure below, top priority zones in PRIMARY_ZONE are changed from Z1,Z2 to Z1 and UNIT_NUM is changed from 1 to 2. The total LS quantity does not change. LS2 is migrated directly to the new unit, and the leader of LS2 is switched to Z1 according to the PRIMARY_ZONE change, completing LS balancing.

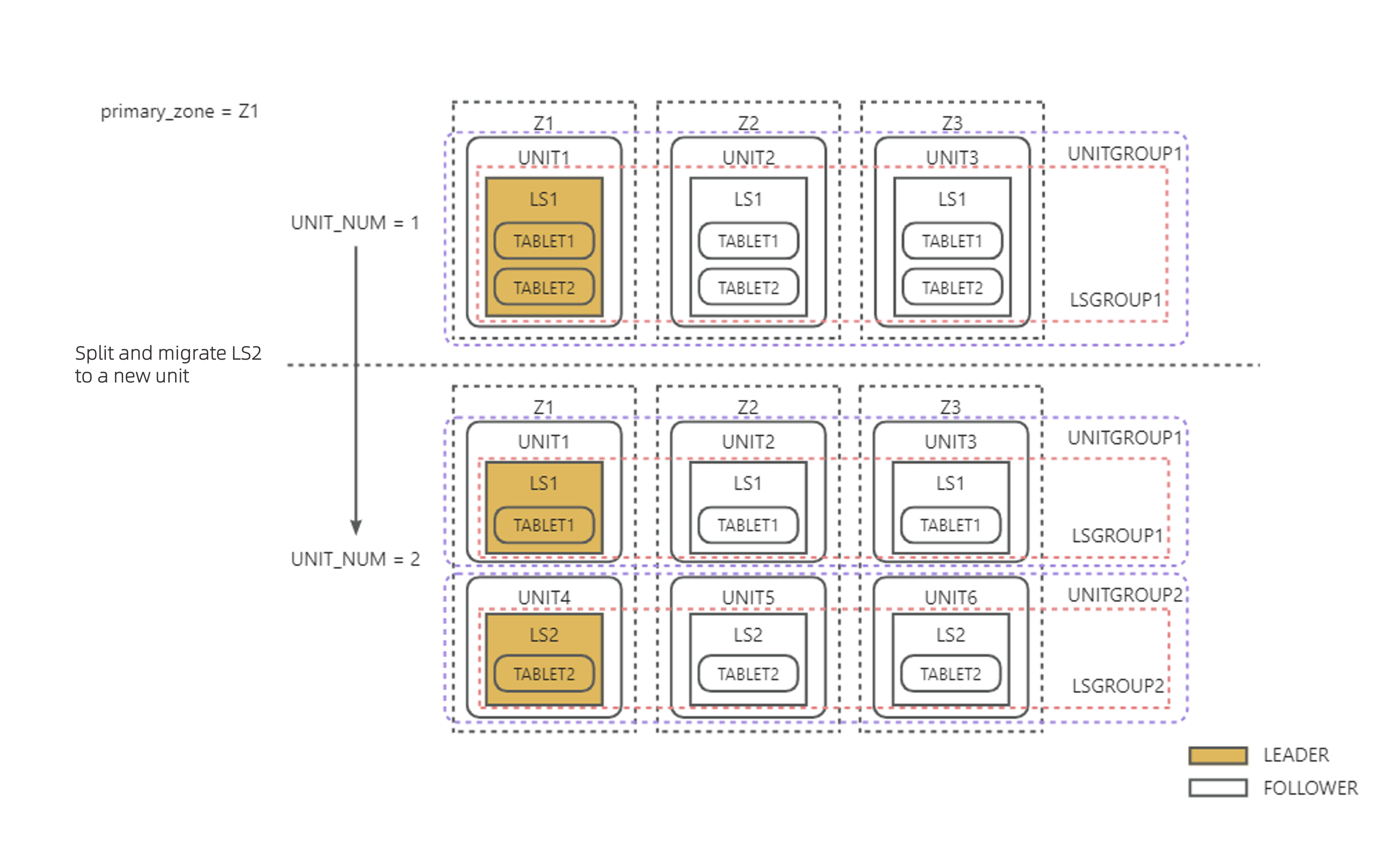

Example 2: As shown in the figure below, PRIMARY_ZONE = Z1 is configured, and UNIT_NUM is changed from 1 to 2. To ensure that every unit has an LS, LS2 is split out, carries 1/2 of the tablets, and is migrated to the new unit.

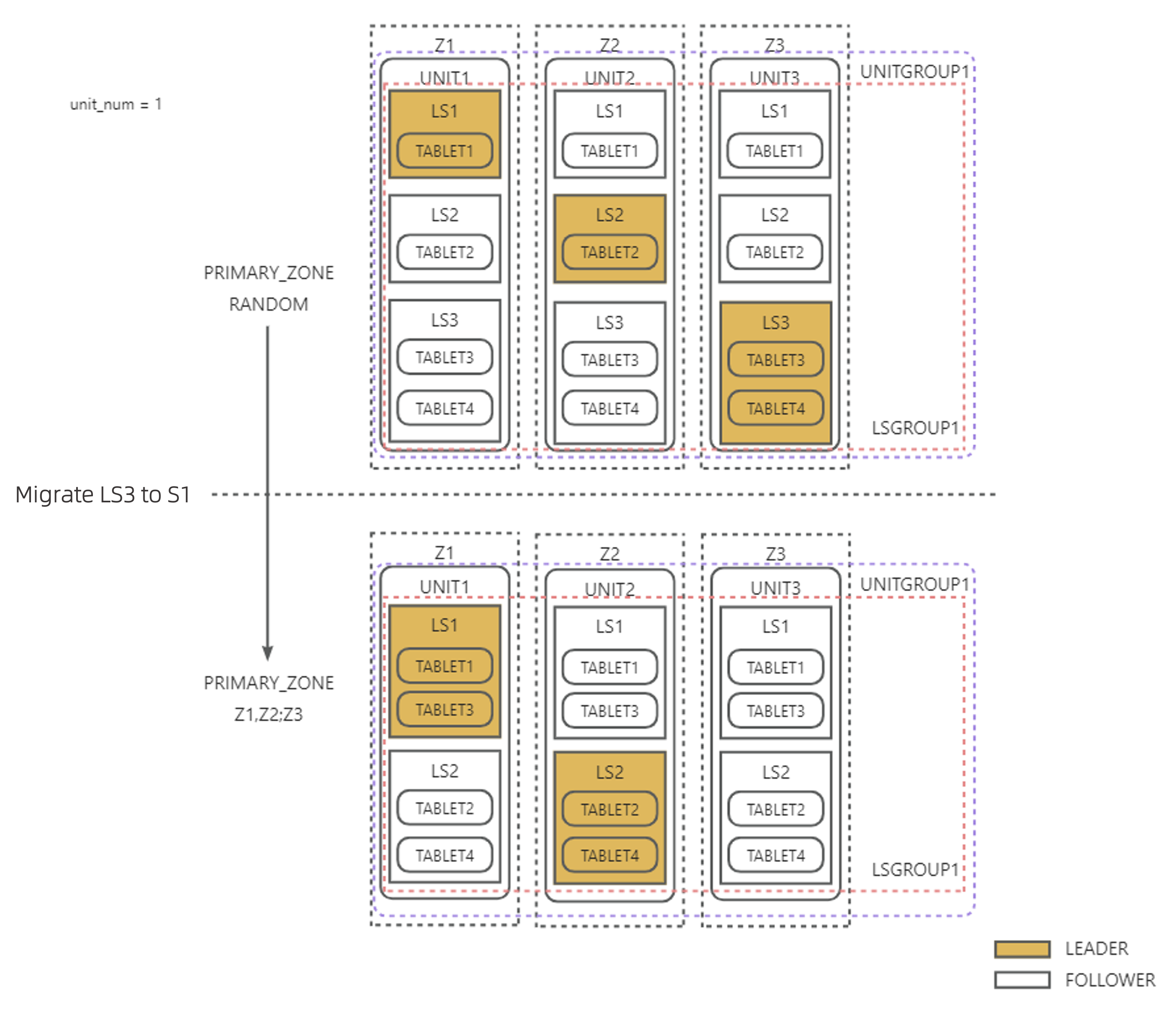

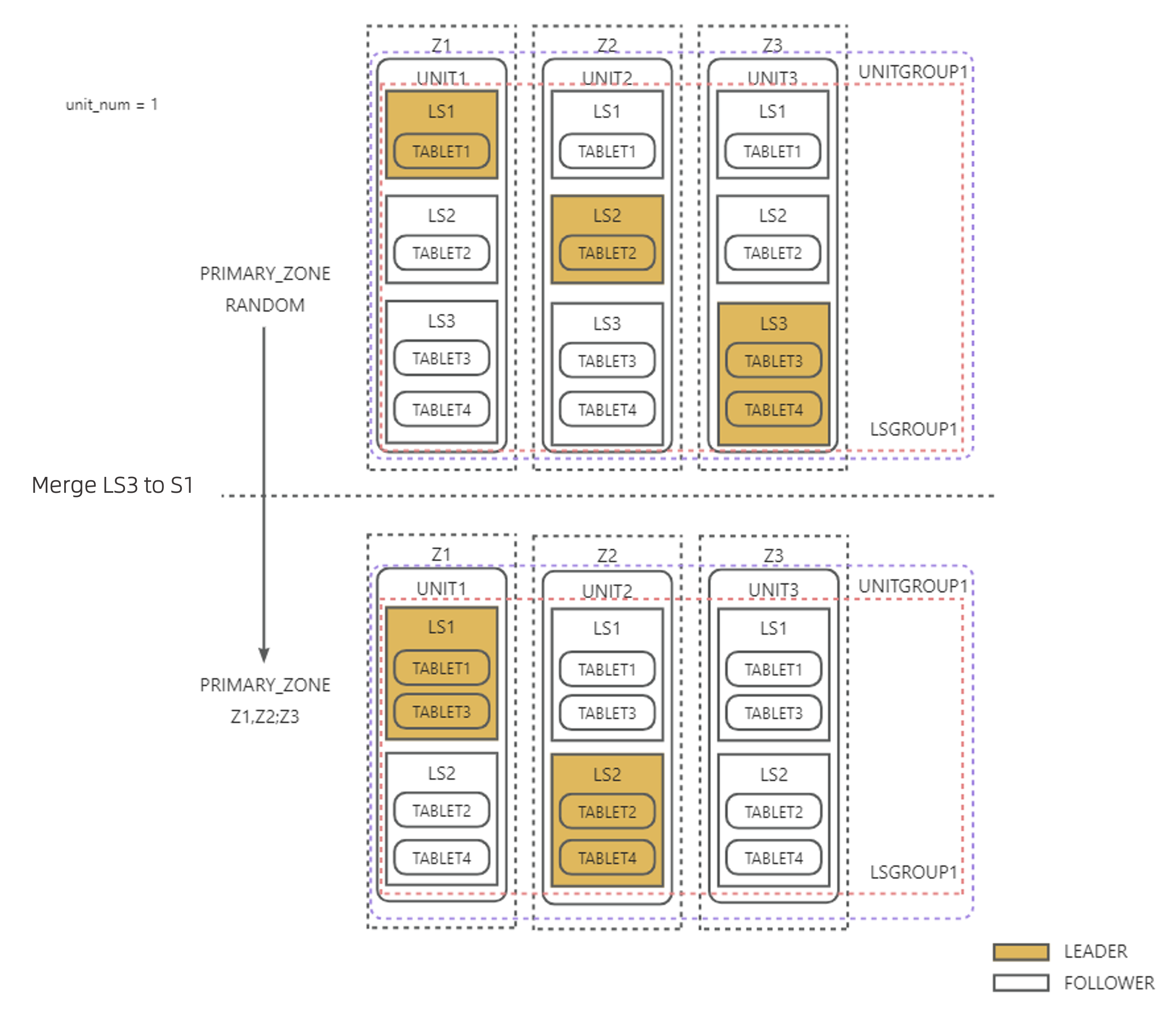

Example 3: As shown in the figure below, UNIT_NUM = 1 is configured, and top priority zones in PRIMARY_ZONE are changed from RANDOM to Z1,Z2. After the balancing is complete, leaders must be only in top priority zones in PRIMARY_ZONE and the tablets must be evenly distributed to LSs. Because LS3 is in the same LS group as LS1 and LS2, you can directly transfer 1/2 of the tablets from LS3 to LS1, and then merge LS3 into LS2. Then, the remaining 1/2 of the tablets will be served by LS2. In this case, tablet distribution remains balanced even though the LS quantity is reduced.

LS leader balancing

LS leader balancing ensures that LS leaders are evenly distributed to primary zones of the top priority on the premise of LS quantity balancing. For example, if you modify PRIMARY_ZONE for a tenant, the load balancing background thread dynamically adjusts the LS quantity based on the priority settings in PRIMARY_ZONE and switches the leader to ensure that each primary zone of the top priority has only one LS leader.

LS leader balancing is controlled by the tenant-level parameter enable_ls_leader_balance. It is enabled by default.

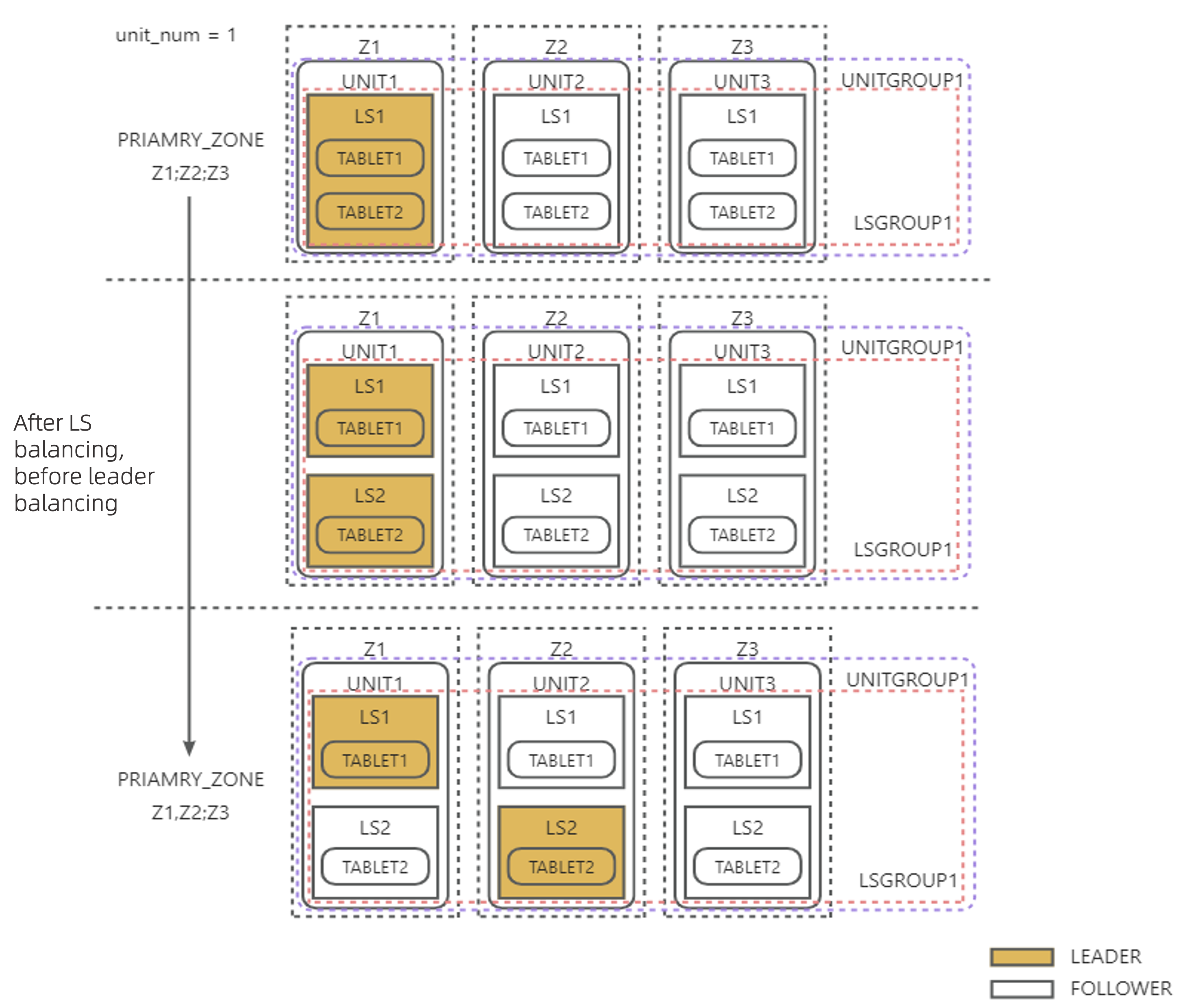

Example 1: As shown in the figure below, UNIT_NUM = 1 is configured, and top priority zones in PRIMARY_ZONE are changed from Z1 to Z1,Z2. To ensure that each top priority zone in PRIMARY_ZONE has a leader, a new LS is created in each unit by splitting the original LS in the same unit, and the leader of the new LS is switched to Z2.

Example 2: As shown in the figure below, UNIT_NUM = 1 is configured and top priority zones in PRIMARY_ZONE are changed from RANDOM to Z1,Z2. After LS3 is merged into another LS to realize LS quantity balancing, LS leaders are naturally evenly distributed in Z1 and Z2 without any additional LS leader balancing actions.

LS balancing (heterogeneous zone mode)

In the current version, OceanBase Database supports the heterogeneous zone mode for tenants. In the heterogeneous zone mode, the number of units in each zone of a tenant can differ, but a tenant can have at most two different UNIT_NUM values.

In the heterogeneous zone mode, the load balancing algorithm controls the distribution of log streams at the unit granularity:

Within each zone of a tenant, each unit has the same number of log streams.

Within zones that have the same UNIT_NUM, log streams are aggregated in the same way as in the homogeneous zone mode.

In the heterogeneous zone mode, the LS quantity is calculated by using the following formula:

LS quantity = (LCM of all UNIT_NUM values of the tenant) * first_level_primary_zone_num
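As a worked illustration of this formula, the following sketch computes the LS quantity from the per-zone UNIT_NUM values. The function name is hypothetical, and the check for at most two distinct values simply mirrors the restriction stated above.

```python
from functools import reduce
from math import gcd


def lcm(a, b):
    """Least common multiple of two positive integers."""
    return a * b // gcd(a, b)


def heterogeneous_ls_quantity(unit_nums, first_level_primary_zone_num):
    """LS quantity = LCM of all UNIT_NUM values * first_level_primary_zone_num."""
    distinct = set(unit_nums)
    if len(distinct) > 2:
        raise ValueError("a tenant can have at most two different UNIT_NUM values")
    return reduce(lcm, distinct) * first_level_primary_zone_num


# Two zones with UNIT_NUM = 2 and one zone with UNIT_NUM = 3, one top priority zone:
# LCM(2, 3) * 1 = 6, so each unit holds 3 LSs in the 2-unit zones and 2 LSs in the 3-unit zone.
print(heterogeneous_ls_quantity([2, 2, 3], first_level_primary_zone_num=1))
```

When all zones have the same UNIT_NUM, the LCM equals UNIT_NUM and the result matches the homogeneous zone mode formula.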

Partition distribution during table creation

When you create a user table, OceanBase Database scatters and aggregates partitions to LSs based on a set of balancing distribution strategies to ensure relative balance of partitions among LSs.

User tables of a specified table group

You can specify a table group to flexibly configure partition aggregation and scattering rules for different tables. If you specify a table group with the corresponding sharding attribute and scope attribute (available in V4.4.2 BP1 and later) when you create a user table, the partitions of the new table are distributed to LSs of the corresponding user based on the alignment rules of the table group. The following table describes the rules.

- Versions before V4.4.2 BP1 (excluding)

| Sharding attribute of a table group | Description | Partitioning method requirement | Alignment rule |
|---|---|---|---|
| NONE | All partitions of all tables are aggregated to the same server. | Unlimited | All partitions are aggregated to the same LS as the partitions of the table with the smallest table_id in the table group. |
| PARTITION | Scattering is performed at the partition granularity. All subpartitions under the same partition in a subpartitioned table are aggregated. | All tables use the same partitioning method. For a subpartitioned table, only its partitioning method is verified. Therefore, a table group with this sharding attribute may contain both partitioned and subpartitioned tables, as long as their partitioning methods are the same. | Partitions with the same partition value are aggregated, including partitions of partitioned tables and all subpartitions under corresponding partitions of subpartitioned tables. |
| ADAPTIVE | Scattering is performed in an adaptive way. If the tables in a table group with this sharding attribute are partitioned tables, scattering is performed at the partition granularity. If the tables are subpartitioned tables, scattering is performed at the subpartition granularity. | | |

- V4.4.2 BP1 and later versions

| SHARDING/SCOPE attribute value | SERVER | ZONE | CLUSTER |
|---|---|---|---|
| NONE | All partitions in the table group are aggregated on the same node. (The partition distribution method is equivalent to SHARDING = NONE in versions before the SCOPE attribute was introduced.) | All partitions in the table group are evenly scattered within a zone. | All partitions in the table group are evenly scattered across all nodes. |
| PARTITION | Not supported. | Not supported. | Partitions are clustered at partition granularity, and each partition group is randomly scattered. (The partition distribution method is equivalent to SHARDING = PARTITION in versions before the SCOPE attribute was introduced.) |
| ADAPTIVE | Not supported. | Not supported. | For partitioned tables: Partitions are clustered at partition granularity, and each partition group is scattered across the entire cluster. For subpartitioned tables: Under each partition, subpartitions are clustered at subpartition granularity, and each partition group is scattered across the entire cluster. (The partition distribution method is equivalent to SHARDING = ADAPTIVE in versions before the SCOPE attribute was introduced.) |

Notice

If the table group already contains user tables when you create a new table, the new table's partitions are aligned to those of the user table with the smallest table_id in the table group.

For more information about table groups, see Overview of table groups.

Normal user tables

If you create a normal user table without specifying the table group, OceanBase Database scatters the partitions of the new table to all LSs of the user by default. The specific rules are as follows:

For non-partitioned tables, the partition of the new table is placed on the LS with the fewest partitions.

For partitioned tables, partitions are evenly distributed to all user log streams based on the round-robin algorithm.

For subpartitioned tables, all subpartitions under each partition are evenly distributed to all user log streams based on the round-robin algorithm.

Here are some examples:

Example 1: In a MySQL-compatible tenant, create non-partitioned tables named tt1, tt2, tt3, and tt4.

    obclient [test]> CREATE TABLE tt1(c1 int);
    obclient [test]> CREATE TABLE tt2(c1 int);
    obclient [test]> CREATE TABLE tt3(c1 int);
    obclient [test]> CREATE TABLE tt4(c1 int);

Query the partition distribution of the four tables.

    obclient [test]> SELECT table_name,partition_name,subpartition_name,ls_id,zone FROM oceanbase.DBA_OB_TABLE_LOCATIONS WHERE table_name in('tt1','tt2','tt3','tt4') AND role='LEADER';

The query result is as follows:

    +------------+----------------+-------------------+-------+------+
    | table_name | partition_name | subpartition_name | ls_id | zone |
    +------------+----------------+-------------------+-------+------+
    | tt1        | NULL           | NULL              |  1001 | z1   |
    | tt2        | NULL           | NULL              |  1002 | z2   |
    | tt3        | NULL           | NULL              |  1003 | z3   |
    | tt4        | NULL           | NULL              |  1001 | z1   |
    +------------+----------------+-------------------+-------+------+
    4 rows in set

Example 2: Create a partitioned table named tt5.

    obclient [test]> CREATE TABLE tt5(c1 int) PARTITION BY HASH(c1) PARTITIONS 6;

Query the distribution of the partitioned table.

    obclient [test]> SELECT table_name,partition_name,subpartition_name,ls_id,zone FROM oceanbase.DBA_OB_TABLE_LOCATIONS WHERE table_name ='tt5' AND role='LEADER';

The query result is as follows. The partitions of the table are evenly distributed to 1001, 1002, and 1003 based on the round-robin algorithm.

    +------------+----------------+-------------------+-------+------+
    | table_name | partition_name | subpartition_name | ls_id | zone |
    +------------+----------------+-------------------+-------+------+
    | tt5        | p0             | NULL              |  1003 | z3   |
    | tt5        | p1             | NULL              |  1001 | z1   |
    | tt5        | p2             | NULL              |  1002 | z2   |
    | tt5        | p3             | NULL              |  1003 | z3   |
    | tt5        | p4             | NULL              |  1001 | z1   |
    | tt5        | p5             | NULL              |  1002 | z2   |
    +------------+----------------+-------------------+-------+------+
    6 rows in set

Example 3: Create a subpartitioned table named tt8.

    obclient [test]> CREATE TABLE tt8 (c1 int, c2 int, PRIMARY KEY(c1, c2))
        PARTITION BY HASH(c1) SUBPARTITION BY RANGE(c2)
        SUBPARTITION TEMPLATE (
          SUBPARTITION p0 VALUES LESS THAN (1990),
          SUBPARTITION p1 VALUES LESS THAN (2000),
          SUBPARTITION p2 VALUES LESS THAN (3000),
          SUBPARTITION p3 VALUES LESS THAN (4000),
          SUBPARTITION p4 VALUES LESS THAN (5000),
          SUBPARTITION p5 VALUES LESS THAN (MAXVALUE))
        PARTITIONS 2;

Query the partition distribution of the tt8 table.

    obclient [test]> SELECT table_name,partition_name,subpartition_name,ls_id,zone FROM oceanbase.DBA_OB_TABLE_LOCATIONS WHERE table_name ='tt8' AND role='LEADER';

The query result is as follows. The subpartitions under each partition of the table are evenly distributed to all user log streams based on the round-robin algorithm.

    +------------+----------------+-------------------+-------+------+
    | table_name | partition_name | subpartition_name | ls_id | zone |
    +------------+----------------+-------------------+-------+------+
    | tt8        | p0             | p0sp0             |  1001 | z1   |
    | tt8        | p0             | p0sp1             |  1002 | z2   |
    | tt8        | p0             | p0sp2             |  1003 | z3   |
    | tt8        | p0             | p0sp3             |  1001 | z1   |
    | tt8        | p0             | p0sp4             |  1002 | z2   |
    | tt8        | p0             | p0sp5             |  1003 | z3   |
    | tt8        | p1             | p1sp0             |  1002 | z2   |
    | tt8        | p1             | p1sp1             |  1003 | z3   |
    | tt8        | p1             | p1sp2             |  1001 | z1   |
    | tt8        | p1             | p1sp3             |  1002 | z2   |
    | tt8        | p1             | p1sp4             |  1003 | z3   |
    | tt8        | p1             | p1sp5             |  1001 | z1   |
    +------------+----------------+-------------------+-------+------+
    12 rows in set
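The placement rules for normal user tables can be mimicked with a short Python sketch. It is a simplified model rather than OceanBase's implementation. The function names are hypothetical, ties between equally loaded LSs are broken by the lowest LS ID, and the round-robin starting position is passed in as `start` because the source does not state how it is chosen.

```python
def place_non_partitioned(ls_counts):
    """Place one non-partitioned table on the LS with the fewest partitions.

    ls_counts maps LS ID -> current partition count and is updated in place.
    Assumption: ties are broken by the lowest LS ID.
    """
    ls_id = min(ls_counts, key=lambda k: (ls_counts[k], k))
    ls_counts[ls_id] += 1
    return ls_id


def place_round_robin(partition_names, ls_ids, start=0):
    """Assign partitions to LSs in round-robin order, beginning at index `start`."""
    return {
        name: ls_ids[(start + i) % len(ls_ids)]
        for i, name in enumerate(partition_names)
    }


counts = {1001: 0, 1002: 0, 1003: 0}
print([place_non_partitioned(counts) for _ in range(4)])

# With start=2 the mapping matches the tt5 query result shown above.
print(place_round_robin([f"p{i}" for i in range(6)], [1001, 1002, 1003], start=2))
```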

Special user tables

For special user tables, such as local index tables, global index tables, and replicated tables, OceanBase Database distributes their partitions to LSs based on the following special rules:

Local index tables have the same partitioning rules as primary user tables. Each partition is bound to the partition distribution of the primary user table.

Global index tables are non-partitioned tables by default. Partitions in such tables are distributed to the user LS with the fewest partitions during table creation.

Replicated tables exist only on broadcast LSs.

Partition balancing

On the basis of LS balancing, the load balancing module uses the transfer feature to scatter and aggregate partition tablets to LSs, thus achieving partition balancing within a tenant. The partition balancing task SCHEDULED_TRIGGER_PARTITION_BALANCE is controlled by the DBMS_BALANCE.TRIGGER_PARTITION_BALANCE subprogram. By default, partition balancing is triggered once daily at 00:00. For more information about partition balancing operations, see Configure a scheduled partition balancing task.

The partition balancing strategies are in the following priority order:

Partition attribute alignment > Table group and partition weight balancing > Partition quantity balancing > Partition disk balancing

Partition attribute alignment

Table group alignment

After you use an SQL statement to modify the table group attribute of a table, or the sharding attribute or SCOPE attribute (supported in V4.4.2 BP1 and later) of a table group, the partition distribution meets your expectations only after the background partition balancing task completes. You can manually call the DBMS_BALANCE.TRIGGER_PARTITION_BALANCE subprogram to trigger partition balancing for quick alignment.

For more information about the partition distribution rules of tables in a table group, see User tables of a specified table group in this topic.

For more information about table groups, see Overview of table groups.

duplicate_scope alignment

The current version of OceanBase Database supports changing the attributes of replicated tables. Replicated tables can exist only on broadcast log streams. After you modify the duplicate_scope attribute, the change takes effect as expected only after partition balancing completes. You can manually call the DBMS_BALANCE.TRIGGER_PARTITION_BALANCE subprogram to trigger partition balancing for quick alignment.

For more information about how to change replicated table attributes, see Change the attributes of a replicated table (MySQL-compatible mode) and Change the attributes of a replicated table (Oracle-compatible mode).

Table group weight balancing

In the MySQL-compatible mode of OceanBase Database, user tables are aggregated by binding a database to a table group with Sharding = 'NONE'. The current version supports automatic aggregation of user tables within the same database and aggregation of user tables across multiple databases.

By setting weights for table groups with Sharding = 'NONE', table group weight balancing helps distribute tables in the table group according to their weights.

Table group weight balancing is a process in the partition balancing (PARTITION_BALANCE) task. You can trigger it manually or wait for the scheduled partition balancing task to run.

Partition weight balancing (non-table group)

Partition weight is the relative proportion of CPU, memory, disk, and other resources that users set for partitions. Only integer values are supported, in the range [1, +∞). By default, partitions have no weight.

Non-table group partition weight balancing is a process in the partition balancing task. After you set partition weights for a table, you can trigger the partition balancing task manually or wait for the scheduled partition balancing task to run. The partition weight balancing algorithm uses partition movement and partition exchange to minimize the variance of the sum of partition weights on each user log stream.

Only tables with partition weights set participate in partition weight balancing. Partitions without weights in a table are still balanced by partition count.
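To make the objective concrete, the following sketch runs a simple greedy search that applies single-partition moves and pairwise exchanges while they lower the variance of the per-LS weight sums. It is an illustrative stand-in, not the algorithm OceanBase uses. The function names are hypothetical, and only weighted partitions are modeled.

```python
from itertools import permutations
from statistics import pvariance


def weight_variance(placement, weights):
    """Variance of the summed partition weights on each log stream."""
    return pvariance([sum(weights[p] for p in parts) for parts in placement])


def candidates(placement):
    """Yield every layout reachable by one partition move or one pairwise exchange."""
    for src, dst in permutations(range(len(placement)), 2):
        for p in placement[src]:
            moved = [list(parts) for parts in placement]
            moved[src].remove(p)
            moved[dst].append(p)
            yield moved
            for q in placement[dst]:
                swapped = [list(parts) for parts in moved]
                swapped[dst].remove(q)
                swapped[src].append(q)
                yield swapped


def balance_by_weight(placement, weights):
    """Greedy sketch: keep applying the best move or exchange while variance drops."""
    placement = [list(parts) for parts in placement]
    while True:
        current = weight_variance(placement, weights)
        best = min(candidates(placement),
                   key=lambda c: weight_variance(c, weights), default=None)
        if best is None or weight_variance(best, weights) >= current:
            return placement
        placement = best


# Two weighted hotspot partitions start on the same LS; the other LSs hold none.
result = balance_by_weight([["p0", "p3"], [], []], {"p0": 1, "p3": 1})
print(result)
```

In this run the two weighted partitions end up on different log streams, which is the scattering effect that the weights are meant to achieve.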

Application of partition weight balancing

Partition weights for non-table groups are mainly used for partition hotspot scattering. The following two scenarios are supported:

Hotspot partition scattering within a partitioned table

Hotspot partition scattering across non-partitioned tables

Note

- Intra-table balancing for subpartitioned tables is not supported in the current version.

- Scenarios with a mix of non-partitioned and partitioned tables fall under inter-table weight balancing.

When setting partition weights, we recommend using at most three tiers:

Large weight = 100% × partition count

Medium weight = 50% × partition count

Small weight = 1
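The three recommended tiers can be computed from the partition count as follows. The helper name is hypothetical. Because weights must be integers, the sketch rounds the medium tier down and keeps it at 1 or above, which is an assumption for odd partition counts that the source does not cover.

```python
def recommended_weight_tiers(partition_count):
    """Return the large, medium, and small weight tiers for a partition count."""
    return {
        "large": partition_count,                 # 100% x partition count
        "medium": max(1, partition_count // 2),   # 50% x partition count, rounded down (assumption)
        "small": 1,
    }


print(recommended_weight_tiers(6))
```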

The following examples illustrate the application of partition weight balancing.

Hotspot partition scattering within a partitioned table

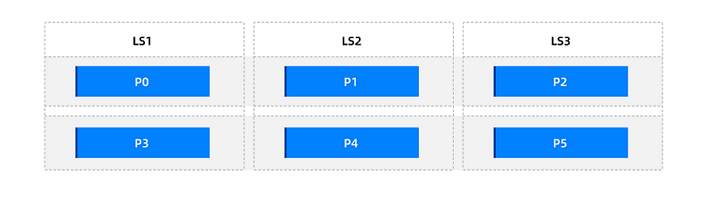

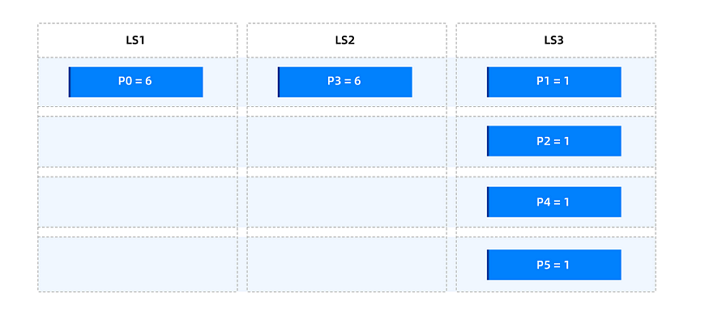

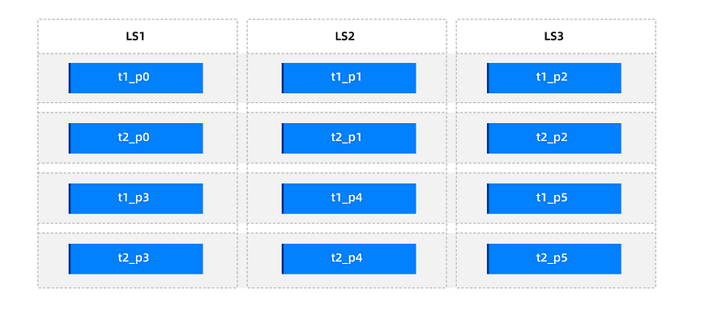

For example, assume a partitioned table t1 with 6 partitions. After the table is created, the partition distribution is as follows.

Application scenarios:

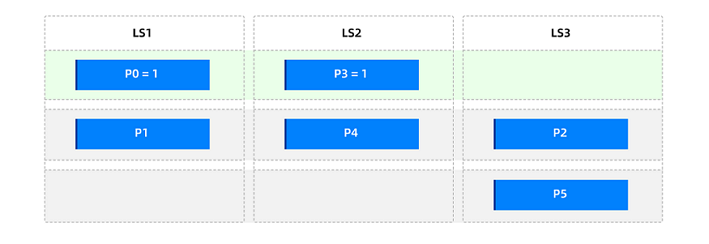

Scenario 1: Scattering a few hotspots

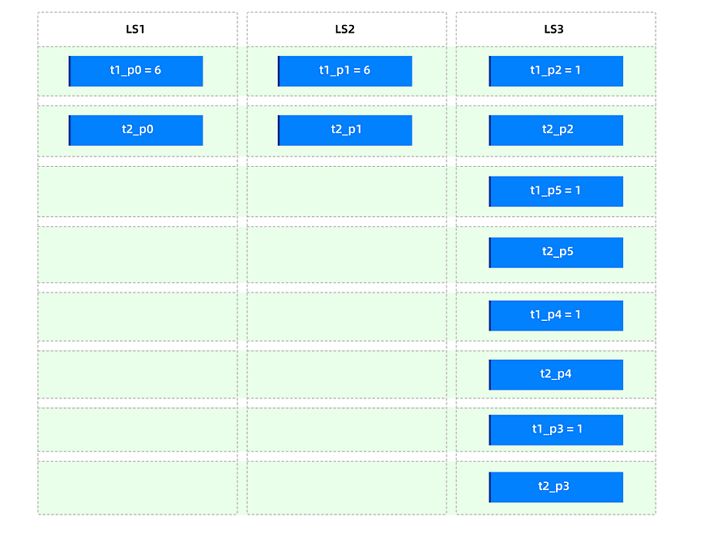

Assume that only p0 and p3 in table t1 are hotspot partitions that need to be scattered. To minimize the number of weight tiers, you can use only one tier: "small weight = 1".

Set partition weights p0 = 1 and p3 = 1. During partition balancing, only these two partitions participate in weight balancing. The remaining partitions do not participate and are balanced by partition count with minimal movement. After partition balancing (manual trigger or scheduled), the overall distribution is as follows.

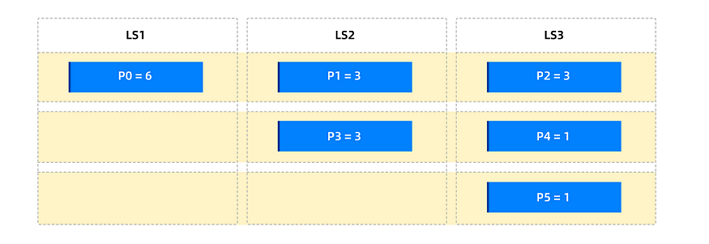

Scenario 2: Distributing all partitions by weight

Assume that p0 in table t1 has the highest traffic, p1, p2, and p3 have medium traffic, and the rest have low traffic. To scatter traffic, use the following three weight tiers:

Large weight = 100% × partition count of the partitioned table = 6

Medium weight = 50% × partition count of the partitioned table = 3

Small weight = 1

First set the table-level partition weight to 1 to define the scope of weight balancing for the entire table. Then set partition weights p0 = 6, p1 = 3, p2 = 3, and p3 = 3. After partition balancing, the overall distribution is as follows.

Scenario 3: Hotspot partitions exclusive within a balancing group

Assume that the 6 partitions in table t1 have highly uneven traffic, with two oversized partitions p0 and p3. You want other partitions in the table to not affect the read and write performance of these two. Use the following two weight tiers:

Large weight = 100% × partition count of the partitioned table = 6

Small weight = 1

First set the table-level partition weight to 1, then set partition weights p0 = 6 and p3 = 6. After partition balancing, the overall distribution is as follows.

Hotspot scattering for non-partitioned tables

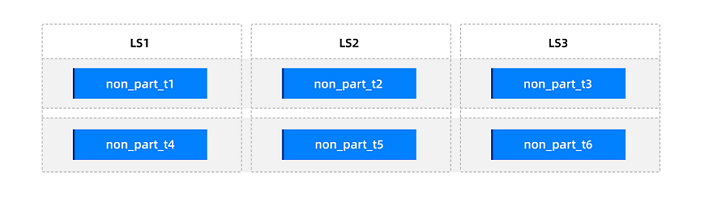

For example, assume 6 non-partitioned tables. After they are created, the distribution is as follows.

Application scenarios:

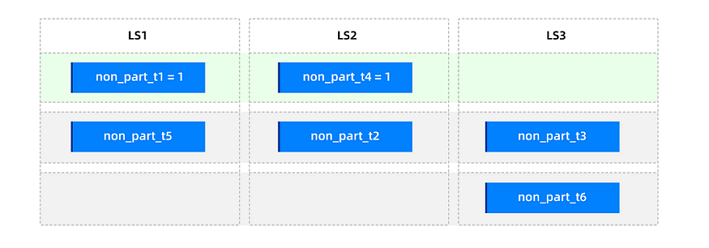

Scenario 1: Scattering a few hotspots

Assume that non_part_t1 and non_part_t4 are two hotspots on the same log stream that need to be scattered. To minimize the number of weight tiers, use only one tier: "small weight = 1".

Set table-level weights non_part_t1 = 1 and non_part_t4 = 1. After partition balancing, the distribution is as follows.

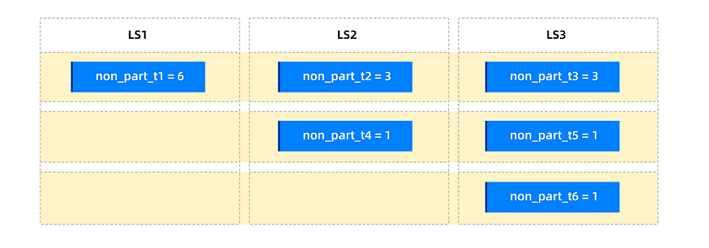

Scenario 2: Distributing by traffic

Assume that the traffic of each non-partitioned table is known: non_part_t1 is highest, non_part_t2 and non_part_t3 are medium, and non_part_t4, non_part_t5, and non_part_t6 are lowest. To scatter by traffic, use the following three tiers:

Large weight = 100% × partition count = 6

Medium weight = 50% × partition count = 3

Small weight = 1

Set table-level partition weights non_part_t1 = 6, non_part_t2 = 3, non_part_t3 = 3, non_part_t4 = 1, non_part_t5 = 1, and non_part_t6 = 1. After partition balancing, the distribution is as follows.

Impact of partition weight on table groups

Tables with partition weights can be added to table groups. For table groups, if a table group contains tables with partition weights, partition balancing calculates distribution based on the weighted sum within the partition group. The following table describes the impact of adding tables with partition weights to table groups.

Versions earlier than V4.4.2 BP1

| Sharding attribute of the table group | Original partition distribution | Impact after adding partition weights |
|---|---|---|
| NONE | All aggregated | No impact |
| PARTITION | Partitions with the same partition value are aggregated (same partition group); partitions are scattered. | A few weighted partitions affect the overall distribution of the table group. |
| ADAPTIVE | When every table in the group is a partitioned table (without subpartitions), partitions with the same partition value are aggregated (same partition group); partitions are scattered. When every table in the group is subpartitioned, subpartitions with the same partition and subpartition values are aggregated (same partition group); subpartitions are scattered. | When every table in the group is a partitioned table (without subpartitions), a few weighted partitions affect the overall distribution. Partition weights for subpartitioned tables are not supported in the current version. |

The following example illustrates the impact of setting partition weights for a table in a table group on the overall distribution.

Assume a table group with SHARDING = PARTITION that contains two partitioned tables t1 and t2, each with 6 partitions. The partitions of each table form a partition group. The initial distribution is as follows.

Set the weight of all partitions in table t1 to 1, then set t1_p0 = 6 and t1_p1 = 6. After partition balancing, the partition distribution in the table group is as follows.

V4.4.2 BP1 and later

| Sharding attribute and scope of the table group | Original partition distribution | Impact after adding partition weights |
|---|---|---|
| SHARDING = 'NONE' + SCOPE = 'SERVER' | All aggregated | No impact |
| SHARDING = 'PARTITION' + SCOPE = 'CLUSTER' | Partitions with the same partition value are aggregated (same partition group); partitions are scattered. | A few weighted partitions affect the overall distribution of the table group. |
| SHARDING = 'ADAPTIVE' + SCOPE = 'CLUSTER' | When every table in the group is a partitioned table (without subpartitions), partitions with the same partition value are aggregated (same partition group); partitions are scattered. When every table in the group is subpartitioned, subpartitions with the same partition and subpartition values are aggregated (same partition group); subpartitions are scattered. | When every table in the group is a partitioned table (without subpartitions), a few weighted partitions affect the overall distribution. Partition weights for subpartitioned tables are not supported in the current version. |

Note

SHARDING = 'NONE' + SCOPE = 'ZONE' and SHARDING = 'NONE' + SCOPE = 'CLUSTER' do not support partition or table weights.

Partition quantity balancing

Partition quantity balancing aims to evenly distribute the partitions of the primary user tables to all LSs of the user, with a partition quantity deviation no greater than 1 between each two LSs.

OceanBase Database uses the concept of balancing group to describe the relationship between partitions to be scattered. Such partitions are divided into balancing groups to achieve partition quantity balancing based on intra-group balancing and inter-group balancing.

The following table describes the balancing group division rules in scenarios where no table group exists.

| Table type | Balancing group division rule | Distribution method |
|---|---|---|
| Non-partitioned tables | All non-partitioned tables under a tenant are in one balancing group. | All non-partitioned tables under a tenant are evenly distributed to all LSs of the user, with a quantity deviation no greater than 1. |
| Partitioned tables | All partitions of a single table are in one balancing group. | Partitions of a single table are evenly distributed to all LSs of the user. |
| Subpartitioned tables | All subpartitions under each partition of a single table are in one balancing group. | All subpartitions under each partition of a single table are evenly distributed to all LSs of the user. |

In the balancing stage, the system first evenly distributes partitions for each balancing group to achieve intra-group balancing, and then transfers partitions from the LS with the most partitions to achieve inter-group balancing, thus achieving partition quantity balancing.

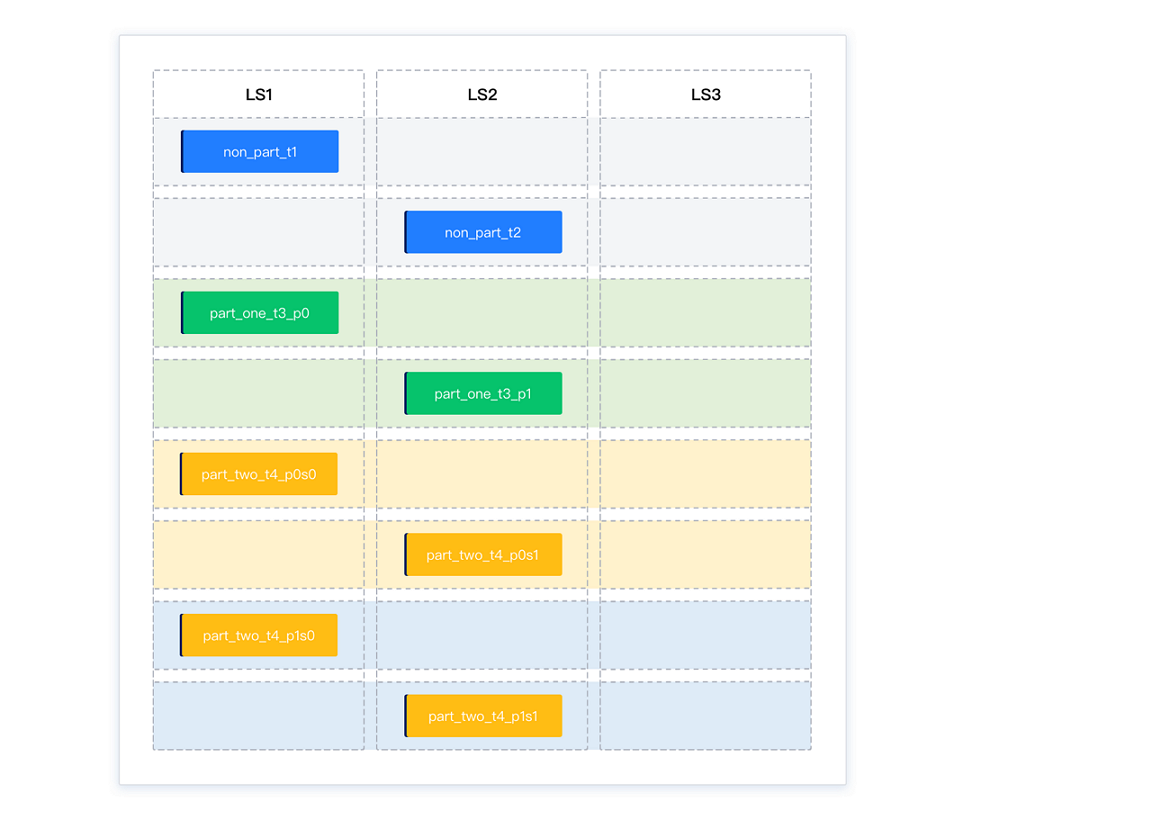

Assume that all partitions of four tables are in LS1. The partitions are divided into three balancing groups:

Balancing group 1: non-partitioned tables non_part_t1 and non_part_t2

Balancing group 2: partitions part_one_t3_p0 and part_one_t3_p1 of a partitioned table

Balancing group 3: subpartitions part_two_t4_p0s0, part_two_t4_p0s1, part_two_t4_p1s0, and part_two_t4_p1s1 of a subpartitioned table

The initial distribution of partitions is 8-0-0.

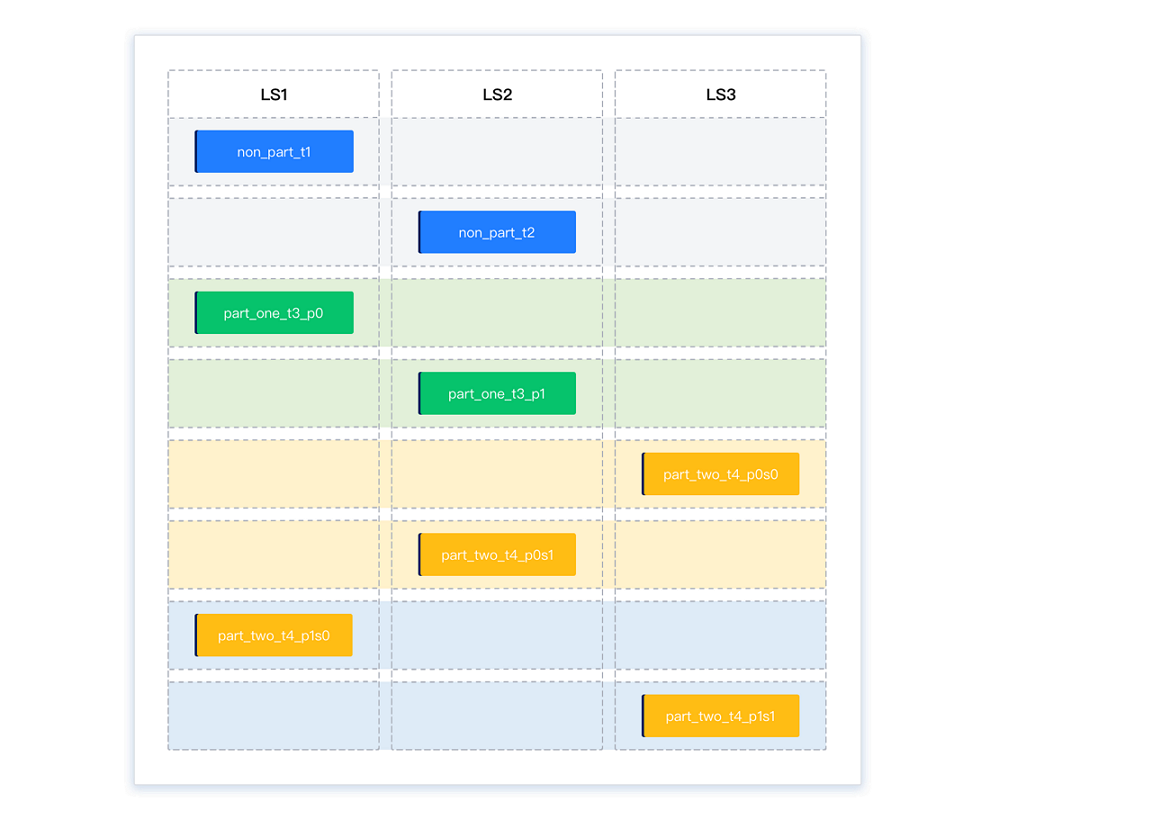

Upon intra-group balancing, the distribution of partitions is 4-4-0. Each balancing group is balanced, but the tenant overall is not.

One partition is transferred each from LS1 and LS2, which have the most partitions, to LS3, which has the least partitions, to achieve inter-group balancing. The distribution of partitions is now 3-3-2, which means that overall balancing is achieved.
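The two stages in this example can be reproduced with a small simulation. The sketch below is a simplified model, not OceanBase's implementation, and its function names are hypothetical. It follows the division rule in the table above by treating the subpartitions under each partition of the subpartitioned table as their own group, which is what yields the 4-4-0 intermediate state. It also assumes that a partition is moved between LSs only when its group has more members on the source LS than on the destination LS.

```python
from collections import Counter


def intra_group_balance(groups, ls_count):
    """Spread each balancing group over the LSs in order (LS1, LS2, ...)."""
    placement = [[] for _ in range(ls_count)]
    for group_id, partitions in groups.items():
        for i, name in enumerate(partitions):
            placement[i % ls_count].append((group_id, name))
    return placement


def inter_group_balance(placement):
    """Move partitions from the fullest LS to the emptiest until counts differ by at most 1."""
    while True:
        sizes = [len(parts) for parts in placement]
        src, dst = sizes.index(max(sizes)), sizes.index(min(sizes))
        if sizes[src] - sizes[dst] <= 1:
            return placement
        on_src = Counter(group for group, _ in placement[src])
        on_dst = Counter(group for group, _ in placement[dst])
        # Only move a partition if its group is more heavily represented on the source.
        movable = [p for p in placement[src] if on_src[p[0]] > on_dst[p[0]]]
        if not movable:
            return placement
        placement[src].remove(movable[0])
        placement[dst].append(movable[0])


groups = {
    "non_part": ["non_part_t1", "non_part_t2"],
    "part_one_t3": ["part_one_t3_p0", "part_one_t3_p1"],
    "part_two_t4_p0": ["part_two_t4_p0s0", "part_two_t4_p0s1"],
    "part_two_t4_p1": ["part_two_t4_p1s0", "part_two_t4_p1s1"],
}
placement = intra_group_balance(groups, ls_count=3)
print([len(parts) for parts in placement])   # after intra-group balancing
placement = inter_group_balance(placement)
print([len(parts) for parts in placement])   # after inter-group balancing
```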

When table groups exist, each table group is an independent balancing group. The system binds the partitions to be aggregated in a table group and takes them as one partition. Then the system adopts the same intra-group balancing and inter-group balancing methods to achieve partition quantity balancing. When table groups exist, partition quantity balancing may not be fully achieved in a tenant.

The following table describes the balancing group division rules in scenarios where table groups exist.

| Table type | Balancing group division rule | Distribution method |
|---|---|---|
| Tables in a table group with the sharding attribute NONE | All tables in a table group are in one balancing group. | All partitions in a table group are distributed to the same LS. |
| Partitioned tables in a table group with the sharding attribute PARTITION or ADAPTIVE | Partitions of all tables in a table group are in one balancing group. | Partitions of the first table are evenly scattered to all LSs, and each partition of subsequent tables is aggregated to the same LS as the corresponding partition in the first table. |
| Subpartitioned tables in a table group with the sharding attribute ADAPTIVE | All subpartitions under each partition of all tables in a table group are in one balancing group. | Subpartitions of the first table are evenly scattered to all LSs, and each subpartition of subsequent tables is aggregated to the same LS as the corresponding subpartition in the first table. |

Assume that you add a table group named tg1 with the sharding = 'NONE' configuration after the preceding balancing is achieved. The table group contains non-partitioned tables non_part_t5_in_tg1, non_part_t6_in_tg1, non_part_t7_in_tg1, non_part_t8_in_tg1, and non_part_t9_in_tg1. Because all tables in the tg1 table group must be bound together, the partition distribution is 4-4-5 even after balancing is completed.
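The 4-4-5 result can be reproduced with a short sketch in which the table group is treated as one indivisible block. This is an illustrative model only. The function name is hypothetical, and it assumes that the block lands on the least-loaded LS and that only partitions outside the table group can be moved afterwards.

```python
def add_bound_table_group(free_counts, group_size):
    """Return per-LS partition totals after adding a table group that must stay together.

    free_counts: partitions per LS that can still be moved individually.
    group_size: number of partitions in the SHARDING = 'NONE' table group.
    """
    free = list(free_counts)
    bound = [0] * len(free)
    bound[free.index(min(free))] = group_size  # block goes to the least-loaded LS

    while True:
        totals = [f + b for f, b in zip(free, bound)]
        donors = [i for i in range(len(free)) if free[i] > 0]
        if not donors:
            return totals
        src = max(donors, key=lambda i: totals[i])
        dst = totals.index(min(totals))
        if totals[src] - totals[dst] <= 1:
            return totals
        free[src] -= 1  # move one individually movable partition
        free[dst] += 1


# Start from the balanced 3-3-2 layout and add the five tables of tg1.
print(add_bound_table_group([3, 3, 2], group_size=5))
```

Once the LS that hosts the block has no movable partitions left, the remaining deviation cannot be reduced, which is why full quantity balancing is not reached.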

SCOPE = 'ZONE' table groups

Starting from OceanBase Database V4.4.2 BP1, table groups support the SCOPE attribute. The distribution of partitions within a table group is determined by both the SHARDING and SCOPE attributes. The SHARDING attribute specifies the aggregation method for partitions across tables within the table group. A group of aggregated partitions is called a partition group, which is the smallest unit for distribution. The SCOPE attribute determines the distribution range of all partition groups within the table group.

Quantity balancing

For SCOPE = 'ZONE' (i.e., SHARDING = 'NONE' + SCOPE = 'ZONE'), the number of table groups in each zone is balanced as much as possible. All partitions in a table group are evenly distributed across log streams by round robin among log streams that share the same Primary Zone configuration.

Below is a simple example to demonstrate the distribution effect of SCOPE = 'ZONE' table groups.

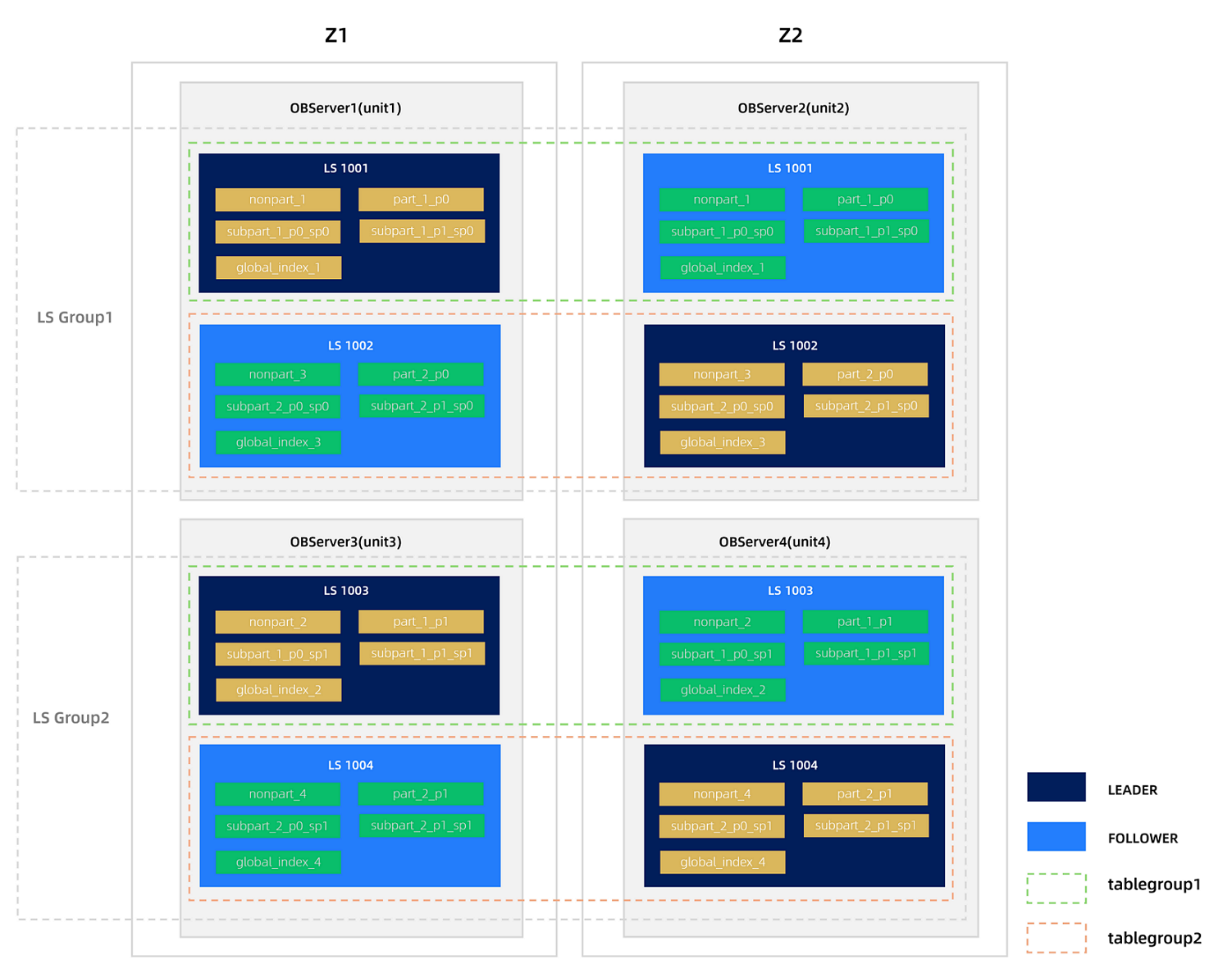

Assume a tenant has a locality of FULL{1}@z1, FULL{1}@z2 (2F1A), with UNIT_NUM = 2 and PRIMARY_ZONE = 'z1,z2'. The tenant has the following tables:

Non-partitioned tables: nonpart_1, nonpart_2, nonpart_3, nonpart_4

Partitioned tables: part_1, part_2

Subpartitioned tables: subpart_1, subpart_2

Global index tables: global_index_1, global_index_2, global_index_3, global_index_4

Create two table groups, tablegroup1 and tablegroup2, in the tenant.

obclient> CREATE TABLEGROUP tablegroup1 SHARDING = 'NONE', SCOPE = 'ZONE';

obclient> CREATE TABLEGROUP tablegroup2 SHARDING = 'NONE', SCOPE = 'ZONE';

Then, add the corresponding tables to these two table groups.

obclient> ALTER TABLEGROUP tablegroup1 ADD TABLE nonpart_1,nonpart_2,part_1,subpart_1,global_index_1, global_index_2;

obclient> ALTER TABLEGROUP tablegroup2 ADD TABLE nonpart_3,nonpart_4,part_2,subpart_2,global_index_3, global_index_4;

After partition balancing, the distribution effect is as follows:

Weight balancing

For SCOPE = 'ZONE' table groups, you can set weights (supported only in MySQL-compatible mode) to disperse hot table groups across different zones.

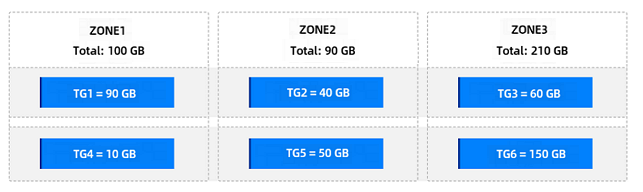

Below is an example. Assume the tenant has six SHARDING = 'NONE' and SCOPE = 'ZONE' table groups without weights. The distribution is as follows.

Scenario 1: Disperse a small number of hot table groups

Assume TG1 and TG4 are two hot table groups in the same zone and need to be dispersed. To minimize the number of weight levels, you can use only one weight level "Small Weight = 1" to solve the issue.

After setting the weights of TG1 and TG4 to 1, the distribution is as follows.

Scenario 2: Spread by traffic

Assume the user knows the traffic of each table group. TG1 has the highest traffic, followed by TG2 and TG3, and TG4, TG5, and TG6 have the lowest traffic. The user wants table groups spread according to traffic.

You can use three weight levels:

Large Weight = 100% * Expected Number of Weighted Table Groups = 6

Medium Weight = 50% * Expected Number of Weighted Table Groups = 3

Small Weight = 1

After setting the weights of TG1 to 6, TG2 to 3, TG3 to 3, TG4 to 1, TG5 to 1, and TG6 to 1, the distribution after partition balancing is as follows.
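The arithmetic behind the three weight levels can be written out as a small helper. This is only a sketch of the calculation shown above: the function name is hypothetical, and rounding the medium level down (with a floor of 1) is an assumption, since the text only covers the case of six table groups.

```python
def weight_levels(expected_weighted_tablegroups):
    """Derive the three weight levels from the expected number of
    weighted table groups (hypothetical helper)."""
    large = expected_weighted_tablegroups  # 100% of the expected number
    # 50% of the expected number; integer rounding is an assumption.
    medium = max(1, expected_weighted_tablegroups * 50 // 100)
    small = 1
    return {"large": large, "medium": medium, "small": small}


levels = weight_levels(6)

# Map each table group to a level according to its known traffic.
tablegroup_weights = {
    "TG1": levels["large"],
    "TG2": levels["medium"],
    "TG3": levels["medium"],
    "TG4": levels["small"],
    "TG5": levels["small"],
    "TG6": levels["small"],
}
```

The resulting mapping matches the weights used in this scenario: 6 for TG1, 3 for TG2 and TG3, and 1 for the rest.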

Disk balancing

For SCOPE = 'ZONE' table groups, the system automatically attempts to exchange table groups between zones to balance the total disk usage across zones, while maintaining the quantity and weight balance of SCOPE = 'ZONE' table groups in each zone.

Table group exchange between zones is initiated only when the total disk usage difference between zones meets certain conditions. The conditions are as follows:

- The total disk usage difference of the table groups exceeds the total disk usage of the largest zone multiplied by zone_disk_balance_tolerance_percentage/100, with a default value of 10%. The tenant-level parameter zone_disk_balance_tolerance_percentage controls the tolerance level of the disk balancing algorithm for imbalance between zones. The value range is [0, 100]. When the value is 100, zone disk balancing is disabled.

- The total disk usage of the largest zone exceeds 50 GB * Number of Log Stream Groups.
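As a rough illustration, the two trigger conditions can be combined into a single check. The function name and input shape are hypothetical, and the sketch assumes that the "difference" is measured between the zones with the highest and the lowest total disk usage, which the text does not state explicitly.

```python
GB = 1024 ** 3


def should_trigger_zone_disk_balance(zone_usage_bytes, ls_group_count,
                                     tolerance_percentage=10):
    """Return True when both trigger conditions hold (hypothetical helper).

    zone_usage_bytes: dict mapping a zone to the total disk usage of its
        SCOPE = 'ZONE' table groups, in bytes.
    ls_group_count: number of log stream groups in the tenant.
    tolerance_percentage: value of zone_disk_balance_tolerance_percentage.
    """
    if tolerance_percentage >= 100:
        # A value of 100 disables zone disk balancing.
        return False
    largest = max(zone_usage_bytes.values())
    smallest = min(zone_usage_bytes.values())
    # Condition 1: the gap exceeds the tolerated share of the largest zone.
    exceeds_tolerance = (largest - smallest) > largest * tolerance_percentage / 100
    # Condition 2: the largest zone holds more than 50 GB per LS group.
    large_enough = largest > 50 * GB * ls_group_count
    return exceeds_tolerance and large_enough


usage = {"ZONE1": 120 * GB, "ZONE2": 100 * GB, "ZONE3": 300 * GB}
triggered = should_trigger_zone_disk_balance(usage, ls_group_count=2)
```

With the sample figures, the gap is 200 GB against a tolerance of 30 GB, and the largest zone exceeds 100 GB, so the check passes.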

For the table groups to be exchanged, the following conditions must also be met:

The weights of the table groups are the same.

The exchange reduces the disk usage difference between the two zones.
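The two exchange conditions can be expressed as a simple predicate. This is a minimal sketch: the TableGroup class, its fields, and the function name are hypothetical, and sizes are given in GB for readability.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TableGroup:
    name: str
    weight: int
    disk_gb: int


def exchange_is_allowed(tg_a, zone_a_usage_gb, tg_b, zone_b_usage_gb):
    """Check whether swapping tg_a (in zone A) with tg_b (in zone B)
    satisfies both exchange conditions (hypothetical helper)."""
    # Condition 1: only table groups with the same weight are exchanged,
    # so the existing quantity and weight balance is preserved.
    if tg_a.weight != tg_b.weight:
        return False
    # Condition 2: the swap must narrow the disk usage gap of the two zones.
    gap_before = abs(zone_a_usage_gb - zone_b_usage_gb)
    new_a = zone_a_usage_gb - tg_a.disk_gb + tg_b.disk_gb
    new_b = zone_b_usage_gb - tg_b.disk_gb + tg_a.disk_gb
    return abs(new_a - new_b) < gap_before


big = TableGroup("TG_big", weight=1, disk_gb=60)
small = TableGroup("TG_small", weight=1, disk_gb=20)
# Zone A uses 100 GB and holds TG_big; zone B uses 40 GB and holds TG_small.
allowed = exchange_is_allowed(big, 100, small, 40)
```

Here the swap moves the zones from 100 GB and 40 GB to 60 GB and 80 GB, so the gap shrinks from 60 GB to 20 GB and the exchange qualifies.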

Below is an example. Assume there are six SCOPE = 'ZONE' table groups without weights. After table group quantity balancing, the distribution is as follows. At this point, ZONE3 has the highest disk usage.

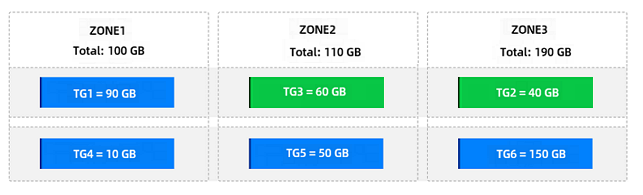

After triggering disk balancing, the system first exchanges the table groups TG3 and TG2 in ZONE3 and ZONE2, which have the highest and lowest disk usage, respectively. The distribution after the exchange is as follows.

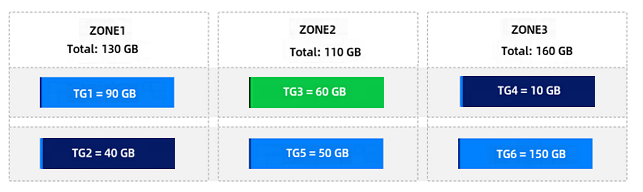

Then, the system exchanges the table groups TG2 and TG4 in ZONE1 and ZONE3. The distribution after the exchange is as follows.

At this point, no further exchanges can be made, and the table group disk balancing process ends.

Partition disk balancing

On the basis of partition quantity balancing or partition weight balancing, the load balancing module exchanges partitions between LSs as much as possible to ensure that the disk usage difference between each two LSs does not exceed the percentage specified by the cluster-level parameter balancer_tolerance_percentage. If a single partition occupies too much disk space, this balancing effect may not be achieved.

To avoid frequent balancing in scenarios with a small data volume, the threshold for triggering partition disk balancing is set to 50 GB in the current version. If the disk usage of a single LS is less than this threshold, disk balancing will not be triggered.

How to verify partition balancing

Partition balancing refers to the balancing for primary user tables. You need to specify table_type = 'USER TABLE' in the query. You can query the data_size column in the CDB_OB_TABLET_REPLICAS view to judge whether partition disk balancing is achieved.

Query statement for judging partition quantity balancing

obclient [test]> SELECT svr_ip,svr_port,ls_id,count(*) FROM oceanbase.CDB_OB_TABLE_LOCATIONS WHERE tenant_id=xxx AND role='leader' AND table_type='USER TABLE' GROUP BY svr_ip,svr_port,ls_id;

Query statement for judging partition disk balancing

obclient [test]> SELECT a.svr_ip,a.svr_port,b.ls_id,sum(a.data_size)/1024/1024/1024 as total_data_size FROM oceanbase.CDB_OB_TABLET_REPLICAS a, oceanbase.CDB_OB_TABLE_LOCATIONS b WHERE a.tenant_id=b.tenant_id AND a.svr_ip=b.svr_ip AND a.svr_port=b.svr_port AND a.tablet_id=b.tablet_id AND b.role='leader' AND b.table_type='USER TABLE' AND a.tenant_id=xxxx GROUP BY a.svr_ip,a.svr_port,b.ls_id;

Scenarios of partition aggregation followed by redistribution

Partition balancing is a continuous process for maintaining system load balance. In actual business operations, the system aggregates and redistributes partitions based on real-time load conditions to achieve optimal resource utilization and performance. Common scenarios involving partition aggregation followed by redistribution include:

- A tenant undergoes horizontal scaling down and then scaling up. For example, the number of units (UNIT_NUM) for a tenant changes from N to 1, and then from 1 back to N.

- The number of primary zones (PRIMARY_ZONE) for a tenant first decreases and then increases. For example, the value of PRIMARY_ZONE changes from RANDOM to zone1, and then from zone1 back to RANDOM.

- A large number of tables are added to a table group with the sharding attribute set to NONE, and then the tables are removed from the table group.

Based on the existing log stream balancing and partition balancing algorithms, after partition aggregation and redistribution, consecutive partitions within a partitioned table may be grouped on the same log stream, or non-partitioned tables within the same database may be concentrated on the same log stream. To address this issue, OceanBase Database has optimized the partition balancing algorithm:

For non-partitioned tables, after partition aggregation and redistribution, multiple non-partitioned tables within the same database are distributed across user log streams according to the number of partitions, preventing consecutive non-partitioned tables in the same database from being grouped on the same log stream.

For partitioned tables, after partition aggregation and redistribution, consecutive partitions within a partitioned table are distributed across user log streams in a round-robin manner according to the number of partitions, preventing consecutive partitions of a partitioned table from being grouped on the same log stream.
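The effect of the round-robin redistribution can be illustrated by comparing it with a contiguous split. This is a simplified sketch rather than the actual algorithm: the function names, partition labels, and log stream IDs are hypothetical, and the sketch ignores the partitions that each log stream already holds.

```python
def contiguous_split(partitions, log_streams):
    """Hand each log stream a contiguous run of partitions, so that
    consecutive partitions end up together (hypothetical helper)."""
    per_stream = -(-len(partitions) // len(log_streams))  # ceiling division
    return {
        ls: partitions[i * per_stream:(i + 1) * per_stream]
        for i, ls in enumerate(log_streams)
    }


def round_robin_split(partitions, log_streams):
    """Deal partitions out one at a time, so that consecutive partitions
    land on different log streams (hypothetical helper)."""
    placement = {ls: [] for ls in log_streams}
    for i, partition in enumerate(partitions):
        placement[log_streams[i % len(log_streams)]].append(partition)
    return placement


parts = ["p0", "p1", "p2", "p3", "p4", "p5"]
streams = [1001, 1002, 1003]
grouped = contiguous_split(parts, streams)
spread = round_robin_split(parts, streams)
```

Both splits give each log stream two partitions, but only the round-robin variant keeps neighbors such as p0 and p1 on different log streams.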