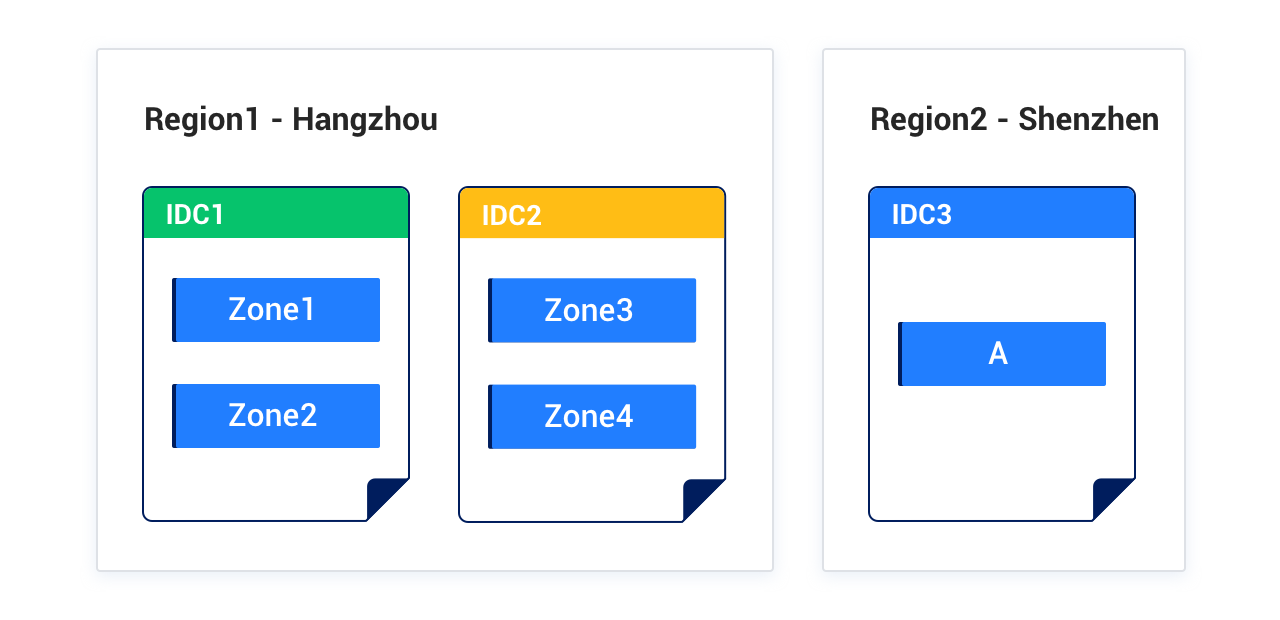

OceanBase Database uses a shared-nothing, multi-replica architecture that eliminates single points of failure and keeps the system continuously available. It supports high availability and disaster recovery at three levels: single IDC (the cluster is deployed in one IDC), multi-IDC (the cluster is deployed across IDCs in the same city), and city (the cluster is deployed across multiple cities). IDC refers to a data center throughout this document. You can deploy OceanBase Database in a single IDC, two IDCs, three IDCs across two regions, or five IDCs across three regions. You can also deploy the arbitration service to reduce costs.

Deployment solutions

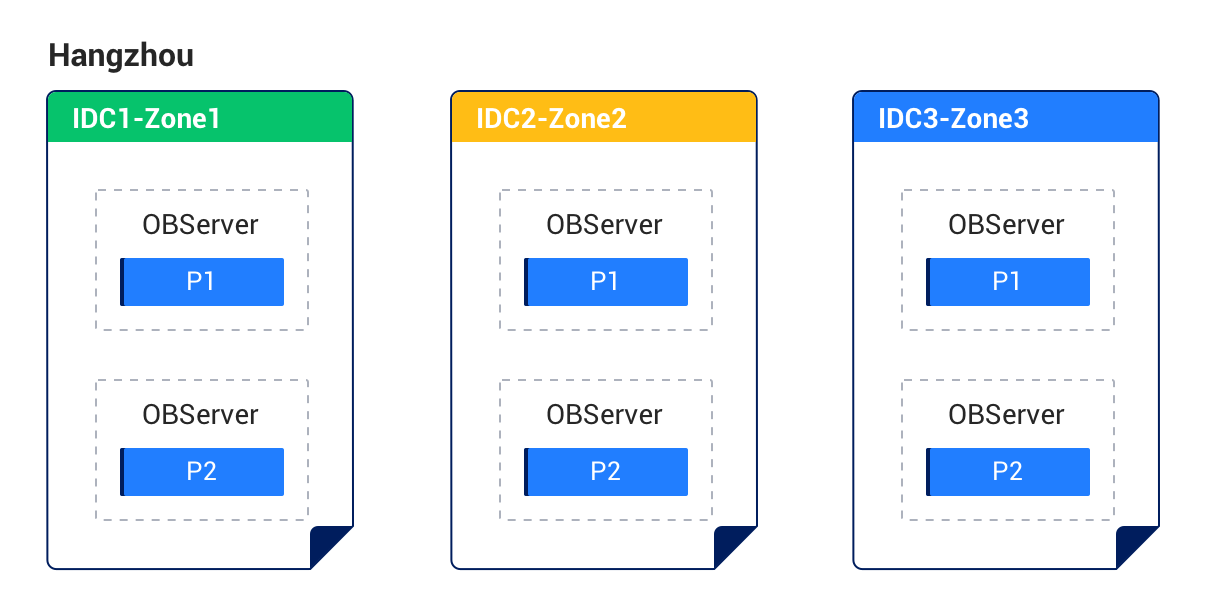

Solution 1: Three IDCs with three replicas in the same region

Characteristics:

- Three IDCs in the same region form a cluster (each IDC is a zone), with network latency between IDCs typically ranging from 0.5 ms to 2 ms.

- When an IDC-level disaster occurs, the remaining two replicas still form a majority and can continue to synchronize redo logs, ensuring RPO=0.

- This deployment solution cannot cope with city-level disasters.
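The majority rule behind these characteristics can be checked with simple arithmetic. The following is a minimal sketch, not OceanBase code; the function name `has_majority` is hypothetical and only models a strict-majority test for a replica group.

```python
def has_majority(total_replicas: int, surviving_replicas: int) -> bool:
    """Return True if the survivors form a strict majority of the replica group."""
    return surviving_replicas > total_replicas // 2


# Three replicas, one per IDC: losing one IDC leaves 2 of 3.
one_idc_lost = has_majority(3, 2)
# Losing two IDCs (for example, in a city-level disaster) leaves 1 of 3.
two_idcs_lost = has_majority(3, 1)

print(one_idc_lost, two_idcs_lost)
```

With three IDCs in one city, a single IDC failure keeps the majority, but a city-level disaster removes all three replicas at once, which is why this solution cannot cope with it.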

Deployment diagram:

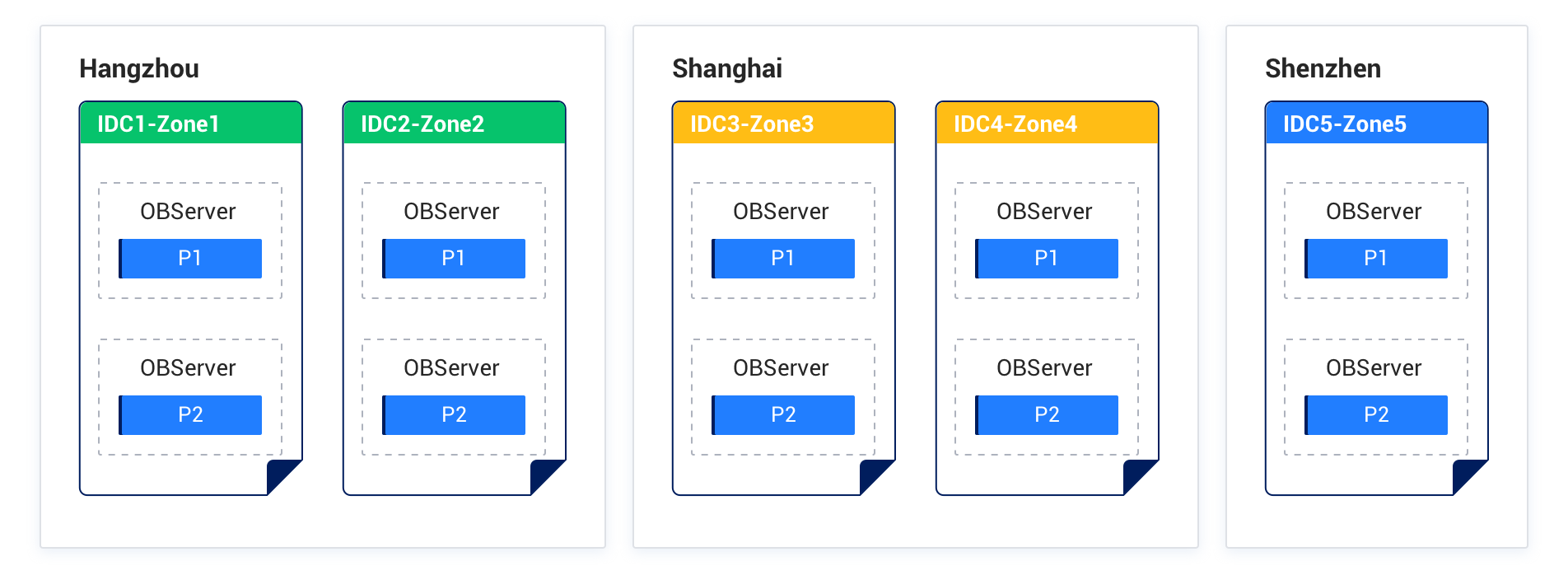

Solution 2: Five IDCs with five replicas across three regions

Characteristics:

- Three cities form a cluster with five replicas.

- When any IDC or city fails, the remaining replicas are still in the majority and can ensure RPO=0.

- A majority requires at least three replicas, but each city holds at most two, so every log commit must be acknowledged by replicas in more than one city. City 1 and city 2 should therefore be close to each other to reduce redo log synchronization latency.
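To see why limiting each city to two replicas matters, the sketch below enumerates single-city failures for two layouts. It is an illustrative model only: the function name `city_failure_outcomes` and the city labels are hypothetical, and the 2 + 2 + 1 split is inferred from "five replicas" and "at most two replicas per city".

```python
def city_failure_outcomes(layout: dict) -> dict:
    """Map each city to True if a majority survives the loss of that city."""
    total = sum(layout.values())
    needed = total // 2 + 1  # strict majority of all replicas
    return {city: (total - lost) >= needed for city, lost in layout.items()}


# Layout assumed for this solution: no city holds more than two replicas.
balanced = {"city1": 2, "city2": 2, "city3": 1}
# Counterexample: three replicas concentrated in one city.
skewed = {"city1": 3, "city2": 1, "city3": 1}

balanced_result = city_failure_outcomes(balanced)
skewed_result = city_failure_outcomes(skewed)
print(balanced_result)
print(skewed_result)
```

In the balanced layout every city can fail without losing the majority. In the skewed layout, losing city1 leaves only two of five replicas, so the majority is lost.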

Deployment diagram:

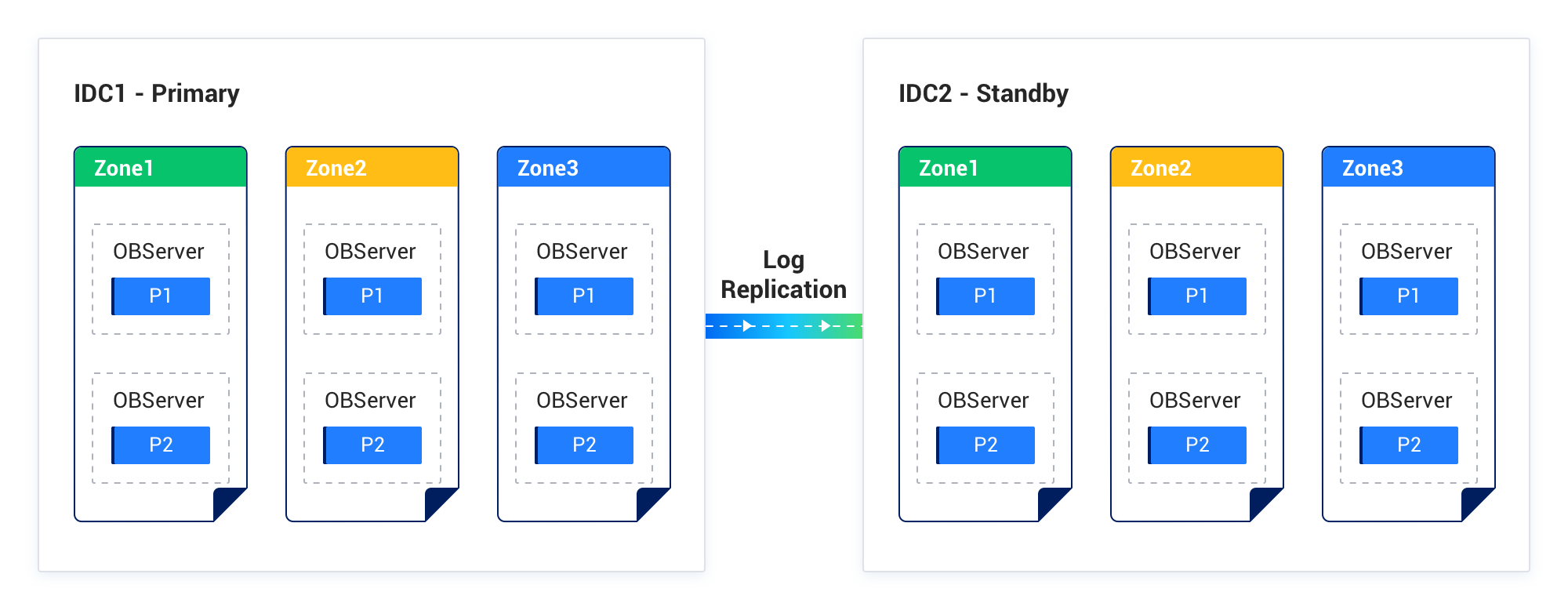

Solution 3: Primary-standby deployment of two IDCs in the same region

Characteristics:

- Each IDC is deployed with an OceanBase cluster, one as the primary and one as the standby. Each cluster has its own Paxos group for multi-replica consistency.

- Data is synchronized between the clusters through redo logs, similar to the "master-slave replication" mode of traditional databases. Synchronization from the primary to the standby is asynchronous, similar to the "Maximum Performance" mode of Oracle Data Guard.
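Because synchronization is asynchronous, the standby can trail the primary at the moment of a failure. The toy model below illustrates that lag; it is a sketch under stated assumptions, not a description of OceanBase internals. The `Cluster` class, the `last_log_pos` counter, and the helper functions are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Cluster:
    name: str
    last_log_pos: int = 0  # hypothetical counter: last redo log entry persisted


def commit_on_primary(primary: Cluster, entries: int) -> None:
    """The primary commits without waiting for the standby (asynchronous mode)."""
    primary.last_log_pos += entries


def ship_logs(primary: Cluster, standby: Cluster, max_entries: int) -> None:
    """Ship up to max_entries log entries, never past the primary's position."""
    standby.last_log_pos = min(primary.last_log_pos,
                               standby.last_log_pos + max_entries)


def unreplicated_entries(primary: Cluster, standby: Cluster) -> int:
    """Entries that would be missing on the standby if the primary failed now."""
    return primary.last_log_pos - standby.last_log_pos


primary = Cluster("primary")
standby = Cluster("standby")
commit_on_primary(primary, 100)
ship_logs(primary, standby, 80)
lag = unreplicated_entries(primary, standby)
print(lag)
```

A nonzero lag at failover time corresponds to entries that exist only on the primary, which is the trade-off accepted in exchange for not adding replication latency to commits.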

Deployment diagram:

Solution 4: Primary-standby deployment of three IDCs across two regions

Characteristics:

- The primary city and the standby city form a cluster with five replicas. When any IDC in the primary city fails, at most two replicas are lost, and the remaining three replicas are still in the majority.

- A separate three-replica cluster is deployed in the standby city as the standby database, with data asynchronously synchronized from the primary.

- When the primary city encounters a disaster, the standby city can take over the service.

Deployment diagram:

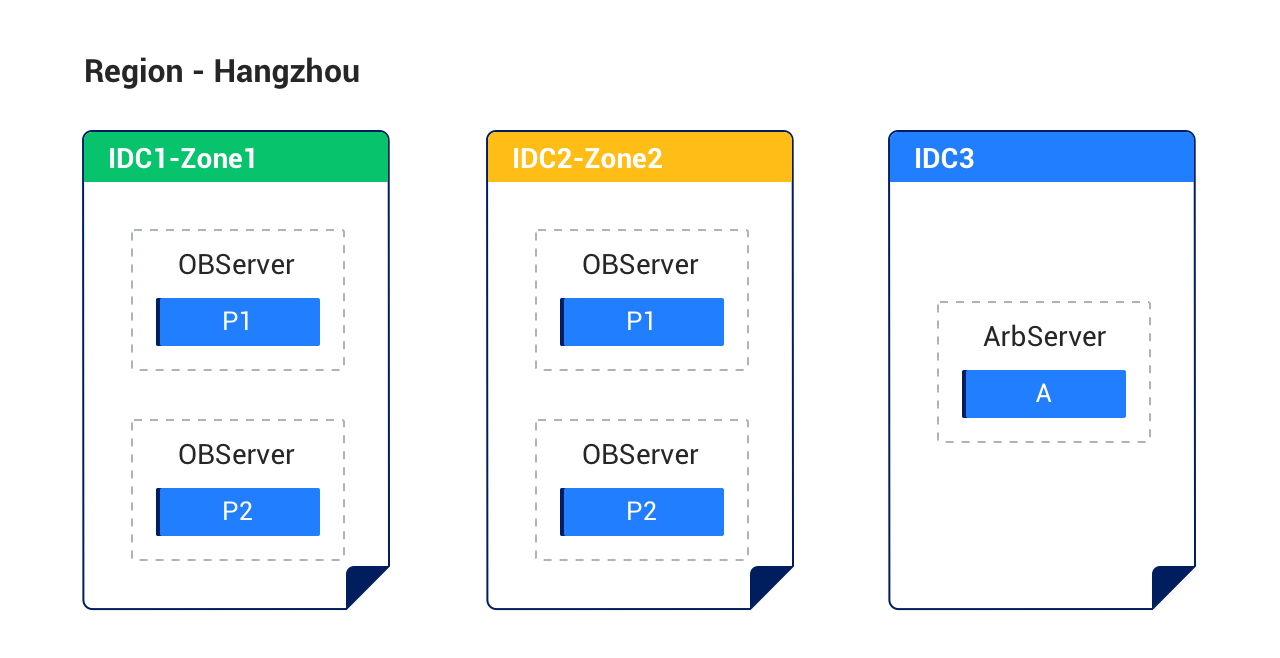

Solution 5: Three IDCs in the same region with the arbitration service

Characteristics:

- Three IDCs in the same region form a cluster, with network latency between IDCs typically ranging from 0.5 ms to 2 ms. Two IDCs hold full-featured replicas, each as a zone. To reduce costs, the third IDC is deployed with the arbitration service (no log synchronization required).

- When an IDC-level disaster occurs, the remaining two IDCs can still elect a leader. If the failed IDC is one that holds a full-featured replica, arbitration downgrade is performed to ensure RPO=0.

- This deployment solution cannot cope with city-level disasters.

For detailed information about the arbitration service, see Overview of the arbitration service.

Deployment diagram:

Solution 6: Five IDCs across three regions with the arbitration service

Characteristics:

- Three cities with five IDCs: city 1 and city 2 are close to each other and deploy full-featured replicas; city 3 deploys the arbitration service to reduce costs (no log synchronization required).

- When any IDC fails, the remaining full-featured replicas (3/4) are still in the majority and can ensure RPO=0.

- When any two IDCs fail or a city-level failure occurs, and the failed IDCs are those with full-featured replicas, the remaining two full-featured replicas (2/4) no longer form a majority. Service can be restored through arbitration downgrade (the two failed replicas are downgraded to learners), ensuring RPO=0.

- At least three replicas are required to form a majority, but each city has at most two replicas. City 1 and city 2 should therefore be close to each other to reduce redo log synchronization latency.
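The 3/4 and 2/4 cases above can be expressed as a small decision function. This is a simplified sketch, not the actual arbitration protocol: the function `evaluate` is hypothetical, and it assumes that arbitration downgrade applies only when exactly half of the full-featured replicas survive and the arbitration service is reachable.

```python
def majority(n: int) -> int:
    """Smallest number of replicas that forms a strict majority of n."""
    return n // 2 + 1


def evaluate(full_replicas: int, failed: int, arbitration_up: bool = True) -> str:
    """Classify the cluster state after `failed` full-featured replicas are lost."""
    alive = full_replicas - failed
    if alive >= majority(full_replicas):
        return "majority intact"
    # Assumption: downgrade is possible only when exactly half survive
    # and the arbitration service can take part in the decision.
    if arbitration_up and alive * 2 == full_replicas:
        return "arbitration downgrade"
    return "unavailable"


print(evaluate(4, 1))                        # one IDC lost: 3/4 remain
print(evaluate(4, 2))                        # two IDCs lost: 2/4 remain
print(evaluate(4, 2, arbitration_up=False))  # 2/4 remain, arbitration unreachable
```

After a downgrade, the failed replicas no longer count toward the member list, so the two surviving replicas form a majority of the reduced group.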

Deployment diagram:

Solution 7: Three IDCs across two regions with the arbitration service

Characteristics:

- The primary city has two IDCs, each containing two zones for deploying full-featured replicas.

- The standby city has one IDC, where the arbitration service is deployed to reduce deployment costs and cross-city bandwidth overhead.

- When any IDC in the primary city fails, at most two replicas are lost. The remaining replicas (2/4) do not form a majority on their own, but the arbitration service can trigger a downgrade to restore service and ensure RPO=0.

- This deployment solution cannot cope with disasters in the primary city. A disaster in the standby city has no impact.
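The failure cases listed above can be enumerated per failure domain. The sketch below is illustrative only: the IDC names, the `layout` table, and the `outcome` function are hypothetical, and the downgrade rule (exactly half of the full-featured replicas survive and the arbitration service is reachable) is a simplifying assumption.

```python
# Hypothetical failure domains: two primary-city IDCs with two zones each,
# and one standby-city IDC that hosts only the arbitration service.
layout = {
    "primary-idc1": {"full": 2, "arbitration": False},
    "primary-idc2": {"full": 2, "arbitration": False},
    "standby-idc3": {"full": 0, "arbitration": True},
}


def outcome(failed_idcs: set) -> str:
    """Classify the cluster state after the given IDCs fail."""
    total = sum(d["full"] for d in layout.values())
    up = {name: d for name, d in layout.items() if name not in failed_idcs}
    alive = sum(d["full"] for d in up.values())
    arbitration_up = any(d["arbitration"] for d in up.values())
    if alive > total // 2:
        return "no impact"
    if alive * 2 == total and arbitration_up:
        return "arbitration downgrade"
    return "unavailable"


print(outcome({"primary-idc1"}))                  # one primary-city IDC lost
print(outcome({"standby-idc3"}))                  # standby city lost
print(outcome({"primary-idc1", "primary-idc2"}))  # primary city lost
```

The last case shows why this solution cannot cope with a disaster in the primary city: no full-featured replica remains there, and the standby city holds no data.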

Deployment diagram: