This topic introduces vector embedding technology for vector search.

What is vector embedding?

Vector embedding is a technique for converting unstructured data into numerical vectors. These vectors can capture the semantic information of unstructured data, enabling computers to "understand" and process the meaning of such data. Specifically:

- Vector embedding maps unstructured data such as text, images, audio, or video to points in a high-dimensional vector space.

- In this vector space, semantically similar data is mapped to nearby locations.

- Vectors typically consist of hundreds to thousands of numbers (for example, 512 or 1,024 dimensions).

- Mathematical measures such as cosine similarity can be used to calculate how similar two vectors are.
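As a rough illustration of the cosine similarity mentioned above, the following sketch computes it in plain Python. The three-dimensional vectors are made up for readability (real embeddings have hundreds of dimensions), and `cosine_similarity` is a hypothetical helper name, not part of any library.

```python
import math

def cosine_similarity(a, b):
    """Return the cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)

# Toy vectors, invented for illustration only.
happy_person = [0.9, 0.1, 0.0]
happy_dog = [0.8, 0.3, 0.1]
sunny_day = [0.0, 0.2, 0.9]

# Vectors pointing in similar directions score closer to 1.
print(cosine_similarity(happy_person, happy_dog))
print(cosine_similarity(happy_person, sunny_day))
```

A score near 1 means the two vectors point in almost the same direction, and a score near 0 means they are close to orthogonal.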

Common embedding models include Word2Vec, BERT, and BGE. In a typical RAG application, text is embedded into vectors and stored in a vector database, while structured data is stored separately in a relational database.

Starting from OceanBase Database V4.3.3, vector data can be stored as a data type in relational tables, so vectors and traditional scalar data can be stored together in OceanBase.
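The sketch below shows one way an embedding might be prepared for storage next to scalar columns. It assumes a `VECTOR(n)` column type and a bracketed text literal such as `[0.1,0.2,0.3]` for vector values; verify both against the OceanBase documentation for your version. The table name `documents`, its columns, and the helper `to_vector_literal` are hypothetical, and the SQL strings are only printed, not executed.

```python
def to_vector_literal(embedding):
    """Format a sequence of numbers as a bracketed text literal, e.g. '[0.1,0.2,0.3]'."""
    return "[" + ",".join(repr(float(x)) for x in embedding) + "]"

# Hypothetical schema: scalar columns and a 3-dimensional vector column in one table.
# The VECTOR(3) syntax is an assumption; these statements are not run here.
CREATE_SQL = (
    "CREATE TABLE documents ("
    "id INT PRIMARY KEY, content VARCHAR(1024), embedding VECTOR(3))"
)
INSERT_SQL = "INSERT INTO documents (id, content, embedding) VALUES (%s, %s, %s)"

# Parameters for a parameterized insert; the %s placeholder style is the one
# commonly used by MySQL-compatible Python drivers (an assumption).
params = (1, "That is a happy person", to_vector_literal([0.1, 0.2, 0.3]))

print(CREATE_SQL)
print(INSERT_SQL, params)
```

Passing the vector as a parameter rather than concatenating it into the statement keeps the insert consistent with how the scalar values are handled.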

Generate vector embeddings in OceanBase with the AI function service

Starting from OceanBase V4.4.1, you can generate vector embeddings using the AI function service. No extra dependencies are required: register your model and you can generate embeddings inside the database. For details, see AI function syntax and examples.

Common text embedding methods

This section describes text embedding methods.

Preparations

Install the pip command if it is not already available.

Use offline, local pre-trained embedding models

Using pre-trained models locally is flexible but needs more compute. Common options include:

Sentence Transformers

Sentence Transformers turns sentences or paragraphs into vector embeddings. It uses deep learning (including the Transformer architecture) to capture text semantics. If access to Hugging Face is slow from your region, set the mirror first: export HF_ENDPOINT=https://hf-mirror.com, then run the code below.

from sentence_transformers import SentenceTransformer

model = SentenceTransformer("BAAI/bge-m3")

sentences = [
    "That is a happy person",
    "That is a happy dog",
    "That is a very happy person",
    "Today is a sunny day"
]

embeddings = model.encode(sentences)
print(embeddings)
# [[-0.01178016 0.00884024 -0.05844684 ... 0.00750248 -0.04790139
#   0.00330675]
#  [-0.03470375 -0.00886354 -0.05242309 ... 0.00899352 -0.02396279
#   0.02985837]
#  [-0.01356584 0.01900942 -0.05800966 ... 0.00523864 -0.05689549
#   0.00077098]
#  [-0.02149693 0.02998871 -0.05638731 ... 0.01443702 -0.02131325
#   -0.00112451]]

similarities = model.similarity(embeddings, embeddings)
print(similarities.shape)
# torch.Size([4, 4])
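The 4 x 4 similarity matrix above holds one score per sentence pair. As a sketch of how such a matrix might be read, the plain-Python helper below finds, for each sentence, its most similar other sentence. The scores are made up for illustration and are not output from the model, and `nearest_neighbors` is a hypothetical helper name.

```python
from typing import Dict, List

def nearest_neighbors(similarity: List[List[float]], labels: List[str]) -> Dict[str, str]:
    """Map each label to the label of its most similar *other* item."""
    neighbors = {}
    for i, row in enumerate(similarity):
        # Skip the diagonal: every item is trivially most similar to itself.
        candidates = [j for j in range(len(row)) if j != i]
        best = max(candidates, key=lambda j: row[j])
        neighbors[labels[i]] = labels[best]
    return neighbors

sentences = [
    "That is a happy person",
    "That is a happy dog",
    "That is a very happy person",
    "Today is a sunny day",
]

# Illustrative, invented scores (symmetric, with 1.0 on the diagonal).
toy_similarity = [
    [1.00, 0.70, 0.95, 0.35],
    [0.70, 1.00, 0.68, 0.33],
    [0.95, 0.68, 1.00, 0.36],
    [0.35, 0.33, 0.36, 1.00],
]

for sentence, neighbor in nearest_neighbors(toy_similarity, sentences).items():
    print(f"{sentence!r} -> {neighbor!r}")
```

With a real model, the tensor returned by `model.similarity` can be converted with `similarities.tolist()` before being passed to the helper.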

Hugging Face Transformers

Hugging Face Transformers provides many pre-trained models for NLP and other tasks. If access to Hugging Face is slow from your region, set the mirror first: export HF_ENDPOINT=https://hf-mirror.com, then run the code below.

from transformers import AutoTokenizer, AutoModel
import torch

# Load the model and tokenizer
tokenizer = AutoTokenizer.from_pretrained("BAAI/bge-m3")
model = AutoModel.from_pretrained("BAAI/bge-m3")

# Prepare the input
texts = ["This is an example text."]
inputs = tokenizer(texts, padding=True, truncation=True, return_tensors="pt")

# Generate embeddings
with torch.no_grad():
    outputs = model(**inputs)
    embeddings = outputs.last_hidden_state[:, 0]  # Use the [CLS] token's output

print(embeddings)
# tensor([[-1.4136, 0.7477, -0.9914, ..., 0.0937, -0.0362, -0.1650]])

print(embeddings.shape)
# torch.Size([1, 1024])
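The [CLS] embedding printed above is not unit length (one component alone is -1.4136). A common post-processing step, shown here as a sketch and not as part of the original example, is L2 normalization: after it, the dot product of two vectors equals their cosine similarity. `l2_normalize` is a hypothetical helper written in plain Python so that it runs without a model.

```python
import math
from typing import List

def l2_normalize(vector: List[float]) -> List[float]:
    """Scale a vector to unit length; a zero vector is returned unchanged."""
    norm = math.sqrt(sum(x * x for x in vector))
    if norm == 0:
        return list(vector)
    return [x / norm for x in vector]

# Toy 2-dimensional vectors, invented for illustration.
a = l2_normalize([3.0, 4.0])
b = l2_normalize([4.0, 3.0])

# For unit vectors, the dot product is the cosine similarity.
dot = sum(x * y for x, y in zip(a, b))
print(a, b, dot)
```

When working with tensors, `torch.nn.functional.normalize(embeddings, p=2, dim=1)` performs the same operation on every row.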

Ollama

Ollama is an open-source runtime for running, managing, and using large language models locally. It supports both LLMs (e.g. Llama 3, Mistral) and embedding models such as bge-m3.

Deploy Ollama.

On macOS and Windows, download and install from the Ollama website. After installation, Ollama runs as a background service.

On Linux:

curl -fsSL https://ollama.ai/install.sh | sh

Pull an embedding model.

Ollama supports the bge-m3 model for text embedding:

ollama pull bge-m3

Use Ollama for text embedding.

You can call Ollama's embedding API over HTTP or via the Python SDK:

HTTP API

import requests

def get_embedding(text: str) -> list:
    """Use the HTTP API of Ollama to obtain text embeddings."""
    response = requests.post(
        'http://localhost:11434/api/embeddings',
        json={
            'model': 'bge-m3',
            'prompt': text
        }
    )
    return response.json()['embedding']

# Example usage
text = "This is an example text."
embedding = get_embedding(text)
print(embedding)
# [-1.4269912242889404, 0.9092104434967041, ...]

Python SDK

First, install the Python SDK for Ollama:

pip install ollama

Then, you can use it like this:

import ollama

# Example usage
texts = ["First sentence", "Second sentence"]
embeddings = ollama.embed(model="bge-m3", input=texts)['embeddings']
print(embeddings)
# [[0.03486196, 0.0625187, ...], [...]]

Advantages and limitations:

Advantages:

- Fully local deployment without the need for internet connectivity

- Open-source and free, without the need for an API key

- Supports multiple models, making it easy to switch and compare

- Relatively low resource usage

Limitations:

- Limited selection of embedding models

- Performance may not match commercial services

- Requires self-maintenance and updates

- Lacks enterprise-level support

Consider these trade-offs when choosing Ollama. It is a good fit when you need strong privacy or fully offline operation. For higher stability and performance, a commercial embedding service may be better.

Use online, remote embedding services

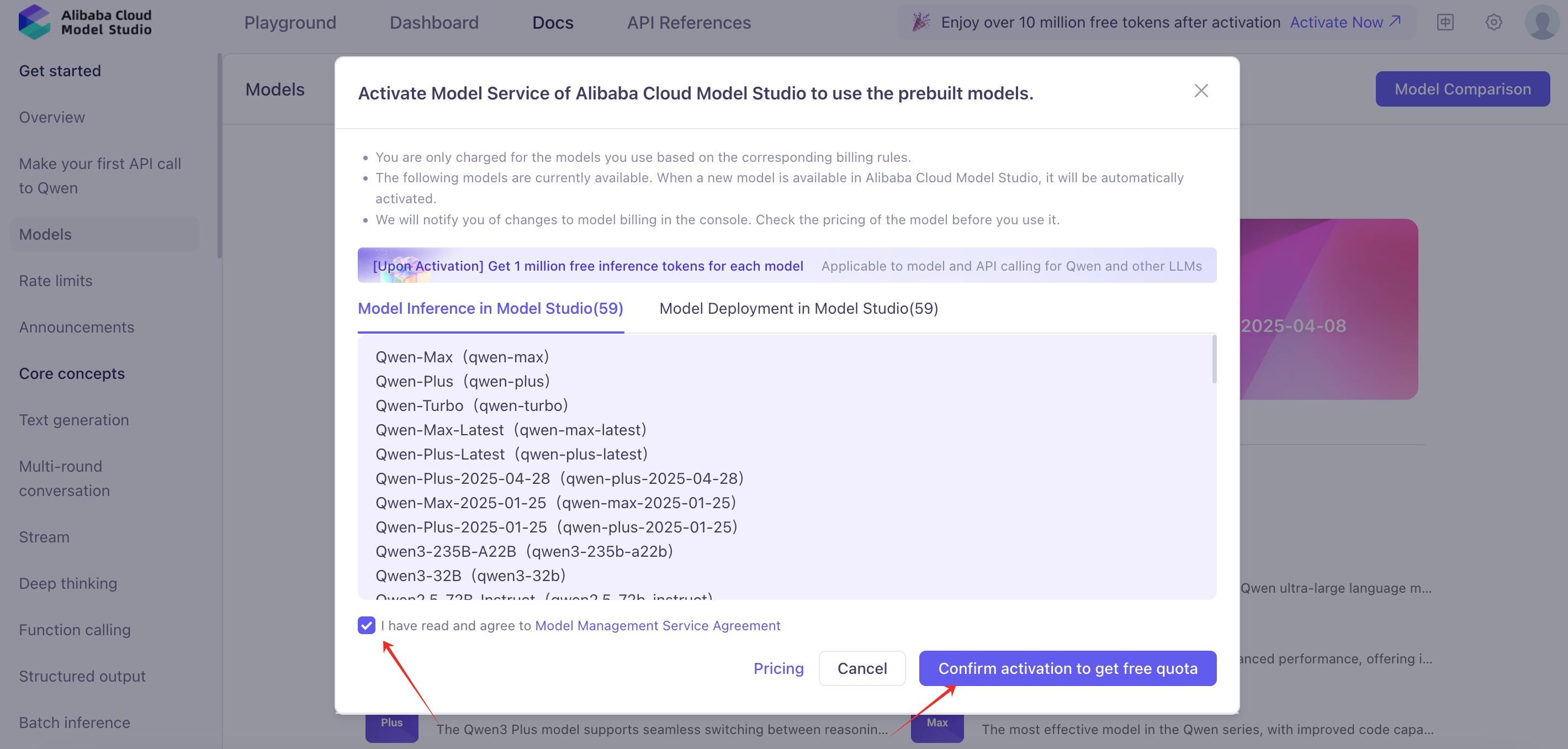

Local embedding models often need powerful hardware and careful management of loading and unloading. Many users prefer an online embedding service. Several AI providers offer text embedding APIs. For example, with Alibaba Cloud Model Studio (Bailian), you register, obtain an API key, and call the public API to get embeddings.

HTTP API

After you have an API key, you can run the code below to get text embeddings. Install the requests package first if needed: pip install requests.

import requests
from typing import List

class RemoteEmbedding():
    """
    OpenAI compatible embedding API. Tongyi, Baichuan, Doubao, etc.
    """

    def __init__(
        self,
        base_url: str,
        api_key: str,
        model: str,
        dimensions: int = 1024,
        **kwargs,
    ):
        self._base_url = base_url
        self._api_key = api_key
        self._model = model
        self._dimensions = dimensions

    def embed_documents(
        self,
        texts: List[str],
    ) -> List[List[float]]:
        """Embed search docs.

        Args:
            texts: List of text to embed.

        Returns:
            List of embeddings.
        """
        res = requests.post(
            f"{self._base_url}",
            headers={"Authorization": f"Bearer {self._api_key}"},
            json={
                "input": texts,
                "model": self._model,
                "encoding_format": "float",
                "dimensions": self._dimensions,
            },
        )
        data = res.json()
        embeddings = []
        try:
            for d in data["data"]:
                embeddings.append(d["embedding"][: self._dimensions])
            return embeddings
        except Exception as e:
            print(data)
            print("Error", e)
            raise e

    def embed_query(self, text: str, **kwargs) -> List[float]:
        """Embed query text.

        Args:
            text: Text to embed.

        Returns:
            Embedding.
        """
        return self.embed_documents([text])[0]

embedding = RemoteEmbedding(
    base_url="https://dashscope.aliyuncs.com/compatible-mode/v1/embeddings",  # See https://bailian.console.aliyun.com for API details
    api_key="your-api-key",  # Replace with your API key
    model="text-embedding-v3",
)

print("Embedding result:", embedding.embed_query("The weather is nice today"), "\n")
# Embedding result: [-0.03573227673768997, 0.0645645260810852, ...]

print("Embedding results:", embedding.embed_documents(["The weather is nice today", "What about tomorrow?"]), "\n")
# Embedding results: [[-0.03573227673768997, 0.0645645260810852, ...], [-0.05443647876381874, 0.07368793338537216, ...]]
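Hosted embedding APIs commonly limit how many texts a single request may contain. The exact limit depends on the provider and model, so the batch size of 10 below is an assumption to check against your provider's documentation. The sketch splits a long list into batches and concatenates the results; `batched`, `embed_in_batches`, and the stand-in `fake_embed` are hypothetical names, and no network call is made.

```python
from typing import Callable, Iterator, List

def batched(texts: List[str], batch_size: int) -> Iterator[List[str]]:
    """Yield consecutive slices of texts, each with at most batch_size items."""
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    for start in range(0, len(texts), batch_size):
        yield texts[start:start + batch_size]

def embed_in_batches(
    texts: List[str],
    embed_fn: Callable[[List[str]], List[List[float]]],
    batch_size: int = 10,  # Assumed limit; check the provider's documentation.
) -> List[List[float]]:
    """Call embed_fn once per batch and return all embeddings in input order."""
    embeddings: List[List[float]] = []
    for batch in batched(texts, batch_size):
        embeddings.extend(embed_fn(batch))
    return embeddings

def fake_embed(batch: List[str]) -> List[List[float]]:
    """Stand-in embedder so the sketch runs offline: one number per text."""
    return [[float(len(text))] for text in batch]

print(embed_in_batches(["a", "bb", "ccc"], fake_embed, batch_size=2))
```

With the class above, the same helper could be called as `embed_in_batches(texts, embedding.embed_documents)`.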

Qwen (Dashscope) SDK

Qwen provides the Dashscope SDK to call its models. Install it with pip install dashscope, then you can get text embeddings as follows:

import dashscope
from dashscope import TextEmbedding

# Set the API key.
dashscope.api_key = "your-api-key"

# Prepare the input text.
texts = ["This is the first sentence", "This is the second sentence"]

# Call the embedding service.
response = TextEmbedding.call(
    model="text-embedding-v3",
    input=texts
)

# Retrieve the embeddings.
if response.status_code == 200:
    print(response.output['embeddings'])
    # [{"embedding": [-0.03193652629852295, 0.08152323216199875, ...]}, {"embedding": [...]}]

Common image embedding methods

This section describes image embedding methods.

Use offline, local pre-trained embedding models

CLIP

CLIP (Contrastive Language-Image Pre-training) is a model from OpenAI that learns from images and text together. It captures the relationship between images and text and is used for image classification, image search, and text-to-image tasks.

from PIL import Image
from transformers import CLIPProcessor, CLIPModel

model = CLIPModel.from_pretrained("openai/clip-vit-base-patch32")
processor = CLIPProcessor.from_pretrained("openai/clip-vit-base-patch32")

# Prepare the input image and texts
image = Image.open("path_to_your_image.jpg")
texts = ["This is the first sentence", "This is the second sentence"]

# Run the model locally on the image and the texts
inputs = processor(text=texts, images=image, return_tensors="pt", padding=True)
outputs = model(**inputs)

# Obtain the embedding results: one vector per image and one per text,
# all in the same embedding space
print(outputs.image_embeds.shape)
# torch.Size([1, 512])

print(outputs.text_embeds.shape)
# torch.Size([2, 512])