Dynamic AI-to-Database Queries with Claude, MCP, and OceanBase

- By connecting Claude to OceanBase via an MCP server, you get natural-language SQL generation, schema-aware query planning, and real-time data access out of the box.

- The integration eliminates hundreds of lines of brittle prompt engineering and manual schema injection, because the LLM discovers your database context dynamically.

- The same pattern scales: from a single developer prototyping on a laptop to a production multi-tenant AI application backed by OceanBase's distributed engine.

- The database has always been the hardest part of building an AI application, not because the technology is difficult, but because the gap between "what the AI knows" and "what the database contains" has historically required enormous human engineering effort to bridge.

Every AI-powered data application eventually hits the same wall: you need your LLM to understand your schema, respect your data types, generate correct SQL, and return useful results, all without hallucinating table names or fabricating rows that don't exist.

The conventional solution involves carefully crafted system prompts, hardcoded schema descriptions, and fragile few-shot examples that break every time your schema evolves. It works until it doesn't.

This is the problem that we set out to solve. By integrating Claude with OceanBase through the Model Context Protocol (MCP), we created a bridge that lets the AI dynamically understand the database, query it intelligently, and reason over the results, all without bespoke prompt engineering for every schema change.

What Is the Model Context Protocol (MCP)?

Before diving into the integration, it helps to understand why MCP exists.

Large language models are powerful reasoners, but they are inherently stateless and context-limited. They know nothing about your database, your APIs, or your live data unless you tell them, and telling them the right things, at the right time, in the right format, is a significant engineering challenge.

MCP is Anthropic's answer to this challenge. It is an open, JSON-RPC-based standard that defines how an AI model can discover and interact with external tools and data sources at runtime. Think of it as a universal adapter between an LLM and the outside world.

MCP in one sentence

MCP gives an AI model a structured, dynamic way to discover what tools and data exist, ask for what it needs, and act on the results without the developer having to hardcode every interaction.

An MCP setup has three components:

- The MCP Host — the AI environment (in our case, Claude via Claude Desktop or the Claude API).

- The MCP Server — a lightweight process that exposes tools, resources, and prompts to the host.

- The Backend — the actual system the server wraps (in our case, OceanBase).
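To make the host-to-server exchange concrete, here is a minimal sketch of the two JSON-RPC requests a host sends: one to discover tools (`tools/list`) and one to invoke a tool (`tools/call`). The helper `jsonrpc_request` is a hypothetical name introduced for illustration, and the `describe_table` tool with its `table_name` argument is taken from the tool list later in this post. A real host also performs an initialization handshake first, which is omitted here.

```python
import itertools
import json

# Monotonic request ids; JSON-RPC uses the id to match responses to requests.
_ids = itertools.count(1)


def jsonrpc_request(method, params=None):
    """Build a JSON-RPC 2.0 request as a plain dict (hypothetical helper)."""
    message = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


# 1. Discovery: ask the server which tools it exposes.
list_req = jsonrpc_request("tools/list")

# 2. Invocation: call one tool by name with structured arguments.
call_req = jsonrpc_request(
    "tools/call",
    {"name": "describe_table", "arguments": {"table_name": "accounts"}},
)

print(json.dumps(list_req))
print(json.dumps(call_req, indent=2))
```

The point of the sketch is that the host never needs database-specific code: it only needs to know how to ask what tools exist and how to call one by name.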

Why OceanBase + Claude Is a Natural Fit

OceanBase serves as a powerful foundation beneath MCP, making AI-to-database interaction not just possible but production-ready at scale.

As a distributed, MySQL-compatible relational database, OceanBase is built for mission-critical workloads. It natively supports HTAP (Hybrid Transactional and Analytical Processing), scales seamlessly to petabyte-level data, and maintains strong ACID guarantees across distributed nodes.

On the AI side, Claude is inherently tool-aware—it can request data, interpret structured responses, and chain multiple queries into a coherent reasoning flow. When connected through MCP to OceanBase, this creates a highly efficient and intelligent data interaction layer:

- Claude can dynamically explore database schemas without manual setup

- It generates MySQL-compatible SQL, executed directly by OceanBase—no translation needed

- Query results return as structured context, enabling deeper multi-step reasoning

- The integration is schema-agnostic, working across any OceanBase dataset without code changes

What makes this combination particularly powerful is OceanBase’s underlying architecture:

- MySQL Compatibility ensures seamless SQL generation from Claude

- Native HTAP allows real-time transactional data and large-scale analytics in a single query

- Distributed Scalability enables the same MCP integration to scale from local testing to enterprise workloads

- Multi-Tenancy provides strong data isolation, ideal for AI SaaS environments

In short: MCP provides the bridge, Claude provides the intelligence, and OceanBase provides the foundation that makes the entire system reliable, scalable, and production-ready.

Architecture: How the Integration Works

The architecture of this integration is elegantly simple, even though what it enables is sophisticated. Here is the data flow at a high level:

    User (natural language question)
        │
        ▼
    Claude (LLM / MCP Host)
        │ discovers tools via MCP
        ▼
    OceanBase MCP Server
        ├── list_tables() → returns schema metadata
        ├── describe_table() → returns column definitions
        ├── execute_query() → runs SQL, returns results
        └── explain_query() → returns execution plan
        │
        ▼
    OceanBase Database
    (distributed, ACID, MySQL-compatible)

The MCP Server

The MCP server is a small Node.js (or Python) process that you run alongside your application. It connects to OceanBase using the standard MySQL protocol and exposes a set of tools that Claude can call:

- list_tables — returns all tables in the target schema with basic metadata.

- describe_table(table_name) — returns full column definitions, types, and indexes.

- execute_query(sql) — executes a read query and returns results as JSON.

- get_sample_rows(table_name, limit) — returns sample data for context grounding.
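As an illustration of how these tools could be advertised to the host, the sketch below defines one descriptor per tool. The field names `name`, `description`, and `inputSchema` follow the MCP tool definition format. The descriptions, the JSON Schemas, and the choice to make `limit` optional are assumptions, not the actual definitions in the OceanBase MCP server. The helper `missing_arguments` is hypothetical.

```python
# Hypothetical descriptors for the four tools listed above.
TOOLS = [
    {
        "name": "list_tables",
        "description": "Return all tables in the target schema with basic metadata.",
        "inputSchema": {"type": "object", "properties": {}},
    },
    {
        "name": "describe_table",
        "description": "Return column definitions, types, and indexes for one table.",
        "inputSchema": {
            "type": "object",
            "properties": {"table_name": {"type": "string"}},
            "required": ["table_name"],
        },
    },
    {
        "name": "execute_query",
        "description": "Execute a read query and return the rows as JSON.",
        "inputSchema": {
            "type": "object",
            "properties": {"sql": {"type": "string"}},
            "required": ["sql"],
        },
    },
    {
        "name": "get_sample_rows",
        "description": "Return a few sample rows from one table.",
        "inputSchema": {
            "type": "object",
            "properties": {
                "table_name": {"type": "string"},
                "limit": {"type": "integer"},  # assumed optional
            },
            "required": ["table_name"],
        },
    },
]


def missing_arguments(tool_name, arguments):
    """Return the required argument names absent from a tool call."""
    tool = next(t for t in TOOLS if t["name"] == tool_name)
    required = tool["inputSchema"].get("required", [])
    return [name for name in required if name not in arguments]


print([t["name"] for t in TOOLS])
print(missing_arguments("describe_table", {}))
```

Because each descriptor carries its own schema, the model learns the expected arguments from the server at runtime rather than from a hand-written prompt.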

When Claude receives a user question, it first calls list_tables and describe_table to ground itself in the actual schema. Only then does it generate SQL, so the query references real tables and columns rather than hallucinated ones.
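The schema-first order described above can be sketched with a stand-in backend. Everything here is hypothetical: `FAKE_SCHEMA` is an in-memory substitute for OceanBase, the table and column names are invented, and `call_tool` only mimics the two discovery tools. The sketch shows the call order and the kind of schema context that becomes available before any SQL is written.

```python
# Hypothetical in-memory stand-in for an OceanBase schema (invented columns).
FAKE_SCHEMA = {
    "customers": {"customer_id": "BIGINT", "full_name": "VARCHAR(100)"},
    "transactions": {
        "txn_id": "BIGINT",
        "customer_id": "BIGINT",
        "amount": "DECIMAL(18,2)",
    },
}

trace = []  # records the order in which tools are called


def call_tool(name, **arguments):
    """Mimic an MCP tool call against the stand-in schema."""
    trace.append(name)
    if name == "list_tables":
        return sorted(FAKE_SCHEMA)
    if name == "describe_table":
        return FAKE_SCHEMA[arguments["table_name"]]
    raise ValueError(f"unknown tool: {name}")


def build_schema_context():
    """Discover tables first, then describe each one, and render the result."""
    lines = []
    for table in call_tool("list_tables"):
        columns = call_tool("describe_table", table_name=table)
        rendered = ", ".join(f"{col} {typ}" for col, typ in columns.items())
        lines.append(f"{table}({rendered})")
    return "\n".join(lines)


context = build_schema_context()
print(context)
print(trace)
```

Only after this context exists would a query be generated, which is why the generated SQL can be checked against names that actually exist.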

Setting Up the Integration: Step by Step

Step 1 — Prerequisites

You will need:

- A running OceanBase instance (local Docker or cloud).

- Node.js 18+ or Python 3.11+ for the MCP server.

- Claude Desktop or access to the Claude API with tool use enabled.

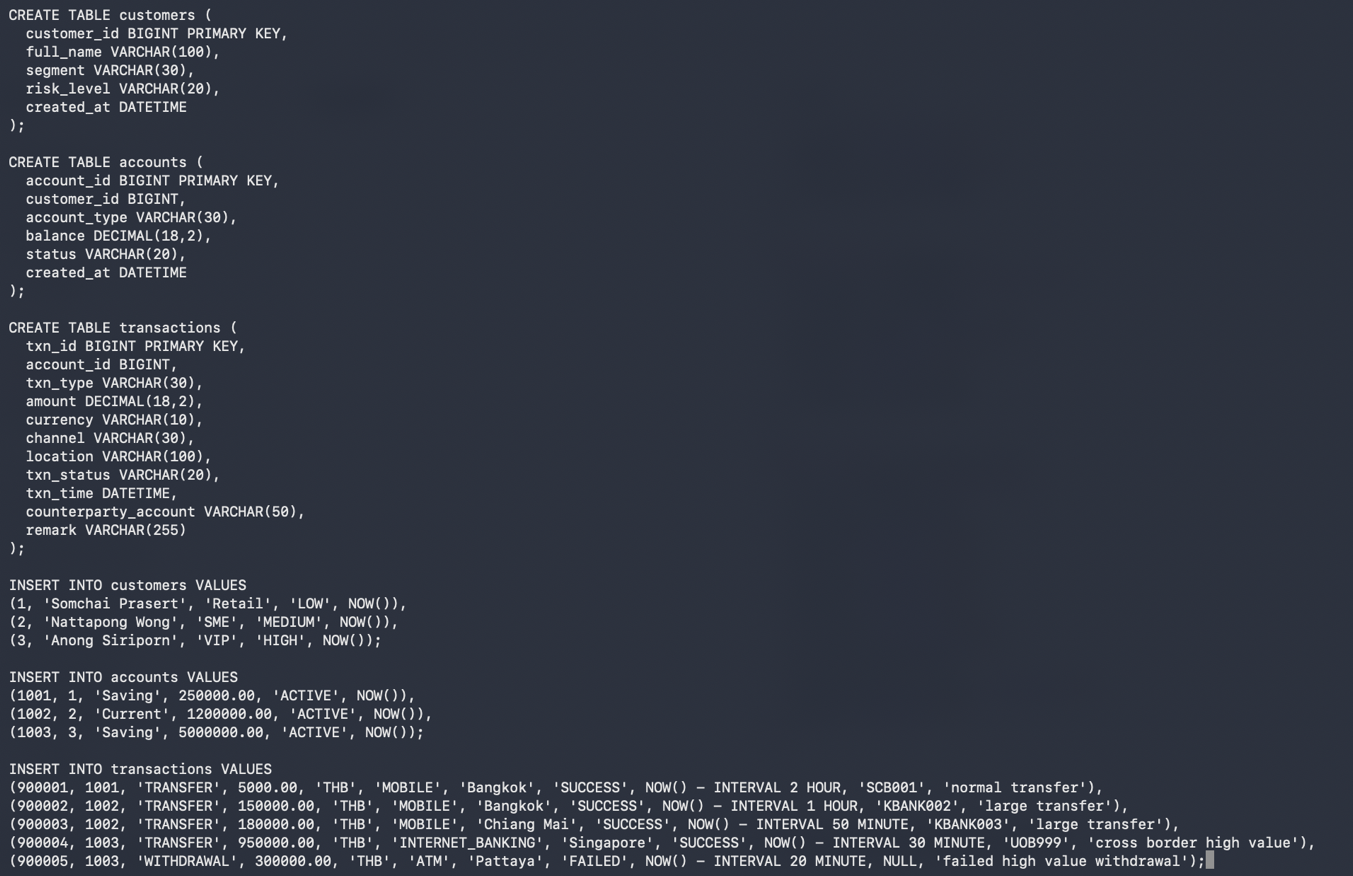

- Prepare the banking_demo dataset.

Step 2 — Install the OceanBase MCP Server

Clone the MCP server repository and install dependencies:

    git clone https://github.com/oceanbase-devhub/mcp-server-oceanbase
    cd mcp-server-oceanbase
    npm install

Step 3 — Configure the Connection

Create a .env file with your OceanBase connection details:

    OB_HOST=your-oceanbase-host
    OB_PORT=2881
    OB_USER=root@your_tenant
    OB_PASSWORD=your_password
    OB_DATABASE=your_schema

Step 4 — Register the MCP Server with Claude

In Claude Desktop, open Settings > Developer > MCP Servers and add the following configuration:

{

"mcpServers": {

"oceanbase": {

"command": "node",

"args": ["/path/to/mcp-server-oceanbase/index.js"],

"env": {

"OB_HOST": "your-oceanbase-host",

"OB_PORT": "2881",

"OB_USER": "root@your_tenant",

"OB_PASSWORD": "your_password",

"OB_DATABASE": "your_schema"

}

}

}

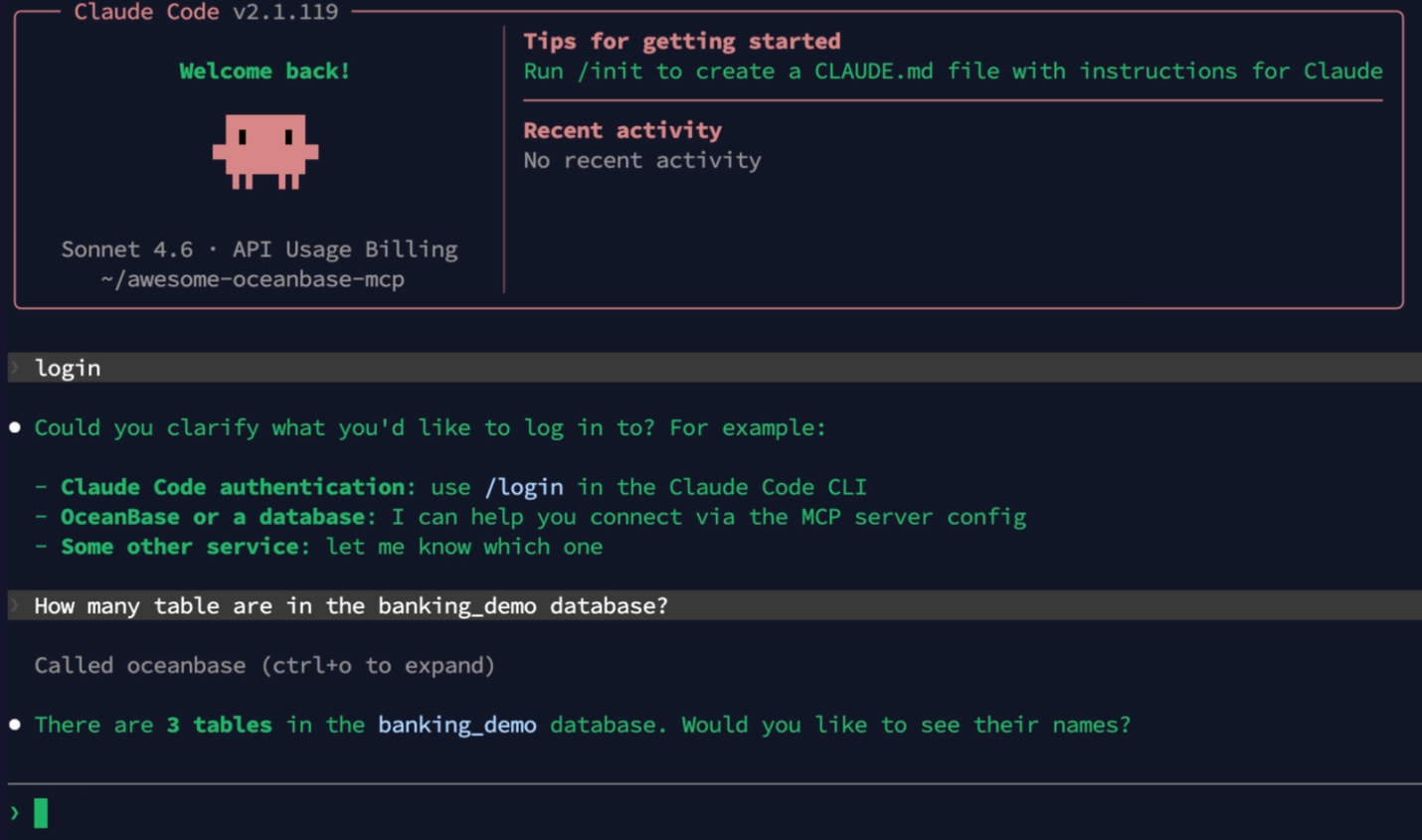

}Step 5 — Start Talking to Your Database

Restart Claude Desktop. You will see a hammer icon indicating active MCP tools. Now you can ask Claude questions in plain English.
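If the tools do not appear after the restart, a malformed or incomplete server entry is one possible cause. The following is a minimal sketch, not part of the OceanBase MCP server, that checks a config shaped like the one in Step 4. The function name `check_server_entry` and the list of required keys are assumptions based on the variables shown above.

```python
import json

# Keys the Step 4 example passes to the server; treating all as required is an assumption.
REQUIRED_ENV = ("OB_HOST", "OB_PORT", "OB_USER", "OB_PASSWORD", "OB_DATABASE")


def check_server_entry(config_text, server_name="oceanbase"):
    """Return a list of problems found in the config (empty list means none found)."""
    try:
        config = json.loads(config_text)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg} (line {exc.lineno})"]
    if not isinstance(config, dict):
        return ["top level of the config is not a JSON object"]

    entry = config.get("mcpServers", {}).get(server_name)
    if entry is None:
        return [f"no mcpServers entry named {server_name!r}"]

    problems = []
    if not entry.get("command"):
        problems.append("missing 'command'")
    env = entry.get("env", {})
    for key in REQUIRED_ENV:
        if not env.get(key):
            problems.append(f"missing env value: {key}")
    port = env.get("OB_PORT")
    if port and not str(port).isdigit():
        problems.append("OB_PORT is not numeric")
    return problems


sample = """
{
  "mcpServers": {
    "oceanbase": {
      "command": "node",
      "args": ["/path/to/mcp-server-oceanbase/index.js"],
      "env": {
        "OB_HOST": "your-oceanbase-host",
        "OB_PORT": "2881",
        "OB_USER": "root@your_tenant",
        "OB_PASSWORD": "your_password",
        "OB_DATABASE": "your_schema"
      }
    }
  }
}
"""
print(check_server_entry(sample))
```

An empty list only means the structure looks plausible. It does not prove that the host is reachable or that the credentials are valid.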

A Real-World Demo: Banking Intelligence Dashboard

To validate the integration, we built a banking demo on top of OceanBase with realistic financial data: accounts, transactions, customers, and credit scores across multiple tenants.

The goal was simple: could a non-technical business analyst ask natural language questions and get accurate, data-grounded answers without writing a single line of SQL?

The Questions We Asked Claude

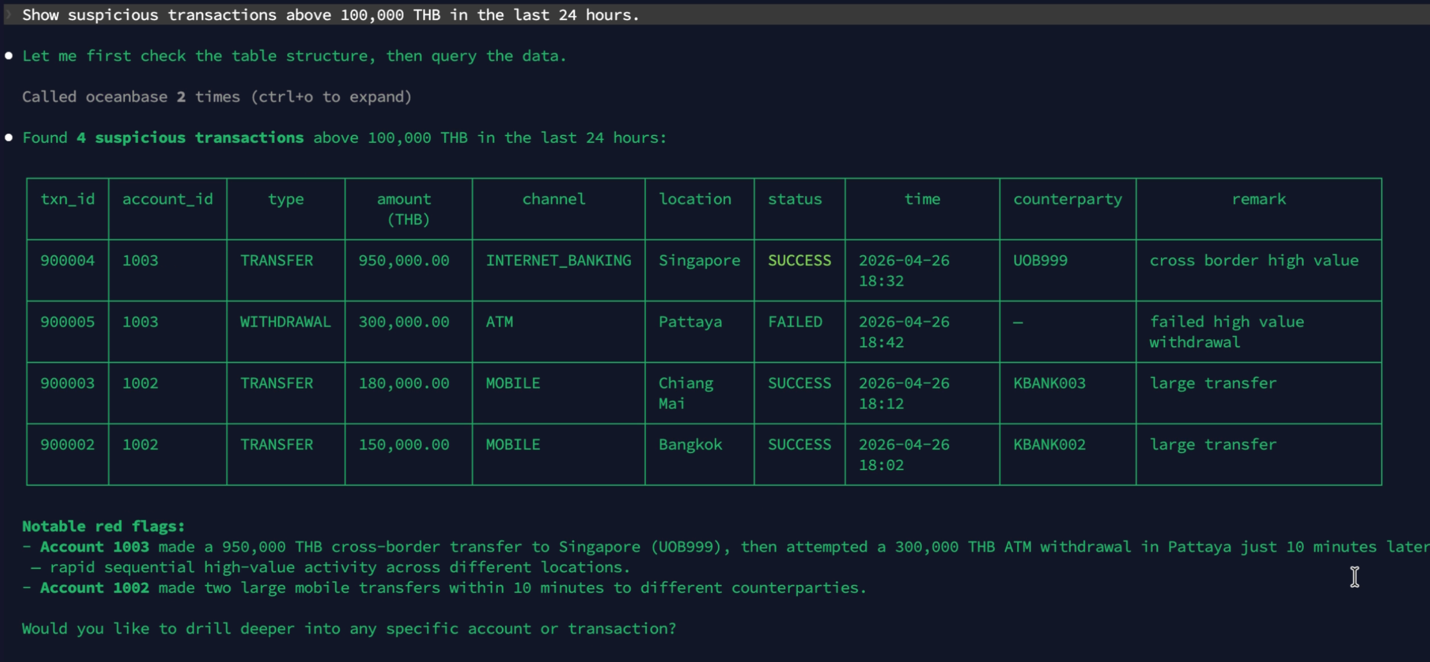

- "Show suspicious transactions above 100,000 THB in the last 24 hour”

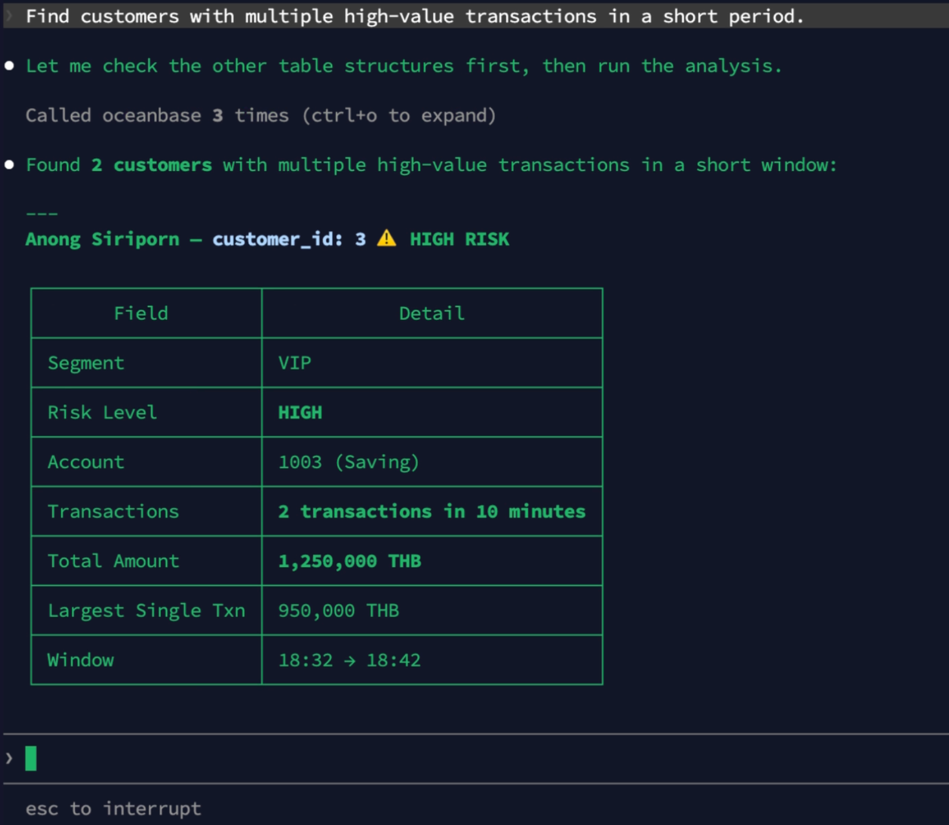

- "Find customers with multiple high-value transactions in a short period”

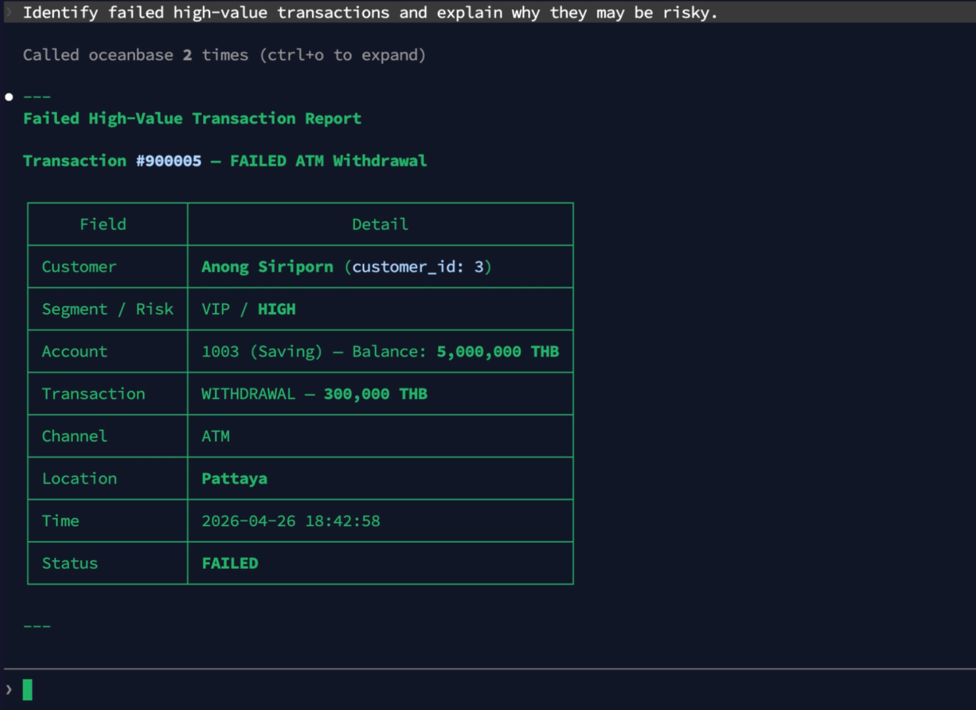

- “Identify failed high-value transactions and explain why they may be risky.”
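To give a feel for the kind of SQL the first question can translate into, here is a self-contained sketch. It is not the query Claude produced in the demo. The `transactions` table, its columns, and the rows are invented for illustration, and Python's built-in sqlite3 stands in for OceanBase so the example runs anywhere. Against OceanBase in MySQL mode, the cutoff would more likely be written as `NOW() - INTERVAL 24 HOUR`, and the placeholder style depends on the driver.

```python
import sqlite3
from datetime import datetime, timedelta

# Fixed "current time" so the example is reproducible.
now = datetime(2025, 1, 2, 12, 0, 0)
cutoff = now - timedelta(hours=24)

conn = sqlite3.connect(":memory:")
conn.execute(
    """CREATE TABLE transactions (
           txn_id INTEGER PRIMARY KEY,
           customer_id INTEGER,
           amount REAL,
           currency TEXT,
           created_at TEXT)"""
)
# Invented rows: two large recent transfers, one small, one large but old.
conn.executemany(
    "INSERT INTO transactions VALUES (?, ?, ?, ?, ?)",
    [
        (1, 10, 250000.0, "THB", "2025-01-02 09:30:00"),
        (2, 11, 5000.0, "THB", "2025-01-02 10:00:00"),
        (3, 12, 900000.0, "THB", "2024-12-30 08:00:00"),
        (4, 10, 120000.0, "THB", "2025-01-01 18:45:00"),
    ],
)

QUERY = """
SELECT txn_id, customer_id, amount
FROM transactions
WHERE currency = 'THB'
  AND amount > ?
  AND created_at >= ?
ORDER BY amount DESC
"""
matches = conn.execute(
    QUERY, (100000, cutoff.strftime("%Y-%m-%d %H:%M:%S"))
).fetchall()
print(matches)
```

What counts as "suspicious" is a business rule. In this sketch it is reduced to the amount and time filters stated in the question.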

What We Observed

The results were striking. Claude consistently:

- Correctly identified the relevant tables without any hints, purely from schema inspection.

- Generated syntactically valid SQL on the first attempt in the majority of cases.

- Handled multi-table JOINs and subqueries accurately.

- Explained its reasoning, describing which tables it used and why, which makes the results auditable.

Key observation:

In this demo, Claude never fabricated a table or column name that didn't exist in the schema. Dynamic schema discovery via MCP eliminated hallucination-driven SQL errors, which are among the most common failure modes in LLM-to-database integrations.

Why This Matters: The End of Glue Code

Traditional LLM-to-database integrations require substantial engineering investment:

| Traditional Approach | Claude + OceanBase MCP |
| --- | --- |
| Hardcoded schema in system prompt | Dynamic schema discovery at runtime |
| Breaks on every schema migration | Self-healing: reads the new schema automatically |
| Requires per-database prompt tuning | Schema-agnostic, works with any OceanBase DB |
| SQL validation is manual or post-hoc | Executes against real DB, errors caught immediately |
| 500+ lines of glue code per integration | MCP server: ~200 lines, works universally |

What's Next: Expanding the Integration

The current integration demonstrates the core MCP pattern, but there is significant room to expand:

- Write operations — extending the MCP server to support INSERT and UPDATE with confirmation flows.

- Vector search — integrating OceanBase seekdb's AI_EMBED and hybrid search capabilities, letting Claude query semantic meaning alongside structured filters.

- Multi-agent workflows — chaining Claude with specialized agents for report generation, anomaly detection, and automated remediation, all grounded in live OceanBase data.

- Observability — adding MCP tools that expose OceanBase's execution plans and performance metrics, letting Claude reason about query efficiency.
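As one way to picture the confirmation flow mentioned for write operations, the sketch below classifies a statement by its leading keyword: reads run directly, INSERT and UPDATE wait for explicit confirmation, and everything else is rejected. The function names and the three outcome labels are hypothetical, and the keyword check is deliberately crude. It is not a security boundary, and a read-only database account remains the stronger control.

```python
import re

READ_KEYWORDS = {"SELECT", "SHOW", "DESCRIBE", "DESC", "EXPLAIN"}
WRITE_KEYWORDS = {"INSERT", "UPDATE"}  # the two writes named in the roadmap item


def first_keyword(sql):
    """Return the first SQL keyword, ignoring leading comments and whitespace."""
    without_block = re.sub(r"/\*.*?\*/", " ", sql, flags=re.S)
    without_line = re.sub(r"--[^\n]*", " ", without_block)
    match = re.match(r"\s*([A-Za-z]+)", without_line)
    return match.group(1).upper() if match else ""


def gate(sql, confirmed=False):
    """Return 'run', 'needs_confirmation', or 'reject' for one statement."""
    body = sql.strip().rstrip(";")
    if ";" in body:
        # Crude multi-statement check; it also rejects semicolons inside string literals.
        return "reject"
    keyword = first_keyword(body)
    if keyword in READ_KEYWORDS:
        return "run"
    if keyword in WRITE_KEYWORDS:
        return "run" if confirmed else "needs_confirmation"
    return "reject"


print(gate("SELECT * FROM accounts"))
print(gate("UPDATE accounts SET status = 'frozen' WHERE account_id = 1"))
print(gate("DROP TABLE accounts"))
```

In a real flow, the 'needs_confirmation' outcome would be surfaced to the user, and the statement would be re-submitted with confirmation only after they approve it.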

Conclusion: A New Paradigm for AI-Native Data Applications

The integration of Claude with OceanBase via MCP represents more than a useful technical shortcut; it is a glimpse of how AI applications will be built going forward.

The era of hardcoded schema prompts and fragile glue code is ending. With MCP, the AI model becomes a genuine database citizen: it discovers the data model, reasons over live data, and generates correct queries dynamically, at runtime, with no manual intervention.

OceanBase provides the production-grade foundation this pattern needs: distributed scalability, ACID guarantees, MySQL and Oracle compatibility, and native HTAP, all behind a single, clean API that Claude's tool-use capabilities can fully leverage.

The OceanBase MCP server is open source. Visit github.com/oceanbase-devhub/mcp-server-oceanbase to get started.
