Beyond Memorization: How seekdb M0 Teaches Agents to Learn

- seekdb M0 now splits accumulated agent knowledge into Experience (strategic: what to do) and Skill (operational: how to do it), linked via skill_refs and delivered by progressive loading: the short strategy goes into context first, and the full procedure is expanded only on demand.

- On AppWorld with the same distillation source data, GPT-4o + M0 reached a 39% pass rate (vs 24% baseline, +62% relative), with −35% steps and −32% tokens, against 22% for Hermes's SKILL.md approach on identical traces.

- New economics for agent products: strong model teaches once, weak models work forever. Use GPT-5.4 or Claude Sonnet 4.6 to build the knowledge base; subsequent runs on GPT-4o perform better at a fraction of the cost.

Agent capabilities have come a long way in the past year. But once agents hit real production, one problem keeps showing up: an agent completes a task once, and the next time it sees something similar, it starts from scratch. Execution paths don't visibly converge. Pitfalls it hit yesterday aren't reliably avoided today.

The issue usually isn't that the agent is missing a Skill. It's that the system lacks a mechanism for turning successful trajectories into reusable capability. Last month, seekdb M0 solved the cross-session memory problem. This time we went further and split experience into Experience + Skill — not to make agents remember more history, but to make them actually learn from the work they've done. The last version solved "don't forget." This one solves "actually learn."

If the design works, its value shouldn't show up in "how much did we remember" — it should show up in whether real tasks get done better, faster, and cheaper. So we tested it on something closer to real work: AppWorld.

The headline, up front. On AppWorld (a benchmark widely recognized as hard for agents), we ran a strict controlled experiment:

- Same distillation source data, fed separately to M0 and Hermes's native approach

- GPT-4o pass rate climbed from 24% to 39% (+62% relative)

- Average steps dropped from 9.5 to 6.2 (-35%), tokens from 2.56M to 1.74M (-32%)

Below is why we rethought what "experience" means.

From LoCoMo to AppWorld: Chatty Agents and Working Agents Are Different Beasts

Early M0 versions were benchmarked on LoCoMo, a conversational memory benchmark that grades whether an agent recalls what a user told it earlier. That works for a "companion" agent, but doesn't probe working agents like OpenClaw or Hermes.

Working agents don't chat with you — they do things for you. Tool calls, multi-step reasoning, structured API responses. An agent that "remembers you like TypeScript" and one that "builds you a Taylor Swift playlist on Spotify" are evaluated on completely different axes.

AppWorld is built for the second kind: 9 everyday apps (music, notes, shopping, social), 457 APIs, 750 natural-language tasks.

Take one task, "build me a Taylor Swift playlist." At minimum the agent has to:

- Log into the Spotify-like music account

- Search for "Taylor Swift"

- Filter out songs whose titles contain "Taylor Swift" but are by other artists

- Paginate the results

- Create an empty playlist

- Add songs one by one

Every step has pitfalls — field disambiguation, pagination, skipping member-only tracks. None are solved by a Skill doc; they're learned by hitting them.

AppWorld's evaluation is state-based unit tests: not just "did the final answer look right," but "did you corrupt the user's existing playlists along the way?" It allows multiple valid paths, so memorizing one API sequence doesn't cut it. GPT-4o runs bare below 10%. With ReAct prompting, it reaches 24% — the 2024 SOTA. Most teams don't try to publish numbers on AppWorld at all.

What "Experience" Actually Is — and Why We Had to Split It

In earlier M0 versions, "experience" was a single concept. On real workflows, we realized the word smuggled in two fundamentally different things:

Experience A: "Spotify's list endpoints (show_song_library / show_album_library / show_playlist_library) are paginated. Default page_limit is only 5 — you must set it to 20 explicitly. Loop the call until an empty list comes back." (In M0 this is stored as a structured Skill with 4 steps and 2 pitfalls.)

Experience B: "To find a user's most-played R&B songs, you can't just query the song library — you also need to collect song_ids from the album and playlist libraries, dedupe them, and call show_song on each to get genre and play_count. Querying only show_song_library misses a lot of songs."

Same domain (Spotify song queries), completely different nature.

Experience A is operational knowledge — the exact API call, its gotchas, a structured flow (init params → call → check → loop), pitfalls. Reusable across users, worth sharing.

Experience B is strategic knowledge — how to approach the problem. No parameters, no response fields. Just the direction: query three libraries, dedupe, fetch details. Without it, the agent silently misses songs and returns incomplete results.
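The two examples can be put side by side in code. This is a minimal sketch, not AppWorld's real client: the endpoint is an in-memory stand-in, and the `song_id` / `song_ids` field names are assumptions for illustration. `fetch_all` is the operational part (Experience A), and the union over three libraries is the strategic part (Experience B).

```python
from typing import Callable

Page = list[dict]


def fetch_all(list_endpoint: Callable[[int, int], Page], page_limit: int = 20) -> Page:
    """Experience A as code: set page_limit explicitly and loop until an empty page."""
    items: Page = []
    page_index = 0
    while True:
        page = list_endpoint(page_index, page_limit)
        if not page:  # an empty list means there are no more pages
            return items
        items.extend(page)
        page_index += 1


def make_fake_endpoint(rows: Page) -> Callable[[int, int], Page]:
    """In-memory stand-in for a paginated list endpoint (not the real AppWorld API)."""
    def endpoint(page_index: int, page_limit: int) -> Page:
        start = page_index * page_limit
        return rows[start:start + page_limit]
    return endpoint


# Hypothetical data: 25 songs in the song library, plus albums and playlists
# that reference some songs the song library does not contain.
song_library = make_fake_endpoint([{"song_id": i} for i in range(1, 26)])
album_library = make_fake_endpoint([{"song_ids": [20, 30, 31]}])
playlist_library = make_fake_endpoint([{"song_ids": [1, 31, 40]}])

# Experience B as code: collect song_ids from all three libraries and dedupe.
song_ids = {row["song_id"] for row in fetch_all(song_library)}
for library in (album_library, playlist_library):
    for row in fetch_all(library):
        song_ids.update(row["song_ids"])

print(len(song_ids))
```

The per-song detail lookup (genre, play_count) is left out here; the point is that the loop is reusable by any task, while the three-library union is specific to this kind of query.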

Cramming both into one pool causes two problems:

Retrieval signals interfere. Operational knowledge tends to be long (a full login + pagination flow can be a dozen lines with code); strategic knowledge is short. In vector search, long text has richer semantics and squeezes out the short strategic entries you actually wanted.

Context management breaks down. Dumping the full operation manual into the agent's context made GPT-4o worse — the model started "reading from the manual" instead of reasoning about state. The experiment below confirms it.

Experience + Skill: The Split

- Experience: strategic knowledge. One or two sentences on "what to do, what to watch for." Lightweight, low injection cost.

- Skill: operational knowledge. Structured procedures (steps + pitfalls + prerequisites) with a clear execution path.

The two are linked via skill_refs. One Experience can reference multiple Skills:

Experience: "Spotify operations require login first. All list endpoints require pagination (max 20 per page). Search results must be filtered by artist." skill_refs: [#3 Spotify-Login, #7 Spotify-Pagination]

The agent reads the Experience for overall strategy (login, paginate, filter). When it needs a specific step, it follows skill_refs to expand the full Skill.

This "summarize first, expand on demand" design is the core. We call it progressive loading.
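As a rough illustration of the two-stage idea, here is a sketch in Python. The class and function names (`Skill`, `Experience`, `initial_context`, `expand_skill`) and the Skill contents are assumptions made for this example; they are not M0's actual schema or API.

```python
from dataclasses import dataclass, field


@dataclass
class Skill:
    """Operational knowledge: a structured procedure."""
    skill_id: int
    title: str
    steps: list[str]
    pitfalls: list[str] = field(default_factory=list)
    prerequisites: list[str] = field(default_factory=list)


@dataclass
class Experience:
    """Strategic knowledge: a sentence or two plus links to Skills."""
    text: str
    skill_refs: list[int] = field(default_factory=list)


def initial_context(exp: Experience) -> str:
    """Stage 1: inject only the short strategy and the IDs it points to."""
    refs = ", ".join(f"#{i}" for i in exp.skill_refs)
    return f"{exp.text} skill_refs: [{refs}]"


def expand_skill(skill_id: int, store: dict[int, Skill]) -> str:
    """Stage 2: render one full Skill, only when the agent asks for it."""
    s = store[skill_id]
    lines = [f"Skill #{s.skill_id}: {s.title}"]
    lines += [f"  step {n}. {step}" for n, step in enumerate(s.steps, 1)]
    lines += [f"  pitfall: {p}" for p in s.pitfalls]
    return "\n".join(lines)


# Illustrative content only; the real Skills are longer.
store = {
    3: Skill(3, "Spotify-Login", ["Log in to the music account"]),
    7: Skill(7, "Spotify-Pagination",
             ["Set page_limit to 20", "Call the list endpoint",
              "Stop when an empty list comes back"],
             pitfalls=["Default page_limit is only 5"]),
}
exp = Experience(
    "Spotify operations require login first. All list endpoints require pagination.",
    skill_refs=[3, 7],
)

print(initial_context(exp))    # the short strategy goes in first
print(expand_skill(7, store))  # the full procedure only on demand
```

The context cost of stage 1 stays constant no matter how long the referenced Skills are, which is the property the design relies on.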

Four-Way Hybrid Search

After the split, Experience and Skill live in separate OceanBase tables. Each table has title and description fields, both indexed for vector similarity and full-text search. Retrieval runs four paths in parallel:

title_vector + description_vector + title_fulltext + description_fulltext → RRF fusion (Reciprocal Rank Fusion, k=60) → final ranking

Title matching finds entries by name; description matching finds by content. Vector and full-text complement each other — vectors handle semantic equivalence ("create playlist" ≈ "build a song collection"), full-text handles exact matches (API names, error codes).
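For readers unfamiliar with RRF, the fusion step can be sketched in a few lines. This uses the standard formula, score(d) = Σ 1 / (k + rank), with k=60 as stated above; the four ranked lists and the entry IDs are made up for illustration, and how M0 produces each list is not shown.

```python
from collections import defaultdict


def rrf_fuse(rankings: list[list[str]], k: int = 60) -> list[tuple[str, float]]:
    """Reciprocal Rank Fusion: sum 1 / (k + rank) over every list an ID appears in."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID so the order is deterministic.
    return sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))


# Hypothetical ranked IDs returned by the four retrieval paths.
title_vector = ["exp_pagination", "exp_login", "exp_filter"]
description_vector = ["exp_login", "exp_pagination"]
title_fulltext = ["exp_pagination"]
description_fulltext = ["exp_filter", "exp_pagination"]

fused = rrf_fuse([title_vector, description_vector,
                  title_fulltext, description_fulltext])
for doc_id, score in fused:
    print(f"{doc_id}: {score:.4f}")
```

Because RRF works on ranks rather than raw scores, vector distances and full-text relevance scores never need to be put on a common scale, and an entry that appears in all four lists outranks one that tops a single list.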

Distillation: Skills First, Experience Second

Extracting knowledge from a trace follows a specific order:

- Extract Skills first — identify operational flows and structure them into steps/pitfalls/prerequisites.

- Store Skills — dedupe (vector similarity > 0.75 auto-merges), review, write.

- Build Skill context — pass the stored Skill list to the next step.

- Extract Experiences next — the LLM can see existing Skills and naturally references their IDs.

- Store Experiences: same dedup/review/write flow; skill_refs records the references.

Skills-first ordering means the Experience-to-Skill link isn't human-curated — it forms naturally during distillation.
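The ordering can be sketched as follows. This is a simplified illustration, not M0's implementation: the LLM extraction and review steps are replaced by pre-extracted candidate dicts, the "merge" just concatenates source lists, and the names `store_with_dedup`, `distill`, and `mentions` are hypothetical. Only the 0.75 threshold and the Skills-before-Experiences order come from the text above.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def store_with_dedup(pool: list[dict], item: dict, threshold: float = 0.75) -> dict:
    """Merge into an existing entry above the similarity threshold, else append."""
    for existing in pool:
        if cosine(existing["vec"], item["vec"]) > threshold:
            existing["sources"] += item["sources"]  # stand-in for the real merge
            return existing
    item["id"] = len(pool) + 1
    pool.append(item)
    return item


def distill(skill_candidates: list[dict], experience_candidates: list[dict]):
    # Steps 1-2: store Skills first so they have stable IDs.
    skills: list[dict] = []
    for cand in skill_candidates:
        store_with_dedup(skills, cand)
    # Step 3: build the Skill context the Experience extractor gets to see.
    title_to_id = {s["title"]: s["id"] for s in skills}
    # Steps 4-5: Experiences resolve the Skills they mention into skill_refs.
    experiences: list[dict] = []
    for cand in experience_candidates:
        mentioned = cand.pop("mentions")
        cand["skill_refs"] = [title_to_id[t] for t in mentioned if t in title_to_id]
        store_with_dedup(experiences, cand)
    return skills, experiences


skills, experiences = distill(
    skill_candidates=[
        {"title": "Spotify-Login", "vec": [1.0, 0.0], "sources": ["trace_a"]},
        {"title": "Spotify-Pagination", "vec": [0.0, 1.0], "sources": ["trace_a"]},
        # Near-duplicate of the pagination Skill, found in another trace.
        {"title": "Spotify-Paging", "vec": [0.1, 0.9], "sources": ["trace_b"]},
    ],
    experience_candidates=[
        {"text": "Log in first; paginate every list endpoint.", "vec": [0.7, 0.7],
         "sources": ["trace_a"], "mentions": ["Spotify-Login", "Spotify-Pagination"]},
    ],
)
print([s["title"] for s in skills])
print(experiences[0]["skill_refs"])
```

Running Experiences second is what lets `skill_refs` point at IDs that already exist; reversing the order would leave nothing to reference.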

The Fair Comparison Experiment

Why stress "fair"? If different systems use different strong models to accumulate different knowledge and then each claims "my approach is better," the comparison isn't fair: the quality of the strong-model traces may be doing all the work.

Design:

- Run Hermes + GPT-5.4 once on AppWorld dev (54 tasks) and record every trace — 34 successes, 20 failures.

- Feed the same traces to both distillers:

  - M0 distill_all() → 85 Experiences (with skill_refs)

  - Hermes extract_skill() → 44 SKILL.md files

- Have GPT-4o run AppWorld with each side's distilled knowledge, 15-step limit.

Single variable: distillation algorithm + storage/retrieval. Same model, same tasks, same step limit.

Core comparison

| Framework | Mode | Passed | Pass rate | Gain | Avg steps | Step Δ | Tokens | Token Δ |
|---|---|---|---|---|---|---|---|---|
| — | GPT-4o baseline | 13/54 | 24% | — | 9.5 | — | 2.56M | — |
| M0 | +Experience→Skill | 21/54 | 39% | +8 (+15pp) | 6.2 | -35% | 1.74M | -32% |
| Hermes | +SKILL.md | 12/54 | 22% | -1 (-2pp) | 10.4 | +11% | — | — |
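The deltas in the table can be reproduced from the raw counts. The sketch below only re-derives the reported figures. One inference is marked as such: Hermes's +11% step change matches its own tool-calling baseline of 9.4 steps (from the baseline alignment check), not the 9.5 in the baseline row.

```python
def relative_change(before: float, after: float) -> float:
    """Relative change in percent."""
    return (after - before) / before * 100


baseline_passed, m0_passed, total = 13, 21, 54

pass_rate_base = baseline_passed / total * 100
pass_rate_m0 = m0_passed / total * 100
pp_gain = pass_rate_m0 - pass_rate_base                 # percentage points
rel_gain = relative_change(baseline_passed, m0_passed)  # relative gain

step_delta = relative_change(9.5, 6.2)
token_delta = relative_change(2.56, 1.74)

# Inference, not stated in the source: +11% fits the 9.4-step Hermes baseline.
hermes_step_delta = relative_change(9.4, 10.4)

print(f"pass rate: {pass_rate_base:.0f}% -> {pass_rate_m0:.0f}% "
      f"(+{pp_gain:.0f}pp, {rel_gain:+.0f}% relative)")
print(f"steps: {step_delta:+.0f}%, tokens: {token_delta:+.0f}%")
print(f"Hermes steps: {hermes_step_delta:+.0f}%")
```

Keeping percentage points (+15pp) and relative change (+62%) as separate quantities avoids the most common misreading of the headline number.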

Strong-model baseline (GPT-5.4, the source of the distillation data)

| Framework | Pass rate | Avg steps | Distillation output |
|---|---|---|---|
| Hermes (no-skill, 15 steps) | 34/54 (63%) | 12.4 | 54 traces |
| → M0 distillation | — | — | 85 experiences |
| → Hermes distillation | — | — | 44 SKILL.md |

Baseline alignment check

We verified both frameworks, with no experience at all, produced the same GPT-4o pass rate — ruling out framework-level differences:

| Framework | Pass rate | Steps |
|---|---|---|
| M0 ReAct | 13/54 (24%) | 9.5 |
| Hermes tool-calling | 13/54 (24%) | 9.4 |

Task-level analysis

Tasks M0 Experience→Skill rescued (baseline FAIL → with-experience PASS):

| Task ID | Category |
|---|---|
| 23cf851_1, 23cf851_3 | Spotify |
| 37a8675_3, 68ee2c9_1 | Cross-app |
| 4ec8de5_1 / 2 / 3 | Spotify advanced query |
| 50e1ac9_1 / 2 | Spotify aggregation |
| fac291d_1 | Venmo |

10 rescued, 2 lost (396c5a2_1 / 3) → net +8.

Hermes SKILL.md: rescued 6 (383cbac_1 / 3, 50e1ac9_1 / 2, 6171bbc_3, d4e9306_1), lost 7 (396c5a2_3, 4ec8de5_1, b119b1f_1 / 3, d4e9306_3, fac291d_1 / 3) → net -1.
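The task lists above are small enough to check with set arithmetic. The sketch assumes the shorthand "4ec8de5_1 / 2 / 3" expands to three task IDs with the same prefix, which is consistent with the stated count of 10.

```python
m0_rescued = {"23cf851_1", "23cf851_3", "37a8675_3", "68ee2c9_1",
              "4ec8de5_1", "4ec8de5_2", "4ec8de5_3",
              "50e1ac9_1", "50e1ac9_2", "fac291d_1"}
m0_lost = {"396c5a2_1", "396c5a2_3"}

hermes_rescued = {"383cbac_1", "383cbac_3", "50e1ac9_1", "50e1ac9_2",
                  "6171bbc_3", "d4e9306_1"}
hermes_lost = {"396c5a2_3", "4ec8de5_1", "b119b1f_1", "b119b1f_3",
               "d4e9306_3", "fac291d_1", "fac291d_3"}

m0_net = len(m0_rescued) - len(m0_lost)
hermes_net = len(hermes_rescued) - len(hermes_lost)

both_rescued = sorted(m0_rescued & hermes_rescued)   # helped under either approach
both_lost = sorted(m0_lost & hermes_lost)            # hurt under either approach
rescued_vs_lost = sorted(m0_rescued & hermes_lost)   # opposite outcomes

print(m0_net, hermes_net)
print(both_rescued, both_lost, rescued_vs_lost)
```

Two tasks (4ec8de5_1, fac291d_1) appear as rescued for M0 and lost for Hermes. That is only possible if each framework is compared against its own no-experience run, so the two 13/54 baselines likely passed slightly different task sets.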

Why M0 Worked This Time

Side by side:

| Dimension | M0 Experience→Skill | Hermes SKILL.md |
|---|---|---|
| Storage | OceanBase (vector + full-text indexes) | Local filesystem |
| Retrieval | 4-way hybrid (title_vec + desc_vec + title_ft + desc_ft → RRF) | Filename / tag match |
| Knowledge structure | Experience (strategy) + Skill (ops), linked via skill_refs | Single SKILL.md (full manual) |
| Loading | Progressive: inject Experience summary first, expand Skills on demand | Inject matching SKILL.md in full into system prompt |
| Dedup | Vector similarity + LLM merge | Same filename overwrites |
| Pass rate gain | +15pp | -2pp |
| Step change | -35% | +11% |
| Token change | -32% | — |

The lesson: dumping the full manual hurts. Hermes injects the matched SKILL.md straight into the system prompt. With GPT-4o this was a net negative — steps up 11%, pass rate below baseline. The 44 files flooded the model's attention, shifted it from state-based reasoning to "following the recipe," and when a manual step diverged slightly from actual API responses (e.g. field-name casing), left it more confused than if it had nothing.

M0's progressive loading avoids this. The agent first sees one line of Experience — "Spotify needs login, list endpoints paginate." That's enough to steer strategy. Only when it reaches the pagination step does it pull the full code template via skill_refs.

Context isn't valuable because it's abundant. It's valuable because it's relevant — delivered at the right time.

The Efficiency Dividend: Strong Model Teaches Once, Weak Models Work Forever

| Metric | Baseline | +Experience→Skill | Δ |
|---|---|---|---|
| Avg steps | 9.5 | 6.2 | -35% |
| Total tokens | 2.56M | 1.74M | -32% |

Even when tasks fail, an experienced agent fails with fewer steps and tokens — experience helps it skip unnecessary exploration.

Behind those numbers is a commercially useful pattern: strong model teaches, weak model delivers. Running the 54-task set:

- GPT-5.4 single run: ~$57.60 (at $22.5 / 1M tokens)

- GPT-4o baseline (no experience): 2.56M tokens, ~$25.60 (at $10 / 1M tokens)

- GPT-4o + M0 experience: 1.74M tokens, ~$17.40
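A back-of-the-envelope calculation from these three figures shows when the teaching run pays for itself. It is a sketch under loud assumptions: it counts token cost only, ignores whatever distillation and the M0 service cost, and assumes repeat traffic looks like the same 54-task set.

```python
import math

PRICE_GPT4O = 10.0   # USD per 1M tokens, as quoted above
TEACH_COST = 57.60   # one GPT-5.4 pass over the 54 tasks, as quoted above

baseline_cost = 2.56 * PRICE_GPT4O   # GPT-4o without experience
m0_cost = 1.74 * PRICE_GPT4O         # GPT-4o with M0 experience
saving_per_run = baseline_cost - m0_cost

# Cost per passed task: 13 passes at baseline vs 21 with experience.
cost_per_pass_base = baseline_cost / 13
cost_per_pass_m0 = m0_cost / 21

# Runs of the 54-task set before token savings cover the teaching run.
break_even_runs = math.ceil(TEACH_COST / saving_per_run)

print(f"saving per run: ${saving_per_run:.2f}")
print(f"cost per passed task: ${cost_per_pass_base:.2f} -> ${cost_per_pass_m0:.2f}")
print(f"break-even after {break_even_runs} runs")
```

On token savings alone the payback takes several repeats of the task set; the larger effect is cost per passed task, which falls by more than half because the pass rate rises while the bill shrinks.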

Use GPT-5.4 or Claude Sonnet 4.6 to get the task done once. M0 distills the trace into Experiences and Skills in the cloud. After that, the same kind of task runs fine on GPT-4o or cheaper — better pass rate, fewer steps, smaller bill.

A step-change for production. In a typical agent product, 70% of requests are repeat patterns — you don't need to burn the most expensive model every time. Teach once; the rest of the traffic rides the experience dividend. Hermes Agent users running heavy research tasks have asked whether they could "use the strong model only on the first run." This is the answer: yes, and it's the recommended usage.

Experience That Shares and Evolves

Someone will ask: isn't this basically Hermes's "self-evolution"? Conceptually yes — both let agents learn from practice. But the paths differ:

- Hermes has one layer — Skill — a full operation manual

- M0 has two — Experience + Skill — separating strategy from operations; retrieval moves from filename matching to four-way hybrid search; loading moves from full injection to on-demand expansion

Different algorithms, different data structures. Same benchmark: 39% vs 22%. AppWorld is hard to fudge — it doesn't measure "recall accuracy" as a proxy; it measures whether tasks complete.

M0's experience system isn't a single-user closed loop. Once an Experience accumulates enough positive feedback, it's promoted to the public pool. Every agent connected to M0 can retrieve it.

Which means: the pit you fell into is a pit nobody else has to fall into. If one agent figures out that "Spotify search results need artist-field filtering to exclude matching titles by other artists," every other user's agent benefits once that Experience is published. Each public Experience carries a contributor list; vector dedup auto-merges independent discoveries and stacks contributor records — knowledge aggregation as a side effect of distillation, not a submit-and-review flow.
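The promotion and contributor-stacking behavior might look something like the sketch below. Everything concrete here is hypothetical: the source only says "enough positive feedback," so `PROMOTION_THRESHOLD`, the class, and the function names are placeholders, and the similarity check that decides two discoveries are the same is assumed to have already happened.

```python
from dataclasses import dataclass, field

# Hypothetical value; the source does not state the actual threshold.
PROMOTION_THRESHOLD = 3


@dataclass
class PooledExperience:
    text: str
    contributors: list[str] = field(default_factory=list)
    positive_feedback: int = 0
    public: bool = False


def record_feedback(exp: PooledExperience, positive: bool) -> None:
    """Count positive feedback and promote once the threshold is reached."""
    if positive:
        exp.positive_feedback += 1
    if exp.positive_feedback >= PROMOTION_THRESHOLD:
        exp.public = True


def merge_discovery(exp: PooledExperience, contributor: str) -> None:
    """An independent rediscovery adds a contributor instead of a duplicate entry."""
    if contributor not in exp.contributors:
        exp.contributors.append(contributor)


pooled = PooledExperience(
    "Spotify search results need artist-field filtering.",
    contributors=["agent_a"],
)
merge_discovery(pooled, "agent_b")   # same finding from another agent
merge_discovery(pooled, "agent_a")   # already recorded, no change
for _ in range(PROMOTION_THRESHOLD):
    record_feedback(pooled, positive=True)

print(pooled.contributors, pooled.public)
```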

Closing Thoughts

Last month's M0 solved "memory doesn't get lost" — agents retain who the user is across sessions. This upgrade solves "experience becomes useful" — agents actually get smarter, faster, and cheaper at the work they do.

Two years ago teams didn't dare publish numbers on AppWorld; the rates were too ugly. M0's upgrade hits 39% — plenty of absolute headroom left. But +62% pass rate, -35% steps, -32% tokens against the GPT-4o baseline, same model and tasks, says something clear: the engineering value of a well-designed experience system is real and measurable.

A few things are still open:

Retrieval could be sharper. The four-way search indexes titles and descriptions but doesn't yet match Skill internals (steps, pitfalls) at fine granularity. A specific error code should ideally hit the exact pitfall entry.

AppWorld pass rate has headroom. 39% is +62% relative, but the absolute number isn't high enough. Part is distillation quality — Experience pulled from failed traces is noisier. Part is coverage — some task types aren't in the pool at all.

We'd welcome you to connect M0 and let your OpenClaw agent start accumulating its own Experiences and Skills. A pit you fall into once is a pit you don't have to fall into again.

Getting Started

For existing M0 users this upgrade is automatic — your agent builds its own Experiences and Skills during normal use, no configuration.

If you haven't connected M0 yet, tell your OpenClaw agent:

Read https://m0.seekdb.ai/SKILL.md and follow the instructions to install and configure m0.

The agent reads the doc, requests an Access Key, downloads the plugin, writes the config, and restarts the Gateway — end to end, no manual steps.

Links

- seekdb M0 cloud memory service: https://m0.seekdb.ai

- PowerMem open source: https://github.com/oceanbase/powermem

- AppWorld benchmark: https://appworld.dev

- seekdb D0 sandbox: https://d0.seekdb.ai

Keep Reading

View all posts

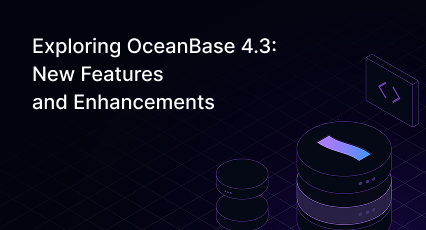

Exploring OceanBase 4.3: New Features and Enhancements

At the OceanBase DevCon 2024, we introduced the OceanBase 4.3.0 Beta, unveiling a brand new columnar engine. This release achieves near petabyte-scale, real-time analytics in seconds, and enhances the integration of TP and AP capabilities.

How seekdb M0 Gives OpenClaw Persistent Memory and Shared Experience

OpenClaw's memory degrades over time—an architectural limitation, not a configuration issue. seekdb M0 solves this with cloud-based memory that persists across sessions and shares learned experience across agents.

Why Your Vector Database Benchmark Is Wrong for AI Agents

Under streaming AI workloads, vector databases see high P99 jitter (1.1×–10.3×) under concurrency. seekdb v1.3.0’s fixed delta+snapshot HNSW avoids this, delivering 22× QPS and 19× P99 gains over prior version.